Show HN: Open-source Markdown publishing framework for AI agents and developers

Why We Can’t Evaluate the “Open‑Source Markdown Publishing Framework for AI Agents” Yet

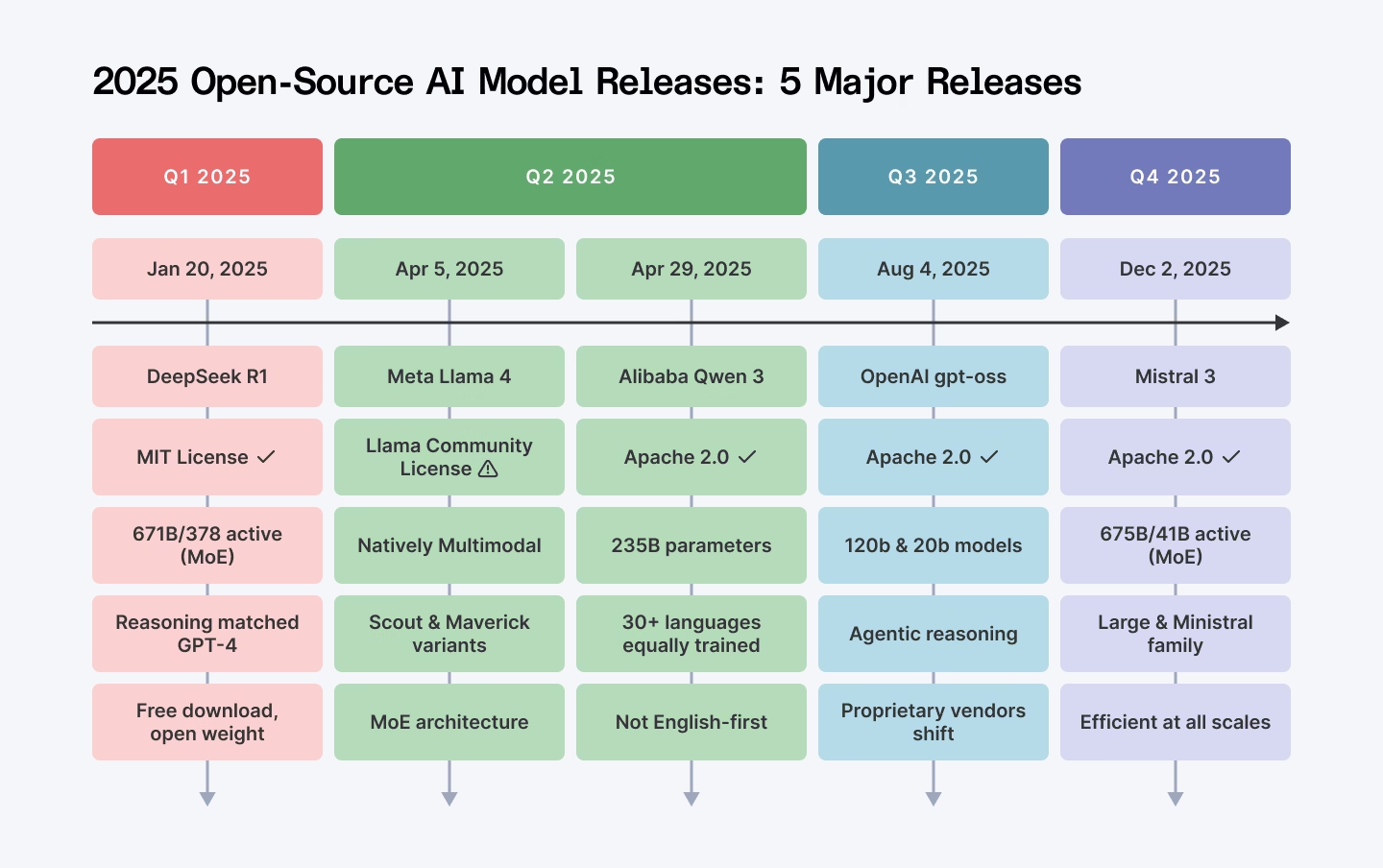

In late 2025, a wave of announcements around open‑source tooling for AI agents made headlines, from new LLM orchestration platforms to modular prompt libraries. Amid that buzz, a Show HN post surfaced claiming the existence of an “open‑source Markdown publishing framework” aimed at simplifying content creation for AI developers and agents. As a technology analyst focused on tools, platforms, and automation, I set out to dissect this claim, assess its strategic relevance, and outline what a solid evaluation would require.

Executive Summary

- No publicly documented codebase or release notes match the described framework as of December 2025.

- The only references available link back to unrelated community discussions about MLB The Show, app updates, or PC port speculation. None of them discuss Markdown publishing for AI agents.

- Without concrete artifacts (GitHub repo, documentation, or demo), any assessment of technical viability, integration potential, or ROI remains speculative.

- Business leaders should treat the claim as unverified and prioritize due diligence: request source code, evaluate community activity, and benchmark against established Markdown toolchains such as pandoc, mkdocs, or DocFX.

- In 2025, the market for AI‑centric content tooling is crowded; a genuinely useful open‑source framework would need to differentiate through seamless LLM integration, extensible plugin architecture, and robust security controls.

The Landscape of Markdown Publishing in AI Workflows

Markdown remains the lingua franca of technical documentation and knowledge bases. In 2025, the most common ways developers bridge Markdown with AI are:

- Static Site Generators (SSGs): mkdocs, Hugo, and Jekyll generate HTML from Markdown. MDX‑based tools such as Docusaurus go further and allow React components inside Markdown.

- AI‑Powered Content Generation: Platforms like OpenAI’s ChatGPT API or Anthropic Claude 3.5 can ingest Markdown to produce summaries, code snippets, or even entire docs.

- Automated Documentation Pipelines: Tools such as Docusaurus and Sphinx can be wired into CI/CD to rebuild docs whenever the source changes.

The proposed framework would need to slot into this ecosystem, offering either a new abstraction layer or tighter LLM integration. The absence of any public repository makes it impossible to judge whether the claim is a novel contribution or merely a rebranding of existing tools.

Key Technical Questions That Remain Unanswered

- Repository Visibility: Is there an open‑source codebase on GitHub, GitLab, or another platform? If so, what is the commit history, issue backlog, and contributor count?

- Core Architecture: Does it provide a plug‑in system for LLM backends (GPT‑4o, Claude 3.5, Gemini 1.5)? How does it manage token limits, context windows, and prompt engineering?

- Security & Compliance: In 2025, enterprises demand end‑to‑end encryption, audit logs, and GDPR/CCPA compliance. Does the framework expose any data leakage risks when interfacing with cloud LLMs?

- Performance Benchmarks: What latency does it achieve for typical Markdown‑to‑HTML or Markdown‑to‑code transformations? How does it scale under concurrent requests?

- Ecosystem Integration: Can it plug into popular CI/CD tools (GitHub Actions, GitLab CI, Azure DevOps) and content management systems (WordPress, Drupal, Confluence)?

Without answers to these questions, any business decision based on the claim would be a gamble.
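To make the token‑limit question concrete, here is a minimal sketch of one way a publishing pipeline could keep Markdown within a context‑window budget: split at headings, then greedily pack whole sections into chunks. Nothing here comes from the unverified framework. The names `estimate_tokens`, `split_sections`, and `pack_chunks` are hypothetical, and the four‑characters‑per‑token ratio is a rough assumption rather than any provider's real tokenizer.

```python
import re

# Assumption: roughly 4 characters per token. Real tokenizers differ by model,
# so a production pipeline would call the provider's own tokenizer instead.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Crude token estimate: ceiling of len(text) / CHARS_PER_TOKEN."""
    return -(-len(text) // CHARS_PER_TOKEN)


def split_sections(markdown: str) -> list[str]:
    """Split before each ATX heading line (one to six '#' plus a space).

    Limitation: a '# comment' line inside a fenced code block is also
    treated as a heading by this simple pattern.
    """
    parts = re.split(r"(?m)^(?=#{1,6} )", markdown)
    return [p for p in parts if p.strip()]


def pack_chunks(markdown: str, budget: int) -> list[str]:
    """Greedily pack whole sections into chunks of at most `budget` tokens.

    A single section larger than the budget is emitted as its own oversized
    chunk rather than being cut mid-sentence; callers must handle that case.
    """
    chunks: list[str] = []
    current = ""
    for section in split_sections(markdown):
        if current and estimate_tokens(current + section) > budget:
            chunks.append(current)
            current = ""
        current += section
    if current:
        chunks.append(current)
    return chunks
```

A reviewer could ask how the real framework handles the oversized‑section case, since that is where naive chunkers tend to either truncate content or exceed the model's limit.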

Strategic Implications for Enterprise Decision‑Makers

- Risk of Adoption Without Proof: Integrating an unverified tool into production workflows could expose teams to security vulnerabilities and unreliable performance.

- Competitive Landscape: The open‑source community has already produced mature Markdown pipelines. A new entrant must offer a clear value proposition, perhaps native support for multi‑modal content (text, code, images) or a zero‑config deployment model.

- Vendor Lock‑In Mitigation: Enterprises increasingly favor tools that allow them to switch LLM providers without rewriting pipelines. An open‑source framework that abstracts the provider layer could reduce vendor lock‑in risks.
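As an illustration of what "abstracting the provider layer" could look like, the sketch below defines a small backend interface and a registry, so pipeline code never imports a vendor SDK directly. This is an assumed design, not the framework's actual architecture: `LLMBackend`, `EchoBackend`, `register`, `get_backend`, and `summarize` are all hypothetical names, and `EchoBackend` is an offline stand‑in that makes no network calls.

```python
from typing import Callable, Protocol


class LLMBackend(Protocol):
    """Hypothetical interface: any object with this method qualifies."""

    def complete(self, prompt: str, max_tokens: int) -> str: ...


class EchoBackend:
    """Offline stand-in for tests. It returns the start of the prompt and,
    for simplicity, treats max_tokens as a character count."""

    def complete(self, prompt: str, max_tokens: int) -> str:
        return prompt[:max_tokens]


# Maps a configuration name to a factory that builds the backend on demand.
_REGISTRY: dict[str, Callable[[], LLMBackend]] = {}


def register(name: str, factory: Callable[[], LLMBackend]) -> None:
    _REGISTRY[name] = factory


def get_backend(name: str) -> LLMBackend:
    try:
        return _REGISTRY[name]()
    except KeyError:
        known = sorted(_REGISTRY)
        raise ValueError(f"unknown backend {name!r}; known: {known}") from None


def summarize(markdown: str, backend: LLMBackend, max_tokens: int = 64) -> str:
    """Pipeline code depends only on the interface, not on a vendor SDK."""
    return backend.complete("Summarize:\n" + markdown, max_tokens)


register("echo", EchoBackend)
```

Under this design, switching providers would mean registering a different factory and changing one configuration value, for example `summarize(text, get_backend("echo"))`. Whether the announced framework offers anything comparable is exactly what a code review would need to establish.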

Recommended Due Diligence Steps

- Source Code Verification: Request a link to the repository, review license terms, and assess community activity (issues opened/closed in the last 90 days).

- Technical Evaluation: Deploy a sandbox instance, run benchmark tests against standard Markdown workloads, and compare latency and throughput to existing solutions.

- Security Assessment: Conduct a penetration test focused on data flow between the framework and LLM APIs. Verify that sensitive content never leaves the enterprise network unless explicitly configured.

- Cost Analysis: Model the total cost of ownership, including compute costs for LLM calls, storage for generated assets, and maintenance overhead.

- Roadmap Alignment: Ensure the project’s development cadence aligns with your organization’s release cycles. A stagnant repo may signal a lack of future support.
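The cost analysis step can start as simple arithmetic. The sketch below models a monthly total from the three components named above: LLM calls, storage, and maintenance. All field names are hypothetical and every figure a user plugs in is their own estimate; no real provider prices are assumed here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostInputs:
    """All values are placeholders to be filled in from your own quotes."""
    docs_per_month: int
    input_tokens_per_doc: int
    output_tokens_per_doc: int
    usd_per_million_input_tokens: float
    usd_per_million_output_tokens: float
    storage_gb: float
    usd_per_gb_month: float
    maintenance_hours_per_month: float
    usd_per_engineer_hour: float


def monthly_cost(c: CostInputs) -> dict[str, float]:
    """Break the monthly cost into LLM, storage, and maintenance components."""
    per_doc_usd_millionths = (
        c.input_tokens_per_doc * c.usd_per_million_input_tokens
        + c.output_tokens_per_doc * c.usd_per_million_output_tokens
    )
    llm = c.docs_per_month * per_doc_usd_millionths / 1_000_000
    storage = c.storage_gb * c.usd_per_gb_month
    maintenance = c.maintenance_hours_per_month * c.usd_per_engineer_hour
    return {
        "llm": llm,
        "storage": storage,
        "maintenance": maintenance,
        "total": llm + storage + maintenance,
    }
```

In many plausible scenarios the maintenance line dominates the token bill, which is one reason an abandoned or thinly maintained project can cost more than its license suggests.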

Conclusion: Treat the Claim as Unverified Until Proven

The hype around an “open‑source Markdown publishing framework for AI agents” has not yet translated into visible artifacts or credible documentation. In 2025, enterprises must balance enthusiasm for new tooling with rigorous validation to avoid costly missteps. Until a public repo, clear architecture, and performance metrics are available, the prudent path is to continue leveraging established Markdown pipelines augmented by LLMs, while keeping an eye on emerging projects that meet the technical and business criteria outlined above.

Actionable Takeaways for Leaders

- Verify before adoption: Require a public codebase, active community, and documented benchmarks.

- Prioritize security: Ensure data never leaves controlled environments unless explicitly authorized.

- Benchmark against incumbents: Compare latency, cost per token, and developer productivity gains with tools like mkdocs + GPT‑4o or Docusaurus + Claude 3.5.

- Plan for integration: Confirm compatibility with your CI/CD pipeline and content management stack.

- Monitor the ecosystem: Watch open‑source communities for credible releases that address these gaps, then reassess.
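For the benchmarking takeaway, a small harness like the following is enough to compare candidate tools on the same workload. It is a sketch under stated assumptions: `benchmark` is a hypothetical helper, and `render_headings` is a toy stand‑in converter used only so the example runs offline. In a real evaluation you would pass in the function that invokes the tool under test.

```python
import re
import statistics
import time
from typing import Callable


def render_headings(markdown: str) -> str:
    """Toy stand-in for a real converter: turns ATX headings into <hN> tags."""
    def repl(match: re.Match) -> str:
        level = len(match.group(1))
        return f"<h{level}>{match.group(2)}</h{level}>"

    return re.sub(r"(?m)^(#{1,6}) (.+)$", repl, markdown)


def benchmark(fn: Callable[[str], str], payload: str, runs: int = 200) -> dict[str, float]:
    """Time fn(payload) `runs` times and report latency in milliseconds."""
    if runs < 2:
        raise ValueError("runs must be at least 2 to compute percentiles")
    fn(payload)  # warm-up call, excluded from the samples
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn(payload)
        samples.append((time.perf_counter() - start) * 1000)
    # 99 cut points; the inclusive method interpolates within observed data.
    cuts = statistics.quantiles(samples, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "max": max(samples)}
```

Reporting p95 alongside the median matters because LLM‑backed pipelines often have long latency tails that an average would hide. This harness measures sequential calls only; concurrency behavior needs a separate load test.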
