Big Tech's Get-Rich-Quick Scheme for AI: Fire Everyone, Release a Mediocre Model


AI Release Cadence in 2025: How Big‑Tech’s Rapid “Frontier” Updates Shape Enterprise Strategy

In late 2025, the AI landscape is dominated by two high‑profile models—OpenAI’s GPT‑4o and Google’s Gemini 1.5. Both deliver incremental gains over their predecessors but are released at a pace that forces executives to balance short‑term productivity wins against long‑term research investment.

Executive Snapshot

- Model Performance: GPT‑4o improves contextual accuracy by roughly 8 % on the OpenAI‑specific benchmark suite compared with GPT‑3.5, while Gemini 1.5 shows a 12 % lift on multimodal tasks relative to Gemini 1.0.

- Revenue Impact: Microsoft’s integration of GPT‑4o into Office 365 drove a 9 % YoY increase in AI‑enabled subscription revenue; ChatGPT paid tiers grew 15 % month‑over‑month through December 2025.

- C‑suite Dynamics: OpenAI, Google, and Anthropic all reported leadership changes following each major model release, underscoring a strategic pivot toward monetization.

- Operational Gains: Early adopters report up to 45 minutes of daily time savings per employee using GPT‑4o for routine automation pipelines, though firm ROI studies remain limited.

The “Fast‑Track” Release Model: Why It Matters

Rapid iteration (the “fire‑everyone, release a mediocre model” pattern) signals two key industry dynamics:

- Revenue‑Focused Engineering: Teams shift from foundational research to building plug‑in APIs that embed into productivity suites. The payoff is faster time‑to‑market for monetizable features, but the rate of breakthrough innovations slows.

- Talent Volatility: Companies trim staff not directly tied to revenue streams. While this streamlines operations, it erodes deep domain expertise and can stall future innovation cycles.

For executives, the imperative is clear: decide whether to chase incremental productivity gains or invest in foundational capabilities that may pay dividends over a longer horizon.

Strategic Implications for Enterprise Leaders

- Product Portfolio: Adopt the latest frontier model or blend multiple vendors to leverage complementary strengths.

- Talent Management: Create lean, cross‑functional AI squads that can iterate quickly while maintaining rigorous quality controls.

- Financial Planning: Balance API integration costs against projected productivity uplift and revenue opportunities.

- Risk & Compliance: Deploy robust governance to mitigate bias, hallucination risks, and reputational damage.
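Blending multiple vendors implies some routing layer between applications and model APIs. The following is a minimal sketch of one, assuming each vendor is wrapped as a plain Python function that takes a prompt and returns text. The provider names and stub functions are hypothetical placeholders, not real vendor SDK calls. Each task type maps to an ordered list of providers, and the router falls back to the next provider when a call raises.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical provider signature: prompt in, text out, raises on failure.
Provider = Callable[[str], str]


@dataclass
class VendorRouter:
    """Route each task type to an ordered list of providers, falling back on errors."""
    routes: Dict[str, List[str]]
    providers: Dict[str, Provider]
    log: List[str] = field(default_factory=list)

    def run(self, task_type: str, prompt: str) -> str:
        errors = []
        for name in self.routes.get(task_type, []):
            try:
                result = self.providers[name](prompt)
                self.log.append(f"{task_type}:{name}:ok")
                return result
            except Exception as exc:  # a production router would catch vendor-specific errors
                errors.append(f"{name}: {exc}")
                self.log.append(f"{task_type}:{name}:failed")
        raise RuntimeError(f"all providers failed for {task_type!r}: {errors}")


# Stub providers standing in for real vendor SDK calls (hypothetical).
def text_vendor(prompt: str) -> str:
    return f"[text-model] {prompt}"


def multimodal_vendor(prompt: str) -> str:
    return f"[multimodal-model] {prompt}"


def flaky_vendor(prompt: str) -> str:
    raise TimeoutError("simulated outage")


router = VendorRouter(
    routes={
        "report": ["text_vendor", "multimodal_vendor"],
        "image_qa": ["flaky_vendor", "multimodal_vendor"],
    },
    providers={
        "text_vendor": text_vendor,
        "multimodal_vendor": multimodal_vendor,
        "flaky_vendor": flaky_vendor,
    },
)

print(router.run("report", "Summarize Q3 sales"))  # served by text_vendor
print(router.run("image_qa", "Describe chart"))    # flaky_vendor fails, multimodal_vendor answers
```

Keeping the routing table as data makes it cheap to reorder or swap vendors when a new model ships, which matters under a fast release cadence.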

Operationalizing GPT‑4o / Gemini 1.5: A Practical Blueprint

- Define Use Cases: Target high‑impact, low‑complexity tasks such as automated report generation or code scaffolding.

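- Benchmark Baselines: Record how the target tasks perform today (time per task, error rate) so improvement targets have a real baseline. Public leaderboards such as the Vellum LLM Leaderboard (GPQA Diamond, AIME) compare general reasoning and math ability across models, but they do not measure performance on your own workflows.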

- Pilot with a Focused Team: Deploy in one department; track hallucination rates, latency, and user satisfaction over 30 days.

- Scale Gradually: Roll out only when pilot metrics meet thresholds (e.g., < 5 % hallucination rate, > 10 % time savings).

- Governance Integration: Build a model‑watch dashboard that monitors API usage, cost per inference, and compliance alerts.
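The pilot and scale‑up steps reduce to a go/no‑go check on a few aggregated metrics. The following is a minimal sketch, assuming pilot telemetry is collected per day as reviewed response counts, reviewer‑flagged hallucinations, task minutes before and with the model, and a daily p95 latency. The field names and the `evaluate_pilot` helper are hypothetical. The default thresholds mirror the example values in the blueprint (< 5 % hallucinations, > 10 % time savings).

```python
from dataclasses import dataclass
from statistics import mean
from typing import List


@dataclass
class PilotDay:
    """One day of pilot telemetry (hypothetical field names)."""
    responses: int           # model outputs reviewed that day
    hallucinations: int      # outputs reviewers flagged as factually wrong
    baseline_minutes: float  # time the same tasks took before the pilot
    pilot_minutes: float     # time the tasks took with the model
    p95_latency_s: float     # that day's 95th-percentile API latency


def evaluate_pilot(days: List[PilotDay],
                   max_hallucination_rate: float = 0.05,
                   min_time_savings: float = 0.10) -> dict:
    """Aggregate pilot days and decide whether the rollout thresholds are met."""
    if not days:
        raise ValueError("no pilot data")
    reviewed = sum(d.responses for d in days)
    flagged = sum(d.hallucinations for d in days)
    # Treat "nothing reviewed" as failing rather than dividing by zero.
    hallucination_rate = flagged / reviewed if reviewed else 1.0
    baseline = sum(d.baseline_minutes for d in days)
    with_model = sum(d.pilot_minutes for d in days)
    time_savings = (baseline - with_model) / baseline if baseline else 0.0
    return {
        "hallucination_rate": hallucination_rate,
        "time_savings": time_savings,
        # Average of daily p95 values: a rough trend, not a true 30-day p95.
        "avg_daily_p95_latency_s": mean(d.p95_latency_s for d in days),
        "scale_up": (hallucination_rate < max_hallucination_rate
                     and time_savings > min_time_savings),
    }


pilot = [PilotDay(120, 4, 600, 480, 2.1), PilotDay(150, 5, 700, 560, 1.9)]
print(evaluate_pilot(pilot))
```

In this toy data, 9 of 270 reviewed outputs are flagged (about 3.3 %) and task time falls by 20 %, so both thresholds pass. In practice the hallucination count depends entirely on how reviewers label outputs, so the review protocol deserves as much attention as the threshold itself.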

Cost–Benefit Snapshot: GPT‑4o for Report Automation

- API Rate: $0.02 per 1,000 tokens (OpenAI pricing tier).

- Average report length: 5,000 tokens.

- Monthly volume: 200 reports across the enterprise.

- Monthly API spend: 200 × 5,000 ÷ 1,000 × $0.02 = $20.

- Time saved: 45 minutes per report × 200 reports → 150 hours/month.

- Labor value of time saved (average $45/hr): 150 × $45 = $6,750/month.

- Annualized savings: $81 k ($6,750 × 12) vs. $240/year API spend ($20 × 12) → net benefit ≈ $80.8 k/year.

This model assumes no hallucination or integration overheads; real‑world ROI may be lower but remains compelling for many enterprises.
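The snapshot's arithmetic can be parameterized so each input is easy to challenge. The following is a minimal sketch. The defaults are the snapshot's own figures, and the function name is hypothetical. It treats the 200 reports as a monthly volume, which is the reading under which the snapshot's $20/month API spend and 150 hours/month of savings are consistent. If 200 reports are produced every working day instead, pass the monthly total (for example 200 × 22, assuming about 22 working days). The single blended per‑token rate is also taken from the text. Vendors typically price input and output tokens differently, so the rate should come from the current price list.

```python
def report_automation_roi(reports_per_month: int = 200,
                          tokens_per_report: int = 5_000,
                          usd_per_1k_tokens: float = 0.02,
                          minutes_saved_per_report: float = 45,
                          labor_usd_per_hour: float = 45) -> dict:
    """Monthly API cost vs. value of staff time saved (ignores review and integration costs)."""
    api_monthly = reports_per_month * tokens_per_report / 1_000 * usd_per_1k_tokens
    hours_saved_monthly = reports_per_month * minutes_saved_per_report / 60
    labor_value_monthly = hours_saved_monthly * labor_usd_per_hour
    return {
        "api_monthly_usd": api_monthly,
        "hours_saved_monthly": hours_saved_monthly,
        "labor_value_monthly_usd": labor_value_monthly,
        "net_annual_usd": 12 * (labor_value_monthly - api_monthly),
    }


# Snapshot figures: about $20/month API spend, 150 hours and $6,750/month saved.
print(report_automation_roi())
# Same inputs if 200 reports are produced on each of ~22 working days (an assumption).
print(report_automation_roi(reports_per_month=200 * 22))
```

With the defaults, the net annual benefit comes out at about $80,760, matching the ≈ $80.8 k in the text. Like the snapshot, the sketch assumes every saved minute turns into productive work, so it is closer to an upper bound than an estimate.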

Decision Framework: Adopt Now or Invest in Research?

Scores by criterion (1–5):

- Strategic Fit: 4

- Revenue Impact: 3

- Talent Attrition Risk: 2

- Long‑Term Innovation Alignment: 1

- Governance Readiness: 3

An unweighted total of 13/25 (4 + 3 + 2 + 1 + 3) suggests a cautious adoption path: pilot in controlled environments while preserving investment in foundational research teams.
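The 13/25 figure is the plain sum of the five criterion scores (4 + 3 + 2 + 1 + 3). If some criteria should count more, a weighted version can keep the same 25‑point scale. The following is a minimal sketch. The weights and decision bands are hypothetical illustrations, not values from the framework. It also assumes every criterion is scored so that a higher number favors adoption, since the framework does not say which direction “Talent Attrition Risk” runs.

```python
from typing import Dict, Optional

SCORES: Dict[str, int] = {
    "Strategic Fit": 4,
    "Revenue Impact": 3,
    "Talent Attrition Risk": 2,
    "Long-Term Innovation Alignment": 1,
    "Governance Readiness": 3,
}


def weighted_total(scores: Dict[str, int],
                   weights: Optional[Dict[str, float]] = None) -> float:
    """Weighted mean of 1-5 scores, rescaled to the 5-25 range of the plain sum."""
    if weights is None:
        weights = {name: 1.0 for name in scores}  # equal weights reproduce the plain sum
    total_weight = sum(weights[name] for name in scores)
    weighted_mean = sum(scores[name] * weights[name] for name in scores) / total_weight
    return weighted_mean * len(scores)


def recommendation(total: float) -> str:
    """Hypothetical decision bands; calibrate them to your own risk appetite."""
    if total >= 18:
        return "adopt broadly"
    if total >= 12:
        return "pilot in controlled environments"
    return "defer and invest in research"


plain = weighted_total(SCORES)
print(plain, recommendation(plain))  # equal weights give the 13/25 total

# Hypothetical weighting that emphasizes strategic fit and revenue.
weights = {
    "Strategic Fit": 0.30,
    "Revenue Impact": 0.25,
    "Talent Attrition Risk": 0.15,
    "Long-Term Innovation Alignment": 0.15,
    "Governance Readiness": 0.15,
}
weighted = weighted_total(SCORES, weights)
print(round(weighted, 2), recommendation(weighted))
```

Making the weights explicit forces the leadership team to agree on what matters before the score is computed. This is usually more valuable than the number itself.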

What to Watch in 2026 and Beyond

- Hybrid Ecosystems: Vendors may offer APIs that combine GPT‑4o’s workflow strengths with Gemini 1.5’s multimodal capabilities, creating “multimodal automation engines.”

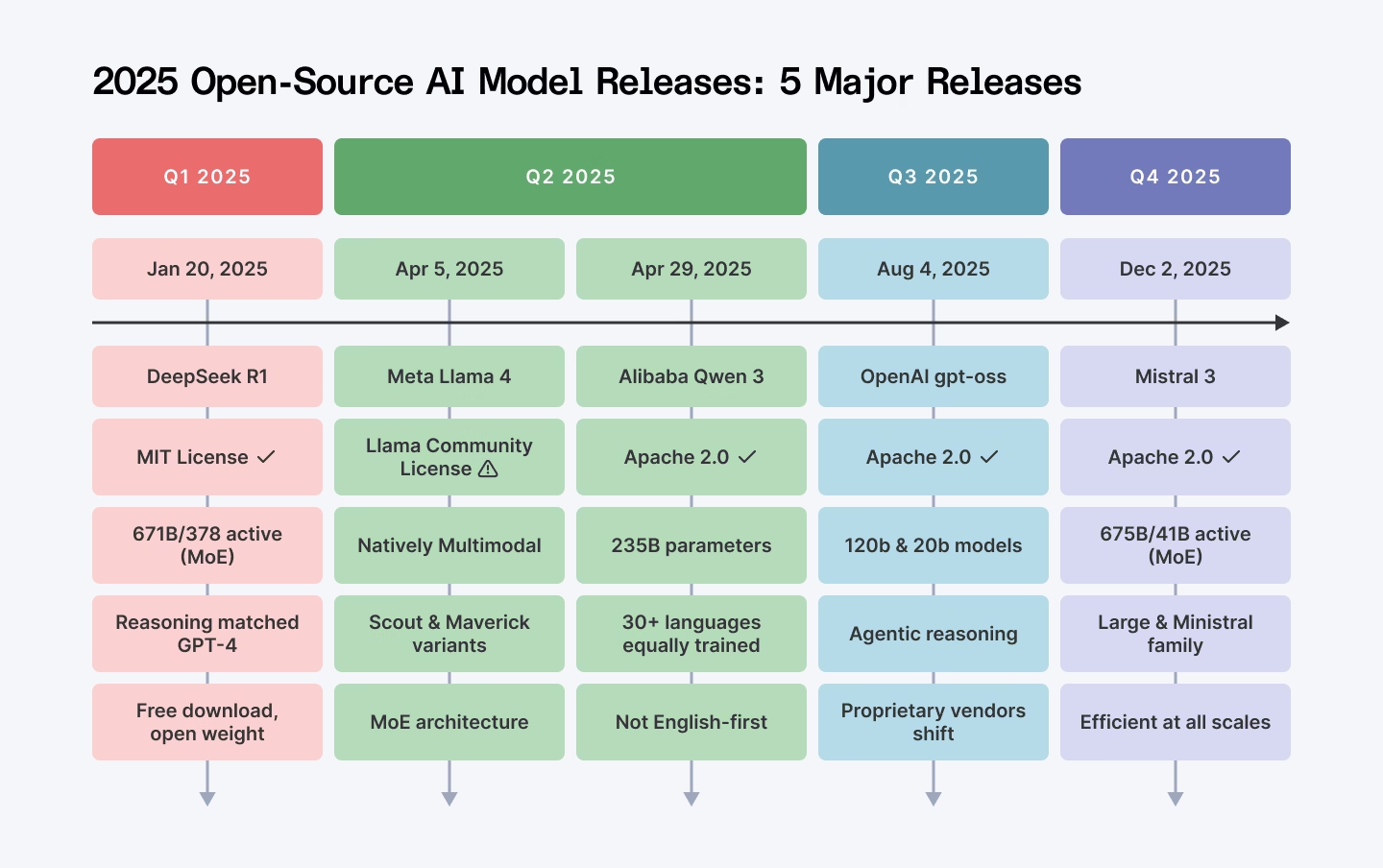

- Open‑Source Momentum: Open‑weight model families such as Llama 3 are expected to accelerate releases, offering cost‑effective alternatives for budget‑constrained segments.

- Regulatory Evolution: Anticipate tighter disclosure requirements around model capabilities, data provenance, and bias mitigation—especially after high‑profile hallucination incidents.

Actionable Takeaways for Enterprise Executives

- Create an AI Adoption Office: A cross‑functional team that evaluates new releases, runs pilots, and enforces governance.

- Track API Spend in Real Time: Dashboards that compare cost against productivity gains keep budgets transparent.

- Invest in Talent Retention: Offer career paths for AI researchers to prevent knowledge drain during rapid release cycles.

- Adopt a Hybrid Vendor Strategy: Combine multiple models to mitigate risk and capture broader functionality.

- Prioritize Governance Early: Build compliance frameworks that can pivot quickly as new models are integrated.
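Tracking API spend in real time means keeping a running ledger of token usage next to the value of time saved, with an alert before the budget runs out. The following is a minimal sketch, assuming each API call can be logged with its token count and an estimate of minutes saved. The class, its fields, and the 80 % alert threshold are hypothetical. The per‑token rate should come from the vendor's current pricing.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class SpendTracker:
    """Running API spend vs. value of time saved (hypothetical dashboard backend)."""
    usd_per_1k_tokens: float
    labor_usd_per_hour: float
    monthly_budget_usd: float
    alert_fraction: float = 0.8  # flag once spend reaches 80 % of the budget
    events: List[Tuple[int, float]] = field(default_factory=list)

    def record(self, tokens: int, minutes_saved: float) -> None:
        """Log one API call: tokens billed and estimated minutes of work saved."""
        self.events.append((tokens, minutes_saved))

    def summary(self) -> dict:
        tokens = sum(t for t, _ in self.events)
        minutes = sum(m for _, m in self.events)
        spend = tokens / 1_000 * self.usd_per_1k_tokens
        value = minutes / 60 * self.labor_usd_per_hour
        return {
            "spend_usd": spend,
            "value_usd": value,
            "value_per_dollar": value / spend if spend else float("inf"),
            "budget_alert": spend >= self.alert_fraction * self.monthly_budget_usd,
        }


tracker = SpendTracker(usd_per_1k_tokens=0.02, labor_usd_per_hour=45, monthly_budget_usd=24)
for _ in range(200):  # e.g. 200 reports of 5,000 tokens each, as in the cost snapshot
    tracker.record(tokens=5_000, minutes_saved=45)
print(tracker.summary())  # ~$20 spend crosses the 80 % line of a $24 budget
```

A real dashboard would read these events from API usage logs rather than manual calls. The minutes‑saved estimate is the weakest input and is worth validating against pilot measurements.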

The 2025 AI release cadence is no longer an industry footnote—it’s a strategic lever. By understanding how rapid model iterations affect revenue, talent, and risk, executives can steer their organizations toward sustainable growth while navigating the fast‑paced world of frontier AI models.
