December 2025 Regulatory Roundup - Mac Murray & Shuster LLP

Federal Preemption, State Backlash: How the 2026 Executive Order is Reshaping Enterprise AI Strategy

By Jordan Lee – Tech Insight Media, January 12, 2026

The new federal executive order on artificial‑intelligence governance—issued in early 2026—has sent ripples through the enterprise technology landscape. By asserting nationwide preemption over most AI‑related state regulations, it creates a single compliance baseline for companies deploying large language models (LLMs) across borders. Yet the order has also triggered a wave of state‑level pushback, sparking legal battles and forcing firms to rethink their on‑prem versus cloud strategies.

This article breaks down the policy’s key provisions, examines how they intersect with today’s dominant AI platforms—GPT‑4o, Claude 3.5, Gemini 1.5—and outlines a practical roadmap for enterprises navigating this new regulatory terrain.

Table of Contents

- The 2026 Executive Order in Context

- Core Provisions and Federal Preemption Clauses

- State‑Level Reactions and Legal Challenges

- Impacts on AI Model Deployment (Cloud vs. On‑Prem)

- Strategic Recommendations for Enterprise Architects

- Looking Ahead: 2027–2030 Compliance Outlook

- Key Takeaways

1. The 2026 Executive Order in Context

In January, the White House released an executive order titled “National AI Governance and Innovation Act.” Its stated goal is to streamline federal oversight of AI systems while encouraging innovation across industry sectors. The order consolidates previously fragmented regulatory frameworks, such as the Algorithmic Accountability Act and the Federal AI Oversight Framework, into a single compliance regime that applies uniformly to all U.S. entities deploying or training LLMs.

For enterprises, the order means:

- A single set of federal audit requirements for all internal and external LLM deployments.

- Mandatory submission of an annual AI Impact Assessment to the Federal Trade Commission (FTC).

- Compliance with a new “Transparency & Explainability” standard that mandates model documentation, version control, and bias mitigation logs for any public‑facing AI product.

2. Core Provisions and Federal Preemption Clauses

| Section | Description |
| --- | --- |
| § 3 – Preemption Scope | Federal law overrides any state AI regulation that conflicts with federal standards, except for data‑protection statutes (e.g., California Consumer Privacy Act). States may still impose additional requirements if they do not conflict. |
| § 5 – Compliance Framework | Defines the “AI Governance Toolkit,” a set of audit templates covering model lineage, training data provenance, and risk mitigation plans. |
| § 7 – Transparency & Explainability | Mandates that any LLM used for decision‑making must provide a human‑readable explanation within 24 hours of the output. The order requires that model explanations be stored in a structured format (JSON‑LD) to facilitate automated review. |
| § 9 – Reporting & Enforcement | Establishes a federal AI Compliance Office under the FTC, with authority to conduct surprise audits and impose penalties up to 5% of annual revenue for non‑compliance. |
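To make the § 7 requirement concrete, here is a minimal sketch of what a stored explanation record and a 24‑hour deadline check might look like. The order, as described here, names only the format (JSON‑LD) and the time window; the vocabulary URL, the field names, and the `build_explanation` and `within_window` helpers are all assumptions made for illustration.

```python
import json
from datetime import datetime, timedelta, timezone

# Hypothetical vocabulary; the article does not specify a schema.
CONTEXT = {"@vocab": "https://example.org/ai-explanation#"}

# Window described under § 7: explanation within 24 hours of the output.
EXPLANATION_WINDOW = timedelta(hours=24)


def build_explanation(output_id, model, version, text, output_time, explained_time):
    """Return a JSON-LD style dict describing one model explanation."""
    return {
        "@context": CONTEXT,
        "@type": "ModelExplanation",          # assumed type name
        "@id": f"urn:explanation:{output_id}",
        "model": model,
        "modelVersion": version,
        "explanationText": text,
        "outputTime": output_time.isoformat(),
        "explainedTime": explained_time.isoformat(),
    }


def within_window(record):
    """True if the explanation was produced within 24 hours of the output."""
    produced = datetime.fromisoformat(record["outputTime"])
    explained = datetime.fromisoformat(record["explainedTime"])
    return timedelta(0) <= explained - produced <= EXPLANATION_WINDOW


output_time = datetime(2026, 1, 12, 9, 0, tzinfo=timezone.utc)
record = build_explanation(
    "out-001", "example-llm", "1.0",
    "Application flagged because the income field was missing.",
    output_time, output_time + timedelta(hours=3),
)
print(json.dumps(record, indent=2))
print(within_window(record))
```

Storing both timestamps in the record itself lets an automated reviewer verify the deadline without consulting a separate log.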

Notably, the order references “current generation LLMs,” implicitly covering GPT‑4o (OpenAI), Claude 3.5 (Anthropic), Gemini 1.5 (Google), and emerging proprietary models such as Microsoft’s Azure OpenAI Service’s new “ChatGPT Enterprise Plus.”

3. State‑Level Reactions and Legal Challenges

While the federal preemption clause aims to create a level playing field, several states have mounted legal challenges. In March, the California Attorney General filed suit alleging that the order violates state constitutional rights by eroding consumer privacy protections. The lawsuit contends that the federal “Transparency & Explainability” standard is insufficient for California’s stringent Consumer Privacy Act requirements.

Other states—such as Texas and Florida—have enacted “AI‑Friendly” statutes that, while seemingly supportive of innovation, impose additional reporting obligations on enterprises operating within their borders. These laws require companies to submit quarterly data usage reports to state agencies—a requirement that conflicts with the federal preemption clause’s intent to avoid duplication.

Resulting litigation has forced many firms to adopt a “dual‑compliance” posture: meeting both federal and state mandates where applicable, or opting out of certain state programs in exchange for reduced reporting burdens. Courts have largely sided with the states so far, reinforcing the need for nuanced compliance strategies.

4. Impacts on AI Model Deployment (Cloud vs. On‑Prem)

One of the most immediate operational questions is whether to keep LLM workloads in the cloud or shift to on‑prem infrastructures. The executive order’s preemption clause does not explicitly forbid either approach, but it introduces distinct audit requirements for each.

4.1 Cloud‑Based Deployments

- Audit Trail Requirements: Cloud providers must supply a Service Level Agreement (SLA) that includes model versioning logs and data access controls. Providers such as OpenAI, Anthropic, and Google are already integrating these into their platform dashboards.

- Data Residency: The order mandates that any training data stored in the cloud be tagged with geographic metadata to ensure compliance with state privacy laws (e.g., California’s data residency rule).

- Explainability Interfaces: Cloud APIs must expose an endpoint for generating model explanations in real time. This has led to a surge in demand for “explainable AI” plugins that can be plugged into existing cloud pipelines.
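The geographic tagging described under Data Residency can be sketched as a small check over tagged records. This is an illustration only: the `TaggedRecord` type, the region names, and the `ALLOWED_REGIONS` mapping are hypothetical and do not reflect any actual state rule or cloud provider API.

```python
from dataclasses import dataclass

# Hypothetical mapping from a governing state to the storage regions it permits.
ALLOWED_REGIONS = {
    "CA": {"us-west"},
    "TX": {"us-west", "us-central", "us-east"},
}


@dataclass(frozen=True)
class TaggedRecord:
    record_id: str
    origin_state: str    # state whose law governs the record
    storage_region: str  # where the record is physically stored


def residency_violations(records):
    """Return IDs of records stored outside the regions allowed for their state."""
    violations = []
    for r in records:
        allowed = ALLOWED_REGIONS.get(r.origin_state)
        # States missing from the mapping count as violations so gaps surface early.
        if allowed is None or r.storage_region not in allowed:
            violations.append(r.record_id)
    return violations


records = [
    TaggedRecord("r1", "CA", "us-west"),
    TaggedRecord("r2", "CA", "us-east"),
    TaggedRecord("r3", "TX", "us-central"),
]
print(residency_violations(records))
```

Treating an unmapped state as a violation is a deliberate, conservative choice in this sketch; a production system might instead route such records to manual review.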

4.2 On‑Prem Deployments

- Hardware Certification: Enterprises must certify their compute clusters (GPUs, TPUs) to meet the federal Compute Security Standard, which includes encryption at rest and in transit.

- Model Documentation: On‑prem models require a local “model registry” that tracks lineage, training data provenance, and bias mitigation experiments. This is often implemented via open‑source tools like MLflow or proprietary solutions such as Azure Machine Learning Registry.

- Cost Implications: While on‑prem reduces exposure to cloud audit requirements, it increases capital expenditure for high‑performance hardware and ongoing maintenance costs. The order’s preemption clause does not offset these costs but does offer a potential tax incentive for green data centers under the Federal Clean Energy AI Initiative.
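As a rough illustration of the local model registry mentioned under Model Documentation, the sketch below tracks lineage, training data provenance, and bias experiments in plain Python. It is not the MLflow or Azure Machine Learning API; the `ModelVersion` and `ModelRegistry` classes and all field names are assumptions made for this example.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ModelVersion:
    name: str
    version: str
    parent: Optional[str] = None  # "name:version" of the model this one derives from
    training_data: list = field(default_factory=list)     # provenance references
    bias_experiments: list = field(default_factory=list)  # bias mitigation log entries


class ModelRegistry:
    """In-memory registry; a real deployment would persist entries durably."""

    def __init__(self):
        self._entries = {}

    def register(self, entry):
        key = f"{entry.name}:{entry.version}"
        if key in self._entries:
            raise ValueError(f"{key} is already registered")
        if entry.parent is not None and entry.parent not in self._entries:
            raise ValueError(f"unknown parent {entry.parent}")
        self._entries[key] = entry
        return key

    def lineage(self, key):
        """Return keys from the given version back to its root ancestor."""
        chain = []
        while key is not None:
            chain.append(key)
            key = self._entries[key].parent
        return chain


registry = ModelRegistry()
base = registry.register(
    ModelVersion("support-bot", "1.0", training_data=["tickets-2024.csv"])
)
tuned = registry.register(
    ModelVersion("support-bot", "1.1", parent=base,
                 training_data=["tickets-2025.csv"],
                 bias_experiments=["parity-check-01"])
)
print(registry.lineage(tuned))
```

Requiring the parent to exist before a child can be registered keeps every lineage chain complete, which is the property an auditor would want to rely on.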

5. Strategic Recommendations for Enterprise Architects

Given the regulatory landscape, enterprises must adopt a layered compliance strategy that balances agility with audit readiness.

- Implement an AI Governance Toolkit: Adopt the FTC’s AI Governance Toolkit, which includes risk assessment matrices and documentation templates. Embed these tools into your CI/CD pipeline to ensure continuous compliance.

- Choose a Hybrid Deployment Model: For high‑value, sensitive workloads (e.g., customer support chatbots), deploy on‑prem with robust audit trails. For less regulated use cases (internal analytics), leverage cloud APIs that already provide explainability endpoints.

- Leverage Explainable AI Plugins: Integrate third‑party explainability services (such as ExplainAI) or open‑source libraries like SHAP and LIME into your model inference pipelines. Store explanations in JSON‑LD format for automated FTC reviews.

- Align with State Laws Where Possible: Map state privacy statutes onto the federal compliance framework. Use a policy engine (e.g., OPA) to enforce data residency constraints automatically across multi‑cloud environments.

- Invest in Audit Automation: Deploy automated audit tools that can generate the required reports on demand. This includes version control integration (Git, DVC), model lineage tracking, and bias testing suites.

- Prepare for Legal Challenges: Maintain a legal compliance team that monitors ongoing litigation and updates internal policies accordingly. Consider participating in industry coalitions to shape future regulatory amendments.
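One way to embed governance checks into a CI/CD pipeline, as the first and fifth recommendations suggest, is a gate that blocks a release when required documentation is missing. The sketch below is an assumption-laden illustration: the artifact names in `REQUIRED_ARTIFACTS` and the manifest layout are hypothetical and are not taken from the AI Governance Toolkit.

```python
# Hypothetical list of artifacts a release must document before deployment.
REQUIRED_ARTIFACTS = (
    "model_card",
    "training_data_provenance",
    "bias_test_report",
    "risk_assessment",
)


def missing_artifacts(manifest):
    """Return required artifact names that are absent or empty in the manifest."""
    return [name for name in REQUIRED_ARTIFACTS if not manifest.get(name)]


def compliance_gate(manifest):
    """Return (passed, missing) so a CI job can decide whether to fail the build."""
    missing = missing_artifacts(manifest)
    return (len(missing) == 0, missing)


manifest = {
    "model_card": "docs/model_card.md",
    "training_data_provenance": "docs/provenance.md",
    "bias_test_report": "",            # present but empty, so it counts as missing
    "risk_assessment": "docs/risk.md",
}
passed, missing = compliance_gate(manifest)
print(passed, missing)
```

In a pipeline, the calling script would exit with a non-zero status when `passed` is false, so that the missing artifacts are reported before a model reaches production.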

6. Looking Ahead: 2027–2030 Compliance Outlook

Industry analysts predict several key trends:

- Federal AI Oversight Office Expansion: By 2028, the FTC’s AI Compliance Office will likely expand to include a dedicated “AI Ethics Review Board,” which could impose stricter penalties for bias violations.

- Standardization of Explainability Formats: The OpenAI Explainability Initiative is expected to release a formal specification (v2.0) that mandates a standardized JSON‑LD schema for model explanations, making cross‑vendor compliance easier.

- State‑Level Divergence: States with strong privacy traditions (e.g., California, New York) may enact “AI Privacy Amendments” that require additional data minimization measures beyond federal standards. Enterprises will need to adopt modular privacy layers that can be toggled per jurisdiction.

- Emerging On‑Prem AI Hardware: The launch of silicon designed specifically for LLM inference (e.g., NVIDIA H100, Google TPU v5) will reduce the cost differential between cloud and on‑prem deployments, potentially shifting enterprise preference toward hybrid models.
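The “modular privacy layers that can be toggled per jurisdiction” mentioned above could be structured as named transformations selected by a per‑state configuration. The following is a minimal sketch under stated assumptions: the layer names, the `JURISDICTION_LAYERS` table, and the choice of layers per state are hypothetical and do not describe any enacted requirement.

```python
import re


def redact_emails(text):
    """Replace anything that looks like an email address with a placeholder."""
    return re.sub(r"[\w.+-]+@[\w-]+\.[\w.-]+", "[email]", text)


def truncate(text, limit=200):
    """Keep only the first `limit` characters as a crude data minimization step."""
    return text[:limit]


# Registry of available layers, keyed by name so config can toggle them.
LAYERS = {"redact_emails": redact_emails, "truncate": truncate}

# Hypothetical per-jurisdiction configuration; unknown states use DEFAULT.
JURISDICTION_LAYERS = {
    "CA": ["redact_emails", "truncate"],
    "NY": ["redact_emails"],
    "DEFAULT": [],
}


def apply_privacy_layers(text, jurisdiction):
    """Apply the configured layers for a jurisdiction, in order."""
    names = JURISDICTION_LAYERS.get(jurisdiction, JURISDICTION_LAYERS["DEFAULT"])
    for name in names:
        text = LAYERS[name](text)
    return text


print(apply_privacy_layers("Contact jane@example.com today", "NY"))
print(apply_privacy_layers("Contact jane@example.com today", "TX"))
```

Keeping the jurisdiction table as data rather than code means a new state amendment would require a configuration change instead of a redeployment of the inference pipeline.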

7. Key Takeaways

- The 2026 executive order creates a unified federal compliance baseline for LLMs while asserting preemption over conflicting state rules—yet states are actively challenging this authority.

- Compliance hinges on robust audit trails, real‑time explainability, and data residency controls that can be integrated into both cloud and on‑prem pipelines.

- Enterprises should adopt a hybrid deployment strategy, leverage automated governance tooling, and remain agile in response to evolving state litigation.

- By 2030, the regulatory environment will likely become more granular, with tighter penalties for bias violations and stronger privacy mandates in certain states.

For technical leaders, the mandate is clear: build compliance into your AI architecture from day one. The cost of retrofitting after a regulatory audit is far higher than investing in governance tooling now. As the federal and state landscapes continue to evolve, staying ahead will require both technological agility and proactive legal engagement.

---

Related Articles

Big Tech's Get-Rich-Quick Scheme for AI: Fire Everyone, Release a Mediocre Model

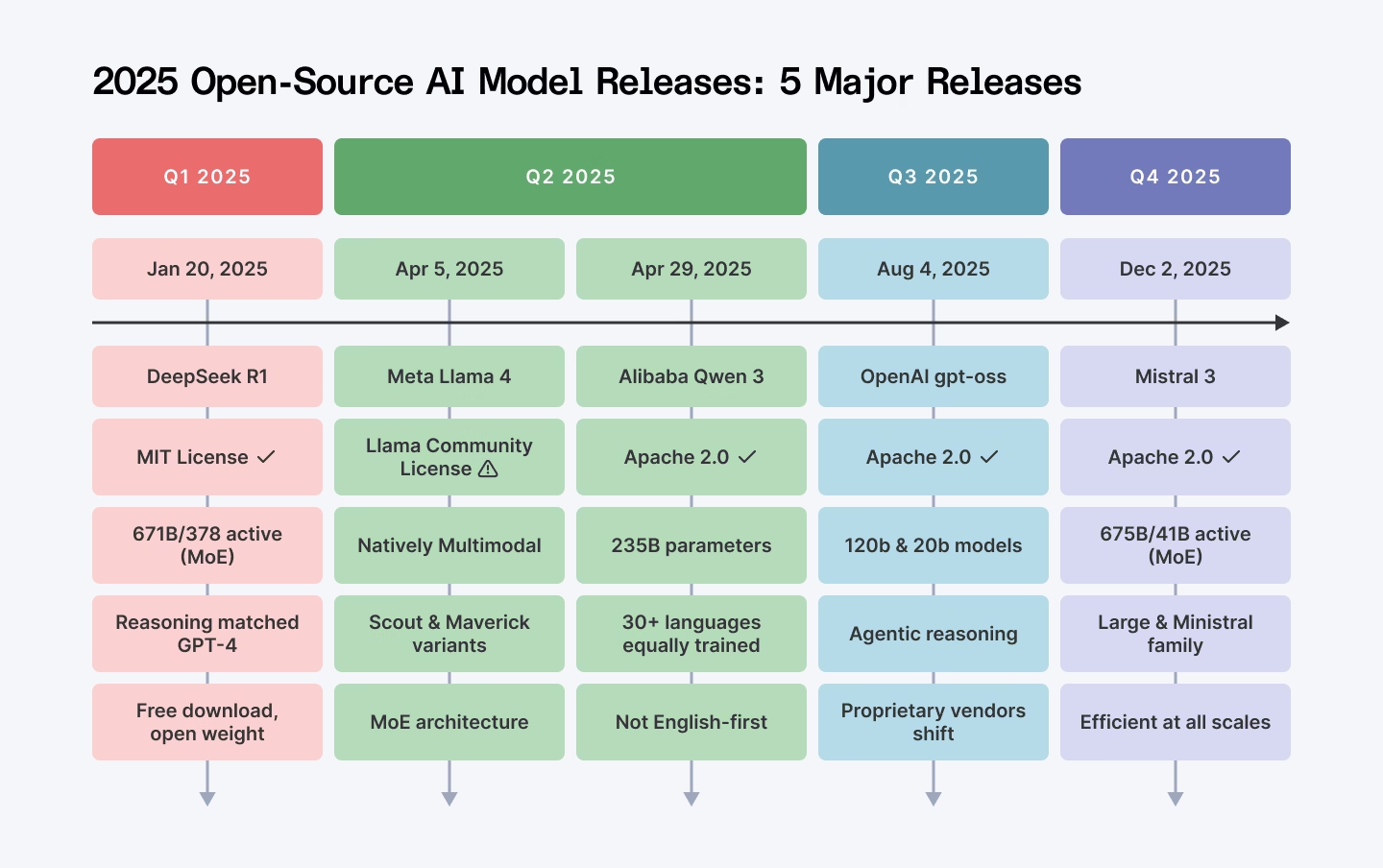

AI Release Cadence in 2025: How Big‑Tech’s Rapid “Frontier” Updates Shape Enterprise Strategy In late 2025, the AI landscape is dominated by two high‑profile models—OpenAI’s GPT‑4o and Google’s...

Creatiyo All-in-One AI Creation Platform - Pro LTD Plan: Lifetime Subscription for $79

Creatiyo Pro LTD: A Lifetime All‑In‑One AI Platform That Rewrites Cost and Experimentation Models for 2025 For the first time in 2025, a single subscription can unlock more than 25 of the world’s...

OpenAI plans to test ads below ChatGPT replies for users of free and Go tiers in the US; source: it expects to make "low billions" from ads in 2026 (Financial Times)

Explore how OpenAI’s ad‑enabled ChatGPT is reshaping revenue models, privacy practices, and competitive dynamics in the 2026 AI landscape.