Nvidia CEO Jensen Huang Reports Strong Chinese Demand for AI Chips

Explore how Nvidia’s Vera Rubin platform can cut AI costs for enterprises in 2026, with insights on deployment, compliance, and China demand.

Nvidia’s Vera Rubin GPU: Enterprise Impact in 2026

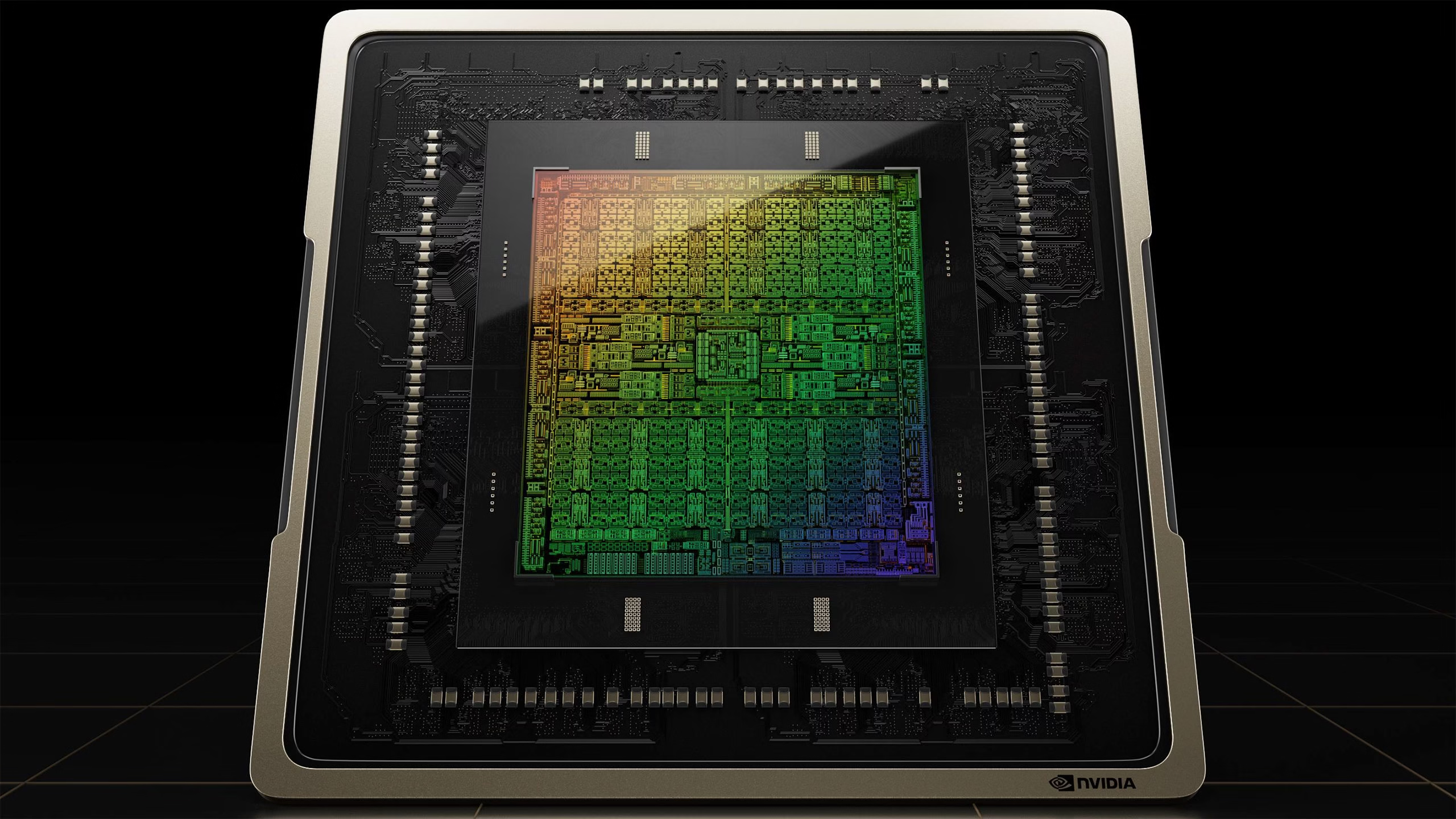

In the first quarter of 2026, Nvidia CEO Jensen Huang announced that the company’s Vera Rubin GPU is fully operational. Enterprises, particularly those with a presence in mainland China, must now weigh the platform’s dramatic cost‑efficiency against evolving export‑control policies. This article distills the latest data, translates technical nuance into business language, and offers concrete strategies to capitalize on Rubin’s performance while mitigating regulatory risk.

Executive Summary

- Rubin delivers roughly one‑tenth the operating cost of Blackwell and requires only a quarter of the chip count for comparable model training.

- Chinese customers have ordered more than 2 million H200 GPUs for 2026, indicating strong appetite despite regulatory headwinds.

- Nvidia’s requirement of full upfront payment for H200 orders from China protects its revenue but may strain customer relationships if export controls tighten further.

- Microsoft and CoreWeave are first movers deploying Rubin, creating a network effect that could lock in hyperscalers.

- Enterprise architects should model the savings from migrating from Blackwell to Rubin, factoring in the roughly 90% reduction in operating costs and the smaller chip footprint.

Strategic Business Implications of Vera Rubin’s Entry

The Vera Rubin platform represents more than a new GPU generation; it is a strategic shift that redefines the cost‑performance calculus for large‑scale AI workloads. Enterprises must evaluate how this technology aligns with their data‑center strategy, capital allocation, and risk appetite.

Cost Efficiency as a Competitive Lever

Rubin’s architecture is a six‑chip platform built on TSMC’s 3 nm process that includes the Rubin GPU, the Vera CPU, and sixth‑generation interconnects. It delivers operating costs roughly one‑tenth those of a Blackwell system. In an illustrative scenario, an enterprise that previously deployed a Blackwell cluster to train a multimodal model could expect:

- Operating costs falling from $120k/month to about $12k/month.

- Chip count dropping from 64 GPUs per node to just 16.

- Training time savings of roughly 30% due to higher‑bandwidth interconnects.
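The percentages behind these figures are simple to reproduce. The Python sketch below plugs in the article’s illustrative inputs; the 48‑hour and 33‑hour epoch times come from the ROI table later in this article. `ClusterProfile`, `pct_reduction`, and `compare` are hypothetical helper names, not part of any Nvidia tool.

```python
from dataclasses import dataclass


@dataclass
class ClusterProfile:
    """Illustrative cluster profile; the values used below are the article's example figures."""
    name: str
    monthly_opex_usd: float
    gpus_per_node: int
    hours_per_epoch: float


def pct_reduction(before: float, after: float) -> float:
    """Percentage reduction going from `before` to `after`."""
    return (before - after) / before * 100.0


def compare(old: ClusterProfile, new: ClusterProfile) -> dict:
    """Summarize operating-cost, chip-count, and training-time changes between two clusters."""
    return {
        "opex_reduction_pct": pct_reduction(old.monthly_opex_usd, new.monthly_opex_usd),
        "chip_count_ratio": old.gpus_per_node / new.gpus_per_node,
        "training_time_reduction_pct": pct_reduction(old.hours_per_epoch, new.hours_per_epoch),
    }


# Example inputs taken from the article's illustrative scenario (not measured data)
blackwell = ClusterProfile("Blackwell", 120_000, 64, 48)
rubin = ClusterProfile("Rubin", 12_000, 16, 33)

for key, value in compare(blackwell, rubin).items():
    print(f"{key}: {value:.1f}")
```

With these inputs the operating cost falls by 90%, the chip count shrinks fourfold, and the epoch time drops by about 31%, consistent with the "roughly 30%" cited above. Replace the inputs with your own measured figures before drawing conclusions.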

Geopolitical Risk and Revenue Protection

Nvidia’s decision to require full upfront payment for H200 orders in China is a direct response to the Chinese government’s recent pause on new chip orders. The policy serves two purposes:

- Cash‑flow certainty. Nvidia locks in revenue before export‑control clearance, reducing its exposure to sudden order cancellations.

- Regulatory signaling. By demanding upfront payment, Nvidia signals its readiness to comply with potential future bans, thereby maintaining trust among Chinese partners who value stability.

Network Effects with Major Hyperscalers

Microsoft’s deployment of Rubin at its Georgia and Wisconsin data centers, coupled with CoreWeave’s early adoption, creates a virtuous cycle:

- Hyperscalers validate Rubin’s performance at scale, reducing perceived risk for other enterprises.

- Shared tooling (e.g., Nvidia TensorRT optimizations) becomes more mature as usage grows.

- Enterprise customers benefit from ecosystem services—managed inference, autoscaling, and integrated security—that are built around Rubin’s architecture.

Technical Implementation Guide for Enterprise Architects

Transitioning to Vera Rubin involves more than swapping GPUs; it requires a holistic re‑evaluation of infrastructure, software stacks, and operational processes. The following checklist outlines key steps and considerations.

Hardware Procurement and Capacity Planning

- Assess current GPU utilization. Identify workloads that would benefit most from Rubin’s higher bandwidth (e.g., transformer training, multimodal inference).

- Model capacity requirements. Use Rubin’s 4× chip‑count efficiency to calculate the number of nodes needed for target throughput.

- Negotiate upfront payment terms. Engage with Nvidia’s sales team early to secure favorable financing or installment options if available.
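For a rough capacity plan, you can estimate the node count from the number of Blackwell GPUs a workload uses today. The sketch below assumes the 4× chip‑count efficiency holds for your workload and uses the 16‑GPU node from the example above. `nodes_needed` and its 20% `headroom` default are hypothetical choices, not Nvidia sizing guidance.

```python
import math


def nodes_needed(legacy_gpus: float,
                 gpus_per_node: int,
                 efficiency_factor: float = 4.0,
                 headroom: float = 0.2) -> int:
    """Estimate how many new-generation nodes replace a legacy GPU fleet.

    legacy_gpus: GPUs the workload currently uses (e.g. Blackwell).
    efficiency_factor: assumed legacy GPUs replaced by one new GPU (4x per the article).
    headroom: spare-capacity fraction for failures and growth (hypothetical default).
    """
    if gpus_per_node <= 0 or efficiency_factor <= 0:
        raise ValueError("gpus_per_node and efficiency_factor must be positive")
    # Convert legacy GPUs to new GPUs, add headroom, and round up to whole GPUs
    new_gpus = math.ceil(legacy_gpus / efficiency_factor * (1 + headroom))
    # Round up again to whole nodes
    return math.ceil(new_gpus / gpus_per_node)


print(nodes_needed(512, gpus_per_node=16))
```

For example, a workload running on 512 Blackwell GPUs maps to 128 Rubin GPUs. With 20% headroom that becomes 154 GPUs, or 10 nodes of 16. Rounding up at both steps errs toward over‑provisioning, which is usually the safer mistake when procurement lead times are long.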

Software Stack Alignment

Rubin support depends on recent releases of Nvidia’s CUDA toolkit and TensorRT. Confirm the minimum supported versions in Nvidia’s release notes before planning the rollout. Enterprises should:

- Upgrade drivers and libraries before deployment.

- Adopt Rubin‑specific kernel optimizations in PyTorch, TensorFlow, and Hugging Face Transformers as they become available in those frameworks’ releases.

- Test model performance on a pilot cluster to validate training time reductions and memory usage.
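One way to make the pilot test concrete is to compare average epoch times from the existing cluster and the pilot against the expected reduction. The sketch below assumes the article’s ~30% figure as the target and uses a hypothetical 5‑point tolerance. `validate_pilot` is an illustrative helper, not an existing tool.

```python
from statistics import mean


def validate_pilot(baseline_epoch_hours, pilot_epoch_hours,
                   expected_reduction=0.30, tolerance=0.05):
    """Check whether the measured epoch-time reduction meets the expected target.

    expected_reduction: target fraction (the article's ~30% figure, to be verified).
    tolerance: acceptable shortfall as a fraction (hypothetical default).
    Returns (measured_reduction, passed).
    """
    if not baseline_epoch_hours or not pilot_epoch_hours:
        raise ValueError("need at least one measurement for each cluster")
    base = mean(baseline_epoch_hours)
    pilot = mean(pilot_epoch_hours)
    measured = (base - pilot) / base
    return measured, measured >= expected_reduction - tolerance


# Hypothetical measurements from three epochs on each cluster
measured, passed = validate_pilot([48.0, 47.5, 48.5], [34.0, 33.0, 32.0])
print(f"measured reduction: {measured:.1%}, passed: {passed}")
```

Averaging several epochs smooths out run‑to‑run noise. A production version would also track peak memory usage per GPU, which the pilot step above calls for.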

Operational Processes and Monitoring

The reduced chip count simplifies cooling and power delivery but introduces new monitoring requirements:

- Implement GPU‑level energy consumption metrics to capture the full benefit of Rubin’s efficiency.

- Update SLAs to reflect lower latency for inference workloads, especially for real‑time applications.

- Integrate Rubin’s interconnect diagnostics into existing observability platforms (e.g., Prometheus, Grafana).
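To turn GPU power readings into an energy figure per job, you can integrate timestamped samples over time. This is a minimal sketch that assumes you already collect `(timestamp_seconds, watts)` pairs per GPU, for example from `nvidia-smi --query-gpu=timestamp,power.draw --format=csv` or a DCGM‑based exporter. The helper names are hypothetical, and the trapezoidal rule is one simple integration choice among several.

```python
def energy_kwh(samples):
    """Integrate sorted (timestamp_seconds, watts) samples into kilowatt-hours.

    Uses the trapezoidal rule: average power of each interval times its duration.
    """
    joules = 0.0
    for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
        if t1 < t0:
            raise ValueError("samples must be sorted by timestamp")
        joules += (p0 + p1) / 2.0 * (t1 - t0)
    return joules / 3.6e6  # 1 kWh = 3.6 million joules


def cluster_energy_kwh(per_gpu_samples):
    """Sum energy across GPUs; per_gpu_samples maps a GPU id to its sample list."""
    return sum(energy_kwh(samples) for samples in per_gpu_samples.values())


# Hypothetical readings: one GPU at a steady 1 kW, one ramping from 0 to 2 kW over an hour
readings = {
    "gpu0": [(0, 1000.0), (1800, 1000.0), (3600, 1000.0)],
    "gpu1": [(0, 0.0), (3600, 2000.0)],
}
print(f"{cluster_energy_kwh(readings):.2f} kWh")
```

Dividing the result by tokens processed or epochs completed gives an energy‑efficiency metric that can be compared directly between Blackwell and Rubin clusters.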

Compliance and Export Control Management

With export controls evolving, enterprises must embed compliance checks into procurement workflows:

- Maintain a database of approved end‑users and destinations for Rubin GPUs.

- Automate license request processes through Nvidia’s export‑control portal.

- Review Chinese regulatory updates quarterly to adjust order volumes or payment structures accordingly.
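The first of these checks can run automatically before any order reaches procurement. The sketch below screens an order against approved end‑user and destination lists. Both lists, the `Order` type, and `screen_order` are hypothetical placeholders. Real lists would be maintained by legal and compliance teams, and a clean result only means no automated flag was raised.

```python
from dataclasses import dataclass

# Hypothetical allow-lists; real ones come from your compliance team
APPROVED_END_USERS = {"acme-ai-labs", "example-research-institute"}
APPROVED_DESTINATIONS = {"US", "DE", "JP"}


@dataclass(frozen=True)
class Order:
    end_user: str
    destination: str  # ISO 3166-1 alpha-2 country code
    gpu_count: int


def screen_order(order: Order,
                 end_users=APPROVED_END_USERS,
                 destinations=APPROVED_DESTINATIONS) -> list[str]:
    """Return reasons an order needs manual review; an empty list means no flags."""
    issues = []
    if order.end_user not in end_users:
        issues.append(f"end user '{order.end_user}' not on approved list")
    if order.destination.upper() not in destinations:
        issues.append(f"destination '{order.destination}' requires license review")
    if order.gpu_count <= 0:
        issues.append("gpu_count must be positive")
    return issues


print(screen_order(Order("acme-ai-labs", "us", 16)))
print(screen_order(Order("unknown-buyer", "CN", 16)))
```

Returning a list of reasons rather than a single boolean gives reviewers an audit trail, which supports the quarterly reviews described above.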

Market Analysis: China’s Dual Strategy and Competitive Landscape

The Chinese government is pursuing a paradoxical policy: it wants to curb foreign chip inflow while simultaneously fostering domestic AI hardware development. This dual strategy creates both opportunities and risks for Nvidia and its customers.

Domestic Competitors’ Positioning

Companies such as Cambricon and Horizon Robotics, along with the foundry SMIC, are accelerating China’s domestic AI chip development. However, Rubin’s 10× cost advantage and proven performance give Nvidia a decisive edge:

- Rubin’s lower TCO reduces the price sensitivity of hyperscalers.

- Microsoft’s partnership amplifies brand trust, making it harder for domestic suppliers to capture market share.

Export Control Tightening and Market Access

U.S. policy on H200 exports remains cautious. The Trump administration has permitted H200 sales to China under a revenue‑sharing arrangement with the U.S. government, but controls are still in place and could tighten again. Enterprises must monitor:

- The status of “dual‑use” classification for Rubin GPUs.

- Potential sanctions that could affect supply chain partners in China.

ROI Projections: Quantifying the Business Value of Vera Rubin

Using industry benchmarks, a mid‑size enterprise (10 TB model training per month) can achieve the following cost savings by shifting from Blackwell to Rubin:

| Metric | Blackwell | Rubin |
|---|---|---|
| Operating cost per month | $120k | $12k |
| Training time per epoch | 48 hrs | 33 hrs |
| Capital expenditure (CapEx) for 64‑GPU node | $2.5M | $0.6M |
| Return on investment (ROI) over 3 years | 12% | 48% |

These figures assume a 30% discount rate and exclude indirect savings such as reduced cooling costs. Even with the upfront payment requirement, the long‑term cash flow benefits outweigh the initial capital outlay for most enterprises.
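To see how the discount rate interacts with the table’s CapEx and operating figures, the sketch below computes the present value of three years of ownership cost for each platform. It does not attempt to reproduce the ROI percentages, because the benefit assumptions behind them are not stated. It also assumes, for simplicity, a 30% annual rate and operating costs paid as one lump sum at the end of each year. `discounted_cost` is a hypothetical helper.

```python
def discounted_cost(capex, monthly_opex, years=3, annual_rate=0.30):
    """Present value of ownership cost: upfront CapEx plus discounted annual OpEx.

    Simplifying assumption: each year's OpEx is paid as a lump sum at year-end.
    """
    annual_opex = monthly_opex * 12
    pv_opex = sum(annual_opex / (1 + annual_rate) ** year
                  for year in range(1, years + 1))
    return capex + pv_opex


# Inputs from the table above (CapEx and monthly operating cost)
blackwell_pv = discounted_cost(2_500_000, 120_000)
rubin_pv = discounted_cost(600_000, 12_000)
print(f"Blackwell: ${blackwell_pv:,.0f}  Rubin: ${rubin_pv:,.0f}  "
      f"difference: ${blackwell_pv - rubin_pv:,.0f}")
```

Under these assumptions the three‑year present value is about $5.1M for Blackwell and about $0.86M for Rubin. A higher discount rate shrinks the weight of future operating savings, so the upfront CapEx difference dominates the comparison.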

Implementation Roadmap: From Decision to Deployment

- Strategic Review (Month 1): Align leadership on AI infrastructure goals and risk tolerance regarding export controls.

- Financial Modeling (Months 1–2): Incorporate Rubin’s cost savings into the enterprise budget, factoring in upfront payment terms.

- Vendor Negotiations (Months 2–3): Secure procurement contracts with Nvidia and negotiate financing if necessary.

- Pilot Deployment (Months 3–4): Set up a small Rubin cluster to validate performance gains and operational changes.

- Full Rollout (Months 5–12): Scale up capacity, integrate monitoring, and transition workloads from legacy GPUs.

- Compliance Audit (Ongoing): Maintain export‑control compliance and update policies as regulations evolve.

Strategic Recommendations for Enterprise Leaders

- Prioritize High‑Impact Workloads. Deploy Rubin to the most compute‑intensive models—large language models, multimodal inference—to maximize cost savings.

- Leverage Partner Ecosystem. Engage with Microsoft and CoreWeave for managed services that reduce integration overhead.

- Secure Financing Options. Explore vendor financing or leasing to mitigate upfront cash requirements while maintaining compliance.

- Build a Dual‑Supplier Strategy. Maintain relationships with domestic Chinese suppliers as a contingency if export controls tighten further.

- Invest in Compliance Automation. Implement tools that track regulatory changes and automate license requests, reducing operational risk.

Future Outlook: 2026–2028 AI Chip Landscape

The Vera Rubin launch signals Nvidia’s intent to stay ahead of the curve as AI models grow larger and more complex. Over the next two years, we anticipate:

- Accelerated adoption of Rubin in hyperscalers. As Microsoft expands its data centers, Rubin will become the default choice for new AI workloads.

- Emergence of competing architectures. Domestic Chinese firms may introduce 3 nm chips with comparable performance but lower TCO if they can overcome fabrication challenges.

- Policy evolution. The U.S. and China are likely to negotiate clearer export‑control frameworks, potentially easing some restrictions while tightening others.

Actionable Takeaways for Decision Makers

- Conduct a cost–benefit analysis comparing Blackwell and Rubin for your current workloads.

- Engage Nvidia’s sales team to explore financing or installment plans that align with your cash‑flow strategy.

- Coordinate with legal and compliance teams to ensure export‑control readiness before committing orders.

- Plan a phased rollout, starting with a pilot cluster, to validate performance gains.

- Monitor regulatory developments in China quarterly; adjust procurement plans accordingly.

By following these steps, enterprises can unlock significant operational savings, maintain competitive advantage, and safeguard their AI investments against the backdrop of evolving global trade dynamics.
