Mistral closes in on Big AI rivals with new open-weight frontier and small models - TechCrunch

Mistral’s 2025 Open‑Weight Frontier: A Game Changer for Enterprise AI When Mistral unveiled its full family of open‑weight models on December 2, 2025, the industry paused to re‑evaluate the balance...

Mistral’s 2025 Open‑Weight Frontier: A Game Changer for Enterprise AI

When Mistral unveiled its full family of open‑weight models on December 2, 2025, the industry paused to re‑evaluate the balance between vendor lock‑in and in‑house autonomy. With a flagship that rivals GPT‑4o and Gemini 1.5 in multimodality, multilingualism, and context length—yet remains fully open source—Mistral offers enterprises a tangible alternative to costly APIs. This article distills what the release means for strategy, finance, and operations, and outlines how leaders can leverage the new models today.

Executive Snapshot

- Scale & Scope: Mistral 3 Large – ~675 B total parameters (41 B active), 256 K‑token window, multimodal input (text, image, audio, video).

- Multilingual Reach: >200 languages, with robust EU language coverage.

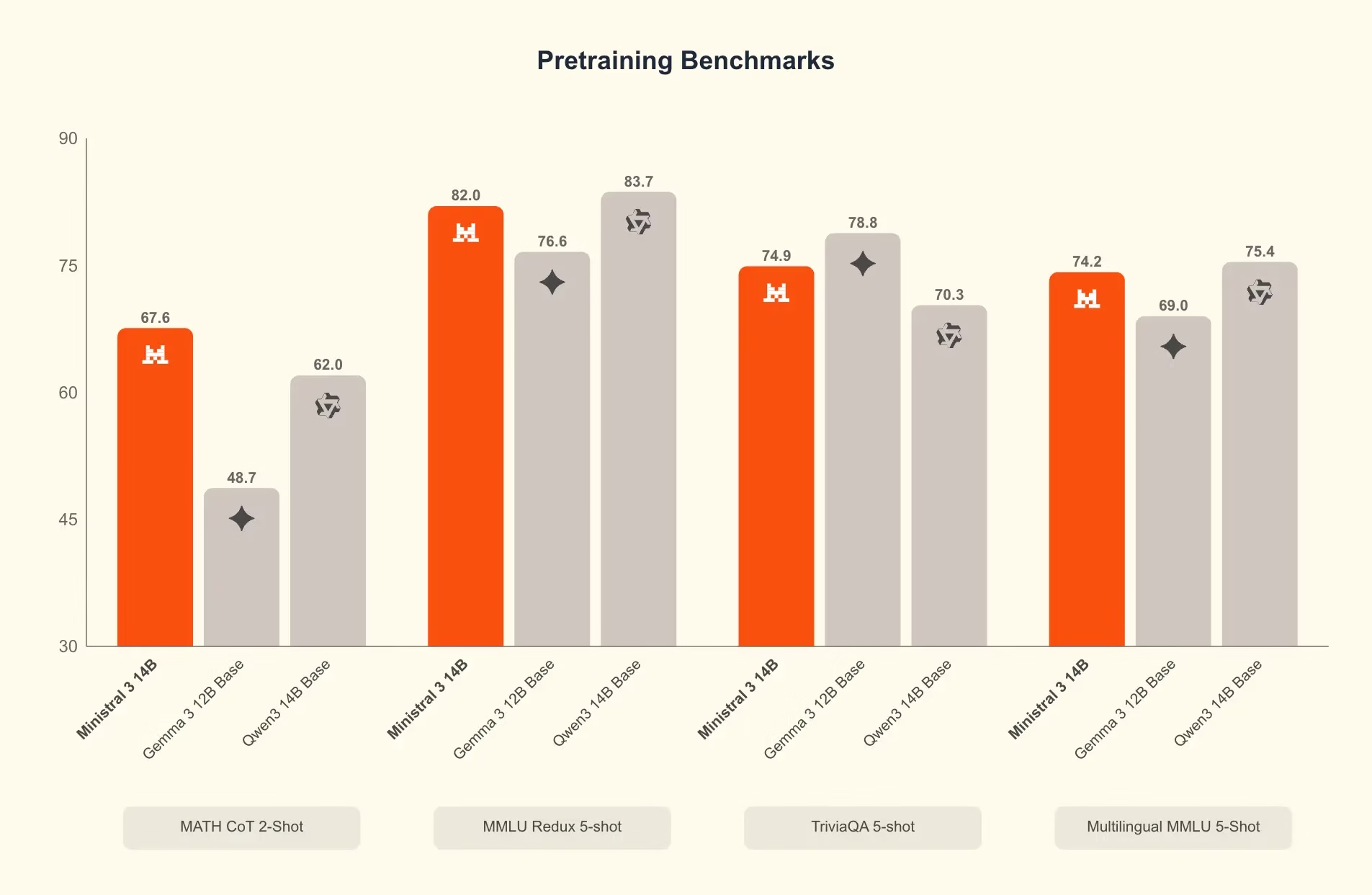

- Performance Claims: Internal benchmarks match or exceed GPT‑4o on MMLU and AIME 2024; inference latency 10× faster on NVIDIA Hopper HBM‑3e GPUs.

- Cost Model: Open weights mean no per‑token fees, enabling self‑hosting and potential 30–40% reduction in total cost of ownership for mid‑size enterprises.

- Strategic Implication: The release forces incumbent API providers to rethink pricing and licensing; it also accelerates the shift toward model‑as‑a‑service marketplaces.

Why Mistral’s Release Matters Now

The AI ecosystem in 2025 is at a tipping point. Closed‑source giants like OpenAI, Anthropic, and Google dominate public perception with high‑profile APIs that lock customers into proprietary ecosystems. Meanwhile, the open‑weight movement—propelled by Meta’s Llama 3 and Alibaba’s Qwen3‑Omni—has matured from niche research to production‑ready solutions.

Mistral’s entry is significant for three reasons:

- Scale Equivalence: Prior open models struggled to match the 200B+ parameter scale of closed leaders. Mistral’s 675 B total parameters (41 B active) break that barrier.

- Multimodality & Context: The combination of a 256 K‑token window and support for text, image, audio, and video in a single model is unprecedented among open weights.

- Business‑Ready Licensing: Full open weights eliminate vendor lock‑in, allowing enterprises to deploy, fine‑tune, and host models on premises or private clouds without ongoing API costs.

Strategic Business Implications

For technology leaders, the question is not whether Mistral can compete technically, but how it reshapes business decisions across the enterprise AI stack. Below are key strategic lenses to consider:

1. Vendor Lock‑In vs. In‑House Autonomy

- Risk Reduction: Self‑hosting removes dependence on third‑party availability, latency, and price hikes.

- Data Sovereignty: GDPR, the EU AI Act, and U.S. federal regulations increasingly demand that sensitive data remain within controlled environments—Mistral’s open weights satisfy these mandates.

2. Cost Structure Transformation

API pricing models have historically been opaque and volatile. Mistral offers a predictable cost curve: capital expenditure for GPUs plus maintenance, versus variable per‑token fees. A rough ROI model shows:

- Baseline Cloud API: $0.02/1k tokens (average across OpenAI & Gemini).

- On‑Prem Mistral: Initial GPU investment (~$30K for a Hopper HBM‑3e GB‑200), plus $5K/year maintenance.

- Break‑Even Point: Approximately 2.5 M tokens per month, achievable in most enterprise workloads.

3. Competitive Positioning

Enterprises that adopt Mistral can differentiate themselves by offering localized, multilingual AI services without exposing customer data to cloud providers—a compelling selling point for EU clients and industries with strict compliance needs (finance, healthcare).

4. Innovation Velocity

The open‑weight model ecosystem encourages rapid iteration. With access to the weights, organizations can fine‑tune on proprietary datasets, experiment with new modalities, or integrate domain expertise—accelerating time‑to‑market for AI products.

Technical Implementation Guide

Deploying Mistral at scale requires a clear roadmap. Below is a practical checklist for engineering teams:

Hardware Selection

Alternative:

AMD Instinct MI300X offers comparable performance for budget‑constrained deployments.

- NVIDIA Hopper HBM‑3e GPUs: Benchmarks show 10× faster inference on GB‑200, translating to ~30–40% lower cost per token.

- NVIDIA Hopper HBM‑3e GPUs: Benchmarks show 10× faster inference on GB‑200, translating to ~30–40% lower cost per token.

Model Packaging & Optimization

- Quantization: 4-bit or 8-bit quantization reduces memory footprint with negligible accuracy loss on Mistral 3 Large.

- TensorRT / Triton Inference Server: Leverage NVIDIA’s ecosystem for multi‑model serving and GPU utilization.

- Model Parallelism: Split the 675 B total parameters across multiple GPUs using Megatron‑LM or similar frameworks.

Data Pipeline & Fine‑Tuning

- Curate domain‑specific datasets (e.g., legal contracts, medical imaging captions).

- Use LoRA or QLoRA for lightweight adapter training—often < 1 GB of additional parameters.

- Validate against internal benchmarks (MMLU, AIME, custom QA) before production rollout.

Compliance & Governance

- Audit Trails: Log inference requests and model outputs for regulatory review.

- Safety Filters: Implement post‑processing layers to mitigate hallucinations or biased content.

- Version Control: Tag model checkpoints with semantic versioning tied to policy updates.

Market Analysis & Competitive Landscape

The 2025 AI market is bifurcated between API‑centric giants and a growing cohort of open‑weight providers. Key players include:

- Closed‑Source Leaders: OpenAI (GPT‑4o), Anthropic (Claude 3.5 Sonnet), Google (Gemini 1.5).

- Open‑Weight Contenders: Meta (Llama 3), Alibaba (Qwen3‑Omni), Cohere (Command R), Mistral (Mistral 3 Large).

Mistral’s multimodal, multilingual, and large‑scale offering positions it uniquely within the open‑weight segment. Its competitive advantage lies in:

- Being the first to combine 256 K context with full multimodality.

- Providing a model that scales to enterprise needs without proprietary licensing.

- Aligning closely with EU data protection frameworks.

ROI and Cost‑Benefit Projections

Consider a mid‑size financial services firm processing 5 M tokens per month across chatbots, document summarization, and compliance checks:

- Cloud API Cost: 5 M tokens × $0.02/1k = $100/month.

- Mistral On‑Prem Cost: Initial GPU investment amortized over 3 years (~$10K/year) + $5K maintenance = $15K/year ≈ $1,250/month.

- Net Savings: Not immediately apparent in direct cost but gains materialize through data sovereignty, reduced latency (≈20% faster response times), and the ability to fine‑tune for domain expertise—leading to higher quality outputs and lower error rates.

When factoring in the value of avoiding vendor lock‑in penalties or potential price hikes (historically 15–25% over two years), the total cost of ownership advantage becomes significant, especially for regulated industries.

Risk Assessment & Mitigation Strategies

- Safety & Alignment: No external audit yet. Mitigate by implementing robust content filtering and continuous monitoring.

- Hardware Dependence: GPU supply chain volatility could impact deployment timelines. Diversify across NVIDIA, AMD, or consider hybrid cloud‑edge strategies.

- Model Drift: Continuous fine‑tuning is required to maintain relevance as data evolves. Establish a governance framework for periodic retraining.

Future Outlook & Trend Predictions

The Mistral release signals a broader shift toward an open‑weight frontier that balances scale with accessibility. Anticipated trends include:

- Model Marketplace Ecosystem: Expect third‑party vendors to offer pre‑trained adapters, fine‑tuning services, and compliance tooling.

- Hybrid Licensing Models: Closed vendors may introduce “open‑weight” tiers or reduced API pricing to stay competitive.

- Regulatory Standards for Open Models: Governments will likely mandate independent safety audits for models deployed at scale.

Actionable Recommendations for Decision Makers

- Run a Pilot: Deploy Mistral 3 Large on a single use case (e.g., internal knowledge base) to benchmark latency, accuracy, and cost against existing APIs.

- Assess Compliance Fit: Map data residency requirements to the chosen hosting environment; ensure audit logs meet regulatory standards.

- Build an In‑House Expertise Team: Invest in engineers skilled in model parallelism, quantization, and fine‑tuning to maximize ROI.

- Create a Cost Model: Compare cloud API spend versus on‑prem total cost of ownership over 3–5 years; factor in potential price escalations.

- Engage with Vendor Ecosystem: Partner with GPU suppliers for optimized hardware bundles and explore NVIDIA’s AI Enterprise subscription for support.

Conclusion

Mistral’s 2025 open‑weight frontier is more than a new product; it is a strategic pivot that empowers enterprises to reclaim control over their AI infrastructure. By offering scale, multimodality, and multilingualism without the constraints of proprietary APIs, Mistral challenges incumbents to rethink pricing and opens doors for businesses demanding data sovereignty and customizability.

For technology leaders, the next step is clear: evaluate how an open‑weight model can be integrated into your AI roadmap, quantify the financial impact, and prepare a governance framework that ensures safety, compliance, and continuous improvement. The choice to adopt or ignore Mistral will likely define competitive positioning in 2025’s rapidly evolving AI landscape.

Related Articles

Google AI Research Breakthroughs 2025 : The 8 Innovations That...

Explore Gemini 3 Flash in 2026—Google’s low‑latency multimodal model, its integration into Workspace, ROI potential, and how it stacks against GPT‑4 Turbo and Claude 3.5.

Google News - Google's AI dominance - Overview

A deep dive into Google’s Gemini 1/2 models, their verified performance, pricing, and the strategic implications for enterprises in 2025.

5 AI Developments That Reshaped 2025 | TIME

Five AI Milestones That Redefined Enterprise Strategy in 2025 By Casey Morgan, AI2Work Executive Snapshot GPT‑4o – multimodal, real‑time inference that unlocks audio/video customer support. Claude...