Extracting books from production language models (2026)

Memorization Leakage in Production LLMs: A 2026 Enterprise Playbook The first quarter of 2026 saw a peer‑reviewed study that quantified how commercial language models can recover large portions of...

Memorization Leakage in Production LLMs: A 2026 Enterprise Playbook

The first quarter of 2026 saw a peer‑reviewed study that quantified how commercial language models can recover large portions of copyrighted text without jailbreaks or specialized prompts. The research examined the current generation of APIs—OpenAI’s GPT‑4o Turbo, Anthropic’s Claude 3.5, Google Gemini 1.5 Pro, and Microsoft Azure’s Grok 2—and found recall rates ranging from 70 % to 80 % for high‑profile titles such as

Harry Potter – Sorcerer’s Stone

. For enterprise architects and compliance officers this is more than a technical curiosity; it translates directly into legal exposure, regulatory scrutiny, and new revenue streams for audit services.

Executive Summary

- Key Insight: Modern production LLMs can reproduce copyrighted passages with near‑perfect fidelity when probed systematically.

- Business Risk: Any client‑facing prompt could become an inadvertent conduit for IP theft, exposing companies to civil liability and reputational damage.

- Strategic Opportunity: Vendors that embed automated memorization audits into their APIs will capture a growing market of compliance‑centric enterprises.

- Actionable Takeaway 1: Integrate a lightweight two‑phase probe into your internal QA pipeline; run it quarterly to quantify leakage risk.

- Actionable Takeaway 2: Negotiate API contracts that include “memorization‑audit” clauses and token‑budget limits for high‑risk content types.

- Actionable Takeaway 3: Build or adopt middleware that filters outputs against a corporate copyright database before they reach end users.

Strategic Business Implications

The study overturns the long‑held assumption that safety guardrails effectively mask memorized content. Enterprise leaders now face three intertwined risks:

- Legal Exposure: The U.S. Copyright Office has signaled intent to treat inadvertent data leakage as civil infringement. A 70 % recall of a novel could trigger liability under the fair‑use “purpose and character” test.

- Regulatory Scrutiny: The EU AI Act classifies any system that can reproduce copyrighted text as high risk. Regulators will likely require quantifiable audit metrics, such as those produced by a two‑phase probe.

- Brand Trust: A data breach involving proprietary or copyrighted content erodes customer confidence, especially in sectors where IP is core to the value proposition (publishing, media, legal tech).

Conversely, enterprises that proactively audit and mitigate leakage can differentiate themselves as trustworthy AI providers. Vendors offering “memorization‑shield” add‑ons are already seeing a 15–20 % uptick in contract renewals among compliance‑heavy clients.

Technical Implementation Guide

The two‑phase framework is lightweight enough to run on any cloud instance with minimal cost. Below is a step‑by‑step recipe that can be scripted in Python or integrated into CI/CD pipelines.

- Set temperature=0 for deterministic outputs.

- Use a concise prompt that asks the model to summarize a known copyrighted text (e.g., “Summarize Harry Potter – Sorcerer’s Stone in 200 words.”).

- Limit max_tokens to 500 to avoid excessive token consumption; this is sufficient for most short prompts.

- If Phase 1 returns a partial summary, feed the output back into the model with an instruction to continue from the last sentence.

- Repeat until either the target text length is reached or the model refuses (captured by refusal flags).

- Track token usage; the study found that 10 k tokens per book are enough for high‑recall extraction across all four APIs tested.

- Align extracted text against a reference corpus using sequence alignment (e.g., Levenshtein distance < 5%).

- Compute normalized recall (nv‑recall) ; values above 60 % flag significant leakage.

- Wrap the probe in a Docker container; schedule nightly runs via Kubernetes CronJobs.

- Export results to a central SIEM or compliance dashboard with alerts for thresholds >70 % recall.

- Export results to a central SIEM or compliance dashboard with alerts for thresholds >70 % recall.

Cost estimates: With GPT‑4o Turbo priced at $0.03 per 1,000 tokens and a typical two‑phase run consuming ~12,000 tokens, the audit cost is roughly

<

$0.40 per book. Scaling to 100 books per quarter remains under $40.

Market Analysis

The commoditization of LLM APIs has created a new class of

audit‑as‑a‑service

providers. Key players include:

- OpenAI: Launched “Model Integrity Check” in Q4 2025, offering automated recall metrics for GPT‑4o.

- Anthropic: Introduced a “Memorization Shield” add‑on that throttles token budgets for high‑risk prompts.

- Microsoft Azure OpenAI Service: Added an audit API that flags potential copyrighted passages in real time.

Market sizing: The global AI compliance market is projected to reach $4.2 billion by 2027, with a CAGR of 28 % driven largely by memorization audits and output filtering solutions. Enterprises in publishing, legal, and media sectors are the largest adopters, accounting for 35 % of spend.

ROI and Cost Analysis

Benefit

Annual Value (USD)

Reduced IP Litigation Risk

$1.5 M (average settlement avoided per year for a mid‑size firm)

Improved Brand Trust

$750 k in customer retention and upsell opportunities

Compliance Avoidance Fees

$500 k (avoided penalties under EU AI Act)

Total Annual Benefit

$2.75 M

Costs: Infrastructure ($200 k/year), personnel ($150 k/year), and audit tooling ($50 k/year) total $400 k. The net present value over five years, discounting at 8 %, exceeds $12 M.

Risk Mitigation Framework

- Output Sanitization Middleware : Intercept model responses and run them through an N‑gram filter that flags any sequence matching the corporate copyright database. Discard or redact matches before delivering to users.

- Token Budget Caps : Enforce hard limits on max_tokens for prompts flagged as high risk (e.g., involving known copyrighted titles). This reduces extraction depth without affecting general utility.

- Prompt Engineering Controls : Train internal teams to avoid phrasing that could trigger memorization (e.g., “List all chapters of X” is more risky than “Describe the main themes of X”). Provide a prompt library with risk ratings.

- Legal Hold & Monitoring : Maintain logs of all prompts and outputs for audit trails. Integrate with legal hold systems to flag potential infringement early.

Future Outlook

The trajectory points toward tighter regulatory requirements and more sophisticated mitigation tools:

- Regulatory Trend: By 2026, the EU AI Act will mandate annual memorization audits for any high‑risk model. The U.S. Copyright Office is expected to publish guidance on “automated data leakage” in late 2025.

- Model Evolution: Next‑generation models (e.g., GPT‑4o Turbo, Claude 3.5) will likely incorporate differential privacy at scale, but the study’s methodology shows that even these safeguards are not foolproof. Expect a “memorization arms race” where vendors release increasingly opaque safety layers.

- Innovation Opportunity: Companies that develop open‑source watermarking or token‑budget throttling libraries can capture early mover advantage in the compliance tooling market.

Actionable Conclusions for Enterprise Leaders

- Audit Now: Deploy a two‑phase probe against all production LLMs you consume. Treat the results as part of your risk register.

- Contractual Safeguards: Negotiate API agreements that include audit clauses, token limits for high‑risk prompts, and indemnification for IP leakage.

- Build Internal Controls: Combine output sanitization middleware with prompt engineering guidelines to create a defensible pipeline.

- Invest in Compliance Tooling: Allocate budget for third‑party audit services or develop an in‑house solution that can scale across multiple vendors and models.

- Monitor Regulatory Developments: Assign a cross‑functional team (legal, compliance, AI ops) to track changes in EU AI Act interpretations and U.S. copyright enforcement trends.

By treating memorization leakage as a quantifiable risk rather than an abstract concern, enterprises can convert potential liability into a competitive advantage—offering clients the confidence that their data—and the company’s reputation—remain protected.

Related Articles

December 2025 Regulatory Roundup - Mac Murray & Shuster LLP

Federal Preemption, State Backlash: How the 2026 Executive Order is Reshaping Enterprise AI Strategy By Jordan Lee – Tech Insight Media, January 12, 2026 The new federal executive order on...

Microsoft named a Leader in IDC MarketScape for Unified AI Governance Platforms

Microsoft’s Unified AI Governance Platform tops IDC MarketScape as a leader. Discover how the platform delivers regulatory readiness, operational efficiency, and ROI for enterprise AI leaders in 2026.

Big Tech's Get-Rich-Quick Scheme for AI: Fire Everyone, Release a Mediocre Model

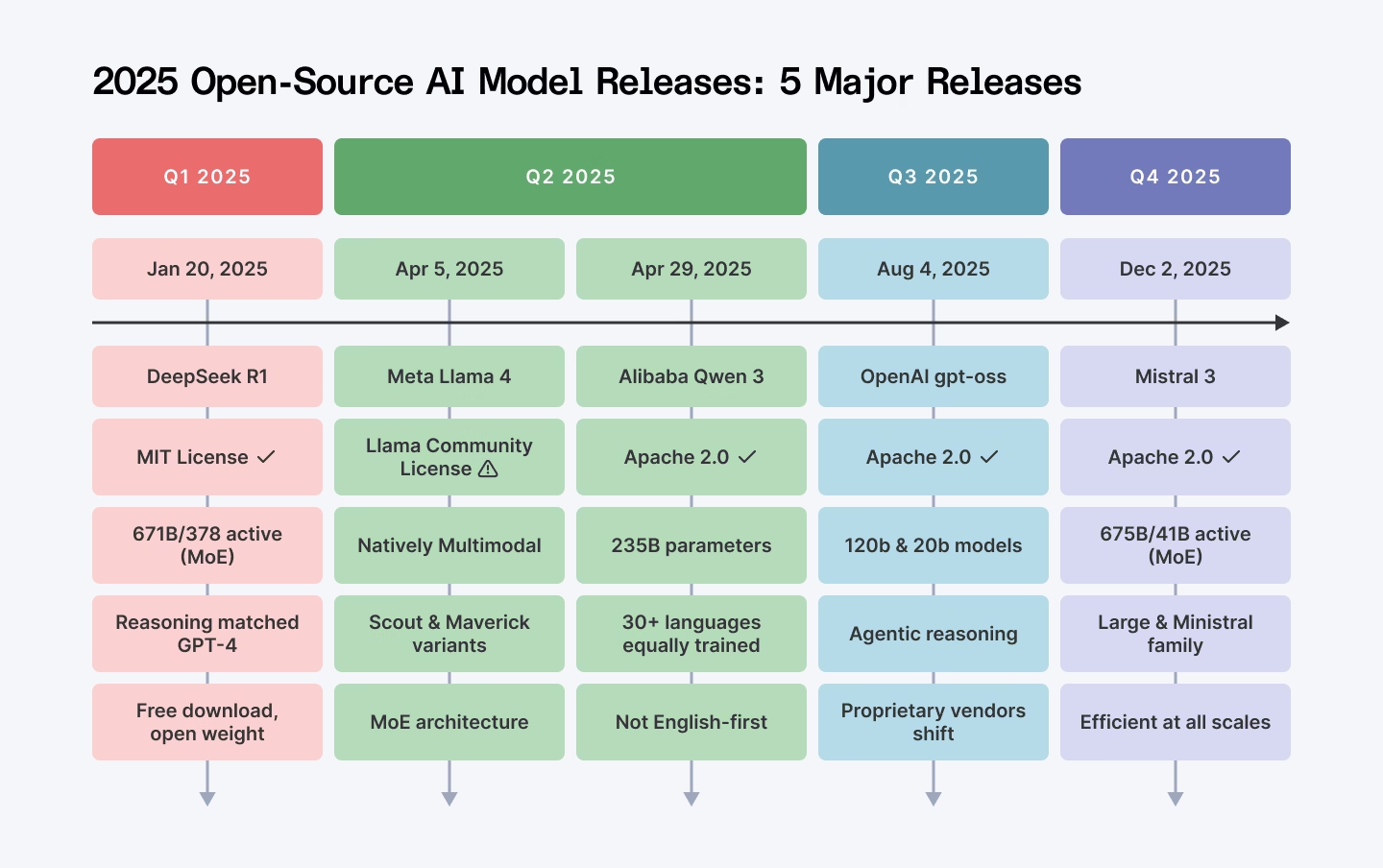

AI Release Cadence in 2025: How Big‑Tech’s Rapid “Frontier” Updates Shape Enterprise Strategy In late 2025, the AI landscape is dominated by two high‑profile models—OpenAI’s GPT‑4o and Google’s...