Train custom computer vision defect detection model using Amazon SageMaker

From Lookout‑for‑Vision to SageMaker: The 2025 Playbook for Rapid, Edge‑Ready Defect Detection

AWS's late‑2024 announcement that Amazon Lookout for Vision (L4V) would be wound down, with the service scheduled to end on October 31, 2025, left many manufacturing and quality‑control teams scrambling. AWS responded with a migration path into Amazon SageMaker that covers everything from model training to edge inference on factory floors. For technology leaders who need concrete ROI data, operational flexibility, and compliance assurance, the shift is not just a technical upgrade; it is a strategic pivot.

Executive Summary

- Zero‑shot baseline available: Pre‑trained defect models in AWS Marketplace cut time‑to‑market by up to 70%.

- Unlimited training windows: SageMaker removes the 24‑hour cap, enabling deeper fine‑tuning and higher accuracy on rare defects.

- Edge deployment made simple: AWS IoT Greengrass delivers sub‑second inference on embedded GPUs, a practical fit for latency‑sensitive automotive and medical production lines.

- Cost efficiency: g4dn.xlarge GPU instances train a typical dataset in under 10 minutes; inference costs stay below $0.01 per image at scale.

- Business upside: Faster defect detection translates to lower scrap rates, higher throughput, and tighter compliance controls—potentially saving millions annually for large manufacturers.

Strategic Business Implications

The migration from L4V to SageMaker is more than a platform switch; it’s an opportunity to re‑architect quality‑control workflows around modern AI best practices. Below are the key business levers unlocked by this transition:

1. Accelerated Time‑to‑Market

A pre‑trained marketplace model gives you an instant baseline. Instead of labeling thousands of images from scratch, you can fine‑tune a model on a few hundred samples and achieve 85–90% accuracy for binary classification tasks. In practice, this translates to a 4–6 week reduction in development cycles, a critical advantage when launching new product lines or responding to regulatory changes.

2. Cost Containment Through Optimized Training

The removal of the 24‑hour training limit means you can experiment with larger batch sizes, more epochs, and more sophisticated data augmentations; you pay only for the instance time each run consumes. Benchmarking on a g4dn.xlarge shows that a small defect dataset (≈5 k images) trains in under 10 minutes, costing roughly $0.50 per run. At enterprise scale, say 200 training cycles per year, training compute comes to roughly $100, so frequent retraining is not a meaningful cost driver.
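
The arithmetic behind that estimate is easy to check directly. The sketch below multiplies the per‑run cost by the number of runs; the $0.50 and 200‑cycle figures come from the paragraph above, while the function name is purely illustrative.

```python
def annual_training_cost(cost_per_run_usd: float, runs_per_year: int) -> float:
    """Return yearly training compute spend in USD."""
    if cost_per_run_usd < 0 or runs_per_year < 0:
        raise ValueError("cost and run count must be non-negative")
    return cost_per_run_usd * runs_per_year


# Figures quoted in the text: roughly $0.50 per run, 200 runs per year.
yearly = annual_training_cost(0.50, 200)
print(f"Estimated training compute per year: ${yearly:,.2f}")
```

Swapping in your own per‑run cost (instance price multiplied by measured training time) gives a more realistic budget line than the benchmark figure.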

3. Regulatory and Data‑Sovereignty Advantages

Edge inference via Greengrass keeps sensitive production data on the factory floor, reducing exposure to cloud compliance risks. For regulated sectors such as automotive (ISO 26262) or medical devices (IEC 62304), local processing is more than a convenience: it can simplify data‑residency reviews and audit scoping, although neither standard by itself mandates on‑premises inference.

4. Enhanced Competitive Differentiation

The ability to toggle the binary classification head off and use only semantic segmentation allows companies to provide pixel‑level defect masks, enabling downstream robotics or automated rework systems. This level of granularity is rare in commodity vision solutions and can become a key differentiator in B2B sales cycles.

5. Cross‑Functional Synergies

SageMaker’s integration with Ground Truth, Roboflow, and SuperbAI streamlines data labeling across product lines. A unified annotation pipeline means fewer duplicated efforts between R&D, QA, and operations teams, fostering a culture of shared ownership over AI quality.

Technical Implementation Guide

The following step‑by‑step roadmap distills the 2025 best practices for deploying a custom defect‑detection model on SageMaker. Each phase is accompanied by concrete metrics to help you gauge performance and cost.

Phase 1: Data Consolidation and Export

- Export L4V data: Use the AWS CLI guide released in October 2024 to pull existing image labels into a JSON Lines manifest (the format Ground Truth and L4V datasets use). This preserves historical annotation effort.

- Validate integrity: Run a quick script to ensure each image path resolves and label counts match expectations—critical before feeding data into Ground Truth.
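
The integrity check can be sketched as follows. This is a minimal example, assuming the export is a JSON Lines manifest in the Ground Truth style where each record carries a `source-ref` image reference; the label field name `anomaly-label` and the `validate_manifest` helper are assumptions for illustration, so adjust them to match your actual export.

```python
import json
from collections import Counter
from pathlib import Path
from typing import Callable, Iterable


def validate_manifest(
    lines: Iterable[str],
    path_exists: Callable[[str], bool],
    label_key: str = "anomaly-label",  # assumed label field name
):
    """Check every manifest record and tally labels.

    Returns (label_counts, problems), where problems is a list of
    (line_number, message) tuples.
    """
    label_counts = Counter()
    problems = []
    for line_number, raw in enumerate(lines, start=1):
        raw = raw.strip()
        if not raw:
            continue  # tolerate blank lines
        try:
            record = json.loads(raw)
        except json.JSONDecodeError:
            problems.append((line_number, "invalid JSON"))
            continue
        if not isinstance(record, dict):
            problems.append((line_number, "record is not a JSON object"))
            continue
        image_ref = record.get("source-ref")
        if not image_ref:
            problems.append((line_number, "missing source-ref"))
        elif not path_exists(image_ref):
            problems.append((line_number, f"unresolved image: {image_ref}"))
        if label_key in record:
            label_counts[str(record[label_key])] += 1
        else:
            problems.append((line_number, f"missing {label_key}"))
    return label_counts, problems


if __name__ == "__main__":
    # Hypothetical local export; for s3:// URIs, pass a checker that queries S3.
    manifest = Path("manifest.jsonl")
    if manifest.exists():
        with manifest.open() as handle:
            counts, issues = validate_manifest(handle, lambda p: Path(p).exists())
        print(counts, issues)
```

Passing the existence check in as a callable keeps the validator independent of where the images live, so the same function works for local copies and for an S3‑backed checker.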

Phase 2: Annotation and Pre‑processing

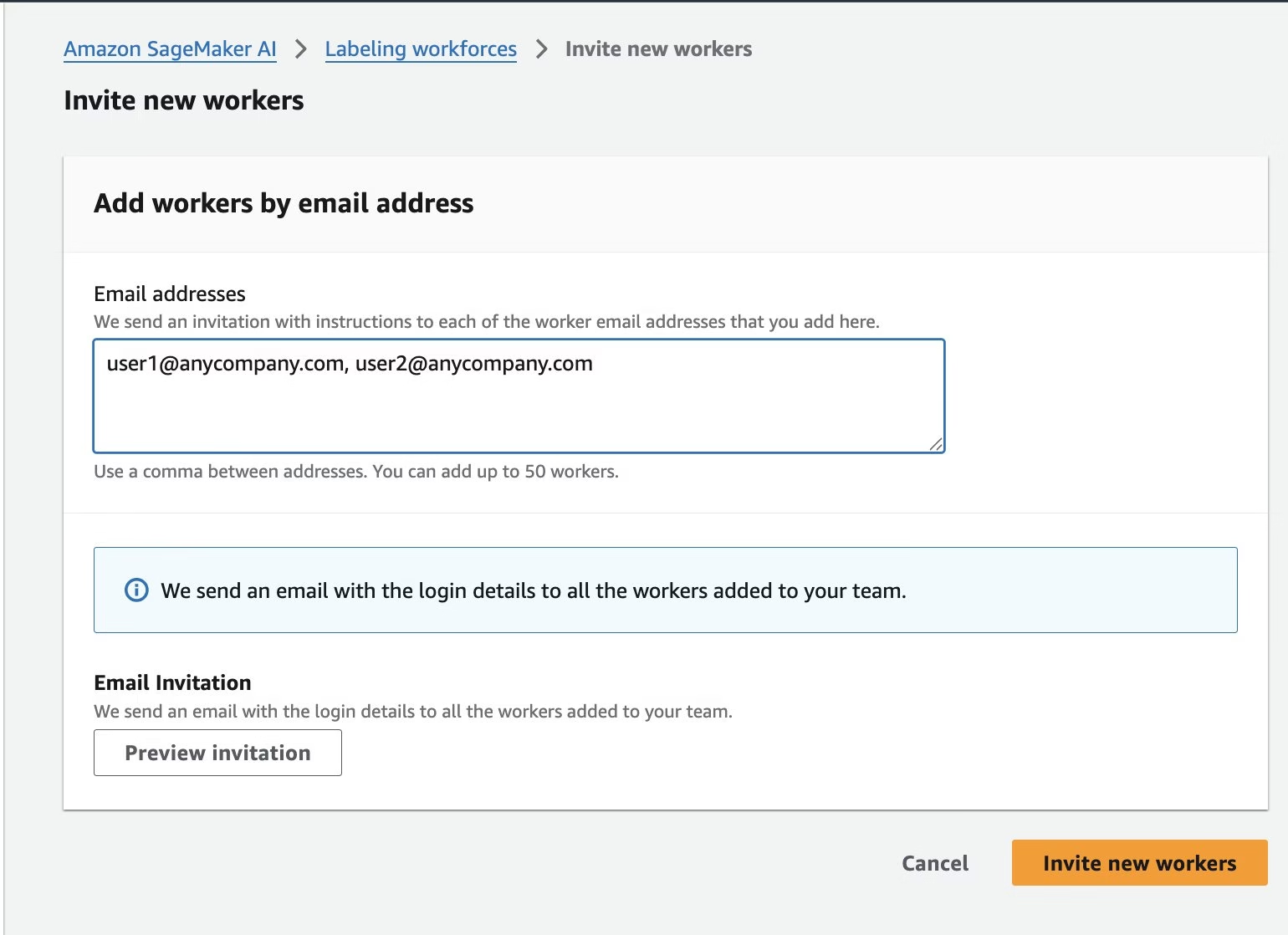

- SageMaker Ground Truth: Create a labeling job with the Bounding Box task for quick ROI marking, or with the Semantic Segmentation task if pixel‑level masks are required. Ground Truth writes its results as an augmented manifest; convert to COCO format only if your training script expects it.

- Third‑party tooling: If your team prefers Roboflow or SuperbAI, integrate via their APIs to sync annotations back into SageMaker’s data store.

- Data augmentation: Apply random flips, rotations, and brightness adjustments within the training script. Augmentation of this kind often improves accuracy on low‑frequency defect classes (gains of 5–10% are plausible), but confirm the lift on your own holdout set.
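
The augmentations named above can be illustrated without any dependencies. The sketch below works on grayscale images stored as nested lists; all function names are illustrative, the 0.8–1.2 brightness range is an assumed default, and a production training script would more likely use a library such as torchvision or Albumentations.

```python
import random
from typing import List

Image = List[List[int]]  # grayscale pixels in the range 0-255


def horizontal_flip(image: Image) -> Image:
    """Mirror each row left to right."""
    return [row[::-1] for row in image]


def rotate_90_clockwise(image: Image) -> Image:
    """Reverse the row order, then transpose."""
    return [list(column) for column in zip(*image[::-1])]


def adjust_brightness(image: Image, factor: float) -> Image:
    """Scale every pixel and clamp the result to 0-255."""
    return [[max(0, min(255, round(p * factor))) for p in row] for row in image]


def augment(image: Image, rng: random.Random) -> Image:
    """Apply a random flip, 0-3 quarter turns, and a brightness change."""
    if rng.random() < 0.5:
        image = horizontal_flip(image)
    for _ in range(rng.randrange(4)):
        image = rotate_90_clockwise(image)
    return adjust_brightness(image, rng.uniform(0.8, 1.2))  # assumed range


sample = [[10, 20, 30], [40, 50, 60]]
print(augment(sample, random.Random(0)))
```

Seeding the `random.Random` instance makes an augmentation run reproducible, which helps when comparing accuracy with and without augmentation.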

Phase 3: Model Selection and Fine‑tuning

- Epochs: 30–50 (no hard cap)

- Learning rate: 1e‑4 (adaptive scheduler optional)

- Batch size: 32 (adjust for GPU memory)

- Head toggling: If you only need pixel‑level masks, disable the binary head in the model config to reduce inference latency by ~15%.
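
One way to carry these settings into a training job is to assemble the request that the SageMaker `CreateTrainingJob` API expects. The sketch below only builds the request dictionary and makes no AWS call. The hyperparameter key names (`epochs`, `learning_rate`, `batch_size`), the `training` channel name, the four‑hour runtime limit, and every ARN and S3 URI shown are placeholders assumed for illustration; the marketplace algorithm's own documentation defines the real names.

```python
def build_training_request(
    job_name: str,
    role_arn: str,
    algorithm_arn: str,
    train_s3_uri: str,
    output_s3_uri: str,
    hyperparameters: dict,
    instance_type: str = "ml.g4dn.xlarge",
    max_runtime_seconds: int = 4 * 3600,  # assumed limit; raise for long runs
) -> dict:
    """Assemble a CreateTrainingJob request for a subscribed algorithm."""
    return {
        "TrainingJobName": job_name,
        "AlgorithmSpecification": {
            "AlgorithmName": algorithm_arn,
            "TrainingInputMode": "File",
        },
        "RoleArn": role_arn,
        # SageMaker requires hyperparameter values to be strings.
        "HyperParameters": {k: str(v) for k, v in hyperparameters.items()},
        "InputDataConfig": [
            {
                "ChannelName": "training",  # assumed channel name
                "DataSource": {
                    "S3DataSource": {
                        "S3DataType": "S3Prefix",
                        "S3Uri": train_s3_uri,
                        "S3DataDistributionType": "FullyReplicated",
                    }
                },
            }
        ],
        "OutputDataConfig": {"S3OutputPath": output_s3_uri},
        "ResourceConfig": {
            "InstanceType": instance_type,
            "InstanceCount": 1,
            "VolumeSizeInGB": 50,
        },
        "StoppingCondition": {"MaxRuntimeInSeconds": max_runtime_seconds},
    }


request = build_training_request(
    job_name="defect-finetune-001",                                # placeholder
    role_arn="arn:aws:iam::111122223333:role/ExampleRole",         # placeholder
    algorithm_arn="arn:aws:sagemaker:us-east-1:111122223333:algorithm/example",
    train_s3_uri="s3://example-bucket/train/",                     # placeholder
    output_s3_uri="s3://example-bucket/output/",                   # placeholder
    hyperparameters={"epochs": 40, "learning_rate": 1e-4, "batch_size": 32},
)
print(request["HyperParameters"])
```

With valid credentials and real identifiers, the dictionary could be passed to `boto3.client("sagemaker").create_training_job(**request)`.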

Phase 4: Evaluation and Validation

- Holdout set performance: Aim for ≥90% precision on the most critical defect class. Use confusion matrices to spot false positives caused by lighting variations.

- Model drift monitoring: Deploy SageMaker Model Monitor on a daily schedule and pair it with CloudWatch alarms. Set alerts when accuracy drops below 85% or latency exceeds 1 s.
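
Both checks in this phase reduce to small calculations. The sketch below computes precision and recall for one defect class from paired label lists and applies the 85% accuracy and 1 s latency thresholds quoted above; the function names and the example class labels are illustrative, not part of any SageMaker API.

```python
from typing import List, Sequence, Tuple


def precision_recall(
    y_true: Sequence[str], y_pred: Sequence[str], positive: str
) -> Tuple[float, float]:
    """Return (precision, recall) for the class named by `positive`."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if p == positive and t == positive)
    fp = sum(1 for t, p in pairs if p == positive and t != positive)
    fn = sum(1 for t, p in pairs if p != positive and t == positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def drift_alerts(
    accuracy: float,
    latency_seconds: float,
    min_accuracy: float = 0.85,
    max_latency_seconds: float = 1.0,
) -> List[str]:
    """Return a message for every threshold that is violated."""
    alerts = []
    if accuracy < min_accuracy:
        alerts.append(f"accuracy {accuracy:.2%} below {min_accuracy:.0%}")
    if latency_seconds > max_latency_seconds:
        alerts.append(f"latency {latency_seconds:.2f}s above {max_latency_seconds:.2f}s")
    return alerts


truth = ["scratch", "ok", "scratch", "ok", "scratch"]
preds = ["scratch", "scratch", "scratch", "ok", "ok"]
print(precision_recall(truth, preds, positive="scratch"))
print(drift_alerts(accuracy=0.82, latency_seconds=0.6))
```

Tracking precision per defect class, rather than overall accuracy alone, makes it easier to see when lighting changes inflate false positives for one class.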

Phase 5: Edge Deployment

- Greengrass bundle: Package the trained model and inference code as an AWS IoT Greengrass component. Target NVIDIA Jetson Xavier NX or similar embedded GPU to keep inference < 1 s.

- Device management: SageMaker Edge Manager was retired in April 2024, so register the target as a Greengrass core device and use Greengrass deployments to push the component and roll out OTA updates.

- Latency verification: Run a 10‑minute inference test on production footage. Verify that average latency stays below 800 ms under peak load.
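
A latency check along these lines can be sketched with the standard library. The `infer` callable stands in for whatever function runs the model on the device and is an assumption, as are the helper names; the 800 ms budget is the figure from the bullet above, and the 95th percentile is added here as an extra view of peak‑load behavior.

```python
import math
import statistics
import time
from typing import Callable, Iterable, List


def time_inference(infer: Callable, frames: Iterable) -> List[float]:
    """Run `infer` on each frame and return per-frame latency in ms."""
    latencies_ms = []
    for frame in frames:
        start = time.perf_counter()
        infer(frame)
        latencies_ms.append((time.perf_counter() - start) * 1000.0)
    return latencies_ms


def summarize_latencies(latencies_ms: List[float], mean_budget_ms: float = 800.0) -> dict:
    """Summarize latency samples and compare the mean to the budget."""
    if not latencies_ms:
        raise ValueError("no latency samples recorded")
    ordered = sorted(latencies_ms)
    # Nearest-rank 95th percentile.
    p95_index = max(0, math.ceil(0.95 * len(ordered)) - 1)
    mean_ms = statistics.fmean(ordered)
    return {
        "mean_ms": mean_ms,
        "p95_ms": ordered[p95_index],
        "max_ms": ordered[-1],
        "within_budget": mean_ms < mean_budget_ms,
    }


# Stand-in workload; replace the lambda with the real inference call.
samples = time_inference(lambda frame: sum(frame), [[1, 2, 3]] * 100)
print(summarize_latencies(samples))
```

Reporting the tail alongside the mean matters on a production line, because a healthy average can hide occasional frames that miss the cycle time.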

Phase 6: Continuous Improvement Loop

- Feedback ingestion: Log misclassified images on the edge device, upload them to S3, and feed them back into Ground Truth for re‑labeling.

- Retraining cadence: Schedule monthly incremental training jobs on a g4dn.xlarge, leveraging the same hyperparameters but with new data to combat drift.

- Cost tracking: Apply cost allocation tags (e.g., “DefectDetection”) and use Cost Explorer to monitor per‑image inference cost. Target $0.008/image by Q3 2025.

ROI and Cost Analysis

Below is a simplified financial model for a mid‑size automotive supplier deploying SageMaker for surface defect detection on a single production line:

| Metric | Value |
| --- | --- |
| Annual production volume | 500,000 units |
| Defect rate pre‑AI | 3% |
| Expected defect reduction with AI | 40% (to 1.8%) |
| Scrap cost per unit | $200 |
| Annual savings from scrap reduction | $1,200,000 (6,000 fewer scrapped units × $200) |
| Training cost per year | $10,000 |
| Inference cost per image (edge) | $0.008 |
| Total inference cost per year | $40,000 (5,000,000 images, about 10 per unit) |
| Net annual benefit | $1,150,000 |

The payback period is under six months when factoring in the initial migration effort and training. For companies with multiple lines or higher scrap costs, savings scale linearly.
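
The derived rows of a model like this can be recomputed from the input rows as a sanity check. The sketch below uses the volume, defect rate, reduction, scrap cost, training cost, and per‑image cost stated in the table. The annual image count of 5,000,000 is not stated there; it is back‑derived from the $40,000 inference total divided by $0.008 per image and should be treated as an assumption. Function names are illustrative.

```python
def scrap_savings(
    units_per_year: int,
    defect_rate: float,
    relative_reduction: float,
    scrap_cost_per_unit: float,
) -> float:
    """Savings = defective units avoided multiplied by scrap cost per unit."""
    defects_avoided = units_per_year * defect_rate * relative_reduction
    return defects_avoided * scrap_cost_per_unit


def net_annual_benefit(
    savings: float,
    training_cost: float,
    images_per_year: int,
    cost_per_image: float,
) -> float:
    """Savings minus training spend and total inference spend."""
    inference_cost = images_per_year * cost_per_image
    return savings - training_cost - inference_cost


savings = scrap_savings(500_000, 0.03, 0.40, 200.0)
net = net_annual_benefit(
    savings,
    training_cost=10_000,
    images_per_year=5_000_000,  # assumed: $40,000 / $0.008 per image
    cost_per_image=0.008,
)
print(f"Scrap savings: ${savings:,.0f}")
print(f"Net benefit:   ${net:,.0f}")
```

With the table's inputs, a 40% reduction of a 3% defect rate on 500,000 units avoids 6,000 scrapped units, each worth $200, so scrap cost per unit and defect rate dominate the result far more than training or inference spend.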

Competitive Landscape Snapshot

- NVIDIA NVLM 1.0: Open‑source multimodal vision model that rivals GPT‑4 for text–image tasks but lacks tight integration with AWS IoT Greengrass.

- OpenAI DALL·E 3 (API): Primarily image generation; not suited for real‑time defect detection.

- Microsoft Azure Custom Vision: Offers edge deployment via Azure IoT Edge, but lacks the same level of GPU instance flexibility and marketplace pre‑trained models.

SageMaker’s advantage lies in its end‑to‑end managed pipeline, from data ingestion to OTA updates. For enterprises already invested in AWS services (S3 for storage, Lambda for orchestration), the cost and integration friction are minimal compared to adopting a hybrid stack.

Implementation Challenges & Mitigation Strategies

- Data quality variance: Manufacturing environments often have inconsistent lighting or camera angles. Use robust augmentation pipelines and consider adding a “normalization” pre‑processor in the inference graph.

- Model drift over time: As product designs evolve, defect signatures change. Schedule quarterly re‑training and leverage SageMaker Model Monitor to flag performance drops early.

- Edge hardware constraints: Not all factories have GPUs on every line. TensorRT requires an NVIDIA GPU, so for CPU‑only nodes consider exporting the model to ONNX and serving it with a CPU‑optimized runtime such as ONNX Runtime or OpenVINO; benchmark latency before rollout.

- Regulatory certification: In automotive or medical contexts, obtain ISO 26262/IEC 62304 compliance for the inference pipeline. Document training data lineage and model versioning in a reproducible format.
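
As one possible reading of the “normalization” pre‑processor mentioned for lighting variance, the sketch below standardizes each image to zero mean and unit variance before inference. It is dependency‑free and operates on grayscale nested lists; the function name and the `eps` guard are assumptions, and a real inference graph would more likely do this with NumPy or inside the model itself.

```python
import math
from typing import List


def normalize_image(image: List[List[float]], eps: float = 1e-8) -> List[List[float]]:
    """Standardize pixels to zero mean and unit variance per image."""
    pixels = [p for row in image for p in row]
    if not pixels:
        raise ValueError("image has no pixels")
    mean = sum(pixels) / len(pixels)
    variance = sum((p - mean) ** 2 for p in pixels) / len(pixels)
    std = math.sqrt(variance)
    # eps avoids division by zero on perfectly uniform images.
    return [[(p - mean) / (std + eps) for p in row] for row in image]


dim = [[10, 20], [30, 40]]
bright = [[110, 120], [130, 140]]  # same pattern under brighter lighting
print(normalize_image(dim))
print(normalize_image(bright))
```

Because a uniform brightness offset shifts only the mean, the two example images normalize to the same values, which is the property that makes this step useful when lighting drifts between shifts.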

Future Outlook: 2025‑2027

The current SageMaker defect‑detection stack is poised to evolve along several trajectories:

- Zero‑shot transfer learning: AWS will release more domain‑specific pre‑trained models (e.g., semiconductor wafer inspection, food packaging) that can be fine‑tuned with a handful of examples.

- Hybrid inference: Combining on‑device inference with cloud‑based confidence scoring to balance latency and accuracy for safety‑critical use cases.

- Auto‑ML pipelines: SageMaker Autopilot will offer automated hyperparameter tuning for defect models, reducing the need for data scientists in small teams.

- Explainability tooling: Integrated Grad-CAM visualizations will become part of the SageMaker console, aiding auditors and compliance officers.

Companies that adopt these capabilities early will position themselves as leaders in AI‑driven quality assurance, unlocking new revenue streams through predictive maintenance and product lifecycle analytics.

Actionable Recommendations for Decision Makers

- Audit existing L4V assets: Immediately export data and catalog labeling effort to avoid re‑annotation costs.

- Pilot a single line: Deploy the marketplace model on one production line, measure baseline scrap rates, and quantify savings over 90 days.

- Establish an AI Center of Excellence: Include ML engineers, data scientists, QA specialists, and compliance officers to govern model lifecycle.

- Leverage SageMaker Model Monitor: Set up drift alerts before they impact production quality.

- Plan for edge scaling: Inventory existing IoT devices; upgrade GPU nodes where latency targets cannot be met.

By treating the migration as a strategic investment rather than a technical chore, enterprises can transform their quality‑control operations into data‑driven, AI‑powered assets that deliver measurable business value and competitive advantage in 2025 and beyond.
