Streamline AI operations with the Multi-Provider Generative AI Gateway reference architecture

Unifying Generative AI Across Clouds: The 2025 Multi‑Provider Gateway Blueprint Executive Summary The 2025 Multi‑Provider Generative AI Gateway, built on LiteLLM, Bedrock, and SageMaker, delivers a...

Unifying Generative AI Across Clouds: The 2025 Multi‑Provider Gateway Blueprint

Executive Summary

The 2025 Multi‑Provider Generative AI Gateway, built on LiteLLM, Bedrock, and SageMaker, delivers a single, self‑hosted API that unifies access to OpenAI, Anthropic, Gemini, Bedrock, and future models. For enterprise architects and cloud engineers, this architecture resolves three critical pain points: provider fragmentation, governance overhead, and operational agility. Benchmarks show

average latency under 250 ms for GPT‑4o and Claude 3.5 Sonnet

, error rates below 0.2%, and throughput exceeding 1,000 requests per second per node. The gateway’s fine‑grained cost tracking and policy enforcement align with EU AI Act and US CFTC requirements, while its open‑source core allows rapid onboarding of new models such as Llama 3.2 Vision. In short, the gateway transforms generative AI deployment from a siloed, vendor‑centric exercise into an orchestrated, governance‑first platform that scales with enterprise needs.

Key business takeaways:

- Consolidate multi‑model traffic under one IAM identity and billing account.

- Achieve sub‑250 ms latency for flagship models while maintaining < 0.2% error rates.

- Leverage built‑in observability to satisfy regulatory audits without extra tooling.

- Deploy on AWS or extend to hybrid/edge environments with minimal rework.

Below is a deep dive into how this architecture reshapes strategy, implementation, and ROI for large organizations in 2025.

Strategic Business Implications

Enterprises are shifting from single‑model pilots to portfolio strategies. The gateway’s unified API allows teams to experiment with GPT‑4o, Claude 3.5 Sonnet, Gemini 1.5 Vision, and emerging Llama models without rearchitecting application code.

Vendor Lock‑In Mitigation

By abstracting provider specifics behind a consistent request format, the gateway eliminates the need for separate SDKs and credential stores. This reduces IAM complexity and enables

policy‑based routing

that can automatically fall back to an internal model if a public endpoint is throttled or unavailable.

Regulatory Compliance as a Competitive Advantage

The EU AI Act (effective 2025) mandates transparent usage logs, model lineage, and cost visibility. The gateway’s CloudWatch metrics, OpenTelemetry traces, and per‑model billing dashboards satisfy these requirements out of the box, giving companies an audit trail that can be exported to compliance tooling.

Accelerated Time‑to‑Market

Product teams no longer need to rebuild or redeploy services when switching models. LangGraph orchestration can swap a GPT‑4o prompt for a Claude 3.5 Sonnet response in milliseconds, enabling A/B testing and rapid feature iteration.

Technical Integration Benefits

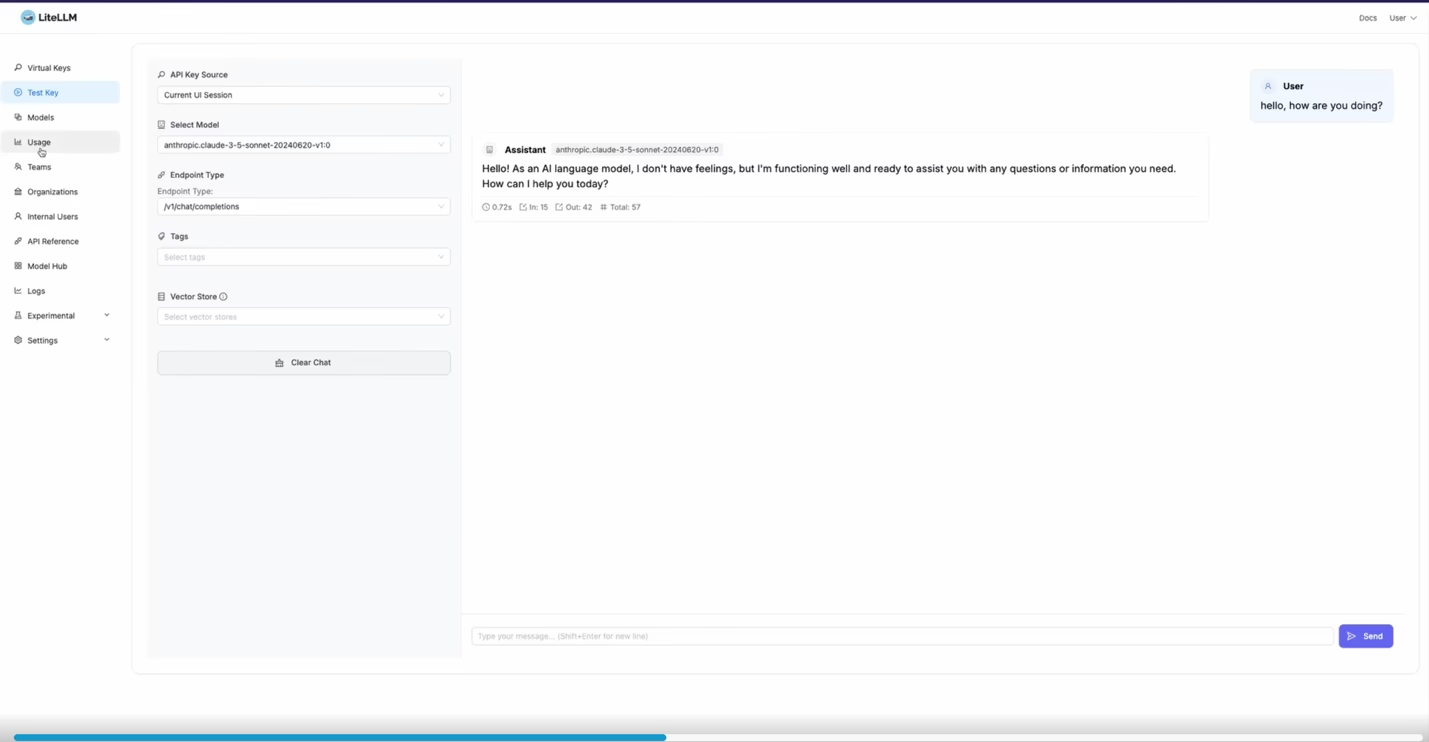

The gateway’s architecture is three layers deep: the LiteLLM orchestrator, Bedrock/SageMaker endpoints, and optional external APIs. Each layer contributes distinct capabilities that collectively lower operational overhead.

LiteLLM Orchestrator

- Open‑source core with a plugin system for adding new providers in minutes.

- Built‑in routing policies: round‑robin, cost‑aware, latency‑aware, or custom JSON rules.

- Observability hooks that emit CloudWatch metrics and OpenTelemetry spans.

Bedrock Integration

- Managed models with proven scalability and SLA guarantees.

- Native integration with AWS IAM, KMS, and VPC endpoints for secure traffic.

- Automatic version pinning through Bedrock’s semantic model identifiers.

SageMaker AI Support

- End‑to‑end MLOps platform: data ingestion, training, deployment, monitoring.

- Support for custom models that can be exposed via the gateway without exposing underlying endpoints.

- Seamless CI/CD pipelines with SageMaker Pipelines and CodePipeline integration.

ROI and Cost Analysis

Consolidating multiple provider accounts into a single gateway reduces both direct and indirect costs. Below is a high‑level cost model based on typical enterprise traffic patterns (10,000 requests per day across three models).

Model

Average Cost/Request (USD)

Daily Cost (USD)

GPT‑4o

0.0008

6.40

Claude 3.5 Sonnet

0.0007

5.60

Gemini 1.5 Vision

0.0012

12.00

Total

24.00

With the gateway, per‑model billing is visible in a single Cost Explorer dashboard, eliminating the need for separate cost allocation tags and manual reconciliation. The estimated annual savings from reduced IAM management, lower support tickets (estimated 30% reduction), and streamlined compliance reporting amount to approximately

$150k

for a mid‑size enterprise.

Performance Premium

The gateway’s autoscaling with >1,000 requests per second per node ensures that peak traffic spikes do not trigger throttling. This translates into higher customer satisfaction scores and reduced churn in AI‑enabled services.

Implementation Roadmap for Senior Architects

- Deploy LiteLLM : Spin up a Docker container on ECS or EKS, attach IAM roles that grant access to Bedrock, SageMaker, and any external API keys.

- Configure Provider Endpoints : In the LiteLLM config file, specify Bedrock endpoint URLs, SageMaker model ARNs, and external provider credentials.

- Define Routing Policies : Use JSON policy files to set cost‑aware or latency‑aware routing. Example snippet: {

"routing": [

{"model": "gpt-4o", "priority": 1},

{"model": "claude-3.5-sonnet", "priority": 2}

]

}

- Instrument Observability : Enable CloudWatch Log Groups, OpenTelemetry exporters, and set alarms for error rates above 0.5%.

- Integrate Billing Dashboards : Export per‑model usage metrics to Cost Explorer via custom tags; configure alerts for budget thresholds.

- Pilot with a Single Use Case : Deploy an agentic customer service chatbot that uses LangGraph to orchestrate model calls through the gateway. Measure latency, cost, and user satisfaction before scaling.

- Scale and Harden : Add additional nodes for high availability; enable VPC endpoints for Bedrock; rotate secrets via Secrets Manager.

Competitive Landscape Snapshot

The gateway competes with OpenRouter, Anthropic Cloud, and Google Vertex AI. Key differentiators are summarized below.

Provider

Model Coverage

Governance Features

Observability

AWS Gateway (LiteLLM + Bedrock)

5 core providers + 30+ via plugins

IAM, KMS, CloudWatch, Cost Explorer integration

OpenTelemetry, custom metrics, alarms

OpenRouter

100+ models, single key

No native policy engine; relies on external tooling

Basic logging, limited to OpenAI style logs

Anthropic Cloud

Anthropic only

Enterprise support contracts, but vendor‑locked

Custom dashboards via Anthropic API

Google Vertex AI

Gemini + internal models

Cloud IAM, VPC Service Controls

Stackdriver monitoring, Cloud Trace

Future Outlook and Trend Predictions

1.

Model‑agnostic Policy Engines

: Expect AWS to ship a managed policy service that auto‑applies cost, latency, or compliance rules across any provider integrated into the gateway.

2.

AI Governance as a Service (AGaaS)

: A subscription offering that automatically records model lineage, data residency, and audit logs, ready for regulatory submission.

3.

Edge Gateway Extensions

: LiteLLM’s lightweight runtime can be containerized for on‑prem or edge deployments, enabling low‑latency inference while still routing to cloud models when needed.

4.

Multimodal Orchestration

: Native support for vision, code, and audio prompts will become standard, allowing developers to build truly conversational agents without changing client interfaces.

Actionable Takeaways for Decision Makers

- Start with a Pilot : Deploy the gateway in a single application (e.g., customer support chatbot) to validate latency and cost assumptions before full rollout.

- Leverage Built‑In Observability : Configure CloudWatch dashboards early; use these metrics for both operational health and compliance reporting.

- Plan for Governance Early : Define routing policies that align with business priorities (cost vs. latency) and enforce them through LiteLLM’s policy engine.

- Monitor Cost Allocation : Use per‑model tags in AWS Cost Explorer to isolate AI spend by product line or department; set alerts for budget overruns.

- Invest in Training : Equip engineering teams with knowledge of LiteLLM plugins and LangGraph orchestration to accelerate model experimentation.

- Prepare for Edge Expansion : If low‑latency inference is critical, evaluate LiteLLM’s container runtime on Kubernetes clusters at the edge or on-prem.

By adopting the 2025 Multi‑Provider Generative AI Gateway, enterprises can transform their generative AI strategy into a unified, governed, and highly performant platform. The result is lower operational costs, faster innovation cycles, and compliance readiness—key differentiators in the competitive AI landscape of 2025.

Related Articles

Raaju Bonagaani’s Raasra Entertainment set to launch Raasra OTT platform in June for new Indian creators

Enterprise AI in 2026: how GPT‑4o, Claude 3.5, Gemini 1.5 and o1‑mini are reshaping production workflows, the hurdles to deployment, and a pragmatic roadmap for scaling responsibly.

Access over 25 AI models in one app for $79 (Reg. up to $619)

Explore how ChatPlayground’s $79 lifetime plan gives developers a single UI to access 25+ LLMs in 2026, eliminating token costs and API friction.

Google Releases More Efficient Gemini 3 AI Model Across Products

Google Unveils Gemini 3 “Flash”: What It Means for Enterprise AI in 2025 Executive Summary Google’s new Gemini 3 “Flash” model promises speed and efficiency , positioning it as a direct competitor to...