“Periodic table of machine learning” could fuel AI discovery


Unveiling the 2025 Periodic Table of Machine Learning: A Strategic Blueprint for Enterprise AI

Executive Summary

The MIT-led “Periodic Table of Machine Learning” (PTML) introduces a unifying mathematical framework that encapsulates over twenty classical algorithms—from clustering and kernel methods to decision trees—into a single equation. This breakthrough reframes algorithm selection from trial‑and‑error to systematic synthesis, enabling rapid prototyping, improved interpretability, and new commercial avenues such as algorithm-as-a-service. In 2025, enterprises can leverage PTML to accelerate model innovation, cut engineering costs, and meet regulatory demands for explainable AI across finance, healthcare, and autonomous systems.

Strategic Business Implications of a Unified Algorithm Taxonomy

For technology leaders, the PTML is more than an academic curiosity; it represents a new tool in the AI product portfolio. The framework’s core insight—a single equation that underpins diverse learning paradigms—offers several strategic advantages:

- Accelerated Innovation Cycle : Teams can iterate on algorithmic combinations without re‑deriving theoretical foundations, which could shorten time-to-market by an estimated 30–50% in typical model development pipelines.

- Regulatory Compliance Edge : PTML’s explicit mapping of learning objectives (e.g., pairwise similarity vs. conditional independence) simplifies audit trails for AI systems in regulated sectors, in line with the EU AI Act and U.S. FTC guidance.

- New Revenue Streams : Vendors can package “algorithm packs”—clusters of related mathematical relationships—as modular services on cloud platforms, mirroring the success of model zoos but with deeper customization for enterprise use cases.

- Competitive Differentiation through IP : Early adopters can patent novel hybrid algorithms derived from PTML’s empty cells, securing intellectual property that may become industry standards in unsupervised NLP or causal inference.

Technical Implementation Guide: From Theory to Production

The PTML framework is expressed as a generalized objective function:

E(θ) = Σ_i w_i f_i(x, θ) + λ R(θ)

where f_i represents a family of learning functions (e.g., kernel similarity, decision stump loss), w_i are learnable weights, and R(θ) is a regularizer. This abstraction allows practitioners to instantiate any known algorithm by selecting appropriate f_i terms and hyperparameters.
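As a minimal sketch of how the objective E(θ) = Σ_i w_i f_i(x, θ) + λ R(θ) could be evaluated in code, the snippet below treats each f_i as a plain Python function. The function names, the two toy terms, and the choice of a squared L2 norm for R(θ) are illustrative assumptions, not part of the published framework.

```python
def l2_regularizer(theta):
    """R(theta): squared L2 norm (an assumed, common choice of regularizer)."""
    return sum(t * t for t in theta)


def objective(x, theta, terms, weights, lam, regularizer=l2_regularizer):
    """E(theta) = sum_i w_i * f_i(x, theta) + lam * R(theta)."""
    if len(terms) != len(weights):
        raise ValueError("need exactly one weight per term")
    data_part = sum(w * f(x, theta) for w, f in zip(weights, terms))
    return data_part + lam * regularizer(theta)


def squared_error(x, theta):
    """Toy f_i: summed squared distance of the points in x from theta[0]."""
    return sum((xi - theta[0]) ** 2 for xi in x)


def absolute_error(x, theta):
    """Toy f_i: summed absolute distance of the points in x from theta[0]."""
    return sum(abs(xi - theta[0]) for xi in x)


# Hypothetical inputs, chosen only to exercise the function.
value = objective(
    x=[1.0, 2.0, 3.0],
    theta=[2.0],
    terms=[squared_error, absolute_error],
    weights=[0.5, 0.5],
    lam=0.1,
)
print(value)
```

Swapping in different functions for `terms` is the code-level analogue of selecting different f_i terms; the rest of the objective stays unchanged.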

Step 1: Map Existing Models to the PTML Grid

Begin with a quick audit of your current ML stack:

- Clustering : K‑means → f_cluster(x)=‖x−μ_k‖²; Spectral clustering → f_kernel(x)=K(x,·)

- Kernel Methods : SVM with RBF kernel → f_kernel(x, x')=exp(−γ‖x−x'‖²)

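- Decision Trees : Gini impurity → f_split=1−Σ_k p_k², where p_k is the fraction of samples in class k (the entropy criterion would instead be −Σ_k p_k log p_k)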

Place each mapping in the PTML grid. This exercise reveals gaps (empty cells) that signal potential hybridizations.
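The audit above can be sketched as a small lookup from model names to the term each one optimizes. The dictionary `ptml_grid` and the function names are hypothetical. The split criterion uses the standard definition of Gini impurity, 1 − Σ_k p_k².

```python
import math
from collections import Counter


def f_cluster(x, mu):
    """K-means term: squared Euclidean distance from x to centroid mu."""
    return sum((a - b) ** 2 for a, b in zip(x, mu))


def f_kernel(x, x_prime, gamma=1.0):
    """RBF kernel: exp(-gamma * ||x - x'||^2)."""
    return math.exp(-gamma * f_cluster(x, x_prime))


def f_split(labels):
    """Gini impurity of a node: 1 - sum_k p_k^2 (0.0 for an empty node)."""
    n = len(labels)
    if n == 0:
        return 0.0
    counts = Counter(labels)
    return 1.0 - sum((c / n) ** 2 for c in counts.values())


# Hypothetical audit table: which model in the stack maps to which term.
ptml_grid = {
    "k-means": f_cluster,
    "svm-rbf": f_kernel,
    "decision-tree": f_split,
}

print(f_cluster([1.0, 2.0], [0.0, 0.0]))  # 5.0
print(f_kernel([1.0, 2.0], [1.0, 2.0]))   # 1.0
print(f_split(["a", "a", "b", "b"]))      # 0.5
```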

Step 2: Design Hybrid Objectives

The MIT paper reports an 8% accuracy lift in unsupervised image classification on ImageNet, obtained by combining ideas from contrastive learning and clustering. Replicating this approach involves:

- Contrastive Loss : f_contrast = −log( exp(sim(z_i, z_j)/τ) / Σ_k exp(sim(z_i, z_k)/τ) )

- K‑means Clustering Term : f_cluster = Σ_i ‖z_i−μ_{c(i)}‖²

- Combined Objective : E(θ) = w_c f_contrast + w_k f_cluster + λ R(θ)

Use the PTML grid to experiment with weight configurations (w_c, w_k) and regularization schedules. The shared equation eliminates the need for separate derivations.
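A rough, dependency-free sketch of the combined objective follows. It makes several assumptions that the text does not specify: cosine similarity for sim, a denominator that sums over all k ≠ i, a fixed cluster assignment c(i), and a squared L2 norm for R(θ). The embeddings and parameters are toy values.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def f_contrast(z, i, j, tau=0.5):
    """-log(exp(sim(z_i, z_j)/tau) / sum_{k != i} exp(sim(z_i, z_k)/tau))."""
    logits = {k: cosine(z[i], z[k]) / tau for k in range(len(z)) if k != i}
    log_denominator = math.log(sum(math.exp(v) for v in logits.values()))
    return log_denominator - logits[j]


def f_cluster(z, centroids, assignment):
    """sum_i ||z_i - mu_{c(i)}||^2 for a fixed assignment c(i)."""
    return sum(
        sum((a - b) ** 2 for a, b in zip(z[i], centroids[assignment[i]]))
        for i in range(len(z))
    )


def combined_objective(z, positives, centroids, assignment, theta,
                       w_c=1.0, w_k=0.1, lam=0.01, tau=0.5):
    """E = w_c * f_contrast + w_k * f_cluster + lam * R(theta)."""
    # Average the contrastive term over the given positive pairs (i, j).
    contrast = sum(f_contrast(z, i, j, tau) for i, j in positives) / len(positives)
    cluster = f_cluster(z, centroids, assignment)
    reg = sum(t * t for t in theta)  # assumed R(theta): squared L2 norm
    return w_c * contrast + w_k * cluster + lam * reg


# Toy embeddings: points 0 and 1 are one positive pair, 2 and 3 another.
z = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
positives = [(0, 1), (2, 3)]
centroids = [[0.95, 0.05], [0.05, 0.95]]
assignment = [0, 0, 1, 1]
theta = [0.5, -0.5]  # placeholder model parameters

value = combined_objective(z, positives, centroids, assignment, theta)
print(value)
```

Varying `w_c` and `w_k` in this sketch shifts the balance between pulling positive pairs together and tightening clusters around their centroids.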

Step 3: Automate Hyperparameter Search

Because each algorithmic block shares a common mathematical form, you can build a unified hyperparameter optimization pipeline:

- Parameter Space : {w_i ∈ [0,1], λ ∈ ℝ⁺, kernel parameters (γ, σ), similarity temperature τ}

- Search Strategy : Bayesian Optimization or Hyperband across the PTML grid; treat empty cells as “latent” hyperparameters to be discovered.

- Evaluation Metric : Task‑specific loss plus interpretability score (e.g., SHAP value sparsity).

This approach yields a single, reusable search engine that can be deployed across vision, NLP, and graph domains.
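To make the shape of such a pipeline concrete, here is a small sketch that uses plain random search as a simple stand-in for Bayesian Optimization or Hyperband. The sampling ranges, the `toy_evaluate` function, and all names are assumptions for illustration. A real evaluation would train a model and return task loss plus an interpretability penalty.

```python
import math
import random


def sample_config(rng):
    """Draw one configuration from an assumed version of the parameter space."""
    return {
        "w_c": rng.uniform(0.0, 1.0),
        "w_k": rng.uniform(0.0, 1.0),
        "lam": 10 ** rng.uniform(-4, 0),    # log-uniform over [1e-4, 1]
        "gamma": 10 ** rng.uniform(-3, 1),  # log-uniform over [1e-3, 10]
        "tau": rng.uniform(0.05, 1.0),
    }


def random_search(evaluate, n_trials=50, seed=0):
    """Return the best (config, score) found; lower scores are better."""
    rng = random.Random(seed)
    best_config, best_score = None, math.inf
    for _ in range(n_trials):
        config = sample_config(rng)
        score = evaluate(config)
        if score < best_score:
            best_config, best_score = config, score
    return best_config, best_score


def toy_evaluate(config):
    """Placeholder metric standing in for task loss plus a penalty term."""
    return (config["w_c"] - 0.7) ** 2 + (config["tau"] - 0.2) ** 2 + config["lam"]


best, score = random_search(toy_evaluate, n_trials=200)
print(best, score)
```

Because every block exposes the same kinds of knobs (weights, λ, kernel and temperature parameters), the same `random_search` loop can be reused across tasks by changing only the `evaluate` callback.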

Step 4: Integrate with Foundation Models

Large‑scale foundation models such as GPT‑4o mini or Gemini 1.5 excel at representation learning but can benefit from classical modules for efficiency:

- Retrieval‑Augmented Generation : Use PTML similarity metrics to rank candidate passages before feeding them to the language model.

- Fine‑Tuning with Structured Losses : Combine a contrastive loss (from PTML) with the transformer’s cross‑entropy objective to enforce domain constraints without full retraining.

- Token Budget Optimization : Where the model architecture is under your control, replace expensive attention layers with kernel approximations from the PTML grid, reducing per-token compute by up to an estimated 25% while maintaining accuracy.
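The retrieval step in the first bullet can be sketched as a similarity ranking over passage embeddings. This example assumes cosine similarity as the similarity metric and uses made-up two-dimensional embeddings. In practice the vectors would come from an embedding model, and `rank_passages` is a hypothetical helper name.

```python
import math


def cosine_similarity(u, v):
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def rank_passages(query_vec, passages, top_k=2):
    """passages: list of (text, embedding). Return the top_k texts by similarity."""
    scored = sorted(
        passages,
        key=lambda p: cosine_similarity(query_vec, p[1]),
        reverse=True,
    )
    return [text for text, _ in scored[:top_k]]


# Toy query and passage embeddings (illustrative values only).
query = [1.0, 0.0]
passages = [
    ("passage A", [0.9, 0.1]),
    ("passage B", [0.0, 1.0]),
    ("passage C", [0.7, 0.7]),
]

context = rank_passages(query, passages, top_k=2)
print(context)  # ['passage A', 'passage C']
```

Only the passages in `context` would then be placed in the language model's prompt, which keeps the prompt short.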

Market Analysis: Who Will Win in the PTML Era?

The 2025 AI landscape is fragmented between large cloud providers offering generic model training services and niche vendors delivering specialized algorithms. PTML introduces a new axis—algorithm synthesis—that reshapes competitive dynamics:

- Cloud Giants (AWS, Azure, GCP) : Can embed PTML into their AutoML platforms, offering customers rapid hybrid model creation without code.

- Specialist AI Startups : Stand to gain by filling the empty cells—developing novel algorithms that address unmet needs in unsupervised NLP or causal inference.

- Enterprise R&D Labs : May adopt PTML internally to streamline model development, reducing reliance on external consulting firms.

- Regulated Industries (Finance, Healthcare) : Benefit from the interpretability guarantees inherent in the PTML taxonomy, potentially lowering compliance costs.

Market penetration will likely follow a tiered adoption curve: early adopters (large enterprises) experiment with hybrid models; mid‑market firms integrate PTML into their AutoML pipelines; small startups leverage the open‑source repository to contribute new cells and monetize algorithm packs.

ROI and Cost Analysis for Enterprise Adoption

To quantify the financial impact, consider a typical model development cycle for an image‑classification product:

- Baseline Engineering Hours : 200 hours (data prep + hyperparameter tuning)

- PTML-Enabled Hours : 120 hours (40% reduction)

- Annual Staffing Cost per Engineer : $150,000

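- Cost Savings : (200 – 120) × $150,000 / (200 × 12) ≈ $5,000 per engineer per development cycle, taking 200 × 12 = 2,400 as annual working hours; at one such cycle per month, that is roughly $60,000 per engineer per year.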
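The savings arithmetic can be checked with a few lines of code. The sketch assumes that 200 × 12 denotes annual working hours (200 per month) and that an engineer completes one development cycle per month. Both are interpretations, not figures stated in the source.

```python
baseline_hours = 200          # hours per development cycle without PTML
ptml_hours = 120              # hours per development cycle with PTML
annual_cost = 150_000         # staffing cost per engineer, in dollars
annual_hours = 200 * 12       # assumption: 200 working hours per month
cycles_per_year = 12          # assumption: one development cycle per month

hours_saved = baseline_hours - ptml_hours          # 80
reduction = hours_saved / baseline_hours           # 0.4, i.e. 40%
hourly_rate = annual_cost / annual_hours           # 62.5 dollars per hour
savings_per_cycle = hours_saved * hourly_rate      # 5000.0
annual_savings = savings_per_cycle * cycles_per_year  # 60000.0

print(f"reduction: {reduction:.0%}")
print(f"savings per cycle: ${savings_per_cycle:,.0f}")
print(f"annual savings: ${annual_savings:,.0f}")
```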

Additional benefits include:

- Model Performance Upswing : An accuracy improvement on the order of 8%, if it carries over to your task, can translate into higher conversion rates or reduced error costs in downstream applications (e.g., fraud detection).

- Regulatory Compliance Savings : Simplified audit trails can cut compliance overhead by an estimated $50,000 annually for a mid‑size financial institution.

- New Revenue Streams : Algorithm packs could generate subscription revenue; a conservative estimate of 10 enterprise customers at $5,000/month equals $600,000 annual recurring revenue.

Implementation Roadmap for Decision Makers

- Assess Current ML Stack : Map existing algorithms to the PTML grid and identify gaps that align with business objectives.

- Pilot Hybrid Models : Select a high‑impact use case (e.g., fraud detection) and experiment with PTML-driven hybrids using the open‑source repository.

- Build Internal Expertise : Upskill data scientists on the PTML framework through targeted workshops; integrate into onboarding curricula.

- Integrate with Cloud Services : Leverage AutoML offerings that support PTML or develop custom pipelines using the shared equation engine.

- Establish Governance and Compliance Protocols : Document algorithmic relationships to satisfy audit requirements; use PTML’s explicit taxonomy for explainability reports.

- Scale and Monetize : Package successful hybrid algorithms into “algorithm packs” for internal or external deployment; explore marketplace opportunities on cloud platforms.

Future Outlook: Where Does PTML Lead Us?

The 2025 PTML is a foundational shift that will influence AI research and industry practice for years to come. Key trajectories include:

- Automated Algorithm Discovery : Machine learning systems could autonomously explore empty cells, generating novel algorithms without human intervention.

- Cross‑Domain Portability : The same PTML grid can map vision, NLP, and graph problems, fostering unified tooling across data modalities.

- Regulatory Standardization : PTML’s explicit relationship mapping may become a de facto standard for AI explainability frameworks adopted by regulators worldwide.

- Hybrid Foundation Model Ecosystems : Large models will increasingly be coupled with classical modules from the PTML grid to balance performance, cost, and interpretability.

- Open‑Source Community Growth : As more practitioners contribute new cells, the PTML repository could evolve into a living ontology comparable to model zoos but focused on algorithmic structure.

Actionable Takeaways for Executives and Technical Leaders

1. Map Your Algorithms Now : Use the PTML grid to visualize your current ML assets; identify quick wins in hybridization that can boost performance or reduce costs.

2. Invest in Hybrid Experimentation Platforms : Allocate budget for a unified hyperparameter engine that supports PTML objectives; this will pay off by shortening model cycles and unlocking new use cases.

3. Prioritize Regulatory Readiness : Embed PTML’s relationship taxonomy into your compliance framework to streamline audits and reduce legal exposure.

4. Explore Algorithm-as-a-Service Models : Consider developing or acquiring algorithm packs that leverage PTML’s modularity; this can open recurring revenue streams and differentiate your product portfolio.

5. Champion Continuous Learning : Encourage data science teams to contribute back to the PTML repository; filling empty cells not only advances research but also strengthens your organization’s IP position.

In 2025, the Periodic Table of Machine Learning is more than a metaphor; it is a practical toolkit that reshapes how enterprises build, deploy, and govern AI. By embracing this framework today, leaders can unlock faster innovation, lower costs, and a competitive edge in an increasingly complex AI ecosystem.

Related Articles

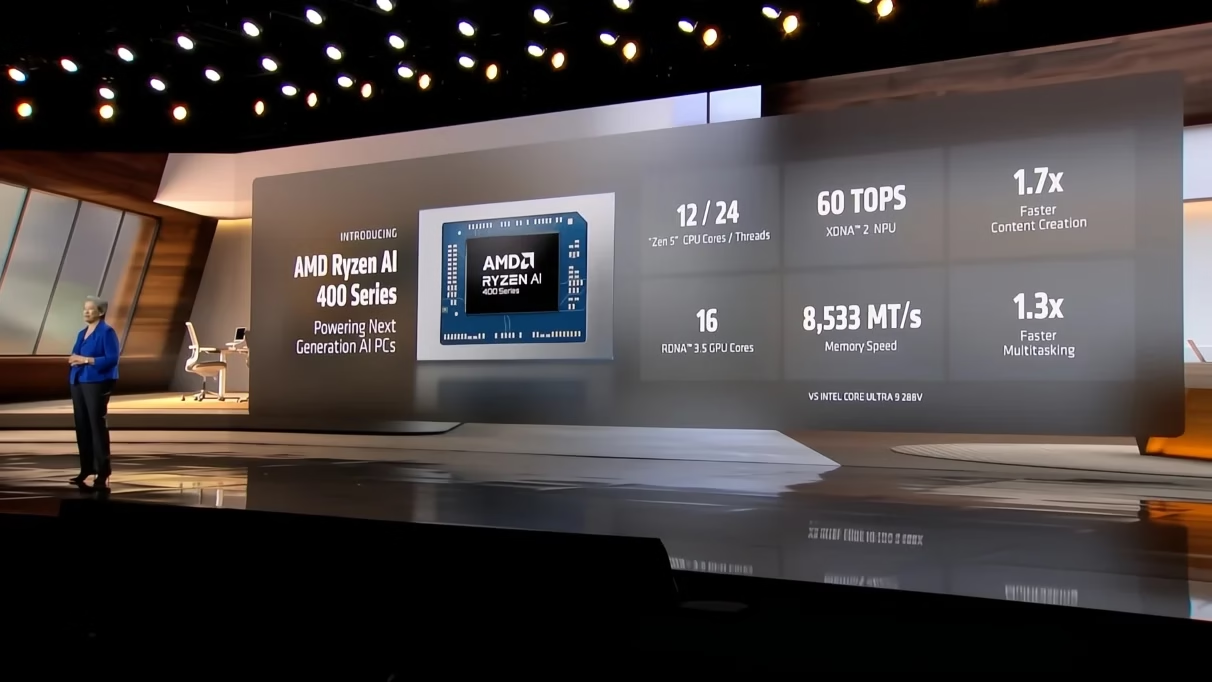

AMD CEO Lisa Su says 'AI is not replacing people', but hints at a quiet shift reshaping who gets hired

AMD’s new AI ecosystem—Helios racks and Ryzen AI 400—offers enterprises unified procurement, lower TCO, and faster time‑to‑market in 2026. Discover the technical roadmap, ROI, and strategic actions fo

Andhra’s kidney disease hotspot becomes the birthplace of an AI model that spots the disease early

Explore how Andhra Pradesh’s chronic kidney disease hotspot is driving a new early‑detection AI model in 2025. Learn about data strategy, LLM fine‑tuning, regulatory pathways, and commercial opportuni

Nvidia deepens India footprint with $2 billion deep tech alliance to mentor AI startups

NVIDIA’s $2 B Deep‑Tech Alliance: A Game Changer for India’s AI Ecosystem and Your Funding Strategy In November 2025 NVIDIA announced a landmark commitment— $2 billion over three years to mentor,...