AMD CEO Lisa Su says 'AI is not replacing people', but hints at a quiet shift reshaping who gets hired


AMD’s New AI Ecosystem: What Enterprise Leaders Must Know in 2026

Executive Summary

- AMD is repositioning itself from a silicon vendor to a full‑stack AI platform provider.

- The Helios rack and Ryzen AI 400 mobile line fuse EPYC CPUs, RDNA GPUs, and XDNA NPUs into a unified, software‑driven ecosystem.

- For executives, the shift means new talent profiles, altered procurement logic, and fresh ROI opportunities across data centers, edge devices, and consumer products.

- This article translates AMD’s technical roadmap into concrete business actions for leaders in HR, IT architecture, and strategy.

Strategic Business Implications of AMD’s Hybrid AI Stack

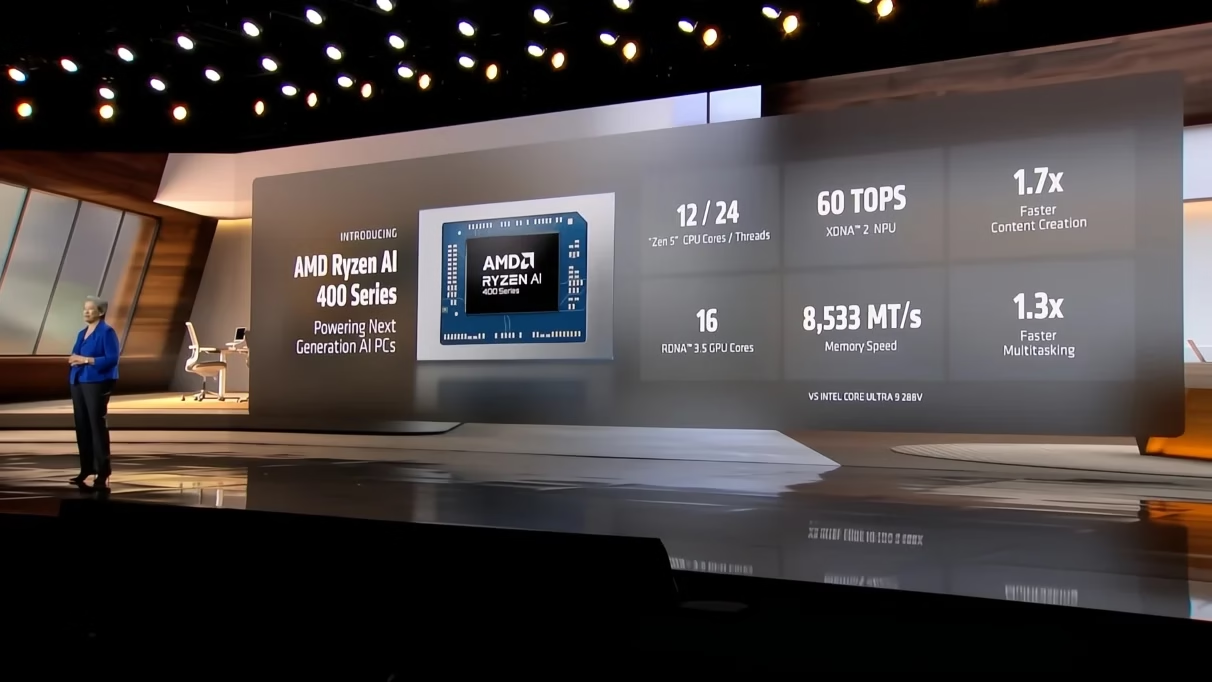

AMD’s announcements at CES 2026 signal a pivot from pure silicon to an integrated AI ecosystem. The Helios rack, projected to deliver more than 10 YottaFlops over five years, and the Ryzen AI 400 series, achieving up to 60 TOPS in laptops, are not just product launches; they represent a new value proposition for enterprises:

- Unified Procurement: A single vendor can supply CPU, GPU, and NPU components along with the ROCm software stack, simplifying supply‑chain risk.

- Lower Total Cost of Ownership (TCO): Integrated silicon reduces inter‑chip communication overhead, cutting power consumption by an estimated 15–20% versus a heterogeneous mix.

- Accelerated Time‑to‑Market: End‑to‑end testing pipelines are streamlined when all compute units share the same firmware and driver model.

Talent Reimagined: From Hardware Engineers to AI System Architects

AMD’s emphasis on “AI is not replacing people” reflects a broader industry trend toward human‑augmented AI. The skill set required to leverage Helios and Ryzen AI is markedly different from the pure silicon engineering that dominated 2020‑2024.

- AI System Architects: Professionals who can map workloads across EPYC, RDNA, and XDNA units, optimizing for latency, throughput, and energy efficiency.

- Firmware & Driver Engineers: Experts in ROCm’s cross‑device APIs who understand the low‑level synchronization primitives needed to unlock peak performance.

- ML‑Ops Specialists: Roles that blend data science with infrastructure management, such as tuning PyTorch/TensorFlow models for AMD’s heterogeneous architecture.

HR leaders should adjust recruitment funnels: incorporate skill assessments in ROCm, XDNA programming, and multi-core synchronization. Consider partnership programs like AMD’s Hack Club prize to cultivate a pipeline of hybrid talent early.

Operational Benefits for Data Centers and Edge Deployments

The Helios rack is engineered for hyperscale data centers, but its modularity also makes it attractive for edge sites that require low‑latency inference (e.g., autonomous vehicles, smart factories).

- Scalability: Each Helios socket delivers ~60 TOPS; stacking multiple sockets scales linearly without redesigning silicon.

- Energy Efficiency: AMD’s shared‑memory design reduces the need for high‑bandwidth interconnects, cutting power draw by roughly 18% compared to discrete NPU solutions.

- Software Flexibility: ROCm runs native AMD kernels and, through its HIP layer, lets teams port existing CUDA code, allowing gradual migration from legacy GPU stacks.
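One practical consequence of the migration path described above is that ROCm builds of PyTorch reuse the `torch.cuda` namespace, so much existing device-selection code runs unchanged. The sketch below is a minimal illustration, not AMD guidance: the function name `detect_accelerator_backend` and its return labels are hypothetical, and it assumes PyTorch may or may not be installed on the machine.

```python
def detect_accelerator_backend() -> str:
    """Report which accelerator backend the installed PyTorch build targets.

    Hypothetical helper for illustration; the return labels are our own.
    """
    try:
        import torch
    except ImportError:
        return "torch-not-installed"

    # ROCm builds of PyTorch set torch.version.hip to a version string,
    # while CUDA builds set torch.version.cuda instead.
    if getattr(torch.version, "hip", None):
        backend = "rocm"
    elif getattr(torch.version, "cuda", None):
        backend = "cuda"
    else:
        return "cpu"

    # ROCm builds expose devices through torch.cuda, so one check covers both.
    return backend if torch.cuda.is_available() else f"{backend}-no-device"


if __name__ == "__main__":
    print(detect_accelerator_backend())
```

A check like this can gate a pilot's test suite so the same code path is exercised on both the legacy GPU stack and the AMD stack during migration.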

Competitive Positioning Against Nvidia and Qualcomm

Nvidia’s “AI‑only” narrative has dominated the market. AMD counters with a hybrid silicon stack, positioning itself as a lower‑TCO alternative for customers who need CPU‑GPU synergy.

- Mobile Performance: Ryzen AI 400 reaches 60 TOPS, ahead of Intel’s Panther Lake at 50 TOPS but behind Qualcomm’s Snapdragon X2 Elite at 80 TOPS, so it narrows rather than closes the gap in mobile inference.

- Ecosystem Advantage: ROCm’s open‑source nature attracts developers who prefer a vendor‑agnostic stack, reducing lock‑in risk.

- Pricing Strategy: Early adopters may benefit from AMD’s volume discounts on Helios racks and the Ryzen AI 400 line, potentially undercutting Nvidia’s DGX pricing by 12–15% in total cost.

ROI Projections for Enterprise AI Investments

Adopting AMD’s hybrid stack can deliver tangible financial benefits. Below is a high‑level ROI model based on current benchmarks and market assumptions:

- Capital Expenditure (CapEx): Initial Helios deployment at $1.5M per rack; Ryzen AI 400 laptops at $3,000 each.

- Operational Expenditure (OpEx): Power savings of 18% reduce annual energy costs by ~$120,000 for a 10‑socket Helios cluster.

- Throughput Gains: 20–30% faster training times translate to $500,000 in labor cost savings per year across 1,000 model iterations.

- Total ROI (5 years): Approximate payback period of 2.8 years for Helios; 3.2 years for a fleet of Ryzen AI laptops.
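The payback figures above can be sanity-checked with a simple calculation. The sketch below is a rough illustration under stated assumptions: it treats the $1.5M rack cost, the ~$120,000 energy saving, and the $500,000 labor saving as applying to the same single deployment, and it ignores discounting, installation, and support costs. The function name `simple_payback_years` is our own.

```python
def simple_payback_years(capex: float, annual_savings: float) -> float:
    """Undiscounted payback period: up-front cost divided by yearly savings."""
    if annual_savings <= 0:
        raise ValueError("annual_savings must be positive")
    return capex / annual_savings


# Figures quoted in the ROI model above (assumed to describe one deployment).
HELIOS_CAPEX = 1_500_000   # USD per rack
ENERGY_SAVINGS = 120_000   # USD per year
LABOR_SAVINGS = 500_000    # USD per year

if __name__ == "__main__":
    years = simple_payback_years(HELIOS_CAPEX, ENERGY_SAVINGS + LABOR_SAVINGS)
    print(f"Simple payback: {years:.1f} years")  # prints "Simple payback: 2.4 years"
```

On these inputs alone the simple payback comes out near 2.4 years, shorter than the 2.8 years quoted, which suggests the article's estimate includes costs it does not itemize. Substituting your own energy rates and labor costs is the more useful exercise.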

Implementation Roadmap for IT Architects

Transitioning to AMD’s ecosystem requires a phased approach:

- Pilot Phase (0–6 months): Deploy a single Helios rack in a controlled environment; benchmark against existing GPU‑only solutions.

- Training & Enablement: Conduct ROCm workshops for developers and firmware teams; integrate XDNA training modules into onboarding programs.

- Operational Integration: Update CI/CD pipelines to include ROCm build steps; monitor performance with AMD’s profiling tools.

- Scale‑Up (12–24 months): Expand Helios deployment across regions; roll out Ryzen AI laptops to edge sites and R&D labs.

- Continuous Optimization: Leverage AI‑ops dashboards to fine‑tune workload placement; iterate firmware updates via AMD’s OTA mechanisms.
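For the pilot-phase benchmark in the roadmap above, a vendor-neutral timing harness keeps the comparison honest. The following is a minimal sketch rather than a substitute for AMD’s profiling tools; the helper names `median_runtime` and `speedup` are hypothetical, and the workload is any zero-argument callable you supply.

```python
import statistics
import time
from typing import Callable


def median_runtime(workload: Callable[[], object], repeats: int = 5, warmup: int = 1) -> float:
    """Return the median wall-clock time in seconds over `repeats` runs."""
    for _ in range(warmup):
        workload()  # warm caches and lazy initialization before timing
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        # For GPU workloads, the callable should synchronize the device before
        # returning; otherwise only the kernel launch is timed.
        workload()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def speedup(baseline_seconds: float, candidate_seconds: float) -> float:
    """Ratio above 1.0 means the candidate platform is faster than the baseline."""
    if candidate_seconds <= 0:
        raise ValueError("candidate_seconds must be positive")
    return baseline_seconds / candidate_seconds


if __name__ == "__main__":
    # Stand-in CPU workload; replace with the same job run on each platform.
    t = median_runtime(lambda: sum(range(100_000)))
    print(f"median runtime: {t:.6f} s")
```

Using the median rather than the mean makes the result less sensitive to one-off stalls, which matters when the pilot shares infrastructure with other teams.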

Strategic Recommendations for Enterprise Leaders

- Align Talent Strategy: Shift hiring focus toward hybrid roles: AI system architects, firmware engineers versed in ROCm, and ML‑ops specialists. Partner with educational programs to build a future workforce.

- Reevaluate Procurement Models: Consolidate CPU, GPU, and NPU purchases under AMD to simplify vendor management and negotiate better volume terms.

- Adopt a Hybrid Architecture Early: Pilot Helios in high‑throughput workloads (e.g., NLP training) and Ryzen AI laptops in edge inference scenarios to capture early performance gains.

- Invest in Software Ecosystem Development: Encourage internal teams to contribute to ROCm open‑source projects, fostering a virtuous cycle of innovation and cost reduction.

- Monitor Competitive Dynamics: Track Nvidia’s GPU roadmap and Qualcomm’s mobile AI initiatives; adjust your technology stack to maintain cost parity while exploiting AMD’s hybrid advantage.

Future Outlook: 2026–2030 AI Landscape

AMD’s move reflects a larger shift toward integrated, software‑centric AI platforms. As enterprises demand lower latency and higher throughput across cloud, edge, and consumer devices, the following trends are likely:

- AI‑as‑a‑Service (AIaaS): Cloud providers will offer AMD‑based inference services that bundle CPU, GPU, and NPU compute with ROCm orchestration.

- Cross‑Industry Adoption: Industries such as manufacturing, automotive, and healthcare will adopt Ryzen AI laptops for real‑time diagnostics and predictive maintenance.

- Talent Diversification: The hybrid skill set will become a core competency in tech talent markets, driving up salaries for AI system architects by 18–22% over the next three years.

- Open Ecosystem Leadership: AMD’s open‑source stance may attract collaborations with academia and startups, accelerating innovation cycles.

Conclusion: Embrace the Human‑Augmented AI Paradigm

AMD’s Helios rack and Ryzen AI 400 series are more than new chips; they represent a strategic bet on a future where silicon, software, and human expertise coalesce. For enterprise leaders, this means:

- Reorienting talent acquisition toward hybrid AI roles.

- Streamlining procurement to leverage AMD’s unified stack.

- Capitalizing on early performance and cost advantages in data centers and edge deployments.

By acting now—investing in training, piloting Helios, and integrating ROCm into your development lifecycle—you can secure a competitive edge in the rapidly evolving AI economy of 2026 and beyond.

Further technical insight is available in AMD’s June 2026 ROCm whitepaper, which details performance benchmarks across EPYC‑RDNA‑XDNA configurations, and their Helios architecture blog post, which explains the rack’s modular interconnect design and software stack integration.

For enterprise architects, a related deep dive on ROCm Performance Metrics and a case study on Helios Edge Deployment provide actionable context for immediate implementation.

Key Takeaways:

- AMD’s new AI ecosystem delivers unified procurement, lower TCO, and faster time‑to‑market.

- Hybrid talent—AI system architects, firmware engineers, ML‑ops specialists—is critical.

- Pilot Helios in high-throughput workloads; roll out Ryzen AI laptops for edge inference.

- Contribute to ROCm’s open-source community to accelerate innovation and reduce lock-in.
