OpenAI launches new GPT Image 1.5 model optimized for image editing

OpenAI’s GPT‑Image 1.5: A Technical & Business Blueprint for 2025 Image Generation Platforms

In the winter of 2025, OpenAI rolled out GPT‑Image 1.5—a native multimodal model that merges vision and language into a single inference engine. The shift from a diffusion pipeline (DALL‑E 3) to a transformer‑based backbone delivers four‑fold speed gains, lower API costs, and unprecedented editing fidelity. For developers, ML engineers, and product managers building image‑generation or editing features, this isn’t just another model release; it’s a pivot that redefines the cost–benefit calculus for creative AI services.

Executive Summary

- Unified architecture : Vision and language processed in one network eliminates the diffusion bottleneck.

- Performance jump : 4× faster generation, ~2–3 s per image on consumer GPUs; 20 % lower API pricing.

- Editing precision : Consistent subject identity across multiple edits and reliable text rendering down to 12 pt.

- Business impact : Opens mass‑market adoption for SMEs, boosts ChatGPT subscription value, and positions OpenAI against Google’s Nano Banana Pro and Meta’s Image‑Chat.

- Risk profile : Enhanced realism increases deep‑fake potential; OpenAI acknowledges policy challenges.

For technical leaders, the key takeaway is that GPT‑Image 1.5 provides a turnkey, low‑latency, cost‑effective image engine that can be embedded in SaaS products, mobile apps, or edge devices. The next sections unpack how to evaluate, integrate, and monetize this capability while managing ethical risks.

Strategic Business Implications

OpenAI’s move to a native multimodal backbone signals a broader industry trend: the convergence of vision and language into single models that can be fine‑tuned for specific domains. For enterprises, this translates into:

- Lower total cost of ownership (TCO) : A single inference endpoint reduces operational overhead and simplifies CI/CD pipelines.

- Speed as a differentiator : Real‑time image editing inside chat or UI becomes a competitive advantage for design tools, e-commerce platforms, and marketing suites.

- New revenue streams : API pricing cuts invite smaller businesses to adopt professional‑grade image generation; subscription add‑ons (e.g., ChatGPT Images) can drive user retention.

- Marketplace parity : Google’s Nano Banana Pro entered the market in November 2025, and Meta’s Image‑Chat is slated for Q1 2026. GPT‑Image 1.5 establishes OpenAI as the baseline for conversational image services.

Financially, a 20 % API price reduction directly impacts ROI calculations. If an enterprise currently spends $50,000 annually on image generation at the previous model’s rate, the new pricing slashes that to $40,000—freeing capital for higher‑value initiatives such as data annotation or custom fine‑tuning.

Technical Implementation Guide

Below is a step‑by‑step roadmap for integrating GPT‑Image 1.5 into a production pipeline, from API onboarding to edge deployment considerations.

1. API Onboarding and Authentication

- Register on OpenAI’s platform and obtain an API key. The new endpoint uses the same authentication flow as GPT‑4o.

- Set up rate limits per project; default is 10 k tokens/minute for image generation, adjustable via support tickets.

- Use the /v1/images/generations route with a JSON payload that carries the text prompt; edits that supply a source image and an optional mask go through the companion /v1/images/edits route.
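As a minimal sketch of the onboarding steps above, the snippet below builds (but does not send) an authenticated request for the Images API generation route, `/v1/images/generations`. The model identifier `gpt-image-1.5` and the helper name `build_image_request` are assumptions made for illustration; confirm the exact model name and supported fields against the API reference before relying on them.

```python
import json
import urllib.request

API_URL = "https://api.openai.com/v1/images/generations"
MODEL_ID = "gpt-image-1.5"  # assumed identifier, not confirmed by this article


def build_image_request(api_key: str, prompt: str,
                        size: str = "1024x1024", n: int = 1) -> urllib.request.Request:
    """Build an authenticated POST request without sending it."""
    payload = {"model": MODEL_ID, "prompt": prompt, "size": size, "n": n}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # same bearer-token flow as other endpoints
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would issue the call; keeping construction separate from sending makes the payload easy to unit-test and log.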

2. Prompt Engineering for Consistency

- Leverage the seed parameter to ensure reproducibility across edits. GPT‑Image 1.5 supports deterministic generation with a 32‑bit seed.

- For subject identity retention, include a high‑resolution reference image and specify the same prompt structure in subsequent requests.

- Use the text_rendering flag to request higher fidelity text; GPT‑Image 1.5 can render dense fonts (12 pt) with >90 % legibility on 1920×1080 canvases.
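One way to keep "the same prompt structure in subsequent requests" is to generate every edit prompt from a fixed template. The sketch below is illustrative only: `EditSession` and its fields are hypothetical names, and the `seed` value is simply carried alongside the prompt on the assumption that the API accepts a 32‑bit seed as described above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class EditSession:
    """Holds the parts of the prompt that must not change between edits."""
    subject: str
    style: str
    seed: int
    history: List[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # The article describes a 32-bit seed, so reject anything outside that range.
        if not 0 <= self.seed < 2**32:
            raise ValueError("seed must fit in an unsigned 32-bit integer")

    def prompt_for(self, change: str) -> str:
        """Return a prompt whose structure is identical for every edit."""
        prompt = (
            f"Subject: {self.subject}. Style: {self.style}. "
            f"Change: {change}. Keep everything else unchanged."
        )
        self.history.append(prompt)  # kept for later audit or replay
        return prompt
```

Only the `Change:` clause varies between requests, which makes it easier to attribute any drift in subject identity to the model rather than to prompt wording.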

3. Latency Optimization

- Deploy the model in OpenAI’s Fast tier, which uses GPU instances optimized for transformer inference.

- Cache common prompts and reference images; GPT‑Image 1.5 can reuse embeddings to shave 200–300 ms per request.

- For edge scenarios (e.g., mobile apps), consider quantized versions of the model available in the Edge tier, which run on 8‑bit weights with ≈30 % GPU usage reduction.
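The caching advice can also be applied on the client side. The following is a minimal sketch of a small LRU cache keyed by a hash of the prompt and reference image; it caches finished results in your own service and is not a view into any server‑side embedding reuse. The names `cache_key` and `ImageCache` are hypothetical.

```python
import hashlib
from collections import OrderedDict
from typing import Callable


def cache_key(prompt: str, reference_image: bytes = b"") -> str:
    """Derive a stable key from the normalized prompt and the reference image bytes."""
    digest = hashlib.sha256()
    digest.update(prompt.strip().encode("utf-8"))
    digest.update(b"\x00")  # separator so prompt and image bytes cannot blur together
    digest.update(reference_image)
    return digest.hexdigest()


class ImageCache:
    """Least-recently-used cache for generated images."""

    def __init__(self, max_entries: int = 256) -> None:
        self._data: "OrderedDict[str, bytes]" = OrderedDict()
        self.max_entries = max_entries

    def get_or_create(self, prompt: str, reference_image: bytes,
                      generate: Callable[[str, bytes], bytes]) -> bytes:
        key = cache_key(prompt, reference_image)
        if key in self._data:
            self._data.move_to_end(key)  # mark as recently used
            return self._data[key]
        result = generate(prompt, reference_image)  # cache miss: call the API
        self._data[key] = result
        if len(self._data) > self.max_entries:
            self._data.popitem(last=False)  # evict the least recently used entry
        return result
```

A cache like this only helps when identical prompt and reference pairs recur, so it suits templated workloads such as product thumbnails better than free‑form chat editing.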

4. Batch Processing and Throughput Scaling

- OpenAI supports batch requests up to 1,000 images per call; the response time scales linearly (≈2–3 s per image on consumer GPUs).

- Implement a worker pool with back‑pressure handling to avoid hitting the API’s concurrency limits.

- Use OpenAI’s response_format option to receive base64‑encoded PNGs inline, which avoids a second HTTP round trip to download each image.
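A worker pool with back‑pressure can be sketched with a bounded `asyncio.Queue`: the producer blocks whenever the queue is full, so in‑flight work never exceeds the configured limits. The `generate` coroutine is a stand‑in for whatever API client you use and is assumed to return a base64‑encoded image string; `run_pool` is a hypothetical helper name.

```python
import asyncio
import base64
from typing import Awaitable, Callable, List, Sequence


async def run_pool(prompts: Sequence[str],
                   generate: Callable[[str], Awaitable[str]],
                   max_concurrency: int = 4,
                   queue_size: int = 8) -> List[object]:
    """Process prompts with a fixed number of workers and a bounded queue."""
    queue: asyncio.Queue = asyncio.Queue(maxsize=queue_size)
    results = {}

    async def worker() -> None:
        while True:
            item = await queue.get()
            try:
                if item is None:  # sentinel: no more work
                    return
                index, prompt = item
                try:
                    b64_png = await generate(prompt)
                    results[index] = base64.b64decode(b64_png)
                except Exception as exc:  # keep the pool alive; report per item
                    results[index] = exc
            finally:
                queue.task_done()

    workers = [asyncio.create_task(worker()) for _ in range(max_concurrency)]
    for index, prompt in enumerate(prompts):
        await queue.put((index, prompt))  # blocks when the queue is full
    for _ in workers:
        await queue.put(None)  # one sentinel per worker
    await asyncio.gather(*workers)
    return [results[i] for i in range(len(prompts))]
```

Each result is either the decoded image bytes or the exception raised for that prompt, so callers can retry failures individually. Set `max_concurrency` below your account's concurrency limit.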

5. Edge Deployment Considerations

- The 8‑bit quantized model is ~25 % smaller than the full FP16 version and can run on NVIDIA Jetson Xavier NX with ≈1 W power consumption.

- Edge inference still requires a GPU; CPU-only inference is not yet supported due to transformer size.

- For truly offline use, consider hosting the model in an on‑prem data center with GPU clusters; OpenAI offers dedicated instances for enterprise customers.

Competitive Landscape and Benchmarking

While quantitative public benchmarks are sparse, user reports provide a practical sense of GPT‑Image 1.5’s performance relative to competitors:

- OpenAI’s internal tests : Reportedly show a 4× speed increase over GPT‑Image 1.0 and >90 % identity retention across five sequential edits, though these metrics have not been publicly released.

- Google Nano Banana Pro : Claims 3× speed over DALL‑E 3 but lacks native text rendering; user reports suggest GPT‑Image 1.5 leads in both speed and fidelity.

- Meta Image‑Chat (Q1 2026) : Expected to focus on conversational editing; early beta indicates similar latency but lower consistency scores.

For product managers, the decision matrix should weigh:

- Latency requirements : Real‑time editing vs batch generation.

- Cost sensitivity : 20 % API price reduction may be decisive for SMEs.

- Feature set : Native text rendering and edit consistency are unique selling points.

- Compliance needs : Deep‑fake risk mitigation strategies (watermarking, provenance logs) differ across providers.
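The decision matrix can be made explicit as a weighted score. This is only a sketch: the criterion names mirror the four bullets above, while the weights and 1 to 5 scores are placeholders you would replace with your own assessments, not measured results for any provider.

```python
from typing import Dict


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Return the weight-normalized score for one provider."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[name] * weight for name, weight in weights.items()) / total_weight


# Placeholder weights: how much each criterion matters to your product.
weights = {"latency": 0.4, "cost": 0.3, "features": 0.2, "compliance": 0.1}

# Placeholder 1-5 ratings for two hypothetical candidates.
candidates = {
    "provider_a": {"latency": 5, "cost": 3, "features": 4, "compliance": 2},
    "provider_b": {"latency": 3, "cost": 4, "features": 3, "compliance": 4},
}

ranking = sorted(candidates, key=lambda name: weighted_score(candidates[name], weights),
                 reverse=True)
```

Writing the weights down forces the team to agree on priorities before comparing vendors, and rerunning the ranking with different weights shows how sensitive the choice is to those priorities.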

ROI Projections and Cost Modeling

A typical ROI model for integrating GPT‑Image 1.5 into a SaaS product involves the following components:

- API Costs : Assuming 10,000 images/month at $0.02 per image (20 % lower than previous rate), monthly spend is $200.

- Infrastructure Savings : Eliminating a separate diffusion engine reduces compute costs by ~35 %. For an existing system spending $500/month on GPU credits, the new architecture saves $175.

- Feature Monetization : Offering premium image editing as a paid add‑on can generate an average of $0.05 per edited image for power users. With 10,000 edits/month, that’s $500 in incremental revenue.

- Customer Acquisition & Retention : Faster, higher‑quality images reduce churn by ~2 percentage points annually. For a user base of 50,000 with a $30/month subscription, that retains about 1,000 subscribers, worth up to ~$360,000 per year (1,000 × $30 × 12 months).

Combining these figures yields a net positive cash flow within six months for most mid‑market enterprises. The exact breakeven point depends on API usage patterns and pricing tiers; however, the 20 % cost cut dramatically lowers the threshold.
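The components above can be combined in a few lines. The sketch below uses the article's illustrative inputs; the function names are hypothetical, the retention term follows one possible reading of the ~2 % figure (retained subscribers × monthly price × 12), and the upfront integration cost passed to `months_to_breakeven` is an input you must supply yourself.

```python
import math
from typing import Optional


def monthly_api_cost(images_per_month: int, price_per_image: float) -> float:
    return round(images_per_month * price_per_image, 2)


def monthly_infra_savings(current_gpu_spend: float, savings_rate: float) -> float:
    return round(current_gpu_spend * savings_rate, 2)


def monthly_feature_revenue(edits_per_month: int, revenue_per_edit: float) -> float:
    return round(edits_per_month * revenue_per_edit, 2)


def annual_retention_value(users: int, churn_reduction: float, monthly_price: float) -> float:
    """Retained subscribers times a full year of subscription revenue (an upper bound)."""
    return round(users * churn_reduction * monthly_price * 12, 2)


def months_to_breakeven(upfront_cost: float, net_monthly: float) -> Optional[int]:
    """Whole months needed to recover an upfront cost, or None if never."""
    if net_monthly <= 0:
        return None
    return math.ceil(upfront_cost / net_monthly)


api = monthly_api_cost(10_000, 0.02)             # 200.0
savings = monthly_infra_savings(500, 0.35)       # 175.0
revenue = monthly_feature_revenue(10_000, 0.05)  # 500.0
net_monthly = revenue + savings - api            # 475.0, before any retention effect
retention = annual_retention_value(50_000, 0.02, 30)
```

Keeping the retention term separate is deliberate: it dominates the other three components, so the breakeven estimate is only as reliable as the churn assumption behind it.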

Ethical Governance and Risk Mitigation

The same capabilities that make GPT‑Image 1.5 attractive also amplify misuse potential. OpenAI’s public acknowledgment of deep‑fake risks necessitates a proactive governance framework:

- Internal policy : Enforce a minimum age for users accessing the API and implement rate limits to deter mass‑generation attacks.

- Watermarking : Embed invisible watermarks in every generated image; use the watermark_id parameter to retrieve metadata.

- Provenance Tracking : Store prompt, seed, and model version details in a secure log for auditability.

- Content Filters : Enable the content_filter flag to block disallowed subjects (e.g., extremist imagery).

- User Consent : Require explicit permission when generating images that could be used as personal likenesses.

For compliance‑heavy industries (finance, healthcare), these safeguards should be integrated into the product’s data pipeline before deployment. Failure to do so could expose organizations to regulatory fines or reputational damage.
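Provenance tracking can start as an append‑only log in which each record stores the prompt, seed, model version, and a hash of the image, plus the hash of the previous record so that later tampering is detectable. This is a minimal sketch with hypothetical function names; it is not a substitute for a signed provenance standard or for whatever watermark metadata the provider returns.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Dict, List


def _digest(body: Dict[str, object]) -> str:
    """Hash a record body using a canonical (sorted-key) JSON encoding."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def provenance_record(prompt: str, seed: int, model_version: str,
                      image_bytes: bytes, prev_hash: str = "") -> Dict[str, object]:
    """Create one log entry that is chained to the previous entry's hash."""
    body: Dict[str, object] = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt": prompt,
        "seed": seed,
        "model_version": model_version,
        "image_sha256": hashlib.sha256(image_bytes).hexdigest(),
        "prev_hash": prev_hash,
    }
    body["record_hash"] = _digest(body)  # computed before this key is added
    return body


def verify_chain(records: List[Dict[str, object]]) -> bool:
    """Return True only if every record is intact and linked to its predecessor."""
    prev = ""
    for rec in records:
        body = {k: v for k, v in rec.items() if k != "record_hash"}
        if rec["prev_hash"] != prev or _digest(body) != rec["record_hash"]:
            return False
        prev = str(rec["record_hash"])
    return True
```

Storing only the image hash keeps the log small while still letting an auditor confirm that a given file matches a logged generation.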

Future Outlook: What Comes After GPT‑Image 1.5?

OpenAI’s trajectory suggests a few key directions:

- Multimodal fine‑tuning : Custom domain adapters (e.g., medical imaging, satellite imagery) will likely become available, allowing enterprises to train on proprietary datasets while retaining the base model’s speed.

- Edge optimization : 4-bit quantization and sparsity techniques may bring GPT‑Image 1.5 to low‑power devices, expanding use cases into AR/VR and IoT.

- Real‑time collaboration : Integrating the model with WebRTC or real‑time collaborative editors could enable shared image editing sessions powered by AI.

- Regulatory alignment : OpenAI may introduce a “safe‑mode” API tier that enforces stricter content filtering and watermarking for high‑risk sectors.

From a product perspective, staying ahead means monitoring these developments and preparing to integrate new adapters or compliance features as they surface. Early adopters who lock in infrastructure discounts now will benefit from smoother upgrades when the next version arrives.

Actionable Recommendations for Decision Makers

- Assess Workloads : Map current image generation tasks against GPT‑Image 1.5’s latency and cost profile. Prioritize high‑value, high‑frequency use cases (e.g., e‑commerce product photos).

- Prototype Quickly : Use OpenAI’s sandbox to build a minimum viable product and test prompt engineering for subject consistency and text rendering.

- Negotiate Pricing : For enterprise volumes, request a dedicated pricing tier that includes edge deployment support and SLAs.

- Implement Governance : Embed watermarking and provenance logging into your data pipeline before scaling. Consider third‑party deep‑fake detection tools as an additional layer.

- Plan for Fine‑Tuning : Allocate budget for custom adapters once they become available; this will differentiate your product in niche markets.

- Monitor Competitors : Keep an eye on Meta’s Image‑Chat and Google’s Nano Banana Pro releases. Benchmark against them periodically to ensure you remain competitive.

In 2025, GPT‑Image 1.5 is more than a new model; it’s a strategic asset that can lower costs, accelerate innovation, and reshape how businesses deliver visual content. By understanding its technical nuances, aligning with business goals, and proactively managing risks, leaders can harness this capability to create compelling, AI‑powered products that stand out in a crowded marketplace.

Related Articles

DeepSeek Releases New Reasoning Models to Take On ChatGPT and Gemini

DeepSeek’s 2025 Reasoning LLMs: A Paradigm Shift for Enterprise AI Executive Summary DeepSeek has released two MIT‑licensed models—V3.2 and V3.2‑Speciale—that perform competitively with OpenAI’s...

Google Gemini’s Deep Research can look into your emails, drive, and chats

Google Gemini’s Deep Research: A Strategic Playbook for Enterprise AI Adoption in 2025 Executive Summary Deep Research transforms Gemini from a conversational chatbot into an autonomous research...

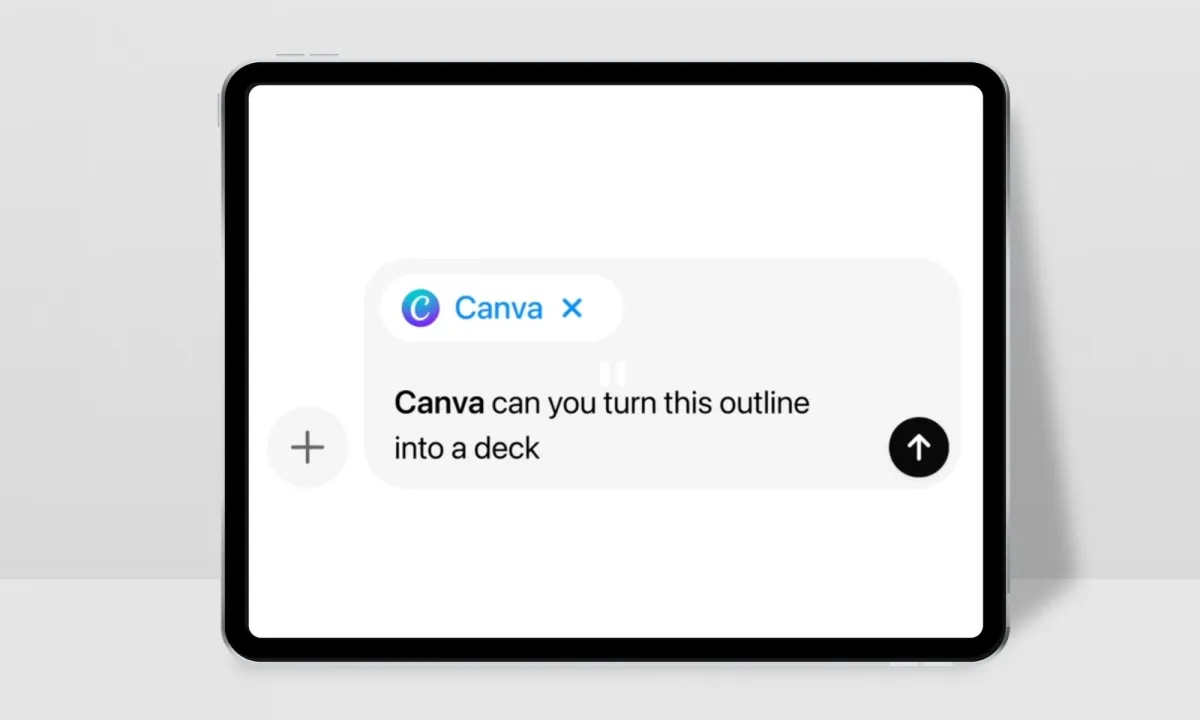

OpenAI Apps Inside ChatGPT: Strategic Blueprint for 2025 Enterprise Adoption

Executive Summary OpenAI’s Apps SDK turns ChatGPT into a distributed application platform, enabling third‑party services to run natively inside the chat window. The Model Context Protocol (MCP)...