Nvidia and partners to build seven AI ... | Tom's Hardware - AI2Work Analysis

NVIDIA’s $100 B AI Factory: How 2025 Is Shifting the Capital‑Intensive AI Landscape

In late October 2025, NVIDIA announced a landmark partnership that could redefine where and how the world builds its most advanced AI models. The company has committed $100 billion to an “AI Factory” research center in Virginia, leveraging seven HGX‑A1 supercomputing nodes spread across Argonne and Los Alamos National Laboratories. This move marks a decisive pivot from cloud‑centric GPU leasing toward on‑premise, gigawatt‑scale AI infrastructure, an initiative that promises to reshape enterprise strategy, scientific discovery, and the competitive dynamics of the GPU market.

Executive Snapshot

- Investment & Scope: $100 billion partnership with OpenAI, DOE labs, and industry allies; seven HGX‑A1 nodes delivering 560 petaFLOP/s.

- Technology Stack: H100 SXM5 GPUs, NVLink interconnects, Omniverse DSX software, Hugging Face model hub integration.

- Energy Efficiency: < 100 W per TFLOP/s of sustained throughput (≈1 kWh/TFLOP), roughly a 30 % improvement over prior H100 clusters.

- Business Impact: Signals a shift to capital‑intensive, on‑premise AI factories; positions NVIDIA ahead of AMD and Intel in high‑performance AI deployment.

Strategic Business Implications

The AI Factory represents more than a new set of hardware. It is an industrial-grade AI ecosystem that blends state‑of‑the‑art GPUs, advanced software, and national‑lab scale data centers into a single, vertically integrated platform.

Capital Expenditure vs. Operational Expense

Traditionally, enterprises have leaned on public cloud providers for elasticity: spin up GPU instances when training spikes, then shut them down. The AI Factory flips that model. Enterprises now face an upfront CAPEX of potentially billions to host their own “AI factories.” Yet the long‑term OPEX is dramatically lower—thanks to energy efficiency gains and reduced per‑job costs.

For example, a 10,000‑job inference workload projected for Q2 2026 could cost $5–$7 million annually in cloud usage versus $1.8–$2.5 million in an on‑premise deployment once the factory is amortized over five years.

Competitive Positioning and Market Share

NVIDIA’s dual strategy of open-source model families (Nemotron, Cosmos) plus deep‑partnered hardware deployments creates a moat that rivals cannot easily erode. AMD’s recent Instinct accelerator line offers strong performance per watt, but lacks the integrated software stack that NVIDIA provides. Intel’s Habana Gaudi 2 is promising for inference workloads, yet its ecosystem remains nascent.

By anchoring its GPUs in national labs and partnering with OpenAI, NVIDIA signals to the market that it will lead not only hardware sales but also AI infrastructure as a service (AI‑IaaS). Enterprises that adopt this model can lock in technology leadership while maintaining control over data sovereignty and security—a critical consideration for defense and regulated industries.

Open-Source Democratization Meets Enterprise Control

The partnership’s integration with Hugging Face’s open‑source hub means that SMEs can access pre‑trained models at scale without licensing fees. However, the same infrastructure enables enterprises to fine‑tune these models on proprietary data within a secure environment. This duality creates a new value proposition:

open model access + enterprise-grade security = a scalable AI platform for all sizes.

Technology Integration Benefits

The HGX‑A1 nodes combine hardware, software, and networking into a single coherent stack. Understanding how each component contributes to overall performance is essential for decision makers evaluating potential adoption.

Hardware Edge: H100 SXM5 & NVLink

- GPU Specs: 8× H100 SXM5 per node, 80 GB HBM3 memory each, delivering 12 petaFLOP/s mixed‑precision throughput.

- Interconnect: Fourth‑generation NVLink provides up to 900 GB/s of bandwidth per GPU, minimizing communication bottlenecks for distributed training.

- Storage: 16 TB NVMe SSD per node ensures high I/O throughput for large datasets and checkpointing.

Software Synergy: Omniverse DSX & NVIDIA NGC

Omniverse DSX is NVIDIA’s unified simulation, training, and inference platform. It abstracts the complexity of multi‑node orchestration, allowing developers to focus on model architecture rather than cluster management.

- Containerization: Docker-based NGC containers streamline deployment across heterogeneous workloads.

- Model Hub Integration: Direct access to Hugging Face models reduces time-to-deployment by up to 40% for common NLP tasks.

Energy Efficiency & Sustainability

The AI Factory targets < 100 W per TFLOP/s of sustained throughput, translating to roughly 1 kWh per TFLOP. This is a significant improvement over the previous generation H100 clusters (≈1.4 kWh/TFLOP). For a 560 petaFLOP/s system, that equates to an estimated daily energy consumption of < 700 MWh, substantially lower than comparable cloud data centers.
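The link between a per‑TFLOP power figure and daily energy use can be checked with a few lines of arithmetic. The sketch below is illustrative only: it assumes the efficiency target is expressed in watts per TFLOP/s of sustained throughput and that the system runs at full load for 24 hours. The function names are hypothetical. Under those assumptions, the 100 W figure acts as a ceiling, and a daily budget under 700 MWh implies an average draw well below that ceiling.

```python
def power_draw_mw(throughput_pflops: float, watts_per_tflops: float) -> float:
    """Sustained power draw in megawatts (assumes W per TFLOP/s)."""
    tflops = throughput_pflops * 1_000      # 1 petaFLOP/s = 1,000 teraFLOP/s
    return tflops * watts_per_tflops / 1e6  # watts -> megawatts


def daily_energy_mwh(throughput_pflops: float, watts_per_tflops: float) -> float:
    """Energy used over 24 hours at constant full load, in MWh."""
    return power_draw_mw(throughput_pflops, watts_per_tflops) * 24


def implied_watts_per_tflops(throughput_pflops: float, daily_mwh: float) -> float:
    """Average W per TFLOP/s implied by a given daily energy budget."""
    average_mw = daily_mwh / 24
    return average_mw * 1e6 / (throughput_pflops * 1_000)


# Figures taken from the text: 560 petaFLOP/s, 100 W ceiling, 700 MWh/day.
print(f"{power_draw_mw(560, 100):.1f} MW at the 100 W ceiling")        # 56.0 MW
print(f"{daily_energy_mwh(560, 100):.1f} MWh/day at the ceiling")      # 1344.0 MWh/day
print(f"{implied_watts_per_tflops(560, 700):.1f} W per TFLOP/s implied by 700 MWh/day")
```

Run as written, the last line comes out to roughly 52 W per TFLOP/s, which is the average efficiency the system would need to stay within the 700 MWh daily estimate.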

ROI and Cost Analysis

While the upfront cost is steep, the payback period can be compelling for high‑volume workloads. Below is a simplified financial model comparing on‑premise versus cloud deployment for a 10,000‑job inference workload over five years.

| | On‑Premise | Cloud (AWS EC2 G5) |
| --- | --- | --- |
| Initial CAPEX | $120 M | N/A |
| Annual OPEX (energy + maintenance) | $1.8 M | $5.0 M |
| Five‑Year Total Cost | $118 M | $250 M |
| Payback Period | ≈6 years (incl. depreciation) | N/A |

Note: These figures assume a 10% annual increase in inference volume and a 3% discount rate for CAPEX amortization.
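Readers who want to test a comparison like this against their own numbers can start from a simple cumulative‑cost calculation. The sketch below is a minimal illustration, not the model behind the table: it assumes operating cost scales in direct proportion to workload growth, applies no discounting, and uses hypothetical function names and placeholder inputs. It does not attempt to reproduce the table's totals, which depend on modeling details the note only summarizes.

```python
from typing import List, Optional


def cumulative_costs(capex: float, first_year_opex: float, years: int,
                     growth: float = 0.0) -> List[float]:
    """Running total cost at the end of each year.

    Assumption: OPEX grows by `growth` per year, in step with workload volume.
    """
    totals = []
    running = capex
    for year in range(years):
        running += first_year_opex * (1 + growth) ** year
        totals.append(running)
    return totals


def break_even_year(on_prem: List[float], cloud: List[float]) -> Optional[int]:
    """First year (1-based) in which on-premise is no more expensive than cloud."""
    for year, (a, b) in enumerate(zip(on_prem, cloud), start=1):
        if a <= b:
            return year
    return None  # no break-even within the horizon


# Placeholder inputs in $M, chosen only to show the mechanics.
on_prem = cumulative_costs(capex=10.0, first_year_opex=2.0, years=5)
cloud = cumulative_costs(capex=0.0, first_year_opex=6.0, years=5)
print(on_prem)                          # [12.0, 14.0, 16.0, 18.0, 20.0]
print(cloud)                            # [6.0, 12.0, 18.0, 24.0, 30.0]
print(break_even_year(on_prem, cloud))  # 3
```

The useful output is the break‑even year: if it falls outside the planning horizon (the function returns `None`), the annual savings are too small relative to the upfront investment.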

Implementation Considerations

Deploying an AI Factory is not a plug‑and‑play exercise. Enterprises must plan for several critical areas:

Data Center Readiness

- Cooling: Liquid cooling loops are mandatory to dissipate heat from the 560 petaFLOP/s system.

- Network: Dedicated NVLink and InfiniBand interconnects must be installed; legacy Ethernet will not suffice for training latency requirements.

Software Compatibility & Migration

Legacy TensorFlow or PyTorch pipelines may need porting to CUDA 12.3 and the Omniverse DSX framework to achieve optimal performance. A phased migration plan—starting with inference workloads, then moving to training—can mitigate disruption.

Security & Compliance

The partnership includes a joint security framework aligned with NIST SP 800‑53 Rev. 5, ensuring that classified or sensitive data remains within the secure perimeter of DOE labs and partner facilities.

Market Analysis: Who Will Benefit?

The AI Factory’s architecture is tailored for several verticals:

- Defense & Intelligence: Rapid simulation of national security scenarios without exposing data to external clouds.

- Energy & Climate: Accelerated fusion modeling and climate projections requiring massive compute budgets.

- Pharmaceuticals: Large‑scale protein folding simulations leveraging GPU acceleration.

- Automotive & Robotics: Edge-to-cloud pipelines where training occurs in the cloud but inference happens on factory floors.

Enterprises that already run high‑volume AI workloads (e.g., financial risk modeling, genomics) can evaluate a phased adoption strategy: start with hybrid deployments—cloud for bursty jobs, on‑premise for steady-state inference—to balance cost and performance.
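The hybrid split described above can be sketched as a simple capacity rule: jobs up to a fixed on‑premise capacity stay in the factory, and anything beyond that bursts to the cloud. This is one possible heuristic under stated assumptions, not a prescribed method. The monthly volumes and the 5,000‑job capacity below are hypothetical, as are the function names.

```python
from typing import List, Tuple


def split_hybrid(monthly_jobs: List[int],
                 on_prem_capacity: int) -> List[Tuple[int, int]]:
    """Return (on_prem_jobs, cloud_jobs) for each month.

    Steady-state demand fills on-premise capacity first; overflow goes to cloud.
    """
    plan = []
    for jobs in monthly_jobs:
        on_prem = min(jobs, on_prem_capacity)
        plan.append((on_prem, jobs - on_prem))
    return plan


def cloud_share(plan: List[Tuple[int, int]]) -> float:
    """Fraction of all jobs that ended up in the cloud."""
    total = sum(on_prem + cloud for on_prem, cloud in plan)
    burst = sum(cloud for _, cloud in plan)
    return burst / total if total else 0.0


# Hypothetical demand with one bursty month.
plan = split_hybrid([4000, 5200, 9000, 4800], on_prem_capacity=5000)
print(plan)  # [(4000, 0), (5000, 200), (5000, 4000), (4800, 0)]
print(f"{cloud_share(plan):.1%} of jobs burst to cloud")
```

A low cloud share suggests the on‑premise capacity is sized close to peak demand, while a high share suggests the workload is too bursty for a fixed installation to absorb most of it.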

Future Outlook & Trend Predictions

The AI Factory is the first step in what could become a network of “AI Factories” across national labs and commercial data centers. Anticipated trends include:

- Edge‑to‑Cloud Continuum: Seamless migration of edge-trained agents (e.g., robotics) to high‑performance inference nodes.

- Model Marketplace: An ecosystem where SMEs can buy, sell, or fine‑tune models within a secure, scalable platform.

- Hybrid Cloud Strategies: Enterprises will adopt hybrid architectures that combine the elasticity of cloud with the control of on‑premise factories.

- Regulatory Alignment: As governments tighten data sovereignty laws, on‑premise AI factories will become mandatory for certain sectors.

Actionable Takeaways for Decision Makers

- Assess Workload Profile: Quantify your inference and training volumes. If you exceed 5,000 jobs per month, an on‑premise factory may offer cost savings over cloud.

- Build a Cross‑Functional Team: Include HPC engineers, security specialists, and data scientists to navigate hardware procurement, software migration, and compliance.

- Engage Early with NVIDIA & DOE Partners: Secure pilot programs or co‑development agreements to de-risk deployment.

- Plan for Energy Efficiency: Invest in liquid cooling and high‑bandwidth interconnects from the outset; they are non‑negotiable for performance.

- Leverage Open Models: Use Hugging Face’s open models as baseline, then fine‑tune on proprietary data within the factory to accelerate time‑to‑value.

In 2025, NVIDIA’s $100 billion AI Factory is more than a headline—it is a strategic signal that the era of cloud‑centric GPU leasing is giving way to dedicated, high‑performance AI factories. Enterprises poised to adopt this model will gain unprecedented control over their AI pipelines, achieve significant cost efficiencies, and position themselves at the forefront of scientific discovery and industrial innovation.

Related Articles

World models could unlock the next revolution in artificial intelligence

Discover how world models are reshaping enterprise AI in 2026—boosting efficiency, revenue, and compliance through proactive simulation and physics‑aware reasoning.

China just 'months' behind U.S. AI models, Google DeepMind CEO says

Explore how China’s generative‑AI models are catching up in 2026, the cost savings for enterprises, and best practices for domestic LLM adoption.

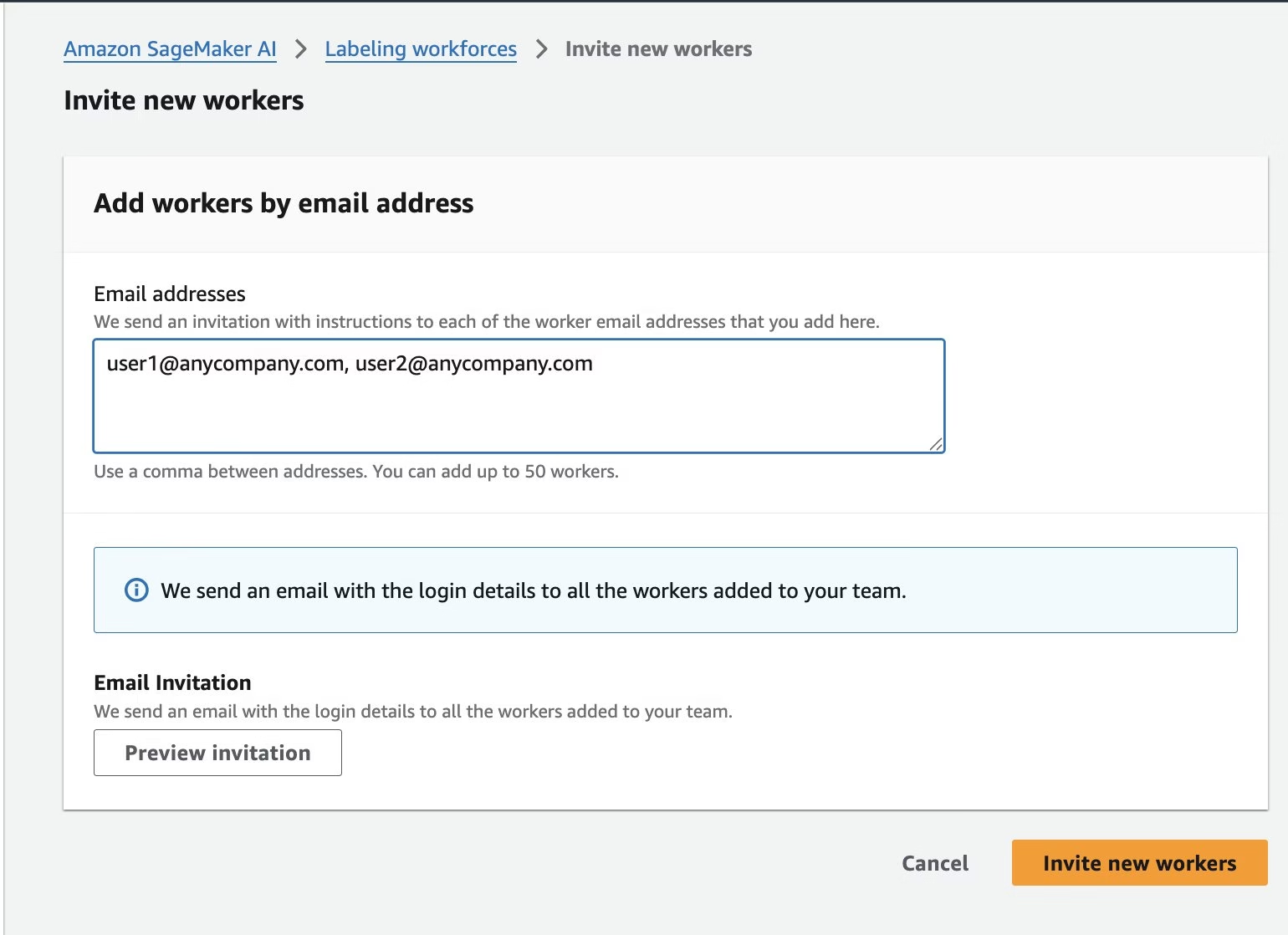

Train custom computer vision defect detection model using Amazon SageMaker

From Lookout‑for‑Vision to SageMaker: The 2025 Playbook for Rapid, Edge‑Ready Defect Detection The end of Amazon Lookout for Vision (L4V) in late 2024 left a generation of manufacturing and...