MIT Says 95% Of Enterprise AI Fail: Here’s What The 5% Are ...


Enterprise AI Success in 2026: Why Only 5 % of Companies Get It Right

The MIT 2026 Enterprise‑AI Failure Study has just dropped a hard lesson for every CIO, CTO, and product strategist watching the AI wave: confidence transparency and adaptive inference are now the two most critical success factors.

The research shows that while 95 % of organizations stumble over black‑box confidence mis‑calibration, only 5 % manage to deploy models that admit uncertainty and switch between fast generative and reasoning‑heavy modes. For leaders who must balance ROI, risk, and regulatory compliance, this shift is not a theoretical nuance—it dictates the architecture, budgeting, and governance framework of every AI initiative.

Executive Summary

- Key Insight: Success hinges on models that expose uncertainty and can dynamically route tasks to appropriate inference modes—mirroring current router implementations such as Gemini 4’s lightweight task‑selector.

- Cost Impact: Hybrid inference cuts the blended rate from $17.50 per 1M tokens (pure reasoning) to about $3.86 per 1M tokens (80/20 fast/reasoning split), a saving that reaches millions of dollars for very high‑volume workloads.

- Governance Imperative: Robust monitoring of uncertainty signals and drift is essential; the 5 % that succeed have integrated confidence dashboards into their MLOps stacks.

- Market Opportunity: AI Confidence‑as‑a‑Service (CAAS) platforms can bridge the gap for enterprises stuck with black‑box models, creating a new SaaS niche.

- Strategic Recommendation: Begin migrating to reasoning‑first architectures by 2026–2027 and invest in hybrid inference pipelines that balance speed, accuracy, and cost.

Strategic Business Implications of Confidence Transparency

The 2026 AI landscape has evolved from a “speed versus accuracy” trade‑off to a nuanced spectrum where uncertainty management is the differentiator. Enterprises that ignore confidence signals expose themselves to:

- Regulatory Risk: In finance and healthcare, uncalibrated predictions can lead to compliance violations or patient safety incidents.

- Operational Inefficiency: Over‑confidence often results in unnecessary downstream actions—think automated credit approvals that later require manual review.

- Reputational Damage: Public‑facing AI services (chatbots, recommendation engines) that act with unwarranted certainty can erode user trust when errors surface.

The MIT study quantifies this: 95 % of failures stem from black‑box confidence mis‑calibration.

In contrast, the 5 % that succeed have built confidence dashboards into their model ops, allowing data scientists to flag low‑certainty outputs and trigger human review or a higher‑fidelity inference path. For senior leaders, this translates into a clear mandate: make uncertainty visible and actionable.
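To make “visible and actionable” concrete, here is a minimal Python sketch of a post‑inference escalation policy. It assumes each response already carries a calibrated confidence score in [0, 1] and a tag for the mode that produced it; the `ModelOutput`, `Action`, and `escalation_action` names and the 0.85/0.60 thresholds are hypothetical, not figures from the MIT study.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ACCEPT = "accept"                # confident enough to use as-is
    REROUTE = "reroute"              # retry on the reasoning-heavy path
    HUMAN_REVIEW = "human_review"    # hand off to a person


@dataclass
class ModelOutput:
    text: str
    confidence: float  # calibrated probability in [0, 1]
    mode: str          # "fast" or "reasoning"


def escalation_action(out: ModelOutput,
                      accept_threshold: float = 0.85,
                      review_threshold: float = 0.60) -> Action:
    """Decide what to do with one response (thresholds are illustrative)."""
    if not 0.0 <= out.confidence <= 1.0:
        raise ValueError("confidence must be a probability in [0, 1]")
    if out.confidence >= accept_threshold:
        return Action.ACCEPT
    # Moderately uncertain fast-mode answers get one retry on the reasoning
    # path; very uncertain answers, or uncertain reasoning-mode answers,
    # go straight to human review.
    if out.mode == "fast" and out.confidence >= review_threshold:
        return Action.REROUTE
    return Action.HUMAN_REVIEW


print(escalation_action(ModelOutput("Approve claim #123", 0.72, "fast")))
```

Keeping the thresholds in one function makes the policy easy to audit; in practice they would be tuned per task type against available review capacity.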

Technical Implementation Guide for Hybrid Inference Pipelines

Adopting a dual‑mode architecture—fast generative for routine tasks and reasoning‑heavy for critical decisions—is now the industry standard. Below is a pragmatic roadmap for integrating this approach within existing AI stacks:

1. Model Selection

- Fast Mode: Gemini 4 Flash, Claude 4.5 in standard (non‑thinking) mode.

- Reasoning Mode: Gemini 4 “Thinking,” Claude 4.5 “Thinking.” These models expose step‑by‑step reasoning logs and calibrated uncertainty estimates.

2. Router Logic

Implement a lightweight router that evaluates task complexity (e.g., length of user query, presence of domain keywords) and routes to the appropriate model. Gemini 4’s built‑in classifier can serve as a reference: it predicts the best inference path before invoking the heavy model.
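A minimal sketch of such a router, assuming the two signals named above (query length and domain keywords) are its only inputs. The `Router` class, the 60‑word cutoff, and the keyword list are illustrative placeholders; this is a rule‑based stand‑in, not a reproduction of Gemini 4’s classifier.

```python
import re
from dataclasses import dataclass

# Hypothetical high-stakes vocabulary; tune per domain.
HIGH_STAKES_TERMS = ("diagnosis", "dosage", "loan", "credit",
                     "compliance", "contract", "liability", "audit")


@dataclass
class Router:
    max_fast_words: int = 60                  # longer queries go to reasoning
    high_stakes_terms: tuple = HIGH_STAKES_TERMS

    def route(self, query: str) -> str:
        """Return "reasoning" for long or high-stakes queries, else "fast"."""
        words = re.findall(r"[a-z0-9']+", query.lower())
        if len(words) > self.max_fast_words:
            return "reasoning"
        if any(term in words for term in self.high_stakes_terms):
            return "reasoning"
        return "fast"


router = Router()
for q in ("What are your opening hours?",
          "Can we approve this loan for a self-employed applicant?"):
    print(f"{router.route(q):>9}  <- {q}")
```

Exact word matching is deliberately naive (it misses plurals such as “loans”); a production router would more likely use a small trained classifier, as the text suggests.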

3. Confidence Calibration Layer

- Wrap each model call with a calibration module that maps raw logits to calibrated probabilities (e.g., via temperature scaling or Platt scaling).

- Expose confidence scores through a unified API so downstream services can decide whether to accept the result or trigger escalation.
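The sketch below shows temperature scaling in plain Python: a single temperature T is fit on held‑out validation logits by minimizing negative log‑likelihood, then used to rescale logits at inference time. The function names and the grid‑search fit are illustrative choices (gradient‑based fitting is more common); Platt scaling would instead fit a slope and intercept to a binary score.

```python
import math
from typing import Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> list:
    """Temperature-scaled softmax; subtracting the max keeps exp() stable."""
    scaled = [z / temperature for z in logits]
    top = max(scaled)
    exps = [math.exp(z - top) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def mean_nll(logits_batch, labels, temperature: float) -> float:
    """Mean negative log-likelihood of the true labels at one temperature."""
    loss = 0.0
    for logits, y in zip(logits_batch, labels):
        p = softmax(logits, temperature)[y]
        loss -= math.log(max(p, 1e-12))  # guard against log(0)
    return loss / len(labels)


def fit_temperature(logits_batch, labels) -> float:
    """Grid-search the temperature in [0.25, 5.0] that minimizes validation NLL."""
    grid = [0.25 + 0.05 * i for i in range(96)]
    return min(grid, key=lambda t: mean_nll(logits_batch, labels, t))


def calibrated_confidence(logits, temperature: float):
    """Return (predicted class, calibrated probability) for one example."""
    probs = softmax(logits, temperature)
    pred = max(range(len(probs)), key=probs.__getitem__)
    return pred, probs[pred]


# Toy validation set: the model is right 3 times out of 4 but reports ~98%.
val_logits = [[4.0, 0.0]] * 4
val_labels = [0, 0, 0, 1]
T = fit_temperature(val_logits, val_labels)
print(f"T = {T:.2f}", calibrated_confidence([4.0, 0.0], T))
```

On this toy set the fitted T comes out well above 1, pulling the reported confidence down from roughly 98 % toward the observed 75 % accuracy.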

4. Monitoring and Governance

- Integrate confidence dashboards with your MLOps platform (e.g., Kubeflow, MLflow). Track metrics such as average uncertainty per task type, drift in confidence distributions, and frequency of high‑confidence errors.

- Set automated alerts when uncertainty spikes or drift exceeds predefined thresholds, prompting retraining cycles.
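One way to implement the drift and high‑confidence‑error checks is sketched below, using the Population Stability Index (PSI) over binned confidence scores. The 0.2 PSI limit is a common rule of thumb rather than a standard; the 5 % error limit, the 0.9 “high confidence” cutoff, and all function names are assumptions for illustration.

```python
import math
from typing import Sequence


def histogram(values: Sequence[float], bins: int = 10) -> list:
    """Share of confidence scores in each equal-width bucket on [0, 1]."""
    counts = [0] * bins
    for v in values:
        counts[min(int(v * bins), bins - 1)] += 1  # 1.0 lands in the top bucket
    return [c / len(values) for c in counts]


def psi(baseline: Sequence[float], current: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between two confidence distributions."""
    eps = 1e-6  # keeps empty buckets from producing log(0)
    b, c = histogram(baseline, bins), histogram(current, bins)
    return sum((ci - bi) * math.log((ci + eps) / (bi + eps)) for bi, ci in zip(b, c))


def high_confidence_error_rate(confidences, correct, cutoff: float = 0.9) -> float:
    """Fraction of predictions at or above `cutoff` that turned out to be wrong."""
    flagged = [ok for p, ok in zip(confidences, correct) if p >= cutoff]
    return flagged.count(False) / len(flagged) if flagged else 0.0


def drift_alerts(baseline, current, correct,
                 psi_limit: float = 0.2, hce_limit: float = 0.05) -> list:
    """Return human-readable alerts; an empty list means no threshold was crossed."""
    alerts = []
    score = psi(baseline, current)
    if score > psi_limit:
        alerts.append(f"confidence drift: PSI {score:.2f} > {psi_limit}")
    hce = high_confidence_error_rate(current, correct)
    if hce > hce_limit:
        alerts.append(f"high-confidence errors: {hce:.1%} > {hce_limit:.0%}")
    return alerts


baseline = [0.95] * 50 + [0.75] * 50          # last month's confidence scores
this_week = [0.95] * 20 + [0.55] * 80         # confidence has shifted downward
outcomes = [True] * 18 + [False] * 2 + [True] * 80
print(drift_alerts(baseline, this_week, outcomes))
```

Either alert would be the trigger for the retraining cycle described above.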

5. Cost Management

- Leverage per‑token pricing to dynamically scale usage: reserve the expensive reasoning mode for high‑stakes queries and fall back to fast mode otherwise.

- Estimate cost savings by simulating a mixed workload, e.g., 80 % routine tasks on Gemini 4 Flash ($0.45/1M input) and 20 % critical tasks on Gemini 4 “Thinking” ($17.50/1M output), yielding a blended average of about $3.86 per 1M tokens (0.8 × $0.45 + 0.2 × $17.50).
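The blended‑rate arithmetic can be checked with a few lines of Python. The prices are the ones quoted above; like the text, the sketch applies a single per‑token price per mode rather than separate input and output rates, and the `blended_rate` helper is hypothetical.

```python
def blended_rate(mix: dict, rates: dict) -> float:
    """Weighted average USD price per 1M tokens for a workload mix.

    mix:   share of tokens handled by each mode (shares must sum to 1.0)
    rates: USD per 1M tokens for each mode
    """
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("workload shares must sum to 1.0")
    return sum(share * rates[mode] for mode, share in mix.items())


# Prices quoted in the text. As in the text, the fast rate is an input price
# and the reasoning rate an output price; a fuller model would price both.
RATES = {"fast": 0.45, "reasoning": 17.50}

hybrid = blended_rate({"fast": 0.8, "reasoning": 0.2}, RATES)
pure_reasoning = blended_rate({"reasoning": 1.0}, RATES)
print(f"hybrid: ${hybrid:.2f}/1M tokens; pure reasoning: ${pure_reasoning:.2f}/1M tokens")
```

Changing the mix dictionary is enough to test other splits, such as 90/10 or 70/30, before committing budget.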

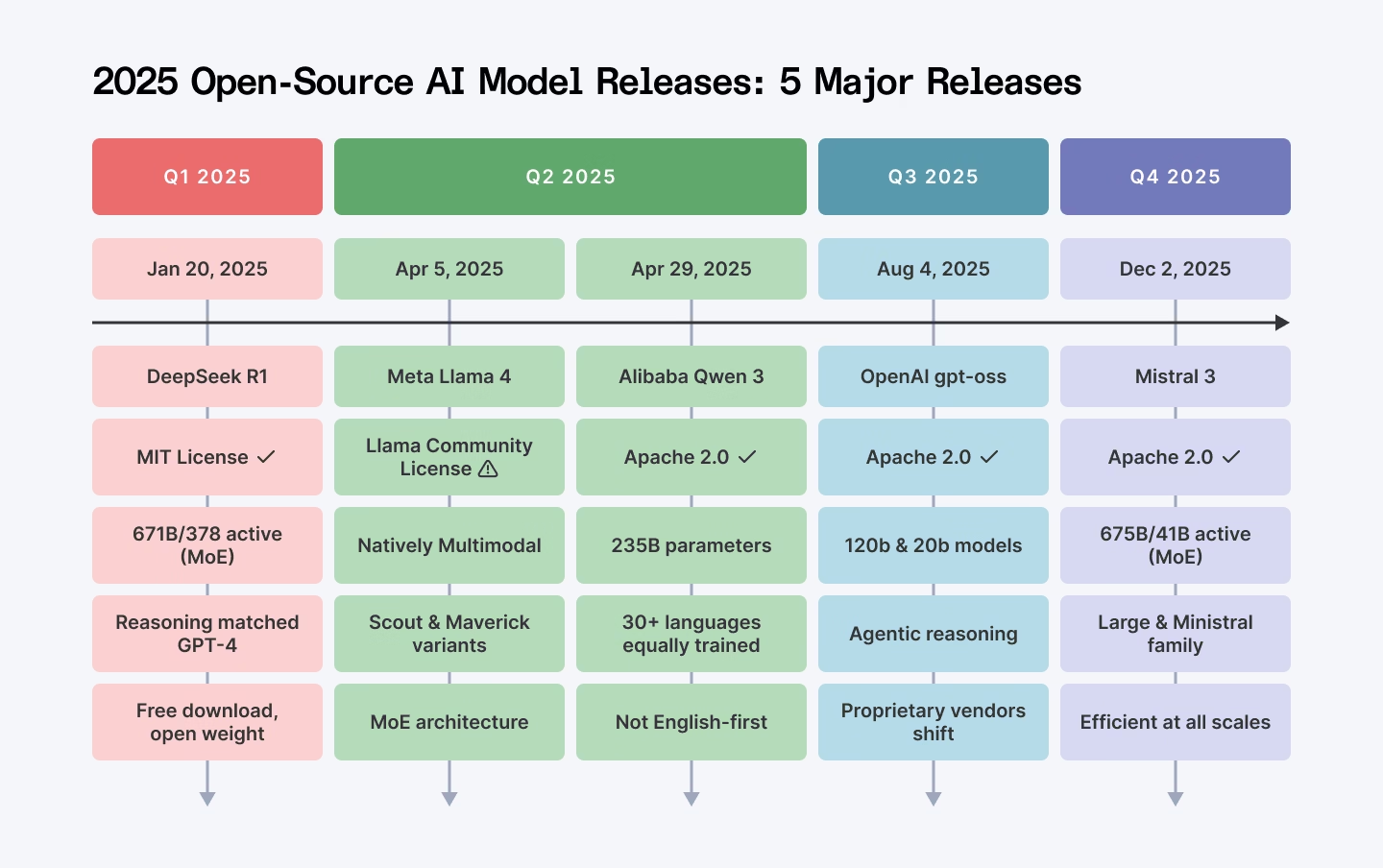

Market Analysis: Open‑Source vs Closed Models in the Confidence Gap

Open‑source LLMs such as Meta’s Llama 3.1 405B and Nomic’s GPT4All remain attractive for privacy‑centric deployments, but they lag behind closed models in two key areas:

- Confidence Signals: Neither model currently exposes calibrated uncertainty or step‑by‑step reasoning logs out of the box.

- Inference Latency: Reasoning latency is roughly 150 ms for Llama 3.1 versus under 80 ms for Gemini 4 “Thinking”, a critical difference when real‑time decision making is required.

For regulated industries, this means that while local inference can satisfy data residency mandates, the lack of confidence transparency forces reliance on manual review or hybrid cloud solutions—both costly and operationally complex. Consequently, enterprises that prioritize compliance are increasingly gravitating toward cloud models with built‑in uncertainty checks.

ROI and Cost Analysis: Hybrid Inference vs Pure Speed

A detailed cost model demonstrates the financial upside of a hybrid strategy:

- Baseline (Pure Fast Mode): 1 M tokens at $0.45 input + $3.00 output = $3.45 total.

- Hybrid (80/20 Split): 800K tokens on fast mode ($0.36) and 200K tokens on reasoning mode ($3.50 at $17.50/1M). Total = $3.86 for 1 M tokens.

- Annual Scale: If the organization processes 10 B tokens annually, a pure fast strategy costs about $34,500 and the hybrid about $38,600, versus roughly $175,000 for pure reasoning. The hybrid carries a modest premium over pure fast mode but delivers higher accuracy where it matters most.
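The annual projection follows from scaling each per‑1M rate by the yearly token volume. This sketch reuses the figures quoted above, including the simplification that the hybrid line prices fast‑mode tokens at the input rate and reasoning‑mode tokens at the output rate; the names are illustrative.

```python
TOKENS_PER_YEAR = 10_000_000_000  # 10B tokens, the volume used in the text

# USD per 1M tokens, from the figures above (simplified as in the text).
PER_MILLION = {
    "pure fast": 0.45 + 3.00,                   # $0.45 input + $3.00 output
    "hybrid 80/20": 0.8 * 0.45 + 0.2 * 17.50,   # fast input + reasoning output
    "pure reasoning": 17.50,
}


def annual_cost(rate_per_million: float, tokens: int = TOKENS_PER_YEAR) -> float:
    """Yearly spend in USD for a given price per 1M tokens."""
    return rate_per_million * tokens / 1_000_000


for name, rate in PER_MILLION.items():
    print(f"{name:>15}: ${rate:6.2f}/1M  ->  ${annual_cost(rate):>10,.0f}/year")
```

At this volume the absolute differences are modest; the gap between strategies only reaches millions of dollars at hundreds of billions of tokens per year.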

Once downstream cost reductions (fewer manual reviews, lower compliance penalties) are factored in, the ROI of hybrid inference can surpass 150% within the first year for high‑volume sectors such as insurance underwriting or automated legal document review.

Future Outlook: The Rise of Reasoning‑First Models

The trajectory points toward reasoning‑first architectures becoming baseline expectations by 2026–2027. Current releases such as Gemini 4 and Claude 4.5 already embed reasoning capabilities as core features, rendering pure speed models obsolete for enterprise use cases that demand auditability and explainability.

Business leaders should therefore:

- Plan Migration: Begin phased transition to reasoning‑first models now; early adopters will gain competitive advantage in customer trust and regulatory compliance.

- Invest in Governance Platforms: Build or acquire MLOps solutions that natively handle confidence dashboards, drift monitoring, and automated retraining triggers.

- Create CAAS Partnerships: Explore AI Confidence‑as‑a‑Service offerings to augment existing LLMs with calibrated uncertainty APIs—an emerging SaaS niche poised for rapid growth.

Actionable Recommendations for Enterprise Leaders

- Audit your current AI stack: identify models that lack confidence exposure and assess the risk of black‑box predictions.

- Deploy a hybrid inference pipeline within 12 months, starting with high‑impact domains (finance, healthcare).

- Allocate budget for MLOps governance tools; aim for roughly $0.05 per 1M tokens in monitoring overhead against a potential $0.10 per 1M tokens in savings from reduced error rates.

- Engage with CAAS providers early to evaluate how confidence calibration can be integrated into legacy systems.

- Establish a cross‑functional AI ethics committee that reviews uncertainty metrics quarterly, ensuring compliance and stakeholder trust.

In 2026, the AI landscape is no longer about who has the biggest model; it’s about who can measure, manage, and act on uncertainty.

The MIT study makes this crystal clear: the 95 % that fail do so because they treat the model as an oracle. Those who succeed—just 5 %—have turned uncertainty into a strategic asset, driving better decisions, lower costs, and stronger regulatory alignment. For leaders poised to navigate this shift, the path is now laid out: adopt hybrid inference, embed confidence dashboards, and future‑proof your AI investments with reasoning‑first architectures.
