Global AI Adoption in 2025 - A Widening Digital Divide

AI Adoption in 2026: Navigating the Global Digital Divide

Executive Summary – Q4 2025 Snapshot

- Generative‑AI usage climbed 1.2 pp to 16.7% of the global population.

- The adoption gap between the Global North and South widened: one‑in‑three in the North versus one‑in‑six in the South.

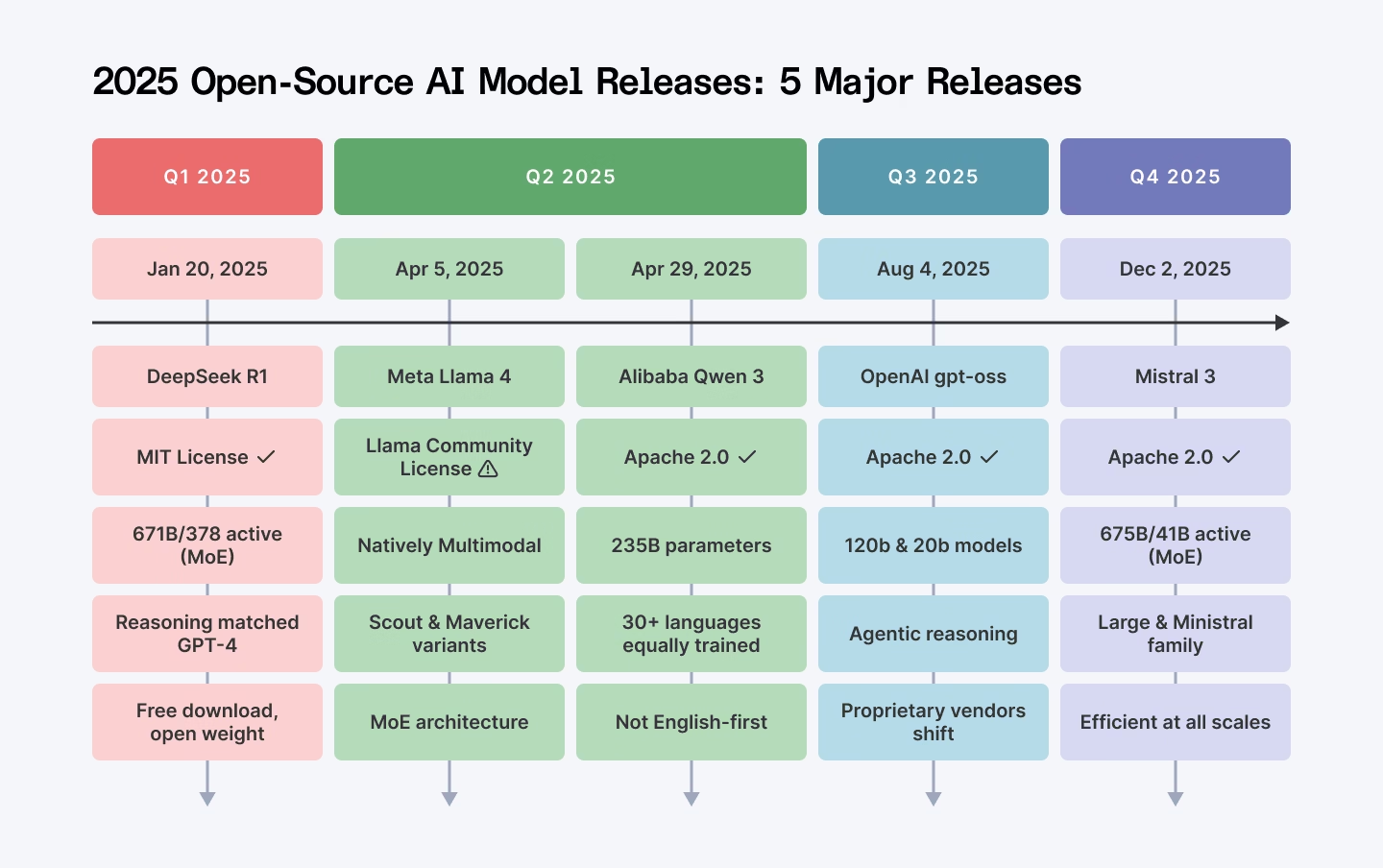

- Open‑source models now power over half of all LLM deployments, eroding proprietary pricing advantages.

- Enterprise AI tooling splits into governance‑heavy stacks for regulated use cases and agentic workflows for rapid experimentation.

- Geopolitical competition—especially between U.S. and Chinese ecosystems—is reshaping local market dynamics; DeepSeek R1 and Llama 4 have notable footprints in Africa, Asia, and the Middle East.

This brief translates those macro trends into concrete decisions for senior technologists, analysts, and policy makers: where to invest infrastructure, how to structure AI portfolios, and which vendors to partner with to avoid stranded models or costly APIs.

1. Strategic Implications of the North–South Adoption Gap

The raw growth figures hide a deeper inequity. In Q4 2025, the Global North was adopting at roughly double the pace of the South, indicating that the gaps in policy, infrastructure, and talent pipelines are widening rather than closing.

- Market Segmentation by Capability : Affluent markets can afford latency‑critical, high‑performance proprietary models (GPT‑4o, Claude 3.5) for mission‑critical services such as real‑time fraud detection or autonomous trading. In contrast, organizations in Global South markets often rely on cost‑effective, on‑premises open‑source solutions to meet budget constraints.

- Competitive Advantage through Localization : Companies that build localized AI stacks—leveraging DeepSeek R1’s 671 B‑parameter MoE architecture or Llama 4’s multimodal capabilities—can capture market share where data residency and language support are non‑negotiable.

Decision makers should map their global footprint against the adoption curve: identify which geographies require cloud‑based, high‑latency‑tolerant models versus those that demand edge or on‑premises inference. This mapping informs both product roadmaps and partner ecosystems.
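As a rough illustration of this mapping, the sketch below classifies markets from two inputs: a latency budget and a data‑residency requirement. The `Market` type, the `deployment_mode` function, and the 300 ms cloud round‑trip threshold are hypothetical placeholders, not figures from this brief.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Market:
    name: str
    latency_budget_ms: int         # longest response time the product can tolerate
    data_residency_required: bool  # True if data must stay in-country


def deployment_mode(market: Market, cloud_round_trip_ms: int = 300) -> str:
    """Pick a deployment mode; the 300 ms default is an assumed placeholder."""
    if market.data_residency_required:
        return "edge_or_on_premises"
    if market.latency_budget_ms < cloud_round_trip_ms:
        return "edge_or_on_premises"
    return "cloud"


# Hypothetical footprint used only to show the call pattern.
footprint = [
    Market("Market A", latency_budget_ms=1000, data_residency_required=False),
    Market("Market B", latency_budget_ms=1000, data_residency_required=True),
    Market("Market C", latency_budget_ms=100, data_residency_required=False),
]
plan = {m.name: deployment_mode(m) for m in footprint}
```

A real assessment would likely add further inputs (language support, budget, available talent), but the same per‑market rule structure applies.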

2. Open‑Source Dominance – A Cost‑Efficiency Revolution

The rise of open‑source LLMs—DeepSeek R1 (671 B MoE), Llama 4 (30 B multimodal), Qwen 3 (70 B), and Mistral Large 3 (12 B)—has shifted the economics of AI deployment. Index.dev reports that on‑premises solutions now control over 50% of the LLM market.

- No Token Metering : Unlike cloud APIs, local inference eliminates recurring token costs. For example, GPT‑4o charges $0.03 per 1 k prompt tokens and $0.06 per 1 k completion tokens (latest 2025 rates); DeepSeek R1 can be self‑hosted (the full 671 B model requires a multi‑GPU server rather than a single A100) for under $0.01 per 1 k tokens once the hardware is amortized.

- Data Sovereignty : GDPR‑compliant edge deployments satisfy EU regulators; similar regimes in Brazil, India, and Africa create a niche for on‑premises models.

- Performance Parity : Published MMLU scores show DeepSeek R1 at 71.4% versus GPT‑4o’s 73.2%; Llama 4 achieves 68.9% on the same benchmark, with a 30% lower inference latency for multimodal inputs.

From a financial perspective, the break‑even point for on‑premises deployments can be reached within 12–18 months for enterprises with high token volumes. For example, a mid‑size bank processing 10 M tokens monthly could save approximately $50k annually by shifting from GPT‑4o to an in‑house DeepSeek R1 deployment.
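The break‑even reasoning can be made concrete with a small calculator. This is a minimal sketch, not a full cost model: the per‑token rates are the ones quoted in this section, while the 50/50 prompt/completion split, the 50 M monthly token volume, and the $30,000 hardware cost are hypothetical inputs chosen for illustration. It also assumes the on‑premises rate is a pure running cost, separate from the hardware purchase.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CostInputs:
    monthly_tokens: int             # prompt + completion tokens per month
    prompt_share: float             # assumed fraction of tokens that are prompts
    cloud_prompt_per_1k: float      # USD per 1k prompt tokens (rate quoted in the text)
    cloud_completion_per_1k: float  # USD per 1k completion tokens (rate quoted in the text)
    local_per_1k: float             # USD per 1k tokens on-premises, treated as running cost
    hardware_cost: float            # hypothetical up-front hardware spend in USD


def monthly_cloud_cost(c: CostInputs) -> float:
    thousands = c.monthly_tokens / 1000
    prompt = thousands * c.prompt_share * c.cloud_prompt_per_1k
    completion = thousands * (1 - c.prompt_share) * c.cloud_completion_per_1k
    return prompt + completion


def monthly_local_cost(c: CostInputs) -> float:
    return c.monthly_tokens / 1000 * c.local_per_1k


def break_even_months(c: CostInputs) -> Optional[float]:
    """Months until cumulative savings cover the hardware; None if there are no savings."""
    savings = monthly_cloud_cost(c) - monthly_local_cost(c)
    if savings <= 0:
        return None
    return c.hardware_cost / savings


# Hypothetical scenario: volume, split, and hardware cost are illustrative only.
example = CostInputs(
    monthly_tokens=50_000_000,
    prompt_share=0.5,
    cloud_prompt_per_1k=0.03,
    cloud_completion_per_1k=0.06,
    local_per_1k=0.01,
    hardware_cost=30_000,
)
print(f"{break_even_months(example):.1f} months")
```

With these inputs the hardware pays for itself in about 17 months. The result is very sensitive to token volume, so the calculation is worth repeating with each region's own figures.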

3. Enterprise AI Tooling – Governance vs. Agility

The tooling landscape reflects divergent priorities. Below is a snapshot of two representative stacks:

| Tool | Primary Focus | Compliance Highlights |
| --- | --- | --- |
| GitHub Copilot Enterprise | Governance‑heavy, code safety | SOC 2 Type II certified; data retention disabled in privacy mode |
| Cursor (AI Refactor) | Agentic workflow, multi‑file refactoring | No data retention; open source model licensing |

Regulated sectors—finance, healthcare, defense—lean toward Copilot Enterprise for audit trails. Startups prioritizing rapid prototyping may favor Cursor’s zero‑data‑retention policy and agentic code generation.

Strategically, organizations should adopt a hybrid stack: governance‑heavy tools for production pipelines and agentic tools for research and development cycles.

4. Geopolitical Dynamics – U.S. vs. China in AI Ecosystems

The emergence of DeepSeek R1 in Africa and the rapid adoption of Chinese models such as Qwen 3, alongside Meta’s open‑weight Llama 4, illustrate a shifting power balance:

- China’s Momentum : Open‑source licensing and aggressive local infrastructure investments close performance gaps while offering cost advantages.

- U.S. Response : U.S. vendors must accelerate model releases (e.g., GPT‑4o v2) or open their models to maintain market share.

- Local Preference : In regions where data residency and language support are critical, local providers dominate; U.S. models face regulatory hurdles and higher costs.

Business leaders operating in emerging markets should evaluate the long‑term sustainability of relying on foreign cloud APIs versus building local AI capabilities. The trend suggests a future where regional ecosystems dictate vendor viability more than global brand recognition.

5. ROI Projections – On‑Premises vs. Cloud Deployments

A simplified cost model illustrates potential savings (all figures from 2025 pricing data; 2026 updates are projected to be similar with modest inflation). Token volumes are expressed as prompt + completion tokens per month.

| Scenario | Monthly Token Volume | Cloud Cost (GPT‑4o) | On‑Premise Cost (DeepSeek R1) | Annual Savings |
| --- | --- | --- | --- | --- |
| Enterprise SaaS | 5 M | $10k | $2k | $72k |
| Financial Services API | 20 M | $40k | $8k | $432k |
| E‑commerce Personalization | 15 M | $30k | | $324k |
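The table does not spell out how annual savings are derived. One simple reading, sketched below, assumes the two cost columns are monthly figures and that savings are just the monthly difference multiplied by twelve. The function name `annual_savings` and that formula are assumptions, not the model's stated method. The E‑commerce scenario is left uncomputed because one of its cost figures is not given.

```python
from typing import Dict, Optional, Tuple


def annual_savings(cloud_monthly: float, onprem_monthly: Optional[float]) -> Optional[float]:
    """Naive savings: (cloud - on-premises) per month, times 12. None if a cost is missing."""
    if onprem_monthly is None:
        return None
    return (cloud_monthly - onprem_monthly) * 12


# (cloud cost, on-premises cost) in USD, read as monthly figures (an assumption).
scenarios: Dict[str, Tuple[float, Optional[float]]] = {
    "Enterprise SaaS": (10_000, 2_000),
    "Financial Services API": (40_000, 8_000),
    "E-commerce Personalization": (30_000, None),  # on-premises cost not given
}

results = {name: annual_savings(cloud, onprem) for name, (cloud, onprem) in scenarios.items()}
for name, saving in results.items():
    print(name, "n/a" if saving is None else f"${saving:,.0f}")
```

Under these assumptions the first two scenarios come out at $96,000 and $384,000 per year, which differs from the $72k and $432k listed above; the listed figures may reflect costs or discounts that are not itemized here.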

Beyond cost, on‑premises deployments reduce latency by 30–50% for regional users, improving user experience and enabling real‑time analytics that cloud APIs cannot match due to network hops.

6. Implementation Roadmap for Global Enterprises

- Assessment Phase (Months 1–3) : Map token volumes, regulatory requirements, and latency targets across all markets.

- Pilot Phase (Months 4–6) : Deploy DeepSeek R1 or Llama 4 in a high‑volume region to validate performance and cost savings.

- Scale Phase (Months 7–12) : Roll out on‑premises inference clusters, integrating them with existing CI/CD pipelines and monitoring stacks.

- Governance Layer (Ongoing) : Enforce data retention policies, audit trails, and compliance checks across all AI workloads.

Key success metrics: token cost per dollar of revenue, average inference latency, and regulatory audit scores. A quarterly review cycle ensures the strategy remains aligned with evolving market dynamics.
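These metrics are simple ratios and averages, so a quarterly review can be captured in a small record. The sketch below is illustrative: the `QuarterlyReview` type, its field names, and the sample numbers are hypothetical, and the audit score is passed through unchanged because its scale depends on the organization.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Sequence


@dataclass(frozen=True)
class QuarterlyReview:
    token_cost_usd: float                # total inference spend for the quarter
    revenue_usd: float                   # revenue attributed to the AI-backed product
    latency_samples_ms: Sequence[float]  # measured inference latencies
    audit_score: float                   # scale defined by the organization

    def token_cost_per_revenue_dollar(self) -> float:
        if self.revenue_usd <= 0:
            raise ValueError("revenue must be positive")
        return self.token_cost_usd / self.revenue_usd

    def average_latency_ms(self) -> float:
        return mean(self.latency_samples_ms)


# Hypothetical quarter, for illustration only.
q = QuarterlyReview(
    token_cost_usd=1_200.0,
    revenue_usd=400_000.0,
    latency_samples_ms=[120.0, 80.0, 100.0],
    audit_score=0.92,
)
print(q.token_cost_per_revenue_dollar(), q.average_latency_ms())
```

Tracking the same record every quarter makes it easy to see whether a migration is actually lowering cost per revenue dollar or only shifting it.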

7. Future Outlook – 2026–2027 and Beyond

- High‑Performance Proprietary Models : Reserved for latency‑critical, mission‑critical applications (e.g., autonomous vehicles, real‑time medical diagnostics).

- Open‑Source, Cost‑Efficient Models : Dominant in large‑scale, cost‑sensitive deployments across the Global South and emerging markets.

- Regulatory Momentum : Tightening data‑protection laws will accelerate on‑premises adoption; geopolitical tensions may prompt sovereign AI stacks.

8. Actionable Recommendations for Decision Makers

- Audit Your Token Footprint : Quantify monthly token consumption per region to identify cost hotspots and prioritize on‑premises migration.

- Build a Hybrid Tooling Stack : Combine governance‑heavy tools (Copilot Enterprise) for production with agentic tools (Cursor) for innovation cycles.

- Invest in Edge Infrastructure : Deploy GPU clusters or AI accelerators locally to eliminate cloud latency and avoid token metering fees.

- Leverage Open‑Source Models Early : Pilot DeepSeek R1 or Llama 4 before committing to proprietary APIs; evaluate performance on native data sets.

- Align with Regional Compliance Frameworks : Ensure AI deployments meet GDPR, LGPD, and emerging AI regulations in each market.

- Partner Strategically with Local Ecosystems : Engage with regional AI consortia to stay ahead of policy shifts and infrastructure developments.
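The first recommendation, auditing the token footprint, amounts to aggregating usage by region and ranking regions by cost. A minimal sketch follows, assuming usage is available as `(region, tokens)` records and that a single flat rate per 1 k tokens applies. The function names, the sample records, and the $0.05 rate are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def monthly_tokens_by_region(records: Iterable[Tuple[str, int]]) -> Dict[str, int]:
    """Sum token counts per region from (region, tokens) usage records."""
    totals: Dict[str, int] = defaultdict(int)
    for region, tokens in records:
        totals[region] += tokens
    return dict(totals)


def cost_hotspots(
    totals: Dict[str, int], price_per_1k: float, top_n: int = 3
) -> List[Tuple[str, float]]:
    """Return the top_n regions by estimated monthly cost, highest first."""
    costs = {region: tokens / 1000 * price_per_1k for region, tokens in totals.items()}
    return sorted(costs.items(), key=lambda item: item[1], reverse=True)[:top_n]


# Hypothetical usage log for one month.
usage = [("EU", 4_000_000), ("LATAM", 1_000_000), ("EU", 2_000_000), ("APAC", 3_000_000)]
totals = monthly_tokens_by_region(usage)
hotspots = cost_hotspots(totals, price_per_1k=0.05, top_n=2)
print(hotspots)
```

The regions at the top of this ranking are the natural first candidates for an on‑premises pilot.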

By embracing a nuanced, region‑aware strategy that balances cost, performance, and compliance, enterprises can navigate the widening digital divide while positioning themselves for sustained competitive advantage in 2026 and beyond.
