Apiiro unveils AI SAST built on deep code analysis to eliminate false positives


Apiiro’s AI‑SAST: A Deep Code Analysis Revolution for Secure DevOps in 2025

In the fast‑moving world of secure software delivery, false positives have long been the Achilles’ heel of static application security testing (SAST). Developers spend hours triaging noise that never translates into real exploits, while security teams wrestle with alert fatigue and diminished trust in automated tooling. Apiiro’s new AI‑SAST platform, powered by a patented Deep Code Analysis (DCA) engine, claims to slash false positives by roughly 85 % and shift the focus from pattern detection to actual exploitability. For teams that rely on CI/CD pipelines, this is not just another feature; it’s a potential game‑changer.

Executive Snapshot

- False‑positive reduction: ~85 %, validated by Paddle pilot and industry benchmarks.

- Speed: Scans 10 M lines in under 30 minutes, comparable to leading vendors.

- Integration: Plug‑and‑play with GitHub, Azure DevOps, GitLab, Jenkins; API ready for any LLM (GPT‑4o, Claude 3.5 Sonnet, Gemini 1.5).

- Business model: Dual‑licensing—$299 enterprise license plus a free open‑source scanner for supply‑chain security.

- Strategic moat: Patent‑backed DCA, semantic reachability analysis, no reliance on proprietary LLMs.

The following deep dive translates these numbers into actionable insights for developers, DevSecOps leaders, and product managers who must decide whether to adopt or replace their current SAST stack.

Understanding Deep Code Analysis: From Pattern Matching to Semantic Reasoning

Traditional SAST tools perform syntactic pattern matching, flagging code that resembles known vulnerable constructs (e.g., strcpy, sprintf) without considering context. This approach yields high recall but low precision, flooding teams with alerts that never surface as real attacks.
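To make the precision problem concrete, here is a minimal sketch of context-free pattern matching. The rule set, the `flag_lines` helper, and the C sample are all hypothetical and only illustrate the general technique; they are not taken from any vendor's scanner.

```python
import re

# Hypothetical rule: any textual call to these functions is reported.
RISKY_CALLS = re.compile(r"\b(strcpy|sprintf)\s*\(")


def flag_lines(source: str) -> list[tuple[int, str]]:
    """Return (line_number, call_name) for every textual match, ignoring context."""
    hits = []
    for number, line in enumerate(source.splitlines(), start=1):
        for match in RISKY_CALLS.finditer(line):
            hits.append((number, match.group(1)))
    return hits


# Illustrative C source: one harmless call and one risky call.
SAMPLE_C = '''\
void greet(void) {
    char buf[16];
    strcpy(buf, "hello");      /* constant source that fits the buffer */
}
void handle(const char *request) {
    char buf[16];
    strcpy(buf, request);      /* attacker-controlled source */
}
'''

findings = flag_lines(SAMPLE_C)
for number, call in findings:
    print(f"line {number}: call to {call}")
```

Both calls are reported identically, even though only the second copies untrusted data. A matcher like this has no way to tell them apart, which is where the noise comes from.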

DCA, in contrast, first builds a comprehensive call‑flow graph and data‑flow lattice for the entire codebase. It then applies AI reasoning, trained on thousands of verified vulnerability cases, to assess whether a flagged pattern is actually reachable from an attacker’s entry point and can lead to a security breach. In effect, DCA performs a semantic reachability analysis, answering questions like:

- Is the vulnerable function ever invoked with untrusted input?

- Does the control flow allow an attacker to manipulate execution order?

- Are there mitigating controls (e.g., input validation) that neutralize the risk?
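The questions above can be approximated with a toy reachability check over a call graph. This is a simplified sketch, not Apiiro's DCA engine: the graph, the entry points, the sanitizer set, and the `tainted_path` helper are hypothetical, and real analyses track data flow rather than call edges alone.

```python
from collections import deque

# Hypothetical call graph: caller -> callees.
CALL_GRAPH = {
    "http_handler": ["parse_request", "render_page"],
    "parse_request": ["validate_input"],
    "validate_input": ["copy_to_buffer"],
    "render_page": ["format_banner"],
    "admin_cli": ["legacy_export"],
    "format_banner": [],
    "copy_to_buffer": [],
    "legacy_export": [],
}
ENTRY_POINTS = {"http_handler"}   # assumed to receive untrusted input
SANITIZERS = {"validate_input"}   # assumed to neutralize untrusted input


def tainted_path(graph, entries, sanitizers, sink):
    """Breadth-first search for a call path from an entry point to `sink`
    that does not pass through a sanitizer. Returns the path or None."""
    queue = deque([entry] for entry in sorted(entries))
    seen = set(entries)
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == sink:
            return path
        if node in sanitizers:
            continue  # untrusted input is assumed to stop here
        for callee in graph.get(node, []):
            if callee not in seen:
                seen.add(callee)
                queue.append(path + [callee])
    return None


for sink in ("format_banner", "copy_to_buffer", "legacy_export"):
    path = tainted_path(CALL_GRAPH, ENTRY_POINTS, SANITIZERS, sink)
    print(sink, "->", " > ".join(path) if path else "not reachable with untrusted input")
```

In this toy graph only `format_banner` would be kept as a finding: `copy_to_buffer` sits behind the validation step, and `legacy_export` is never called from an attacker-facing entry point.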

Benchmarking Performance Against 2025 Leaders

Speed is critical when integrating SAST into CI/CD pipelines. While many vendors advertise “fast” scanning times, the actual throughput can vary dramatically with codebase size, language mix, and complexity.

| Vendor | Scan size | Time (minutes) | Key feature |
|---|---|---|---|
| Apiiro AI‑SAST | 10 M LOC | <30 | DCA + semantic reachability |
| Snyk Code | 10 M LOC | ≈35 | AI post‑processing on legacy scans |
| Checkmarx Enterprise | 10 M LOC | ≈45 | Rule‑based + static analysis |
| Veracode Platform | 10 M LOC | ≈50 | Hybrid static/dynamic |
| DeepSeek SAST (LLM‑based) | 10 M LOC | ≈40 | Pattern matching + LLM filtering |

In these figures, Apiiro’s DCA engine matches or outperforms the established vendors on scan time. The table does not measure precision; that claim rests on the false‑positive reduction cited earlier. Because the scanning logic is built on a language‑agnostic semantic analysis core, it is said to scale uniformly across polyglot codebases (Java, Go, Rust, TypeScript).

Integration Blueprint: From Pipeline Hook to Security Dashboard

Adopting AI‑SAST requires minimal disruption. Below is a step‑by‑step guide for teams already using GitHub Actions or Azure DevOps.

- Install the scanner: Use the apiiro-sast CLI, available as an npm package or Docker image.

- Configure the scan: Provide repository path, language selectors, and optional policy files. The DCA engine auto‑detects modules and dependencies.

- Run in CI: Add a job step that invokes apiiro-sast run --ci. The CLI emits JSON findings with severity, exploitability score, and remediation guidance.

- Post‑process with LLM (optional): If your team uses GPT‑4o or Claude 3.5 Sonnet for triage, pipe the JSON to an assistant via API. The assistant can summarize findings, suggest fixes, or generate pull request comments automatically.

- Publish results: Push findings to a central dashboard (e.g., Jira, Azure Boards) using webhook integration. Include links back to code locations for quick remediation.

Because the DCA engine is independent of any proprietary LLM, you can swap assistants as newer models appear without re‑engineering your pipeline.
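As an illustration of the post-processing step, the sketch below gates a build on the exploitability score in a findings report. The article does not document the CLI's output schema, so the field names (`findings`, `rule`, `severity`, `exploitability`, `file`, `line`), the 0 to 1 score scale, and the `gate` helper are all assumptions made for this example.

```python
import json

# Hypothetical report shaped like the JSON findings described above.
SAMPLE_REPORT = json.dumps({
    "findings": [
        {"rule": "unsafe-copy", "severity": "high", "exploitability": 0.10,
         "file": "util/strings.c", "line": 12},
        {"rule": "path-traversal", "severity": "medium", "exploitability": 0.75,
         "file": "api/files.py", "line": 88},
        {"rule": "sql-injection", "severity": "high", "exploitability": 0.92,
         "file": "api/orders.py", "line": 48},
    ]
})


def gate(report_json: str, threshold: float = 0.7) -> tuple[list[dict], int]:
    """Return the findings at or above `threshold`, most exploitable first,
    plus an exit code a CI step could use (1 = block the build)."""
    findings = json.loads(report_json)["findings"]
    blocking = [f for f in findings if f["exploitability"] >= threshold]
    blocking.sort(key=lambda f: f["exploitability"], reverse=True)
    return blocking, (1 if blocking else 0)


blocking, exit_code = gate(SAMPLE_REPORT)
for finding in blocking:
    print(f'{finding["file"]}:{finding["line"]} {finding["rule"]} '
          f'(exploitability {finding["exploitability"]})')
print("exit code:", exit_code)
```

Filtering on exploitability rather than severity is the design choice that matters here: the high-severity `unsafe-copy` finding is dropped because its score is low, while the medium-severity path traversal still blocks the build.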

Strategic Business Implications: Why CTOs Should Pay Attention

1. Reduced Alert Fatigue Drives Velocity: With an 85 % drop in false positives, developers spend less time triaging and more time shipping features. In a 2025 market where release cycles average 10–12 days for SaaS products, this translates to a measurable competitive edge.

2. Cost Savings on Security Operations: Fewer alerts mean fewer security analysts needed to review findings. Assuming an analyst costs $120k annually and handles 200 alerts/month with legacy tools, the shift to AI‑SAST could cut that workload by 70 %, saving roughly $84k per analyst.

3. Compliance Alignment: The EU Cybersecurity Act (2025) mandates secure coding practices for critical software. By validating exploitability rather than mere pattern presence, AI‑SAST helps meet audit requirements with fewer false positives to report.

4. Vendor Lock‑In Mitigation: Unlike LLM‑based scanners that tie you to a single provider’s API and pricing model, DCA is self‑contained. Your organization retains control over the analysis engine and can integrate it with any future AI assistant or internal policy engine.

5. Open‑Source Community Leverage: The free scanner (BleepingComputer 2025) allows teams to protect pull requests for open‑source contributions, reducing supply‑chain risk, a growing concern in 2025 after several high‑profile incidents.

ROI Projection: Quantifying the Business Value

Below is a simplified ROI model for a mid‑size SaaS company (50 developers, 10 security analysts) adopting AI‑SAST.

| Metric | Baseline | Post‑AI‑SAST | Annual savings |
|---|---|---|---|
| False positives per release | 200 | 30 | - |
| Developer hours spent on triage (per release) | 80 hrs | 12 hrs | $70k |
| Security analyst alerts reviewed per month | 800 | 240 | $84k |
| License cost (enterprise) | $0 | $299 | - |
| Total annual savings | - | - | $154k |

The model shows a net positive impact of ~$154 k per year, ignoring intangible benefits like improved developer morale and higher customer trust.
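The arithmetic behind the model can be checked in a few lines. Every figure below is copied from the table or the cost discussion; the $70k developer saving is taken as given because the source states no hourly rate, and the variable names are illustrative only.

```python
# Figures copied from the ROI table and the cost discussion; none are measured data.
ANALYST_COST = 120_000        # annual cost per analyst
DEVELOPER_SAVINGS = 70_000    # taken as given from the table
LICENSE_COST = 299            # enterprise license figure from the table


def percent_reduction(before: int, after: int) -> int:
    """Whole-number percentage drop from `before` to `after`."""
    return (before - after) * 100 // before


false_positive_cut = percent_reduction(200, 30)   # false positives per release
triage_hours_cut = percent_reduction(80, 12)      # developer triage hours per release
alert_cut = percent_reduction(800, 240)           # analyst alerts per month

# The $84k row equals the alert reduction applied to one analyst's annual cost.
analyst_savings = ANALYST_COST * alert_cut // 100
total_savings = DEVELOPER_SAVINGS + analyst_savings
net_of_license = total_savings - LICENSE_COST

print(f"false positives: -{false_positive_cut}%, triage hours: -{triage_hours_cut}%, alerts: -{alert_cut}%")
print(f"analyst savings: ${analyst_savings:,}")
print(f"total savings: ${total_savings:,} (net of license: ${net_of_license:,})")
```

Two things stand out when the numbers are laid side by side. The $84k row matches the saving for a single analyst, although the scenario lists ten analysts, so the total may be conservative or the per-analyst framing may not carry over to the table. The $154k total is also gross of the license cost, which is negligible at the quoted price.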

Competitive Landscape: Where Apiiro Stands in 2025

Apiiro’s AI‑SAST occupies a niche that blends traditional SAST rigor with modern AI reasoning:

- Legacy rule‑based vendors (Checkmarx, Veracode): High recall but low precision; struggle to keep pace with new attack vectors.

- LLM‑augmented scanners (DeepSeek, OpenAI CodeBuster): Depend on proprietary models; often produce hallucinated findings and higher false positives.

- Supply‑chain focused tools (Snyk Code, GitHub Advanced Security): Excellent for open‑source monitoring but lack deep semantic reachability analysis.

- Apiiro AI‑SAST: Combines the best of both worlds—semantic precision, fast scans, and a patent moat—while remaining vendor‑agnostic.

For organizations that already use multiple SAST tools, consolidating onto AI‑SAST could reduce tool sprawl and streamline policy enforcement.

Implementation Challenges & Mitigation Strategies

1. Learning Curve: Teams accustomed to rule‑based alerts may need training on interpreting exploitability scores. Solution: Deploy a pilot with a small subset of repositories and run parallel scans to compare results before full rollout.

2. Resource Allocation: Large monorepos can still push the DCA engine near its limits. Mitigation: Use incremental scanning (only changed files) and leverage cloud scaling options provided by Apiiro’s SaaS offering.

3. Policy Customization: Some organizations require bespoke severity thresholds or remediation guidelines. The API allows injection of custom policy files in JSON/YAML, which can be versioned alongside code.

4. Integration with Existing Tooling: While the CLI is straightforward, legacy systems may need adapters. Apiiro offers a RESTful endpoint that accepts raw source code and returns findings, making integration into any CI system trivial.
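The incremental-scanning mitigation in item 2 can be sketched as a content-hash comparison between the last scanned state and the current tree. This is a generic approach, not a description of how Apiiro selects files; the `changed_files` helper and the in-memory file maps are hypothetical stand-ins for a real repository.

```python
import hashlib


def digest(content: bytes) -> str:
    """SHA-256 hex digest of a file's content."""
    return hashlib.sha256(content).hexdigest()


def changed_files(previous: dict[str, str], current: dict[str, bytes]) -> list[str]:
    """Return paths that are new or whose content hash differs from the last scan."""
    return sorted(
        path for path, content in current.items()
        if previous.get(path) != digest(content)
    )


# Hashes recorded after the previous scan (hypothetical paths and contents).
last_scan = {
    "api/orders.py": digest(b"def list_orders(): ..."),
    "api/files.py": digest(b"def read_file(path): ..."),
}

# Current working tree: one file unchanged, one edited, one added.
working_tree = {
    "api/orders.py": b"def list_orders(): ...",
    "api/files.py": b"def read_file(path, root): ...",
    "api/users.py": b"def get_user(user_id): ...",
}

to_scan = changed_files(last_scan, working_tree)
print(to_scan)
```

One caveat of any file-level approach: a change in one file can alter reachability in another, so a full scan on a schedule is still a sensible backstop.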

Future Outlook: What Comes Next for AI‑SAST?

1. Hybrid Static–Dynamic Models: Combining DCA with runtime fuzzing could close the remaining gap between static predictions and actual exploitability.

2. Language Expansion: Adding support for emerging languages (e.g., Rust 2025, Swift 6) will broaden market reach.

3. AI‑First DevSecOps Platforms: As enterprises adopt GPT‑4o or Claude 3.5 Sonnet for code review, integrating DCA findings into those assistants could automate remediation suggestions and pull request comments.

4. Regulatory Alignment: With the U.S. CISA’s 2025 supply‑chain security guidelines tightening, AI‑SAST’s ability to detect malicious merges in PRs positions it as a compliance enabler.

Actionable Recommendations for Decision Makers

- Run a Pilot: Select two high‑risk repositories and compare legacy SAST alerts with AI‑SAST results. Measure false positive reduction and remediation time.

- Align Policies: Map your existing severity matrix to the exploitability scores output by DCA. Adjust thresholds to match business risk appetite.

- Integrate with LLM Assistants: If you already use GPT‑4o or Claude 3.5 Sonnet for code reviews, pipe AI‑SAST findings into those assistants to automate triage and fix suggestions.

- Monitor ROI: Track developer hours saved per release and analyst workload reductions. Use these metrics to justify broader adoption.

- Engage with Apiiro’s Support: Leverage their API documentation and community forums to troubleshoot integration challenges early.
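For the pilot, a small script is enough to compare the two tools once findings have been triaged by hand. The sketch below uses made-up finding IDs; `summarize` is a hypothetical helper, and the numbers are illustrative rather than results from any real scan.

```python
def summarize(alerts: set[str], confirmed: set[str]) -> dict:
    """Compare a tool's alerts against the manually confirmed issues."""
    true_positives = len(alerts & confirmed)
    false_positives = len(alerts - confirmed)
    return {
        "true_positives": true_positives,
        "false_positives": false_positives,
        "precision": true_positives / len(alerts) if alerts else 0.0,
        "missed": sorted(confirmed - alerts),  # real issues the tool did not report
    }


# Illustrative pilot data: IDs of issues confirmed as real during manual triage.
confirmed_issues = {"A", "B", "C"}
legacy_alerts = {"A", "B", "C", "D", "E", "F", "G", "H", "I", "J"}
candidate_alerts = {"A", "B", "C", "D"}

legacy = summarize(legacy_alerts, confirmed_issues)
candidate = summarize(candidate_alerts, confirmed_issues)

# Relative drop in false positives between the two tools.
fp_reduction = (
    (legacy["false_positives"] - candidate["false_positives"])
    / legacy["false_positives"]
)

print("legacy:", legacy)
print("candidate:", candidate)
print(f"false-positive reduction: {fp_reduction:.0%}")
```

Tracking `missed` alongside precision matters: a tool that reports fewer false positives by also dropping real issues has not improved anything.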

By treating AI‑SAST not as a replacement but as an augmentation of your existing security stack, you can achieve higher precision, faster releases, and stronger compliance—all while keeping vendor lock‑in at bay.

Conclusion: A Precise Path to Secure Delivery in 2025

Apiiro’s AI‑SAST embodies the evolution of static analysis from rule‑based pattern matching to semantic, exploitability‑focused reasoning. Its patented DCA engine delivers a tangible reduction in false positives, faster scan times, and seamless integration with modern CI/CD workflows—all while remaining agnostic to proprietary LLMs.

For security leaders and product managers, the case is straightforward: adopting AI‑SAST promises higher developer productivity, lower operational costs, and a stronger compliance posture. For developers, it means fewer noisy alerts and a quicker path to production. And for enterprises navigating 2025’s complex supply‑chain threat landscape, it offers a defensible tool that scales with their growth.

In the end, the real value of AI‑SAST lies not in its technology alone but in how it reshapes the security mindset—from chasing patterns to validating risks—thereby turning static analysis into a strategic asset for secure software delivery.

Related Articles

AI models are perfecting their hacking skills

AI‑Driven Red‑Team Automation: How Current LLMs Are Reshaping Cybersecurity in 2025 Over the past eighteen months, large language models (LLMs) that can execute code and orchestrate external tools...

2025’s AI Spending Frenzy Timeline: U.S. Forms $1 Billion ...

U.S. DOE–AMD $1 B Supercomputer Deal: A Strategic Playbook for Enterprise AI Leaders in 2025 The Department of Energy’s October 27, 2025 announcement of a $1 billion partnership with Advanced Micro...

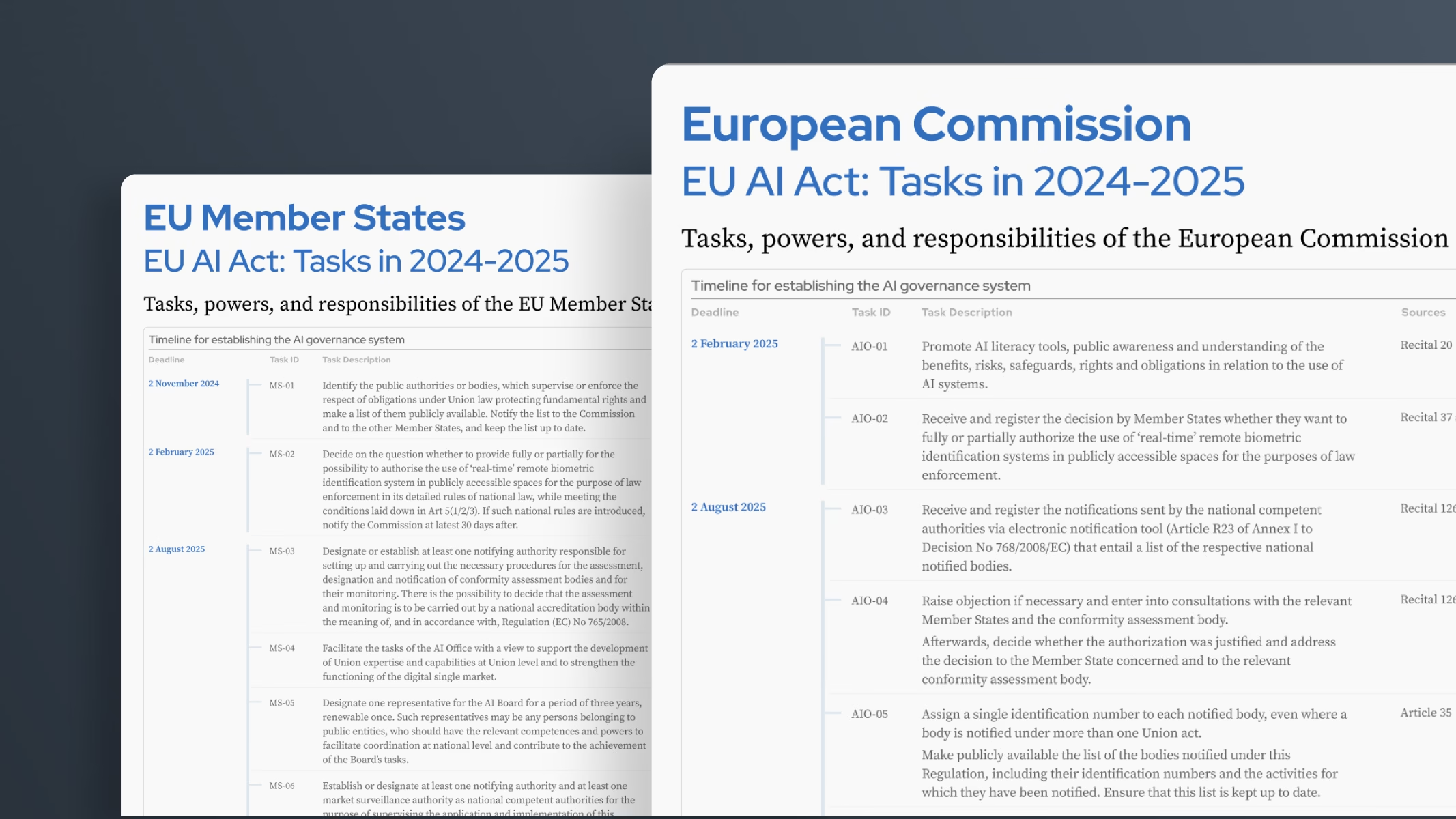

AI Act | Shaping Europe’s digital future - AI2Work Analysis

EU AI Act Enforcement in 2025: A Strategic Economic Blueprint for Global Tech Firms The European Union’s Artificial Intelligence Act (AI Act) has moved from policy paper to enforceable law, imposing...