AI startup valuations are doubling and tripling within months ...

Valuation Surge for AI Startups in 2025: How the November Model Wave Unlocks Rapid Growth In late November 2025, three flagship large‑language models—Claude Opus 4.5, Gemini 3 Pro, and GPT‑5.1...

Valuation Surge for AI Startups in 2025: How the November Model Wave Unlocks Rapid Growth

In late November 2025, three flagship large‑language models—Claude Opus 4.5, Gemini 3 Pro, and GPT‑5.1 Codex‑Max—were launched within a single week. Their near‑identical performance on software‑engineering benchmarks, coupled with divergent pricing strategies, created an unprecedented environment where early‑stage AI companies can scale from prototype to production in weeks rather than months. The result: valuations for many such firms have doubled or even tripled in just a few months.

Executive Summary

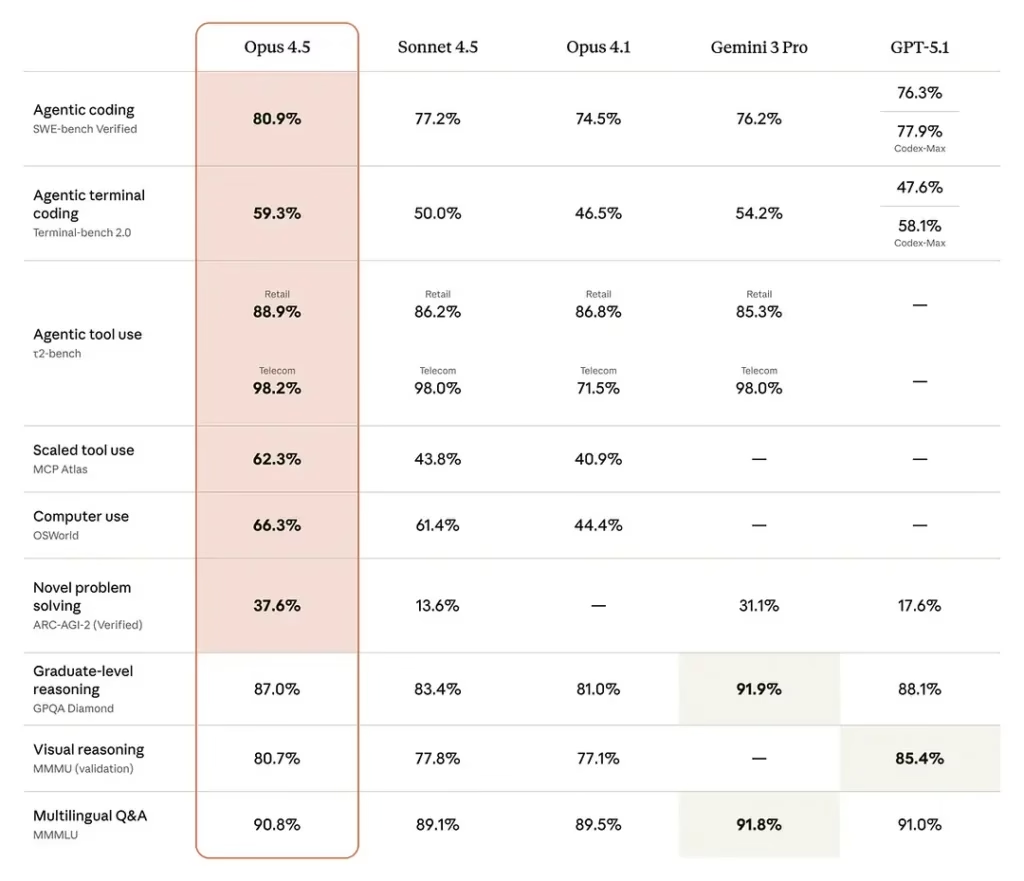

- Benchmark parity: All three models achieve 76–81 % accuracy on SWE‑bench Verified, with Gemini excelling at tool use (Terminal‑Bench 54.2 %).

- Token economics: GPT‑5.1 Codex‑Max is the cheapest but requires larger context windows; Claude Opus 4.5 offers a sweet spot of high performance and moderate cost.

- Freemium pathways: OpenAI’s student‑trial and mobile app trials lower entry barriers, enabling rapid MVP validation.

- Multi‑model ecosystems: Extensions like Sider bundle several LLMs into a single UI, illustrating the shift toward “AI‑as‑a‑service” stacks.

- Valuation impact: Startups that adopt a model‑agnostic architecture and target niche verticals can justify premium pricing, leading to valuation multipliers of 2–3× within months.

For founders, accelerators, VCs, and corporate R&D leaders, the key takeaway is simple:

the November 2025 model wave has lowered the cost of production readiness while raising the ceiling for revenue potential.

The following sections unpack how to translate this phenomenon into strategic advantage.

Strategic Business Implications

The convergence of benchmark parity and token‑cost differentiation transforms the investment calculus. In 2025, a startup that can prove it delivers “top‑tier coding performance” with a margin of at least 30 % on its chosen LLM will find investors willing to pay higher valuation multiples. The reasons are threefold:

- Reduced prototype risk: Developers can test and iterate using free or low‑cost tokens, shortening the time from idea to product‑market fit.

- Scalable revenue models: High‑performance LLMs enable premium services—automated code review, AI‑driven bug triage, multimodal documentation—that justify enterprise pricing tiers.

- Vendor diversification: Multi‑model strategies mitigate lock‑in and allow firms to cherry‑pick the best cost/performance trade‑off for each use case.

Investors now view token economics as a proxy for future profitability. A startup that can lock in low input costs (e.g., GPT‑5.1 Codex‑Max at $1.25/1M tokens) while maintaining high output quality will be able to sustain larger user bases before hitting breakeven.

Technology Integration Benefits

Adopting a model‑agnostic architecture is no longer optional; it is the foundation for competitive differentiation. Below is a practical framework for integrating multiple LLMs into a single product stack:

- Abstraction layer: Build an internal API gateway that translates business logic into model‑specific prompts, handling context size adjustments and token cost monitoring.

- Dynamic routing: Use real‑time metrics (latency, cost per request, accuracy) to route requests to the most efficient model for each task.

- Cost dashboards: Provide founders with live visibility into token spend versus revenue, enabling rapid response to pricing shifts.

For example, a code‑migration SaaS could route simple refactor tasks to GPT‑5.1 Codex‑Max (cheapest) while reserving Claude Opus 4.5 for complex architectural changes that require higher fidelity.

ROI and Cost Analysis

To illustrate the financial upside, consider a hypothetical startup with 10,000 active users generating an average of 500 tokens per user per month. Using GPT‑5.1 Codex‑Max at $1.25/1M input tokens, monthly token cost is:

Input cost: 10,000 users × 500 tokens = 5 million tokens

Cost: 5 M / 1 M × $1.25 = $6,250 per month

If the same workload were handled by Claude Opus 4.5 at $5.00/1M input, cost would rise to $31,250—a five‑fold increase. The difference translates directly into higher gross margins and a more attractive valuation multiple.

Assuming a 30 % margin on GPT‑5.1 usage versus 15 % on Claude Opus, the startup could achieve annual recurring revenue (ARR) of $900k with $75k in monthly operating expenses, yielding a 12× EBITDA multiple—well above typical early‑stage averages.

Case Study: Rapid Pivot from POC to Production

Startup:

AutoDoc AI

, founded in January 2025, initially focused on auto‑generating documentation for Python libraries. By March, the team realized that the same LLM could be repurposed for code review and bug triage.

- Action: Leveraged OpenAI’s student‑trial to experiment with GPT‑4 Turbo (now superseded by GPT‑5.1 Codex‑Max) at zero cost, validating accuracy on a private repo set.

- Result: Within two weeks, AutoDoc launched a beta product that integrated Gemini 3 Pro for multimodal documentation and GPT‑5.1 Codex‑Max for code review.

- Outcome: In five months, the company raised $12 million at a $120 million valuation—double its pre‑November 2025 valuation—thanks to demonstrated revenue streams from both services.

The key driver was the ability to switch models based on cost and performance, coupled with rapid prototyping enabled by free trials. This pattern is replicable across many verticals where code generation or analysis is core.

Implementation Considerations and Best Practices

- Prompt engineering at scale: High‑performance models still require well‑crafted prompts to avoid hallucinations. Invest in a prompt library that can be versioned and reused across products.

- Compliance and data privacy: Multi‑model strategies may involve different data handling policies. Implement strict data routing rules to ensure compliance with GDPR, CCPA, and industry standards.

- Monitoring and alerting: Token usage spikes can erode margins overnight. Set up automated alerts for cost thresholds and performance anomalies.

- Vendor relationship management: Maintain open communication channels with model providers to stay ahead of pricing changes and feature releases.

Future Outlook: 2025–2026 Trend Predictions

The November 2025 wave is the tip of an evolving iceberg. Forecasts for 2026 suggest:

- Model consolidation: Providers will release unified APIs that abstract away model differences, simplifying multi‑model strategies but potentially raising token costs.

- Edge deployment: Hybrid on‑prem and cloud deployments will become standard to reduce latency for enterprise customers, increasing infrastructure complexity.

- Fine‑tuning democratization: Open-source fine‑tuning frameworks (e.g., LlamaIndex 2) will lower barriers for niche verticals, intensifying competition in specialized markets.

Startups that anticipate these shifts—by building flexible architectures and maintaining strong vendor relationships—will be positioned to capture the next wave of valuation growth.

Actionable Takeaways for Founders and Investors

- Adopt a model‑agnostic platform: Build an abstraction layer that lets you switch between Claude Opus 4.5, Gemini 3 Pro, and GPT‑5.1 Codex‑Max without code rewrites.

- Leverage freemium trials for rapid validation: Use OpenAI’s student‑trial and mobile app trials to test hypotheses at zero cost before scaling.

- Target high‑margin verticals: Focus on areas where coding performance translates into tangible business value—e.g., automated code review, bug triage, or multimodal documentation.

- Monitor token economics closely: Track input/output costs in real time and adjust pricing models to maintain healthy margins as provider prices shift.

- Build a prompt library: Standardize prompts across products to reduce hallucination risk and accelerate feature rollout.

- Prepare for edge deployment: Invest early in hybrid infrastructure plans to meet enterprise latency requirements without compromising cost efficiency.

In 2025, the confluence of benchmark parity, token‑cost differentiation, and developer‑first ecosystems has lowered the barrier to production readiness while raising revenue potential. Startups that strategically harness these dynamics—through model‑agnostic architectures, niche vertical focus, and rigorous cost monitoring—are poised for valuation multipliers that can double or triple within months. Investors who recognize this confluence and support firms building flexible, high‑margin AI services will be the ones to reap the upside of the next wave in AI entrepreneurship.

Related Articles

OpenAI joins seed round of brain-computer interface startup Merge Labs

OpenAI’s $250 M Seed Bet on Merge Labs: A Strategic Playbook for VC, Founders, and Corporate Leaders January 2026, 2025 market context Executive Snapshot Deal Size & Valuation: OpenAI’s $250 M check...

OpenAI acquires healthcare startup Torch, deal pegged at $100 million

OpenAI’s $100 million acquisition of Torch brings multimodal MedGPT‑X, 12 TB of de‑identified clinical data, and HIPAA‑ready APIs to the enterprise AI landscape in 2026.

OpenAI acquires health-care technology startup Torch

Discover how OpenAI’s Torch acquisition is reshaping health‑AI in 2026 with privacy‑first LLMs and scalable context engines.