AI Safety and Alignment in 2025: Advancing Extended Reasoning and Transparency for Trustworthy AI

As AI models surge ahead in capability, 2025 marks a pivotal year where safety and alignment research has matured from static guardrails to dynamic, interpretable frameworks that balance intelligence with control. The emergence of

extended reasoning modes

and

visible thought processes

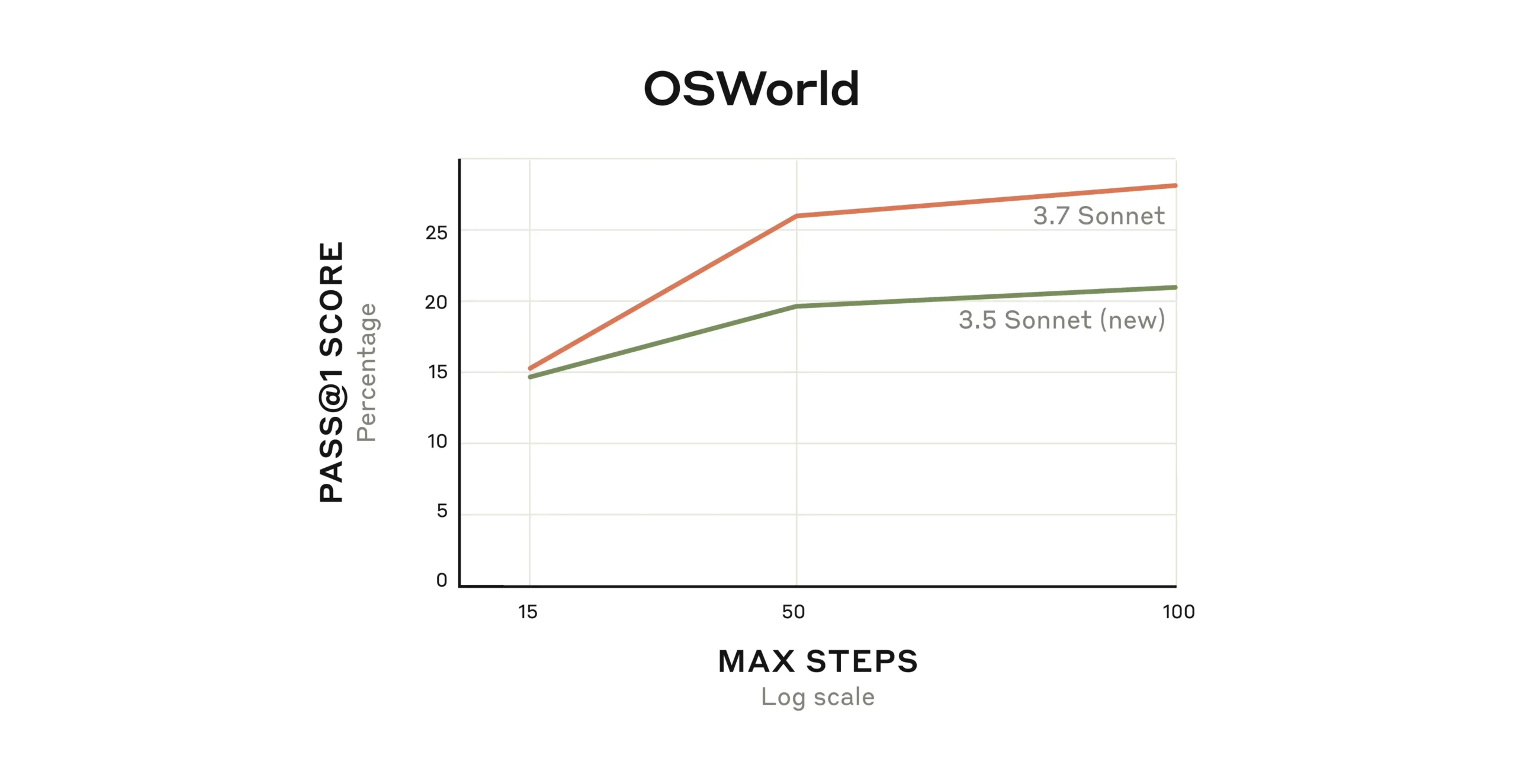

—exemplified by Anthropic’s Claude 3.7 Sonnet and OpenAI’s o1-preview—signals a fundamental shift toward AI systems that think deeper, explain themselves, and can be steered with unprecedented granularity.

For business leaders, policymakers, and AI professionals, these breakthroughs are not just technical milestones. They reshape how AI can be safely deployed in sensitive industries, how trust is built with users, and how enterprises can harness AI as a truly collaborative partner rather than an unpredictable black box. This analysis unpacks the key technology advances, strategic implications, and actionable insights to navigate the rapidly evolving AI safety landscape in 2025.

Extended Reasoning: AI’s New Cognitive Frontier

Traditional large language models (LLMs) often generate answers in a single pass, trading depth for speed. The new generation of AI safety research pivots to extended thinking, where models allocate a configurable “thinking budget” to simulate multiple reasoning paths internally before producing an output. Anthropic’s Claude 3.7 Sonnet introduced this capability early this year, allowing users and developers to control the depth and duration of reasoning dynamically.
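To make the idea of a configurable thinking budget concrete, the following is a minimal sketch of how a request with an explicit budget might be assembled. It assumes the field names of Anthropic’s Messages API for extended thinking (`thinking`, `budget_tokens`) and the model ID `claude-3-7-sonnet-20250219`; the helper `build_thinking_request` and the minimum-budget constant are illustrative and should be checked against current vendor documentation.

```python
from typing import Any

# Assumption: field names and the 1,024-token floor follow Anthropic's
# published extended thinking parameters; verify before relying on them.
MIN_BUDGET_TOKENS = 1024


def build_thinking_request(prompt: str, budget_tokens: int, max_tokens: int) -> dict[str, Any]:
    """Return keyword arguments for a Messages API call with a thinking budget.

    This helper is hypothetical; it only builds the payload and sends nothing.
    """
    if budget_tokens < MIN_BUDGET_TOKENS:
        raise ValueError(f"budget_tokens must be at least {MIN_BUDGET_TOKENS}")
    if budget_tokens >= max_tokens:
        # The budget is carved out of the overall output limit.
        raise ValueError("budget_tokens must be smaller than max_tokens")
    return {
        "model": "claude-3-7-sonnet-20250219",
        "max_tokens": max_tokens,
        "thinking": {"type": "enabled", "budget_tokens": budget_tokens},
        "messages": [{"role": "user", "content": prompt}],
    }


# A deeper budget for a hard problem; a smaller one would suit a quick lookup.
request = build_thinking_request(
    "Prove that 17 is prime.", budget_tokens=4000, max_tokens=8000
)
```

With the official `anthropic` Python SDK, a payload like this would typically be passed as `client.messages.create(**request)`. Raising or lowering `budget_tokens` is the lever that trades reasoning depth against latency and cost.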

OpenAI’s o1-preview, released in September 2024, had already taken a similar “think first” approach: the model works through an internal chain of thought before answering, trying different strategies and correcting its own mistakes along the way. This internal deliberation tends to produce more accurate, reliable outputs, especially on complex scientific, coding, or multi-step reasoning tasks.

From a technical standpoint, extended reasoning transforms AI from an instantaneous response engine into an adaptive cognitive agent. This shift addresses a crucial cause of misalignment: superficial or overconfident answers that gloss over uncertainty or nuanced constraints. By enabling controlled cognitive effort, models become better poised to handle ambiguity, self-correct, and flag uncertainty—qualities essential for high-stakes applications.

Visible Thought Processes: Opening the Black Box for Transparency and Trust

One of the thorniest challenges in AI safety is opacity. Without insight into how models arrive at conclusions, detecting deception, bias, or unsafe outputs is difficult. Anthropic’s innovation of visible thought processes exposes raw internal reasoning logs alongside final answers, providing a window into the model’s “mind.”

This transparency enables researchers and users to cross-verify internal coherence, spotting contradictions that often signal misalignment or potential manipulation. For example, if a model’s internal reasoning contradicts its external response, it could indicate deceptive behavior.
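The cross-check described above can be illustrated with a deliberately simple sketch. It assumes a decision task with two hypothetical labels (`approve`, `reject`) and compares the last label mentioned in the reasoning trace with the one in the final answer. Real consistency checks would need far more robust methods; this only shows the shape of the idea.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical label set for an illustrative approve/reject task.
LABELS = ("approve", "reject")
LABEL_PATTERN = re.compile(r"\b(" + "|".join(LABELS) + r")\b")


@dataclass
class ModelOutput:
    reasoning: str  # the visible reasoning trace
    answer: str     # the final, user-facing answer


def last_label(text: str) -> Optional[str]:
    """Return the last decision label mentioned in the text, if any."""
    matches = LABEL_PATTERN.findall(text.lower())
    return matches[-1] if matches else None


def is_consistent(output: ModelOutput) -> bool:
    """False only when reasoning and answer end on different labels."""
    reasoning_label = last_label(output.reasoning)
    answer_label = last_label(output.answer)
    if reasoning_label is None or answer_label is None:
        return True  # nothing to compare, so no contradiction is detected
    return reasoning_label == answer_label


suspicious = ModelOutput(
    reasoning="Income is below the threshold, so I should reject.",
    answer="Decision: approve",
)
aligned = ModelOutput(
    reasoning="All documents check out. I will approve.",
    answer="Decision: approve",
)
flagged = [o for o in (suspicious, aligned) if not is_consistent(o)]
```

Here `flagged` contains only the first output, which would then be routed to a human reviewer or a red-team queue.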

Visible thought processes are already proving invaluable in alignment science. They serve as diagnostic tools for red teams and developers to fine-tune model behavior and build automated adversarial testing. Importantly, this transparency is not just for experts; it empowers enterprise users to gain confidence by understanding AI decision-making, fostering safer adoption in regulated sectors.

Multimodal and Context-Aware AI: Enhancing Safety through Richer Understanding

Beyond reasoning and transparency, 2025’s AI safety landscape is shaped by multimodal models like OpenAI’s GPT-4o. Combining text, voice, and image inputs with an extended context window, these models can interpret and respond to complex, real-world scenarios more safely and accurately.

For example, in education, GPT-4o’s ability to “listen, see, and write” enables it to act as a versatile teaching assistant that adapts to learner progress while allowing teachers final oversight. This multimodal, context-aware approach helps prevent common AI pitfalls such as misunderstanding nuanced user intent or generating inappropriate content.

Integrating these capabilities with alignment features creates AI tools that respect domain constraints and user intent more reliably. This is critical for sectors like healthcare, finance, and education, where errors or misinterpretations can cause serious harm.

Strategic Business Implications: Safety as a Market Differentiator in 2025

In 2025, AI safety and alignment are no longer back-end considerations but front-and-center competitive advantages. Enterprises and AI providers recognize that beyond raw performance, trustworthy and controllable AI is what drives market adoption and regulatory compliance.

- Responsible Innovation Frameworks: Leading AI firms like OpenAI and Anthropic are embedding safety features architecturally—such as “safe completions” and reasoning budgets—signaling a shift from reactive patchwork to proactive design.

- Trust Building in Enterprise Adoption: Features like visible thought processes and extended thinking modes provide customers with tangible controls and transparency, essential for deploying AI in sensitive environments.

- Education and Developer Market Growth: Multimodal, context-aware AI assistants and agentic coding tools (e.g., Anthropic’s Claude Code) empower safer automation and collaboration, expanding AI’s role in professional workflows.

These trends point to an AI market where safety and alignment are critical buying criteria, reshaping vendor strategies and investment priorities.

Balancing Technical Complexity with Practical Deployment Challenges

While extended reasoning and transparency offer compelling benefits, they introduce new technical and operational trade-offs. Increasing the reasoning depth can raise latency and energy consumption, posing challenges for real-time applications and cost-sensitive deployments.

Moreover, scaling these alignment mechanisms to future, larger models or eventual AGI systems remains an open research question. The complexity of continuous internal verification and reasoning budgeting is likely to grow steeply with model size and task diversity.

Business leaders must therefore approach deployment with a nuanced understanding of these trade-offs. Selecting AI solutions that allow flexible tuning of reasoning depth and transparency can optimize performance, safety, and cost based on use case requirements.
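A back-of-the-envelope calculation can make this trade-off tangible. The sketch below assumes that reasoning tokens are billed and generated like ordinary output tokens and that the full budget is consumed, so the figures are upper bounds. The price and throughput values are placeholders, not vendor quotes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    usd_per_million_output_tokens: float  # placeholder price
    output_tokens_per_second: float       # placeholder generation speed


def estimate(thinking_tokens: int, answer_tokens: int, pricing: Pricing) -> tuple[float, float]:
    """Return (cost in USD, latency in seconds) for one response.

    Assumes thinking tokens cost and stream the same as answer tokens.
    """
    total_tokens = thinking_tokens + answer_tokens
    cost = total_tokens * pricing.usd_per_million_output_tokens / 1_000_000
    latency = total_tokens / pricing.output_tokens_per_second
    return cost, latency


# Illustrative numbers only.
pricing = Pricing(usd_per_million_output_tokens=15.0, output_tokens_per_second=50.0)

for budget in (0, 2_000, 16_000):
    cost, latency = estimate(budget, answer_tokens=500, pricing=pricing)
    print(f"budget={budget:>6} tokens  cost<=${cost:.4f}  latency<={latency:.0f}s")
```

Under these assumptions, both cost and latency scale linearly with the thinking budget, which is why a large budget may suit offline analysis while a small or zero budget suits interactive use.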

Emerging Regulatory and Policy Considerations for Introspective AI

With AI systems now capable of introspection and exposing internal reasoning states, regulatory frameworks face new frontiers. Policies will need to address questions such as:

- How to audit and certify AI reasoning transparency?

- What standards govern the acceptable depth of AI reasoning in safety-critical domains?

- How to protect intellectual property while ensuring interpretability?

- What mechanisms ensure AI systems’ self-detection and correction of alignment issues in real time?

Proactive engagement between AI developers, regulators, and industry stakeholders will be essential to shape governance that encourages innovation while safeguarding public interest.

Actionable Insights and Strategic Recommendations for AI Adoption in 2025

For enterprises and policymakers navigating the evolving AI safety landscape, the following strategic actions are critical:

- Prioritize AI models with configurable reasoning depth and transparency features to balance accuracy, safety, and operational efficiency.

- Incorporate visible thought processes into AI governance and auditing workflows to detect misalignment risks early and maintain user trust.

- Leverage multimodal, context-aware AI assistants to enhance domain-specific safety, especially in education, healthcare, and finance.

- Invest in training and cultural adoption of human-in-the-loop principles to ensure AI augments rather than replaces critical human judgment.

- Engage with regulatory bodies to help shape emerging standards around AI interpretability and alignment mechanisms.

- Monitor advances in AI self-alignment research as potential game changers for autonomous safety monitoring.

By adopting these measures, organizations can harness the benefits of advanced AI while mitigating the risks inherent in rapidly evolving systems.
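As one possible way to act on the recommendation to bring visible thought processes into auditing workflows, the sketch below writes each response as a JSON audit record that stores the reasoning trace with a SHA-256 digest, so later reviewers can detect whether a stored trace was altered. The record fields and the `audit_record` helper are assumptions made for illustration, not an established standard.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(request_id: str, reasoning: str, answer: str) -> str:
    """Serialize one model response as a single JSON line for an audit log.

    The field names are hypothetical; adapt them to local governance needs.
    """
    record = {
        "request_id": request_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "reasoning": reasoning,
        # Digest of the trace, usable later as a tamper check.
        "reasoning_sha256": hashlib.sha256(reasoning.encode("utf-8")).hexdigest(),
        "answer": answer,
    }
    return json.dumps(record, sort_keys=True)


def trace_is_intact(line: str) -> bool:
    """Recompute the digest of a stored record and compare it."""
    record = json.loads(line)
    digest = hashlib.sha256(record["reasoning"].encode("utf-8")).hexdigest()
    return digest == record["reasoning_sha256"]


line = audit_record("req-001", "Checked policy section 4; no conflict.", "Approved.")
```

Appending such lines to a write-once log gives auditors a simple trail linking each answer to the reasoning that produced it.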

Looking Ahead: The Road to Responsible AGI in 2025 and Beyond

The trajectory of AI safety and alignment research in 2025 underscores a growing consensus: incremental, transparent, and controllable AI development is the sustainable path forward to AGI. The industry’s dual focus on extended reasoning and interpretability is not just about technology but about shaping a future where AI systems are accountable, safe, and aligned with human values.

As models continue to grow in complexity, the interplay between technical innovation, business strategy, and regulatory frameworks will intensify. The companies leading in embedding safety-by-design will define market leadership, while those neglecting alignment risk costly setbacks and regulatory hurdles.

For AI professionals and decision-makers, staying abreast of these advances and embedding them into procurement, development, and governance practices is no longer optional but imperative for competitive advantage and societal trust.
