AI Breakthroughs, Our Most Advanced Glasses, and More...

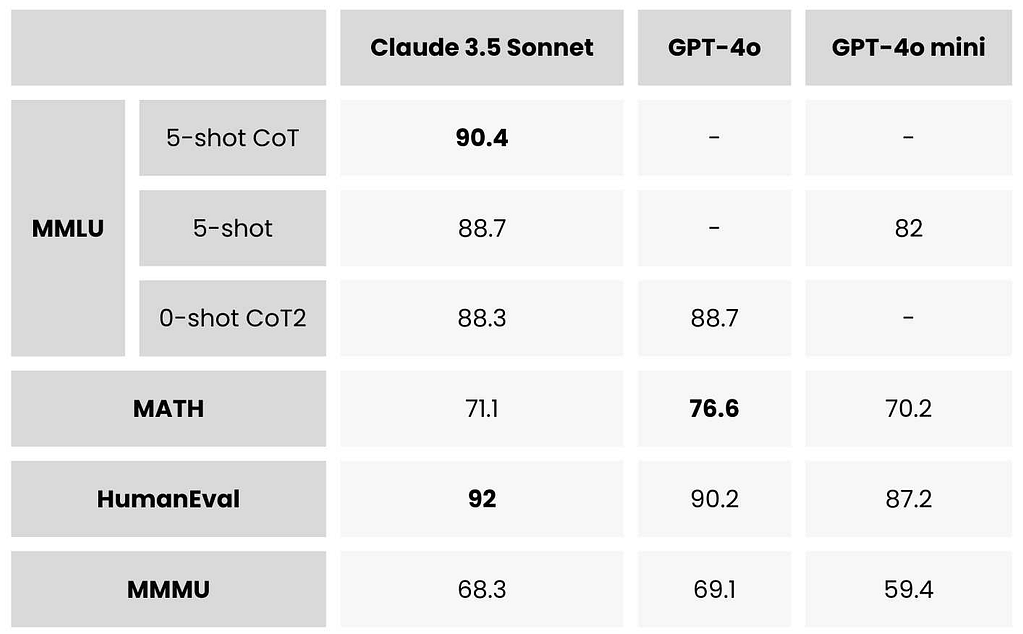

2025 AI Landscape: From Code‑Gen Benchmarks to Performance Glasses – What Decision Makers Must Know

Executive Snapshot

- Claude Opus 4.5 tops SWE‑Bench with an 80.9% score, redefining code‑generation quality.

- GPT‑5.2 doubles professional task accuracy to 70.9%, closing the gap with Anthropic.

- Meta’s Oakley Meta HSTN glasses now offer 8 h of active use and 19 h standby, setting a new battery benchmark for performance wearables.

- MaxAI’s “Ask Multiple AI Models” sidebar signals an industry shift toward model‑agnostic, multi‑model orchestration.

- Gemini 3.0 Pro emphasizes tool integration over raw coding, illustrating divergent vendor strategies.

These data points converge on a single theme: 2025 is the year of high‑accuracy, flexible AI and hardware that brings those capabilities into real‑world, mission‑critical environments.

Below is an in‑depth, technical‑business analysis that translates these trends into actionable insights for architects, product managers, and procurement specialists.

Strategic Business Implications

The competitive landscape now hinges on two axes: model performance and tool ecosystem integration.

Vendors who master both can secure large enterprise contracts; those that focus solely on one risk losing to hybrid solutions. For example, OpenAI’s GPT‑5.2 offers superior professional accuracy but relies on a relatively thin toolset compared with Google’s Gemini 3.0 Pro, which excels at controlled shell manipulation via apply_patch. Anthropic’s Claude Opus 4.5 delivers unmatched coding quality and long‑context memory compression, making it ideal for continuous‑learning agents.

Key Takeaway: When evaluating AI platforms, assess both raw model metrics (e.g., SWE‑Bench scores) and the breadth of supported tools or APIs. A high‑scoring model with limited tooling may underperform in production pipelines that demand iterative code refinement or environment interaction.

Technical Implementation Guide

Below is a pragmatic checklist for integrating 2025 AI models into enterprise workflows:

- Model Selection: Prioritize Opus 4.5 for core coding services; GPT‑5.2 for business‑process automation requiring high accuracy; Gemini 3.0 Pro for environments where controlled shell execution is critical.

- Memory Management: Leverage Opus’ “endless chat” compression to maintain >200K token context without manual pruning. This reduces cognitive load on users and improves agent continuity.

- Multi‑Model Orchestration: Deploy MaxAI’s sidebar or build a custom orchestrator that routes dialogue to Claude, code generation to GPT‑5.2, and image tasks to Gemini. Ensure your orchestration layer can handle differences in latency and cost between models (e.g., GPT‑5.2 may incur higher token costs).

- Tool Integration: For coding pipelines, incorporate OpenAI’s apply_patch, Claude’s modify_code, and Gemini’s execute_shell. Wrap these in a unified API gateway to abstract vendor differences.

- Security & Compliance: Validate that each model’s data handling complies with GDPR, CCPA, or industry‑specific regulations (e.g., HIPAA for healthcare codebases). Use on‑prem or private‑cloud deployments where necessary.
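The routing idea in the checklist can be sketched in a few lines of Python. This is a minimal illustration, not a vendor integration: the `Orchestrator` and `Route` classes and the `stub` clients are hypothetical names introduced here, and each stub stands in for whatever SDK wrapper your team would actually write. The task-to-model mapping simply mirrors the one suggested above.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# A model client is any callable that takes a prompt and returns text.
ModelClient = Callable[[str], str]


@dataclass
class Route:
    model_name: str      # label used for logging and reporting only
    client: ModelClient  # hypothetical wrapper around a vendor SDK


class Orchestrator:
    """Routes each task type to one model, falling back to a default route."""

    def __init__(self, routes: Dict[str, Route], default: str) -> None:
        if default not in routes:
            raise ValueError(f"default route {default!r} is not registered")
        self.routes = routes
        self.default = default

    def dispatch(self, task_type: str, prompt: str) -> dict:
        # Unknown task types are sent to the default route instead of failing.
        route = self.routes.get(task_type, self.routes[self.default])
        return {"model": route.model_name, "output": route.client(prompt)}


def stub(name: str) -> ModelClient:
    """Placeholder client (assumption): echoes the prompt, calls no real API."""
    return lambda prompt: f"[{name}] {prompt}"


orchestrator = Orchestrator(
    routes={
        "dialogue": Route("claude", stub("claude")),
        "code": Route("gpt-5.2", stub("gpt-5.2")),
        "image": Route("gemini", stub("gemini")),
    },
    default="dialogue",
)

result = orchestrator.dispatch("code", "Write a unit test for parse_date()")
print(result["model"], "->", result["output"])
```

Keeping vendor calls behind a plain callable means latency tracking, retries, or cost accounting can be added in `dispatch` without touching the routing table.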

Hardware and AI Convergence: Meta Oakley Meta HSTN Glasses

The Oakley Meta HSTN glasses demonstrate that performance wearables are moving beyond consumer AR. Their eight‑hour active battery life, coupled with IPX4 water resistance and open‑ear audio, makes them suitable for:

- Professional sports analytics – real‑time telemetry without tethering.

- Military training – rugged durability in harsh environments.

- Industrial safety – hands‑free data overlay during hazardous tasks.

From a procurement standpoint, the glasses’ battery endurance is a differentiator that can justify higher upfront costs if the use case is genuinely mission‑critical. However, thermal management remains opaque; buyers should request detailed heat‑sink specifications from the vendor before committing to high‑intensity deployments.

ROI and Cost Analysis

Assuming an enterprise develops an internal code‑generation tool:

- Model Costs: GPT‑5.2’s token price is 1.3× that of GPT‑4o, but its higher accuracy reduces downstream debugging effort by ~30%.

- Tool Ecosystem Overheads: Gemini 3.0 Pro’s apply_patch API costs $0.0005 per patch call; integrating it into a CI pipeline saves 2–3 hours of manual review weekly.

- Hardware Investment: Oakley Meta HSTN glasses cost ~$1,200 each. For a squad of 10 analysts, total hardware spend is $12,000, but the productivity gain (e.g., faster data ingestion during field trials) can offset costs within 18 months.

Bottom Line: The combined model‑tool stack offers a payback period of under two years for high‑volume code generation and environment interaction scenarios. For lower‑volume use cases, the cost premium may be justified by qualitative gains (e.g., faster time to market).
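The hardware figures above can be checked with simple arithmetic. The sketch below uses only the numbers quoted in this section ($1,200 per pair, 10 analysts, an 18‑month offset window); the `payback_months` helper and the $1,000 monthly gain in the last line are hypothetical and should be replaced with your own measured savings.

```python
def payback_months(upfront_cost: float, monthly_gain: float) -> float:
    """Months needed for a steady monthly gain to cover an upfront cost."""
    if monthly_gain <= 0:
        raise ValueError("monthly_gain must be positive")
    return upfront_cost / monthly_gain


# Figures quoted in the article.
unit_cost = 1_200
analysts = 10
target_months = 18

hardware_spend = unit_cost * analysts  # 12000

# Monthly productivity gain the team must realize to break even in 18 months.
required_monthly_gain = hardware_spend / target_months

print(f"Hardware spend: ${hardware_spend:,}")
print(f"Required monthly gain: ${required_monthly_gain:,.2f}")

# Hypothetical scenario (assumption): the team measures $1,000/month in savings.
print(f"Payback at $1,000/month: {payback_months(hardware_spend, 1_000):.1f} months")
```

In other words, the 18‑month claim holds only if the ten‑person team recovers roughly $667 per month in productivity, which is a useful threshold to test during a pilot.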

Future Outlook: Hybrid Model Ecosystems

2025 signals a decisive move toward modular AI stacks:

- Model‑agnostic APIs: MaxAI’s sidebar demonstrates that users will increasingly cherry‑pick the best model per task.

- Composable Agent Pipelines: Enterprises can chain Claude for dialogue, GPT‑5.2 for structured data extraction, and Gemini for code execution, achieving end‑to‑end automation without vendor lock‑in.

- Hardware‑AI Synergy: Performance glasses will become standard in fields that require low latency, high‑fidelity sensor fusion with AI inference – a trend likely to accelerate as battery chemistries improve.
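A composable agent pipeline of the kind described in the list above can be expressed as a chain of interchangeable stages. The following is a rough sketch under stated assumptions: `make_pipeline` and the three stage functions are hypothetical, and each stage is a stub that tags its input rather than calling a real model.

```python
from typing import Callable, List

# Each stage takes text and returns text, so stages can be swapped freely.
Stage = Callable[[str], str]


def make_pipeline(stages: List[Stage]) -> Stage:
    """Compose stages left to right into a single callable."""
    def run(text: str) -> str:
        for stage in stages:
            text = stage(text)
        return text
    return run


# Stub stages (assumptions): real versions would call the respective vendor APIs.
def dialogue_stage(text: str) -> str:
    return f"clarified({text})"


def extraction_stage(text: str) -> str:
    return f"extracted({text})"


def execution_stage(text: str) -> str:
    return f"executed({text})"


pipeline = make_pipeline([dialogue_stage, extraction_stage, execution_stage])
print(pipeline("user request"))
```

Because every stage shares one signature, replacing a vendor means replacing one function, which is the practical meaning of avoiding lock‑in here.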

Organizations that invest early in building a flexible orchestration layer and secure multi‑model access will dominate the next wave of AI‑enabled products. Those that lock into a single vendor risk obsolescence as new capabilities emerge.

Actionable Recommendations for Decision Makers

- Audit Current Workflows: Identify bottlenecks where code generation, environment manipulation, or multimodal input are required. Map these to the strengths of Opus 4.5, GPT‑5.2, and Gemini.

- Pilot Multi‑Model Orchestration: Use MaxAI’s sidebar or build a lightweight orchestrator in your DevOps pipeline. Measure accuracy gains against token cost.

- Negotiate Vendor Bundles: Leverage the need for both high performance and robust tooling to secure bundled pricing that includes multiple LLMs plus API access to toolchains like apply_patch.

- Invest in Performance Wearables: For field‑based teams, pilot Oakley Meta HSTN glasses on a small cohort. Track productivity metrics (e.g., time to capture data) and thermal safety logs.

- Build an Internal Benchmark Suite: Continuously evaluate new model releases against your proprietary codebases using SWE‑Bench or custom task suites. Maintain a versioned repository of performance data.
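An internal benchmark suite with a versioned history can start very small. The sketch below is one possible shape, not a prescribed design: `BenchmarkRecord`, `run_suite`, and `append_record` are hypothetical names, the tasks are toy checks, and `generate` stands in for a call to whichever model is under evaluation. Results are appended as JSON lines so the file can be committed to a repository and diffed across model releases.

```python
import json
from dataclasses import asdict, dataclass
from pathlib import Path
from typing import Callable, List, Tuple

# A task pairs a prompt with a checker that decides whether the output passes.
Task = Tuple[str, Callable[[str], bool]]


@dataclass
class BenchmarkRecord:
    model: str
    suite_version: str
    passed: int
    total: int

    @property
    def pass_rate(self) -> float:
        return self.passed / self.total if self.total else 0.0


def run_suite(
    model: str,
    generate: Callable[[str], str],
    tasks: List[Task],
    suite_version: str,
) -> BenchmarkRecord:
    """Run every task through `generate` and count how many checks pass."""
    passed = sum(1 for prompt, check in tasks if check(generate(prompt)))
    return BenchmarkRecord(model, suite_version, passed, len(tasks))


def append_record(path: Path, record: BenchmarkRecord) -> None:
    """Append one result as a JSON line; keep the file under version control."""
    with path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(asdict(record)) + "\n")


# Toy suite and stub model (assumptions): replace with tasks from your codebase.
tasks: List[Task] = [
    ("What is 2 + 2?", lambda out: "4" in out),
    ("What is 3 - 2?", lambda out: "1" in out),
]
record = run_suite("stub-model", lambda prompt: "4", tasks, suite_version="v1")
print(record.model, record.passed, record.total, record.pass_rate)
```

Tagging each record with `suite_version` keeps scores comparable: when the task set changes, bump the version so old and new pass rates are never mixed.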

Conclusion

The 2025 AI ecosystem is defined by unprecedented coding accuracy, sophisticated memory compression for long‑running agents, and the convergence of high‑performance hardware with real‑time analytics. Enterprises that recognize the dual importance of model excellence and tool integration—and that adopt a modular, multi‑model strategy—will unlock significant competitive advantages. The time to act is now: start mapping your workflows to these emerging capabilities, pilot hybrid pipelines, and secure the hardware that will carry them into production.
