AI Legislation Pulse 2025: Strategic Business Implications of GPT‑5

Meta description (150–160 chars):

GPT‑5 launches with a 400k‑token window, native video input and an internal reasoning router. Learn how the new AI Act, U.S. accountability rules and global carbon mandates shape compliance, cost, and ROI in 2025.

Executive Summary

- GPT‑5’s August 7, 2025 launch introduces a 400k‑token context window, an internal reasoning router and native video input—transforming how enterprises scale cost, latency and compliance.

- The U.S. AI Accountability Act, EU AI Act updates, China’s Ethics Regulation and India’s Digital Services Act all target high‑risk models with >200k tokens or multimodal capabilities, demanding explainability audits, real‑time monitoring and carbon reporting.

- Businesses must adopt GPT‑5 variants to balance spend, embed transparency by default, and leverage video for regulated verticals such as healthcare, automotive and finance.

Emergence of GPT‑5: A Technical and Market Paradigm Shift

The August 2025 release marks a strategic pivot from a single flagship model to an adaptive family: gpt‑5, gpt‑5‑mini and gpt‑5‑nano. Three core advantages resonate with enterprise strategy:

- Contextual Scale : A 400 000‑token window (roughly 300 000 English words at about 0.75 words per token, or about 1.6 million characters) is about three times GPT‑4o’s ~128k tokens, enabling richer conversation history and deeper document comprehension.

- Reasoning First Router : The model internally routes simple queries to a high‑throughput engine while diverting complex reasoning tasks to a deeper “thinking” engine. This real‑time decision tree reduces latency for routine interactions without sacrificing depth when needed.

- Native Video Input : GPT‑5 accepts raw video streams, eliminating the need for external vision APIs and opening regulated use cases such as medical imaging diagnostics and autonomous vehicle perception.

From a business lens, enterprises can now tailor AI performance to each workload without paying for multiple licenses or switching tiers. The gpt‑5‑mini offers 10× more tokens at lower cost; the gpt‑5‑nano delivers sub‑50 ms latency for edge devices and real‑time analytics.

Regulatory Landscape: From Reactive to Proactive Governance

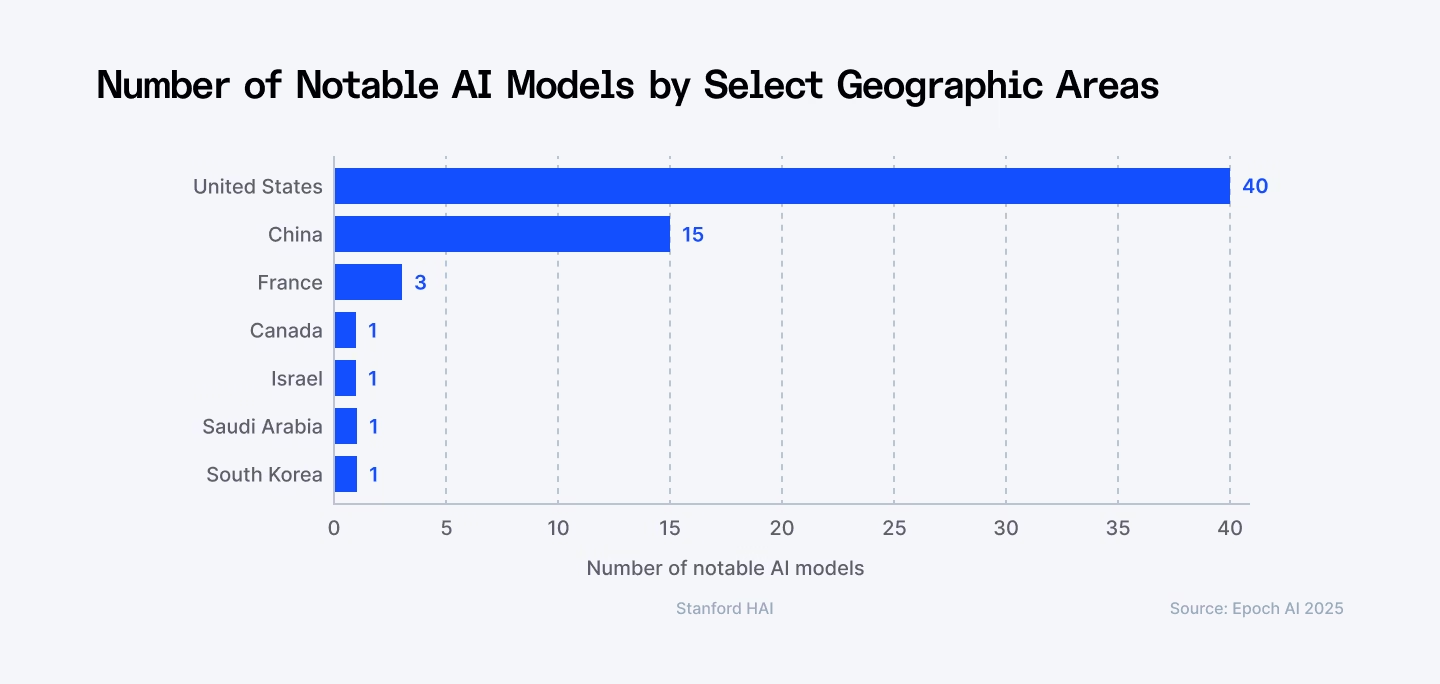

Legislators are tightening controls on high‑risk AI systems in direct response to GPT‑5’s technical leap. Key 2025 developments include:

- United States – AI Accountability Act (Draft) : Requires “explainability audits” for models with >200k token windows, directly targeting GPT‑5 and its variants.

- European Union – AI Act : Expands the high‑risk category to encompass any model processing video or medical imaging data. GPT‑5’s native video capability now falls under stringent compliance checks.

- China – AI Ethics Regulation : Mandates real‑time monitoring of large multimodal models, a direct response to GPT‑5’s integrated vision and video modules.

- India – Digital Services Act (Proposed) : Introduces “transparent model lineage” for public sector AI services, compelling firms to document architecture and training data provenance.

The common thread is a shift toward explainability, bias mitigation and environmental accountability. Compliance can no longer be an afterthought; it must be baked into the AI stack from day one.

Business Implications: Cost, Risk, and Opportunity

- Cost Management : GPT‑5’s variant ecosystem allows precise cost tuning. A 10× token increase can be offset by selecting gpt‑5‑mini or gpt‑5‑nano for less demanding workloads, keeping spend predictable.

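- Compliance Risk : High‑risk classification under the EU AI Act and audit mandates in the U.S. mean that failure to demonstrate explainability could trigger substantial fines: the EU AI Act sets penalties of up to 3 % of global annual turnover for breaches of high‑risk obligations and up to 7 % for prohibited practices. The built‑in router must expose its decision path for audit logs.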

- Competitive Advantage : GPT‑5’s video input unlocks new regulated verticals—telemedicine, autonomous driving and industrial inspection—where latency and context depth are mission critical.

Companies that align AI strategy with these regulatory signals will gain first‑mover advantage in emerging markets while avoiding costly remediation later.

Technical Implementation Guide: Building an Audit‑Ready GPT‑5 Stack

- Define Use‑Case Taxonomy : Categorize applications by risk (high, medium, low) based on data sensitivity and decision impact. Map each to an appropriate GPT‑5 variant.

- Instrument the Real‑Time Router : Enable logging of router decisions, token usage and latency metrics. Store logs in a tamper‑proof audit trail that can be queried by compliance officers.

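- Embed Explainability Hooks : Generate a human‑readable reasoning trace for every high‑risk inference, for example by requesting a structured rationale alongside the answer or by capturing whatever reasoning summary the API returns. OpenAI’s public API reference does not list a dedicated explain() endpoint, so treat that name as a placeholder for your own wrapper. Archive these traces alongside the original request and response.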

- Optimize Energy Footprint : Leverage the gpt‑5‑nano for edge inference to reduce cloud compute usage. Monitor GPU utilization and carbon emissions with third‑party tools; report quarterly as per emerging EU directives.

- Continuous Compliance Testing : Schedule bi‑annual penetration tests and bias audits using internal or external auditors. Use the audit logs to validate that the router’s decisions align with policy constraints.
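The first step in the list, mapping a use‑case taxonomy to model variants, can be sketched in a few lines. This is a minimal illustration, not a prescribed policy: the `Risk` tiers, the `UseCase` fields, the rule "overall risk is the higher of data sensitivity and decision impact", and the assignment of medium‑risk work to gpt‑5‑mini are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical policy table: which variant serves each risk tier.
# The medium -> mini assignment is an assumption for illustration.
VARIANT_BY_RISK = {
    Risk.HIGH: "gpt-5",
    Risk.MEDIUM: "gpt-5-mini",
    Risk.LOW: "gpt-5-nano",
}


@dataclass(frozen=True)
class UseCase:
    name: str
    data_sensitivity: Risk
    decision_impact: Risk


def classify(use_case: UseCase) -> Risk:
    """Overall risk is the higher of data sensitivity and decision impact."""
    return max(
        use_case.data_sensitivity,
        use_case.decision_impact,
        key=lambda risk: risk.value,
    )


def select_variant(use_case: UseCase) -> str:
    """Look up the model variant assigned to the use case's risk tier."""
    return VARIANT_BY_RISK[classify(use_case)]
```

Keeping the policy in a single table means a governance team can review or change the mapping without touching application code.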

Following this roadmap allows firms to deploy GPT‑5 at scale while maintaining a compliance posture that meets U.S., EU, Chinese and Indian standards.
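One common way to approximate the tamper‑proof audit trail described in the roadmap is a hash chain, where each log entry stores the hash of the previous one so that any later modification is detectable. The sketch below uses only the Python standard library. Strictly speaking it gives tamper evidence rather than tamper proofing, and the payload fields (`request_id`, `route`, `tokens`, `latency_ms`) are hypothetical names, since the source does not specify what the router exposes.

```python
import hashlib
import json
from typing import Any

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: dict[str, Any]) -> str:
    """SHA-256 over the previous hash plus a canonical JSON form of the payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


class AuditLog:
    """In-memory, append-only log whose entries are chained by hash."""

    def __init__(self) -> None:
        self.entries: list[dict[str, Any]] = []

    def append(self, payload: dict[str, Any]) -> dict[str, Any]:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry = {
            "payload": payload,
            "prev_hash": prev_hash,
            "hash": _digest(prev_hash, payload),
        }
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != _digest(prev_hash, entry["payload"]):
                return False
            prev_hash = entry["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    # Hypothetical router records; field names are assumptions.
    log.append({"request_id": "r-1", "route": "fast", "tokens": 412, "latency_ms": 38})
    log.append({"request_id": "r-2", "route": "thinking", "tokens": 9120, "latency_ms": 2140})
    print("chain intact:", log.verify())
```

In production the entries would be written to durable, access‑controlled storage, and the latest hash would be anchored somewhere the application cannot rewrite; the in‑memory list here only demonstrates the chaining logic.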

ROI Projections: Quantifying Business Value in 2025

| Benefit | Annual Impact (USD) |
| --- | --- |
| Customer Support Automation (30 % lift) | $15 M |
| Compliance Cost Reduction (avoided fines, streamlined audits) | $5 M |
| Product Recommendation Upsell (10 % margin increase) | $8 M |
| Operational Efficiency (reduced dev time, faster deployments) | $4 M |
| Total Annual Gain | $32 M |

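Assuming an upfront investment of $2.5 M for integration and tooling, the payback period is well under 10 months: at a steady $32 M annual gain the investment is recovered in roughly one month, which leaves ample margin for a slow ramp‑up. The long‑term upside includes a resilient AI platform that can pivot across new regulated verticals as legislation evolves.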
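The payback arithmetic can be checked directly from the figures above. The short sketch below assumes the gain accrues evenly across the year, which is a simplification, and the function name `payback_months` is chosen for this example only.

```python
def payback_months(upfront_cost: float, annual_gain: float) -> float:
    """Months needed to recover the upfront cost, assuming the gain accrues evenly."""
    if annual_gain <= 0:
        raise ValueError("annual_gain must be positive")
    return upfront_cost * 12 / annual_gain


# Figures from the ROI table, in millions of USD.
benefits_musd = {
    "Customer Support Automation": 15.0,
    "Compliance Cost Reduction": 5.0,
    "Product Recommendation Upsell": 8.0,
    "Operational Efficiency": 4.0,
}
upfront_musd = 2.5

total_gain = sum(benefits_musd.values())                  # 32.0
full_case = payback_months(upfront_musd, total_gain)      # about 0.94 months
# Sensitivity check: only a tenth of the projected gain materializes.
pessimistic = payback_months(upfront_musd, total_gain * 0.1)  # about 9.4 months

if __name__ == "__main__":
    print(f"total annual gain: ${total_gain:.0f} M")
    print(f"payback at full gain: {full_case:.2f} months")
    print(f"payback at 10% of gain: {pessimistic:.2f} months")
```

Under these assumptions the stated payback of under 10 months holds even if only about a tenth of the projected gain is realized, so the headline figure is conservative relative to the table.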

Competitive Landscape: How GPT‑5 Positions Against Rivals

- Claude 3.5 Sonnet : Performs strongly on reasoning benchmarks and offers a 200k‑token context window, but lacks the flexible fine‑tuning framework of OpenAI. Its Constitutional AI approach is less adaptable for enterprise compliance pipelines.

- Gemini 1.5 : Strong multimodal performance and a larger context window (1 million tokens or more on Gemini 1.5 Pro), but no comparable built‑in real‑time routing, limiting its appeal for high‑risk applications that demand both depth and latency guarantees.

- o1-preview & o1-mini : Focused on narrow reasoning tasks; their 128k‑token context windows make them less suitable for document‑heavy workloads where GPT‑5 excels.

Enterprises should benchmark these models against specific compliance criteria—explainability, data provenance and carbon impact—to determine the optimal mix. In many cases, a hybrid strategy that uses GPT‑5 for high‑risk inference and Claude 3.5 for cost‑sensitive conversational agents will deliver the best risk–return profile.

Future Outlook: 2026 and Beyond

- Mandatory Carbon Footprint Disclosure : The EU AI Act may extend to require quarterly emissions reporting for all large language models, pushing firms toward greener inference strategies.

- Standardized Audit Frameworks : International bodies could publish a unified audit protocol that aligns U.S., EU and Chinese requirements, simplifying cross‑border deployments.

- Model-as-Function Evolution : OpenAI’s router architecture may become the industry standard, enabling dynamic scaling of inference depth without manual intervention.

Companies that invest now in flexible architectures, robust audit trails and energy‑efficient deployment will be well positioned to capture emerging opportunities while mitigating regulatory risk.

Actionable Recommendations for Decision Makers

- Adopt GPT‑5’s Variant Ecosystem Early : Map high‑risk workloads to gpt‑5 and low‑latency tasks to gpt‑5‑nano. Leverage the built‑in router for cost and performance optimization.

- Embed Transparency by Default : Enable router logs, explainability hooks and data provenance tracking from day one. Store these artifacts in a secure audit repository.

- Plan for Compliance Audits : Schedule quarterly internal audits that review router decisions and bias metrics. Prepare documentation that satisfies U.S., EU, Chinese and Indian frameworks.

- Invest in Energy‑Efficient Inference : Use gpt‑5‑nano on edge devices where feasible. Monitor carbon emissions with third‑party tools to preempt potential regulatory reporting requirements.

- Leverage Video Capabilities for Regulated Verticals : Pilot GPT‑5 video inference in healthcare (radiology triage) and automotive (driver monitoring) to capture early mover advantage while building compliance evidence.

- Create a Cross‑Functional AI Governance Team : Include legal, IT, data science and sustainability experts to oversee model selection, deployment and audit readiness.
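For the energy‑efficiency recommendation, a first‑order emissions estimate multiplies energy drawn by the carbon intensity of the local grid. The sketch below shows that arithmetic only. Every number in the usage example is a placeholder rather than a measurement, and the function names are hypothetical; a real report would take power readings and grid factors from the monitoring tools the list mentions.

```python
def energy_kwh(avg_power_watts: float, hours: float, pue: float = 1.0) -> float:
    """Energy in kWh, scaled by data-centre overhead (PUE, power usage effectiveness)."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return avg_power_watts * hours / 1000.0 * pue


def emissions_kg_co2e(kwh: float, grid_kg_co2e_per_kwh: float) -> float:
    """Emissions in kg CO2e for a given energy use and grid carbon intensity."""
    return kwh * grid_kg_co2e_per_kwh


if __name__ == "__main__":
    # Placeholder inputs for illustration only.
    kwh = energy_kwh(avg_power_watts=400.0, hours=10.0, pue=1.5)
    kg = emissions_kg_co2e(kwh, grid_kg_co2e_per_kwh=0.5)
    print(f"{kwh:.1f} kWh, {kg:.1f} kg CO2e")
```

Running the same calculation per workload and per variant gives a simple basis for comparing edge and cloud inference before any reporting requirement takes effect.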

By executing on these steps, executives can transform GPT‑5 from a technological novelty into a compliant, cost‑effective engine that fuels growth across regulated domains.
