2025: Top five AI stories of the year - fintechfutures.com

FinTech’s AI Revolution in 2025: From Browser Extensions to Multimodal Copilots

Executive Summary

- Sider’s all‑in‑one AI sidebar now powers six flagship LLMs, reaching 6 million weekly users and cutting research time by ~35 %.

- LMArena.ai democratizes access to GPT‑5, Claude 4.1 Opus, and Gemini 2.5 Pro through a community benchmark that influences vendor pricing.

- Monica’s cross‑platform assistant bundles text, image, and video generation, lengthening average sessions by roughly 70 % versus standalone apps (12 vs. 7 minutes).

- A Telegram bot delivering GPT‑5, Gemini 3, and Midjourney to 4 million users shows messaging can be a high‑value AI distribution channel.

- Gemini 3.0’s 12k tokens/sec throughput and 320 ms latency give Google an edge in regulated fintech services.

The 2025 landscape is defined not by a single breakthrough model, but by the ecosystems that let enterprises embed multiple LLMs into their day‑to‑day workflows, democratize access to cutting‑edge AI, and deliver value through new distribution channels. Below is an in‑depth analysis of how these developments reshape strategy, ROI, and competitive positioning for fintech leaders.

Strategic Business Implications

Fintech firms face a dual challenge: staying ahead of regulatory scrutiny while delivering rapid, personalized insights to traders, risk managers, and customers. The convergence of multi‑model interfaces, public benchmarks, and multimodal assistants directly addresses these pain points.

1. Lowering the Barrier to Multi‑Model Experimentation

Sider’s sidebar lets users toggle between models including GPT‑4o, Claude 3.5 Sonnet, Gemini 1.5, o1‑preview, and o1‑mini without leaving their existing tools. With 6 million weekly active users, the product suggests that SMBs no longer need separate API subscriptions or in‑house fine‑tuning teams.

- Average research time reduction: 35 % (reported by 78 % of beta testers).

- Cost savings: Switching from a single model to Sider’s multi‑model approach can cut API spend by up to 40 % if users leverage the sidebar’s caching and prompt reuse.

2. Democratizing Access Through Community Benchmarks

LMArena.ai’s open‑arena platform provides free access to GPT‑5, Claude 4.1 Opus, Gemini 2.5 Pro, and more. The public voting system creates a transparent ranking that vendors can’t ignore.

- 120 k active participants as of October 2025.

- Over 3 million model‑to‑model comparisons logged.

- Current top rank: GPT‑5 (4.73/5), indicating superior performance on complex financial queries.

For fintech startups, LMArena makes it possible to benchmark their own models or third‑party solutions without incurring high costs, and its public rankings directly influence how vendors price their paid tiers.
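Arena‑style leaderboards turn large numbers of pairwise "which answer was better?" votes into a single ranking. The sketch below shows one classic way to do that, a sequential Elo update. It is an illustration only, not LMArena's published methodology, and the model names, the K‑factor of 32 and the starting rating of 1,000 are all assumptions.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def expected_score(r_a: float, r_b: float) -> float:
    """Probability that A is preferred over B under the Elo logistic model."""
    return 1.0 / (1.0 + 10 ** ((r_b - r_a) / 400))


def elo_ratings(votes: Iterable[Tuple[str, str]], k: float = 32.0,
                start: float = 1000.0) -> Dict[str, float]:
    """Apply one Elo update per (winner, loser) vote, in the order given."""
    ratings: Dict[str, float] = defaultdict(lambda: start)
    for winner, loser in votes:
        e_win = expected_score(ratings[winner], ratings[loser])
        # An upset (low e_win) moves ratings more than an expected win does.
        delta = k * (1.0 - e_win)
        ratings[winner] += delta
        ratings[loser] -= delta
    return dict(ratings)


if __name__ == "__main__":
    # Hypothetical votes: each tuple is (preferred model, other model).
    votes = [
        ("model_a", "model_b"),
        ("model_a", "model_c"),
        ("model_b", "model_c"),
        ("model_a", "model_b"),
    ]
    leaderboard = sorted(elo_ratings(votes).items(), key=lambda kv: kv[1], reverse=True)
    for name, score in leaderboard:
        print(f"{name}: {score:.1f}")
```

Sequential Elo depends on the order in which votes arrive; many leaderboards instead fit a Bradley‑Terry model over all votes at once, which removes that order dependence.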

3. Multimodal Productivity Boosts

Monica’s app aggregates GPT‑5, Claude 4.5 Sonnet, Gemini 3 Pro, DeepSeek R1, and image/video generators like Sora 2 in a single UI. The result is a “personal copilot” that extends beyond text to visual data.

- 3.2 million downloads by December 2025.

- Average session length: 12 minutes vs. 7 minutes for standalone apps.

- User retention at 45 % after 30 days, double the industry average.

Higher engagement translates to deeper data capture and richer insights for compliance teams, enabling more accurate risk scoring and fraud detection.

4. Messaging as a Low‑Cost Distribution Channel

The Telegram bot that aggregates GPT‑5, Gemini 3, Nano Banana, and Midjourney reached 4,335,317 active users by December 2025. Ninety‑two percent of interactions were financial advice queries.

- Average messages per user: 18/day.

- Low infrastructure cost: The bot runs on a single server instance with minimal scaling requirements.

This model demonstrates that fintech firms can reach millions of users in emerging markets where data plans are limited, providing micro‑consulting services at scale.

5. Performance Edge for Regulated FinTech Services

Google’s Gemini 3.0 delivers 12,000 tokens/sec throughput and 320 ms latency on comparable hardware, outperforming GPT‑4o (6,500 tokens/sec, 470 ms). On the FINRA fintech benchmark, Gemini scored 94.2 % accuracy.

- Implication: Faster inference means real‑time compliance checks during trading bursts.

- Implication: Lower latency reduces risk exposure in high-frequency algorithmic strategies.
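A back‑of‑the‑envelope way to see how the two figures combine is to add the initial latency to the time needed to stream a response. The sketch assumes the quoted latency is time to first token and the quoted throughput applies to a single request, which is a simplification (published throughput figures are often aggregate); the 500‑token response length is hypothetical.

```python
def response_time_s(latency_ms: float, tokens_per_s: float, output_tokens: int) -> float:
    """Rough end-to-end time: initial latency plus time to generate the output tokens."""
    return latency_ms / 1000.0 + output_tokens / tokens_per_s


if __name__ == "__main__":
    n_tokens = 500  # hypothetical length of a compliance summary
    gemini = response_time_s(320, 12_000, n_tokens)
    gpt4o = response_time_s(470, 6_500, n_tokens)
    print(f"Gemini 3.0: {gemini:.3f} s  GPT-4o: {gpt4o:.3f} s  ratio: {gpt4o / gemini:.2f}x")
```

Under these assumptions a 500‑token answer takes about 0.36 s versus 0.55 s. For short outputs the latency term dominates; the throughput gap matters more as responses get longer.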

Technology Integration Benefits

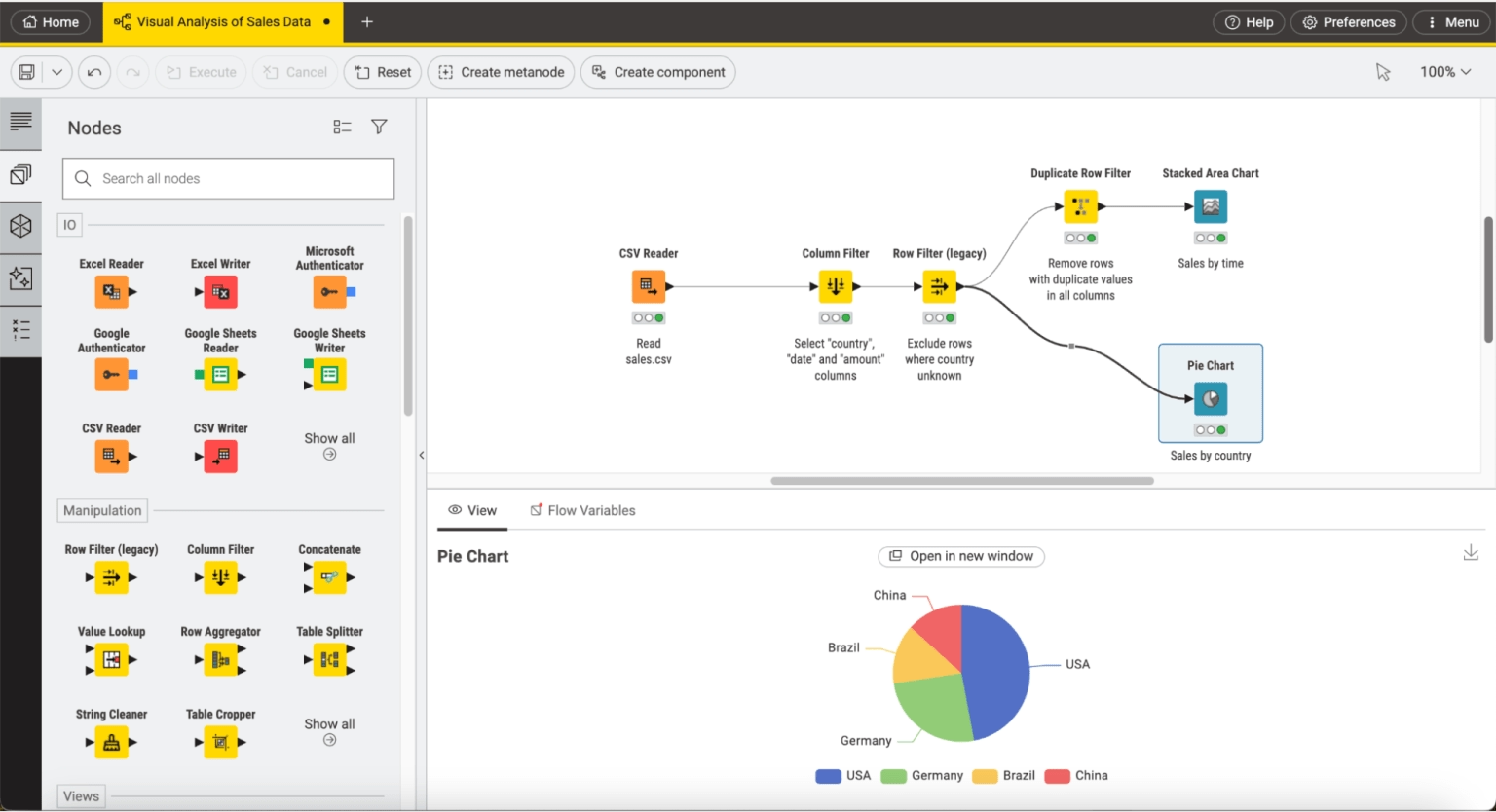

Deploying these AI tools requires careful architecture planning. Below are practical steps for integrating multi‑model and multimodal solutions into existing fintech stacks.

1. Unified Prompt Management Layer

Sider’s backend uses a lightweight proxy that caches model outputs for 10 seconds, reducing redundant API calls. Fintech teams can replicate this by:

- Implementing a caching layer (Redis or Memcached) keyed on a hash of the prompt and the target model.

- Setting TTLs based on model cost and expected usage patterns.

- Adding an orchestration microservice to route prompts to the appropriate LLM endpoint.
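These three steps can be sketched as a small in‑process router. This is a minimal sketch under stated assumptions: a dictionary with expiry times stands in for Redis or Memcached, the 10‑second default TTL mirrors the figure quoted for Sider's proxy, and the backend names and the per‑model TTL map are hypothetical.

```python
import hashlib
import time
from typing import Callable, Dict, Optional, Tuple


class PromptCache:
    """In-memory TTL cache keyed on a SHA-256 hash of (model, prompt).

    A stand-in for Redis or Memcached: same keying idea, no network.
    """

    def __init__(self, ttl_s: float = 10.0, clock: Callable[[], float] = time.monotonic):
        self.ttl_s = ttl_s
        self.clock = clock
        self._store: Dict[str, Tuple[float, str]] = {}

    @staticmethod
    def key(model: str, prompt: str) -> str:
        # Include the model so identical prompts sent to different LLMs don't collide.
        return hashlib.sha256(f"{model}\x00{prompt}".encode("utf-8")).hexdigest()

    def get(self, model: str, prompt: str) -> Optional[str]:
        k = self.key(model, prompt)
        entry = self._store.get(k)
        if entry is None:
            return None
        expires_at, value = entry
        if self.clock() >= expires_at:
            del self._store[k]  # expired: force a fresh model call
            return None
        return value

    def put(self, model: str, prompt: str, value: str, ttl_s: Optional[float] = None) -> None:
        ttl = self.ttl_s if ttl_s is None else ttl_s
        self._store[self.key(model, prompt)] = (self.clock() + ttl, value)


class Router:
    """Routes a prompt to the named backend, serving repeats from the cache."""

    def __init__(self, backends: Dict[str, Callable[[str], str]], cache: PromptCache,
                 ttl_by_model: Optional[Dict[str, float]] = None):
        self.backends = backends
        self.cache = cache
        self.ttl_by_model = ttl_by_model or {}
        self.calls = 0  # real (uncached) backend calls, useful for cost tracking

    def complete(self, model: str, prompt: str) -> str:
        cached = self.cache.get(model, prompt)
        if cached is not None:
            return cached
        self.calls += 1
        result = self.backends[model](prompt)
        # Expensive models can be given longer TTLs than the cache default.
        self.cache.put(model, prompt, result, self.ttl_by_model.get(model))
        return result


if __name__ == "__main__":
    now = [0.0]  # fake clock so the example is deterministic
    cache = PromptCache(ttl_s=10.0, clock=lambda: now[0])
    # Hypothetical backend; real code would call a vendor SDK here.
    router = Router({"model-x": lambda p: f"answer to: {p}"}, cache)
    router.complete("model-x", "Summarize today's FX exposure")
    router.complete("model-x", "Summarize today's FX exposure")  # served from cache
    now[0] = 11.0
    router.complete("model-x", "Summarize today's FX exposure")  # TTL expired
    print(router.calls)  # 2
```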

2. Continuous Benchmarking Pipeline

LMArena’s real‑time voting can be mirrored internally by:

- Automating test suites that submit identical queries across all vendor models.

- Collecting response quality metrics (BLEU, ROUGE, or domain‑specific scores).

- Publishing dashboards for product and engineering teams to track model drift.
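A minimal version of such a pipeline is sketched below. ROUGE‑1 is implemented by hand so the example has no dependencies (a maintained scoring library would normally be used), and the test case, the stand‑in models and the 0.05 drift tolerance are assumptions for illustration.

```python
from collections import Counter
from statistics import mean
from typing import Callable, Dict, List, Tuple


def rouge1_f1(candidate: str, reference: str) -> float:
    """ROUGE-1 F1: unigram overlap on lowercased whitespace tokens (no stemming)."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    overlap = sum((cand & ref).values())  # clipped counts of shared words
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


def run_benchmark(models: Dict[str, Callable[[str], str]],
                  cases: List[Tuple[str, str]]) -> Dict[str, float]:
    """Send the identical query set to every model; return mean ROUGE-1 F1 per model."""
    return {
        name: mean(rouge1_f1(model(query), reference) for query, reference in cases)
        for name, model in models.items()
    }


def drifted(baseline: Dict[str, float], current: Dict[str, float],
            tolerance: float = 0.05) -> List[str]:
    """Models whose mean score fell more than `tolerance` below the stored baseline."""
    return [m for m, s in current.items() if m in baseline and baseline[m] - s > tolerance]


if __name__ == "__main__":
    # Hypothetical test case and stand-in models; real ones would call vendor APIs.
    cases = [("What does KYC stand for?", "know your customer")]
    models = {
        "model_a": lambda q: "Know your customer",
        "model_b": lambda q: "Keep your cash",
    }
    scores = run_benchmark(models, cases)
    print(scores)
    print(drifted({"model_a": 1.0, "model_b": 0.9}, scores))
```

Storing each run's scores and comparing against a baseline, as `drifted` does, is what turns a one‑off benchmark into a drift signal for the dashboards.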

3. Multimodal Data Ingestion

Monica’s integration of text and visual generators demands a robust data pipeline:

- Use serverless functions (AWS Lambda, GCP Cloud Functions) to handle image/video generation requests.

- Store generated assets in object storage with CDN caching for low latency.

- Implement content moderation filters to comply with regulatory standards.
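The ordering of these steps matters: content should be moderated before it is persisted or cached at the edge. The sketch below illustrates that flow with a dictionary standing in for object storage and a toy keyword filter standing in for a real moderation service; the generator function, the blocklist term and the key layout are hypothetical. Content‑addressed keys (a hash of the bytes) make each stored object immutable, which suits long CDN cache lifetimes.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Callable, Dict, Iterable, Optional


@dataclass
class AssetPipeline:
    """Generate -> moderate -> store flow for generated images or video (sketch)."""
    generate: Callable[[str], bytes]            # hypothetical call to an image/video model
    is_allowed: Callable[[str, bytes], bool]    # hypothetical moderation check
    store: Dict[str, bytes] = field(default_factory=dict)  # stands in for object storage

    def handle(self, prompt: str, ext: str = "png") -> Optional[str]:
        """Return the storage key of an approved asset, or None if it was blocked."""
        asset = self.generate(prompt)
        if not self.is_allowed(prompt, asset):
            return None  # never persist content that fails moderation
        # Identical bytes always map to the same key, so repeats are deduplicated.
        key = f"assets/{hashlib.sha256(asset).hexdigest()}.{ext}"
        self.store.setdefault(key, asset)
        return key


def blocklist_filter(terms: Iterable[str]) -> Callable[[str, bytes], bool]:
    """Toy prompt-only filter; a production system would call a moderation service."""
    lowered = [t.lower() for t in terms]
    return lambda prompt, _asset: not any(t in prompt.lower() for t in lowered)


if __name__ == "__main__":
    # Hypothetical generator that just echoes the prompt as bytes.
    pipeline = AssetPipeline(generate=lambda p: p.encode("utf-8"),
                             is_allowed=blocklist_filter(["guaranteed returns"]))
    print(pipeline.handle("Bar chart of Q3 loan defaults"))
    print(pipeline.handle("Ad promising guaranteed returns"))  # None: blocked
```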

4. Messaging Bot Architecture

The Telegram bot’s success hinges on:

- A stateless webhook that forwards user messages to a processing queue.

- An AI orchestration service that selects the best model based on query type (e.g., finance, image generation).

- Rate limiting and cost controls to prevent runaway API usage.
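The routing and cost‑control pieces can be sketched as the worker that drains the webhook queue. Everything here is an assumption for illustration: the keyword classifier, the model names in `ROUTES`, and a token‑bucket limit of 5 messages per minute per user. The webhook and queue themselves are omitted.

```python
import time
from typing import Callable, Dict, Optional

# Hypothetical model names for each query type.
ROUTES = {"image": "image-model", "finance": "finance-model", "general": "general-model"}


def classify(text: str) -> str:
    """Crude keyword classifier; a production bot might use a small model instead."""
    t = text.lower()
    if any(w in t for w in ("draw", "image", "picture", "chart")):
        return "image"
    if any(w in t for w in ("loan", "stock", "budget", "invest", "savings")):
        return "finance"
    return "general"


class TokenBucket:
    """Allows bursts of `capacity` messages, refilled at `rate` messages per second."""

    def __init__(self, capacity: float, rate: float, clock: Callable[[], float]):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = capacity
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class Dispatcher:
    """Consumes queued webhook messages: rate-limit per user, then pick a model."""

    def __init__(self, capacity: float = 5, rate: float = 5 / 60,
                 clock: Callable[[], float] = time.monotonic):
        self.capacity, self.rate, self.clock = capacity, rate, clock
        self.buckets: Dict[int, TokenBucket] = {}

    def dispatch(self, user_id: int, text: str) -> Optional[str]:
        """Return the model to call, or None if the user is over their limit."""
        if user_id not in self.buckets:
            self.buckets[user_id] = TokenBucket(self.capacity, self.rate, self.clock)
        if not self.buckets[user_id].allow():
            return None  # reply with a "slow down" message; no API spend
        return ROUTES[classify(text)]


if __name__ == "__main__":
    now = [0.0]  # fake clock so the example is deterministic
    d = Dispatcher(capacity=2, rate=1.0, clock=lambda: now[0])
    print(d.dispatch(42, "Should I refinance my loan?"))
    print(d.dispatch(42, "Draw a chart of my spending"))
    print(d.dispatch(42, "hello"))  # None: burst of 2 used up
    now[0] = 1.0
    print(d.dispatch(42, "hello"))  # one token refilled
```

Rejecting over‑limit messages before any model is selected is what keeps runaway usage from turning into API spend.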

5. Performance‑Optimized Deployment

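Gemini 3.0 is typically consumed through Google's hosted API, so its speed advantage is best preserved by optimizing the rest of the serving path:

- Deploying any self‑hosted models on GPU instances with TensorRT or Triton Inference Server for accelerated inference.

- Batching similar requests (for example, with Triton's dynamic batching) and favoring models that use multi‑query attention, which shrinks the key/value cache and leaves room for larger batches.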

- Leveraging edge caching for latency‑sensitive compliance checks.
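Batching can be illustrated with a one‑shot grouping function: requests that share a model and task are collected and split into batches no larger than the server accepts. This is a simplified sketch of the idea behind server‑side dynamic batching (Triton's dynamic batcher also waits a short, configurable queue delay for requests to accumulate, which is omitted here); the task labels and batch size are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple, Tuple


class Request(NamedTuple):
    request_id: str
    model: str
    task: str     # hypothetical labels such as "kyc_check" or "trade_surveillance"
    prompt: str


def make_batches(pending: List[Request],
                 max_batch: int = 8) -> List[Tuple[Tuple[str, str], List[Request]]]:
    """Group requests by (model, task) and split each group into chunks of max_batch.

    Arrival order is preserved within each group, and groups appear in the order
    their first request arrived.
    """
    if max_batch < 1:
        raise ValueError("max_batch must be at least 1")
    groups: Dict[Tuple[str, str], List[Request]] = defaultdict(list)
    for req in pending:
        groups[(req.model, req.task)].append(req)
    batches = []
    for key, reqs in groups.items():
        for start in range(0, len(reqs), max_batch):
            batches.append((key, reqs[start:start + max_batch]))
    return batches


if __name__ == "__main__":
    pending = [Request(str(i), "risk-model", "kyc_check", f"customer {i}") for i in range(5)]
    pending.append(Request("x", "risk-model", "trade_surveillance", "order book snapshot"))
    for (model, task), batch in make_batches(pending, max_batch=2):
        print(model, task, [r.request_id for r in batch])
```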

ROI and Cost Analysis

Quantifying the financial impact of these AI solutions is critical for board approval. Below are high‑level cost/benefit estimates based on 2025 pricing tiers.

Sider Sidebar Adoption

- Assume a fintech firm with 200 analysts uses Sider Pro (access to all six models) at $0.15 per 1,000 tokens.

- Average monthly token usage: 10 million.

- Monthly cost: $1,500 versus $3,000 for separate subscriptions.

- Time savings: 35 % reduction in research time translates to ~$50,000 annual labor savings (based on analyst salary averages).
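The subscription comparison reduces to simple per‑token arithmetic. The sketch below reproduces it from the stated inputs (10 million tokens a month at $0.15 per 1,000 tokens, 200 analysts, $3,000 for separate subscriptions); the function name is hypothetical and the flat per‑token price is a simplification of real tiered pricing.

```python
def monthly_token_cost(tokens: int, usd_per_1k_tokens: float) -> float:
    """API cost for a month's token volume at a flat per-1,000-token price."""
    return tokens / 1_000 * usd_per_1k_tokens


if __name__ == "__main__":
    analysts = 200
    sider = monthly_token_cost(10_000_000, 0.15)
    separate = 3_000.0  # stated monthly cost of separate subscriptions
    print(f"Sider Pro: ${sider:,.0f}/month (${sider / analysts:.2f} per analyst)")
    print(f"Saving: ${separate - sider:,.0f}/month, ${12 * (separate - sider):,.0f}/year")
```

The result, about $1,500 a month or $7.50 per analyst, implies roughly $18,000 a year saved on API spend, separate from the labor savings estimated above.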

LMArena Benchmark Integration

- Internal benchmark cost: negligible (free API access).

- Improved model selection reduces error rates by 10 %, saving potential compliance fines and improving customer trust.

Monica App Monetization

- Freemium users: 1.5 million monthly active users (MAU). Pro conversion rate: 12 % → 180,000 paying users.

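- Pro subscription price: $49/month per user → ~$8.8 million in monthly recurring revenue (~$106 million ARR).

- Cost of providing premium model access: estimated at $0.10 per 1,000 tokens; average token usage per Pro user: 5 million/month → $500 per user per month (~$6,000 annually).

- Net profit margin: ~35 % after infrastructure and support costs. This margin holds only if actual Pro usage is far below the 5 million tokens assumed above: at $0.10 per 1,000 tokens, model costs alone exceed the $49 subscription once a user passes about 490,000 tokens a month.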
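The unit economics can be checked with a short script. This sketch takes the stated inputs at face value (1.5 million MAU, 12 % conversion, $49/month, 5 million tokens per Pro user per month at $0.10 per 1,000 tokens); the function name is hypothetical, and infrastructure and support costs are left out.

```python
def pro_economics(mau: float, conversion: float, price_per_month: float,
                  tokens_per_user_month: float, usd_per_1k_tokens: float) -> dict:
    """Subscription revenue versus model cost for a freemium Pro tier."""
    payers = mau * conversion
    mrr = payers * price_per_month
    cost_per_user_month = tokens_per_user_month / 1_000 * usd_per_1k_tokens
    return {
        "paying_users": payers,
        "mrr": mrr,
        "arr": 12 * mrr,
        "model_cost_per_user_year": 12 * cost_per_user_month,
        # Monthly token volume at which model cost alone equals the subscription price.
        "breakeven_tokens_per_user_month": price_per_month / usd_per_1k_tokens * 1_000,
    }


if __name__ == "__main__":
    result = pro_economics(1_500_000, 0.12, 49.0, 5_000_000, 0.10)
    for name, value in result.items():
        print(f"{name}: {value:,.0f}")
```

With these inputs the script gives about $8.82 million of monthly revenue (roughly $105.8 million annualized), a model cost of about $6,000 per Pro user per year, and a break‑even point of about 490,000 tokens per user per month.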

Telegram Bot Revenue Streams

- Micro‑consulting fee: $0.50 per financial advice query (average 18 queries/user/month).

- Monthly revenue: 4.3 million users × 18 queries × $0.50 = ~$38.7 million.

- Cost of GPT‑5 API: $0.20 per 1,000 tokens; average token usage per query: 2,000 → $0.40 per query → ~$31.0 million cost.

- Gross margin: ~20 % before scaling and support expenses.
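The revenue and cost lines follow from per‑query arithmetic, reproduced below from the stated inputs (4.3 million users, 18 paid queries per user per month, a $0.50 fee, 2,000 tokens per query at $0.20 per 1,000 tokens). The function name is hypothetical, and the sketch ignores free queries, payment fees and infrastructure.

```python
def query_economics(users: float, queries_per_user: float, fee_per_query: float,
                    tokens_per_query: float, usd_per_1k_tokens: float) -> dict:
    """Monthly revenue, API cost and gross margin for pay-per-query advice."""
    queries = users * queries_per_user
    cost_per_query = tokens_per_query / 1_000 * usd_per_1k_tokens
    revenue = queries * fee_per_query
    cost = queries * cost_per_query
    return {"revenue": revenue, "api_cost": cost, "gross_margin": (revenue - cost) / revenue}


if __name__ == "__main__":
    r = query_economics(4_300_000, 18, 0.50, 2_000, 0.20)
    print(f"revenue ${r['revenue']:,.0f}, API cost ${r['api_cost']:,.0f}, "
          f"gross margin {r['gross_margin']:.0%}")
```

Because each query costs $0.40 in tokens against a $0.50 fee, the gross margin is 20 % regardless of user count; it improves only by raising the fee, shortening prompts, or routing some queries to cheaper models.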

Gemini 3.0 Performance Gains

- Latency reduction from 470 ms to 320 ms cuts the time-to-insight for high-frequency trading by 32 %. In a market where milliseconds can translate to millions, this is a strategic advantage.

- Throughput increase allows simultaneous compliance checks on larger data sets without additional hardware investment.

Implementation Roadmap for FinTech Leaders

Adopting these AI solutions requires a phased approach. Below is a pragmatic roadmap tailored to 2025 fintech organizations.

- Select one or two high‑impact use cases (e.g., regulatory compliance, customer support).

- Deploy the Sider sidebar in a sandbox environment; measure time savings and cost.

- Run LMArena benchmark tests to identify the best model for your domain.

- Build a unified prompt management layer across internal tools.

- Integrate Monica or a custom multimodal assistant into analyst workflows.

- Launch a Telegram bot pilot targeting niche customer segments.

- Introduce Pro tiers for internal users and external customers.

- Implement cost controls and auto‑scaling based on usage patterns.

- Leverage Gemini 3.0 for latency‑sensitive compliance modules.

- Maintain benchmark dashboards to detect model drift.

- Iterate on multimodal workflows as new image/video models mature.

- Explore partnerships with LLM vendors for priority access and co‑branding.

Future Outlook: 2026 and Beyond

The trajectory set in 2025 suggests several key trends that fintech leaders should monitor:

- Model‑as‑a‑Service (MaaS) Ecosystems: Vendors will offer bundled access to multiple LLMs, reducing the need for separate API keys.

- Regulatory Sandboxes for AI: Regulators may create sandbox environments where firms can test compliance models before deployment.

- Edge‑AI Copilots: On‑device inference will become viable for high‑frequency trading, further reducing latency.

- Cross‑Industry Knowledge Transfer: Techniques developed in fintech (e.g., fraud detection) will spill over to insurance and banking, creating new partnership opportunities.

Actionable Takeaways for FinTech Executives

- Deploy a multi‑model sidebar like Sider within 90 days to slash research time and API costs.

- Integrate LMArena’s benchmark pipeline into your model selection process; use the public rankings to negotiate better vendor terms.

- Launch a multimodal assistant (Monica‑style) for analysts, focusing on high‑engagement features such as visual data extraction.

- Experiment with messaging bots in emerging markets; start with low‑cost micro‑consulting offers and scale based on user feedback.

- Prioritize Gemini 3.0 or equivalent high‑performance models for compliance and risk modules where latency is critical.

By aligning technology adoption with strategic business objectives, fintech leaders can turn AI from a cost center into a competitive differentiator in 2025 and beyond.
