State of AI 2025: McKinsey Report

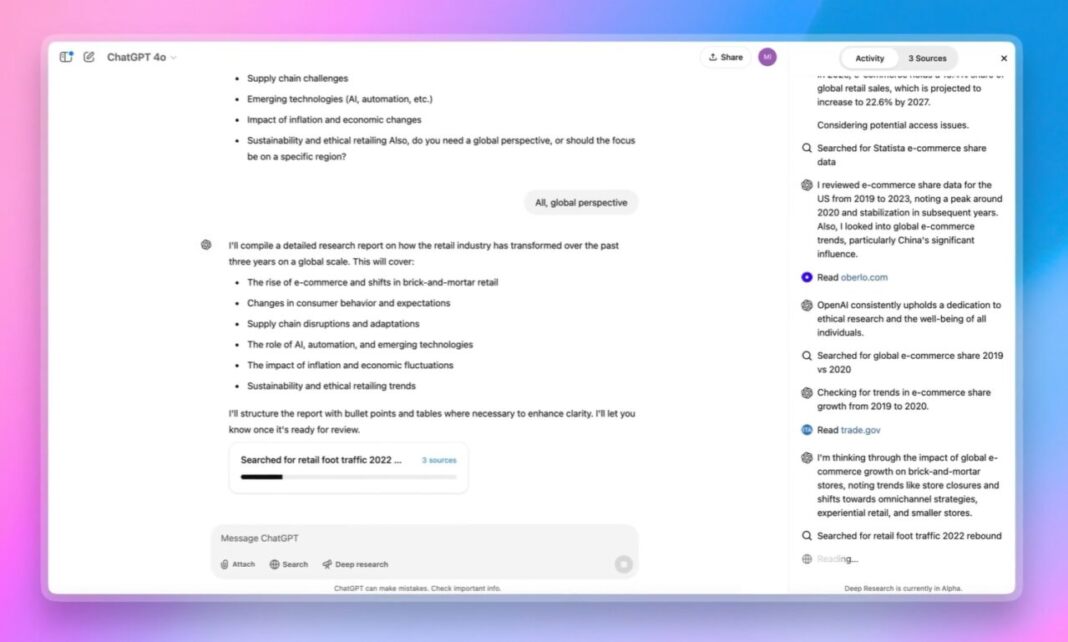

From Pilots to Enterprise‑Wide Orchestration: What 2025’s AI Landscape Means for Business Leaders

The past twelve months have turned the AI conversation from “what can we build?” into a disciplined enterprise agenda of execution, integration and scale.

A fresh McKinsey State of AI 2025 survey—backed by hard adoption metrics, cost data and talent trends—shows that while almost nine in ten firms now embed AI somewhere in their stack, only one in three have moved beyond pilots. The gap is no longer about technology availability; it’s about the foundations that enable sustainable value: unified data, mature MLOps, responsible governance and an agentic operating model.

Key Findings at a Glance

- 88 % of organizations use AI in at least one function.

- Only 33 % scale AI enterprise‑wide.

- Agentic systems are the next growth engine—62 % explore them, yet only 39 % see measurable profit gains.

- Model cost and hardware efficiency remain critical constraints; Gemini 1.5, Llama 3.2‑70B and o1‑preview illustrate the current spectrum of heavyweight versus lightweight inference.

- Data quality is the single most cited barrier to value realization.

- MLOps maturity and responsible AI practices differentiate high performers from laggards.

For executives, the lesson is simple: build data foundations first, embed MLOps rigor next, then deploy agentic workflows that can scale across functions while maintaining robust governance.

Why Most AI Adopters Still Struggle to Monetize AI

McKinsey’s survey reveals a stark disconnect between the prevalence of AI pilots and their financial impact. Three forces explain this:

- Data Silos & Poor Quality – Models that learn from fragmented, unclean data drift within weeks.

- Lack of Business‑First Objectives – Pilots often surface as technology demos rather than revenue or cost levers.

- Inadequate MLOps Practices – Continuous training, monitoring and rollback are rarely institutionalized beyond a single data science team.

Leaders should therefore set enterprise‑wide AI KPIs tied to financial targets (margin lift, cycle‑time reduction), create cross‑functional steering committees that include data stewards and compliance officers, and invest in a unified data platform that supports multimodal ingestion—text, audio, image—to unlock use cases such as predictive maintenance or personalized customer journeys.

Agentic AI: The New Productivity Engine

The McKinsey Five Fifty series identifies autonomous agents, software entities capable of orchestrating multi‑step tasks, as the dominant driver of transformation. Yet only 39 % report noticeable profit improvements from agent pilots, because many pilots remain feature demos, not fully integrated workflow engines.

High performers follow a phased approach:

- Stage 1: Low‑Risk Automation – Generating routine reports or auto‑responding to support tickets using GPT‑4o, Claude 3.5 Sonnet or Gemini 1.5 embeddings.

- Stage 2: End‑to‑End Process Orchestration – Integrating agents with ERP systems to approve purchase orders, route invoices for audit or manage inventory replenishment.

Senior leaders should invest early in agentic platforms but scale gradually, ensuring governance layers (audit trails, human oversight and bias mitigation) are baked in from day one.
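As a rough illustration of governance that is built in from the start, the sketch below shows a purchase‑order agent that records every decision in an audit trail and routes larger orders to a human reviewer. The class names, the `auto_approve_limit` threshold and the record fields are hypothetical; they are not taken from the McKinsey report or from any ERP product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class PurchaseOrder:
    po_id: str
    amount: float
    vendor: str


@dataclass
class AuditedApprovalAgent:
    # Hypothetical threshold: orders above it always go to a human.
    auto_approve_limit: float = 5_000.0
    audit_trail: list = field(default_factory=list)

    def decide(self, po: PurchaseOrder) -> str:
        """Return a decision and log it so it can be reviewed later."""
        if po.amount <= self.auto_approve_limit:
            decision = "auto_approved"
        else:
            decision = "needs_human_review"
        # Every decision is recorded, whether or not a human was involved.
        self.audit_trail.append(
            {
                "po_id": po.po_id,
                "vendor": po.vendor,
                "amount": po.amount,
                "decision": decision,
                "timestamp": datetime.now(timezone.utc).isoformat(),
            }
        )
        return decision
```

A real deployment would call an ERP API and a policy engine instead of a fixed threshold; the point of the sketch is only that logging and the human hand‑off sit in the same code path as the decision.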

MLOps Maturity: The Scalability Lever

McKinsey’s 2025 report repeatedly emphasizes that robust MLOps practices are prerequisites for scaling AI. Firms with mature pipelines can:

- Reduce model drift by retraining every 30–90 days using automated data refreshes.

- Deploy continuous monitoring dashboards that flag performance degradation before it impacts customers.

- Implement rollback mechanisms to revert to previous versions within minutes, minimizing downtime.

Operationally, this means building an MLOps stack that integrates with existing CI/CD workflows. Cloud providers now offer end‑to‑end services, such as Amazon SageMaker Pipelines, Azure Machine Learning and Google Vertex AI, that can reduce infrastructure overhead and accelerate time‑to‑value.
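The three practices listed above (scheduled retraining, monitoring and rollback) can be sketched in a few lines. This is a minimal, framework‑free illustration: the `ModelRegistry` class, the accuracy‑drop `tolerance` and the 90‑day default (the upper end of the 30–90 day range above) are assumptions made for the example, not the API of any cloud service named in this article.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class ModelRegistry:
    """Hypothetical in-memory registry; versions are stored oldest first."""
    versions: list = field(default_factory=list)

    def promote(self, version: str) -> None:
        self.versions.append(version)

    @property
    def live(self) -> str:
        return self.versions[-1]

    def rollback(self) -> str:
        if len(self.versions) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.versions.pop()
        return self.live


def retrain_due(last_trained: date, today: date, max_age_days: int = 90) -> bool:
    """True once the live model is at least max_age_days old."""
    return (today - last_trained).days >= max_age_days


def check_and_rollback(registry: ModelRegistry, live_accuracy: float,
                       baseline_accuracy: float, tolerance: float = 0.05) -> str:
    """Roll back when accuracy falls more than `tolerance` below baseline."""
    if baseline_accuracy - live_accuracy > tolerance:
        return registry.rollback()
    return registry.live
```

In practice the accuracy figures would come from a monitoring dashboard and the registry would be a managed service; the sketch only shows how the three checks relate to each other.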

Cost–Scale Paradox in 2025: From Gemini 1.5 to o1‑Preview

The most powerful models (Gemini 1.5, Llama 3.2‑70B and the lightweight yet highly capable o1‑preview) illustrate a new reality: model size and cost are no longer strictly proportional. Efficiency gains come from:

- Specialized hardware (TPUs, neuromorphic chips) that reduces training time per epoch.

- Pruned architectures and quantization techniques that cut inference costs by up to 80 %.

- Federated learning approaches that eliminate the need for central data aggregation.
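To make the quantization point concrete, here is a minimal pure‑Python sketch of symmetric int8 quantization. It is an assumption‑laden toy (one scale per weight list, no calibration data) and the function names are hypothetical. Storing weights as 8‑bit integers instead of 32‑bit floats cuts weight storage by a factor of four; the code does not demonstrate the 80 % cost figure quoted above, which depends on the model and hardware.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the int8 values."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
# Each restored weight differs from the original by at most half a step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

The rounding error per weight is bounded by half the scale, which is why quantization tends to work well when weights are spread fairly evenly and poorly when a few outliers stretch the range.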

Business leaders should adopt a dual strategy: invest in cutting‑edge hardware where ROI is highest (e.g., high‑frequency trading, real‑time fraud detection) and deploy lightweight models—Gemini 1.5 embeddings or o1‑preview—for broader operational use cases such as customer support or supply‑chain optimization.

Responsible AI: From Policy to Practice

Compliance frameworks are common; enforcement lags behind. The gap between policy statements and measurable outcomes is a risk vector—both regulatory and reputational. Key actions include:

- Embedding bias audits into every model release cycle.

- Deploying explainability layers that provide human‑readable rationales for decisions.

- Linking compliance metrics (fairness scores, audit readiness) to executive bonus structures.

By making responsible AI a tangible part of performance measurement, leaders can turn ethics from buzzword to competitive advantage.
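One way a "fairness score" in a release‑cycle bias audit could be computed is the demographic parity gap: the difference in positive‑decision rates between groups. The sketch below assumes binary decisions and a single group label per record; the function names and the `max_gap` threshold of 0.1 are illustrative choices, not a regulatory standard or a metric prescribed by the report.

```python
def selection_rates(decisions, groups):
    """Share of positive decisions per group."""
    totals, positives = {}, {}
    for decision, group in zip(decisions, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if decision else 0)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(decisions, groups):
    """Largest difference in selection rate between any two groups."""
    rates = selection_rates(decisions, groups)
    return max(rates.values()) - min(rates.values())


def passes_bias_audit(decisions, groups, max_gap=0.1):
    """Hypothetical release gate: block the release if the gap is too wide."""
    return demographic_parity_gap(decisions, groups) <= max_gap
```

A gate like `passes_bias_audit` could run in the same pipeline as unit tests, so that a model release fails visibly when the gap exceeds the agreed threshold. Demographic parity is only one of several fairness definitions, and the right one depends on the use case.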

Talent & Culture: Building an AI Center of Excellence

The talent gap is narrowing but remains critical. High‑performing firms maintain dedicated AI Centers of Excellence that serve as:

- Research hubs for rapid experimentation with agentic and multimodal models.

- Training centers that upskill data scientists, engineers and business analysts on the latest frameworks and SDKs (LangChain, LlamaIndex, and the SDKs used to call models such as o1‑preview).

- Governance bodies that enforce MLOps standards and responsible AI practices.

Practical steps:

- Partner with universities for residency programs focused on agent design and multimodal inference.

- Offer micro‑learning modules embedded in existing LMS platforms to upskill staff in real time.

- Implement rotational programs that expose business leaders to AI technical teams, fostering a shared language and mutual understanding.

Sectoral Opportunities: Where AI Is Delivering the Highest Returns

The IEEE Spectrum 2025 Index estimates AI’s contribution to global GDP at $2.3 trillion, with finance, healthcare and manufacturing leading the charge. Specific use cases include:

- Finance – AI‑driven credit scoring models that reduce default risk by 15 % while speeding approvals.

- Healthcare – Multimodal diagnostic assistants combining imaging and patient history to improve early detection rates.

- Manufacturing – Predictive maintenance agents that cut downtime by 20 % and extend equipment life cycles.

Leaders in lagging sectors should benchmark against these metrics and accelerate adoption of proven AI pilots before the window closes.

Roadmap to an Agent‑Driven Enterprise by 2030

- Pilot (2025–2026) – Deploy low‑risk agents in support functions; measure impact on cycle time and error rates.

- Integration (2027–2028) – Embed agents into core workflows such as procurement, HR onboarding and compliance monitoring.

- Governance & Scale (2029–2030) – Implement enterprise‑wide MLOps standards, bias mitigation frameworks and audit trails; ensure agents can be audited by regulators.

Each phase should be governed by KPIs: time to value, cost savings per agent, compliance incidents and employee adoption rates.
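The four KPIs can be computed from a handful of inputs per phase. The sketch below is one possible formulation; the field names and the definition of each KPI (for example, time to value as days from kickoff to the first measured benefit) are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class PhaseMetrics:
    kickoff: date
    first_measured_benefit: date
    total_cost_savings: float
    agents_deployed: int
    compliance_incidents: int
    active_users: int
    eligible_users: int


def phase_kpis(m: PhaseMetrics) -> dict:
    """Derive the four roadmap KPIs from raw phase metrics."""
    return {
        "time_to_value_days": (m.first_measured_benefit - m.kickoff).days,
        "cost_savings_per_agent": (
            m.total_cost_savings / m.agents_deployed if m.agents_deployed else 0.0
        ),
        "compliance_incidents": m.compliance_incidents,
        "employee_adoption_rate": (
            m.active_users / m.eligible_users if m.eligible_users else 0.0
        ),
    }
```

Tracking the same dictionary for each roadmap phase makes it easier to compare the pilot, integration and scale stages on a like‑for‑like basis.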

Actionable Recommendations for Today’s Leaders

- Build an MLOps Playbook – Standardize model training, deployment, monitoring and rollback procedures across all business units.

- Adopt Agentic Platforms Early – Start with open‑source frameworks (LangChain, LlamaIndex) or cloud‑managed templates; iterate quickly to prove ROI before scaling.

- Embed Responsible AI Metrics – Link fairness, explainability and audit readiness to executive performance metrics.

- Invest in Talent Development – Create a structured AI curriculum for data scientists, engineers and business stakeholders; partner with academia for residency programs.

- Monitor Cost Efficiency – Evaluate model economics on a per‑use basis; favor lightweight models (Gemini 1.5 embeddings, o1‑preview) for high‑volume tasks while reserving heavyweights for strategic functions.

- Align AI Strategy with Corporate Goals – Ensure every AI initiative is tied to a clear business outcome (revenue lift, margin improvement).
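The "Monitor Cost Efficiency" recommendation above amounts to simple per‑request arithmetic plus a routing rule. The sketch below assumes token‑based pricing quoted per 1,000 tokens; the model tiers, prices and quality scores are placeholders invented for the example, not vendor figures.

```python
def cost_per_request(input_tokens, output_tokens, price_in_per_1k, price_out_per_1k):
    """Cost of one call given per-1,000-token input and output prices."""
    return (input_tokens / 1000) * price_in_per_1k + (
        output_tokens / 1000
    ) * price_out_per_1k


def cheapest_adequate_model(models, min_quality):
    """Pick the cheapest model whose quality score meets the bar, if any."""
    eligible = [m for m in models if m["quality"] >= min_quality]
    if not eligible:
        return None
    return min(eligible, key=lambda m: m["cost_per_request"])["name"]


# Hypothetical tiers; every number here is a placeholder.
MODELS = [
    {
        "name": "lightweight",
        "quality": 0.80,
        "cost_per_request": cost_per_request(1000, 500, 0.002, 0.006),
    },
    {
        "name": "heavyweight",
        "quality": 0.95,
        "cost_per_request": cost_per_request(1000, 500, 0.030, 0.060),
    },
]
```

With these placeholder numbers, a high‑volume task with a modest quality bar is routed to the lightweight tier, while a task with a stricter bar falls through to the heavyweight one. The quality score would need to come from an internal evaluation set for the task in question.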

Conclusion: The New Operating Model for 2025 and Beyond

The McKinsey State of AI 2025 report paints a picture of an industry on the cusp of mass transformation. Adoption is widespread, but true value emerges only when organizations align data quality, MLOps maturity, agentic orchestration and responsible governance into a coherent strategy.

For senior leaders, the challenge is to move from a pilot mindset to an enterprise‑wide operating model that treats AI as a core business capability. By focusing on data foundations, embedding robust MLOps practices, scaling agentic workflows responsibly and nurturing talent, companies can unlock sustained competitive advantage and position themselves at the forefront of the 2030 agent‑driven economy.

In short: adopt, integrate, govern and scale. Do it now to reap the rewards that AI promises for the next decade.
