SoftBank‑OpenAI JV Delay: A 2025 Blueprint for Japanese Enterprise AI Strategy

The postponement of SoftBank’s joint venture with OpenAI, originally slated to launch in Q4 2025, has rippled across Japan’s technology ecosystem. What many see as a simple administrative hiccup is, from an AI content specialist’s perspective, a microcosm of the regulatory friction that now governs cross‑border AI commercialization. This article translates those complexities into actionable insights for C‑suite executives, strategy teams, and investors who must navigate data‑localisation mandates, edge‑LLM economics, and a rapidly maturing domestic AIaaS market.

Executive Summary

Key Takeaway: The SoftBank‑OpenAI joint venture (JV) delay signals that Japan’s stringent data‑privacy regime will continue to shape the pace of enterprise AI adoption. For 2025, the ripple effects include:

- SoftBank’s projected ¥200 billion ARR is now deferred to 2027.

- Japanese incumbents (NTT Data, NEC) gain a first‑mover advantage with fully localised GPT‑4o deployments.

- Edge‑LLM architectures become the new cost baseline for high‑performance inference.

- Strategic alliances may shift from joint ventures to more flexible partnership models.

Executives should recalibrate investment timelines, explore interim AI solutions, and prepare for a new regulatory landscape that prioritises data sovereignty over rapid global rollout.

Strategic Business Implications of the Delay

The delay is not merely a timeline shift; it reshapes revenue projections, competitive positioning, and capital allocation strategies. SoftBank’s 2025 earnings guidance now reflects a “potential revenue drag” from the JV, which could move its market capitalization by an estimated 3–5 %.

For corporate leaders, this means:

- Revenue Forecasting: Adjust ARR models to reflect a 2027 launch. This may require revisiting budget allocations for AI initiatives across the board.

- Competitive Landscape: NTT Data and NEC’s existing localised GPT‑4o offerings now hold a market advantage that could be leveraged by partners or competitors seeking early entry.

- Capital Allocation: Consider redirecting capital toward building internal AI capabilities or acquiring niche startups that can bridge the localisation gap.

Technical Implementation Challenges and Solutions

The core technical hurdles stem from the data‑localisation mandates of Japan’s Act on the Protection of Personal Information (APPI‑4), which require all training and inference data to reside within national borders. This has a cascading impact on model deployment architecture.

| Challenge | Impact | Mitigation Strategy |
| --- | --- | --- |
| On‑prem Deployment of GPT‑4o & o1 | Increased hardware CAPEX and operational overhead. | Adopt hybrid cloud with edge nodes in Tokyo; leverage Nvidia H100 Tensor Core GPUs for inference acceleration. |
| Fine‑tuning for Japanese Business Language | Need for curated corpora, legal documents, sales scripts. | Partner with local universities and industry consortia to build a shared data repository under strict NDAs. |
| Inference Latency < 200 ms | Edge inference adds latency; cloud‑only solutions fall short of regulatory compliance. | Implement model quantisation (int8) and pruning to reduce runtime without sacrificing accuracy. |
| Cost per Token Increase (+30 %) | Higher operational expenditure for enterprises. | Introduce tiered pricing models; bundle AI services with existing telecom or cloud subscriptions. |

By adopting a quantised, edge‑centric deployment strategy, companies can meet APPI‑4 requirements while maintaining performance benchmarks set by Gartner’s 2025 enterprise chatbot latency study.
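To make the int8 quantisation idea mentioned above more concrete, here is a minimal sketch of symmetric per‑tensor quantisation in plain Python. The function names and the sample weights are illustrative assumptions, not part of any vendor toolkit; a production deployment would more likely rely on a framework's own quantisation tooling than on hand‑rolled code.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantisation: map floats to [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Guard against an empty or all-zero tensor, where any scale works.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_int8(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from int8 values and the scale."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.82, -1.27, 0.05, 0.0, 0.64]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Storing int8 values instead of 32‑bit floats cuts weight memory by roughly a factor of four. The rounding error per weight is bounded by half the scale, which is small but not zero, so accuracy should still be validated on representative task data after quantising.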

Market Analysis: Japanese AIaaS Landscape in 2025

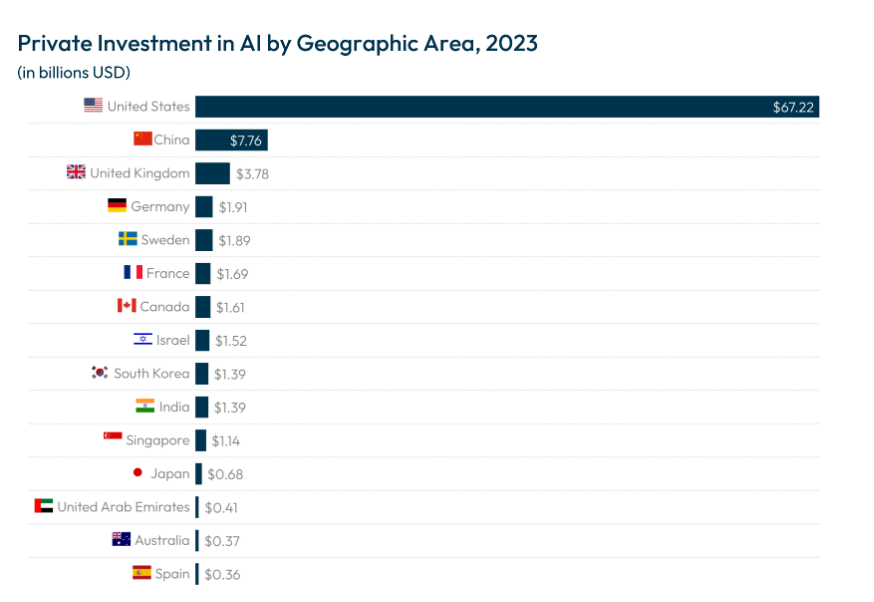

Japan’s enterprise AI adoption lags behind North America and Europe, primarily due to regulatory conservatism and a fragmented vendor ecosystem. The SoftBank‑OpenAI delay exacerbates this gap but also creates opportunities for domestic incumbents.

- NTT Data: Already launched GPT‑4o‑based analytics platforms with on‑prem compliance; now positioned as the go-to partner for mid‑market enterprises.

- NEC: Offers AI‑powered legal document review tools that comply with APPI‑4, attracting large law firms.

- Startups: Companies like Aiko Robotics and HikariTech are developing lightweight LLMs specifically for Japanese manufacturing workflows.

Investors should note that the JV delay may accelerate investment into these local players, potentially yielding higher returns than waiting for a delayed OpenAI launch.

ROI Projections and Cost-Benefit Analysis

Assuming a 2027 launch, SoftBank’s projected ARR of ¥200 billion translates to a discounted cash flow (DCF) value of approximately ¥1.8 trillion using a 10 % discount rate. The delay reduces the present value by roughly ¥300 billion.
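One way to sanity‑check figures like these is to treat the ARR as a level perpetuity and discount it back from the launch year. The sketch below does that with the ¥200 billion ARR and 10 % rate quoted above; the perpetuity model, the two‑year delay, and the function name are simplifying assumptions, so the results land in the same range as the quoted figures rather than reproducing them exactly.

```python
def perpetuity_pv(annual_cash_flow: float, rate: float, delay_years: int = 0) -> float:
    """Present value of a level perpetuity worth cash_flow / rate at launch,
    discounted back delay_years to today."""
    if rate <= 0:
        raise ValueError("rate must be positive")
    value_at_launch = annual_cash_flow / rate
    return value_at_launch / (1 + rate) ** delay_years


ARR_JPY_BN = 200.0    # projected ARR quoted in the text, in JPY billion
DISCOUNT_RATE = 0.10  # discount rate quoted in the text

# Assumption: "on time" means no discounting delay; "delayed" means two years later.
pv_on_time = perpetuity_pv(ARR_JPY_BN, DISCOUNT_RATE)
pv_delayed = perpetuity_pv(ARR_JPY_BN, DISCOUNT_RATE, delay_years=2)

print(f"On-time PV: JPY {pv_on_time:,.0f} bn")
print(f"Delayed PV: JPY {pv_delayed:,.0f} bn")
print(f"Value lost: JPY {pv_on_time - pv_delayed:,.0f} bn")
```

Under these assumptions a two‑year delay costs roughly ¥350 billion of present value, the same order of magnitude as the ¥300 billion cited above. A finite forecast horizon or a revenue ramp‑up would change the result.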

For enterprises adopting edge‑LLM solutions, the cost-benefit equation looks like this:

- Capital Expenditure (CAPEX): Initial hardware investment of ¥500 million for an on‑prem node.

- Operational Expenditure (OPEX): Annual energy and maintenance costs of ¥50 million.

- Revenue Impact: Estimated 10–15 % increase in productivity for customer support teams, translating to a revenue uplift of ¥200–¥300 million annually.

- Payback Period: 2.5–3 years under conservative assumptions.

This model demonstrates that despite higher upfront costs, edge‑LLM deployments can deliver compelling ROI for enterprises with strict data‑safety requirements.
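The payback arithmetic can be checked with a simple undiscounted calculation. The sketch below plugs in the CAPEX, OPEX, and uplift figures listed above; the function name and the decision to ignore discounting and ramp‑up time are assumptions made for illustration.

```python
def simple_payback_years(capex: float, annual_uplift: float, annual_opex: float) -> float:
    """Undiscounted payback: upfront cost divided by net annual benefit."""
    net_annual_benefit = annual_uplift - annual_opex
    if net_annual_benefit <= 0:
        raise ValueError("investment never pays back: net benefit is not positive")
    return capex / net_annual_benefit


# Figures from the list above, in JPY million.
CAPEX_M = 500.0
OPEX_M = 50.0
UPLIFT_LOW_M, UPLIFT_HIGH_M = 200.0, 300.0

slowest = simple_payback_years(CAPEX_M, UPLIFT_LOW_M, OPEX_M)
fastest = simple_payback_years(CAPEX_M, UPLIFT_HIGH_M, OPEX_M)
print(f"Payback range: {fastest:.1f} to {slowest:.1f} years")
```

With these inputs the undiscounted range works out to about 2.0 to 3.3 years, which brackets the 2.5–3 year estimate. Discounting future benefits or adding a ramp‑up period would push the payback later.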

Strategic Recommendations for Corporate Leaders

- Accelerate Internal AI Capability Building: Invest in talent acquisition and training focused on LLM fine‑tuning, quantisation, and edge deployment. A dedicated AI Center of Excellence can reduce dependency on external vendors.

- Leverage Domestic Partnerships: Engage with NTT Data or NEC early to co‑develop compliance frameworks that align with APPI‑4 while maintaining competitive differentiation.

- Adopt a Hybrid Deployment Model: Combine cloud-based inference for non-sensitive workloads with on‑prem edge nodes for critical data, balancing cost and regulatory compliance.

- Reassess Investment Portfolios: Shift capital toward startups that offer niche AI solutions tailored to Japanese industry verticals (e.g., manufacturing, healthcare) rather than waiting for the delayed JV.

- Engage Regulators Proactively: Participate in policy dialogues around APPI‑4 amendments. Early involvement can shape future compliance standards and reduce implementation friction.
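The hybrid deployment recommendation amounts to a routing rule: requests that touch personal or otherwise regulated data go to an on‑prem edge node, and everything else may go to cloud inference. A minimal sketch, assuming a hypothetical `Request` type, a hand‑maintained set of sensitive data tags, and placeholder endpoint names that do not refer to real services:

```python
from dataclasses import dataclass, field
from typing import FrozenSet

# Hypothetical tags marking data that must stay on domestic, on-prem hardware.
SENSITIVE_TAGS: FrozenSet[str] = frozenset({"personal_data", "legal", "health"})

ON_PREM_ENDPOINT = "edge-node-tokyo"  # placeholder name, not a real service
CLOUD_ENDPOINT = "cloud-inference"    # placeholder name, not a real service


@dataclass(frozen=True)
class Request:
    prompt: str
    data_tags: FrozenSet[str] = field(default_factory=frozenset)


def route(request: Request) -> str:
    """Send any request carrying at least one sensitive tag to the on-prem node."""
    if request.data_tags & SENSITIVE_TAGS:
        return ON_PREM_ENDPOINT
    return CLOUD_ENDPOINT


print(route(Request("Summarise this contract", frozenset({"legal"}))))
print(route(Request("Draft a product tagline")))
```

Defaulting untagged requests to the cloud is a design choice made here for brevity. A stricter policy would default to on‑prem and require an explicit non‑sensitive tag before anything leaves the local environment.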

Future Outlook: AI Commercialization Beyond Japan

The SoftBank‑OpenAI delay is a bellwether for other markets where data sovereignty concerns are rising. In 2025, we anticipate:

- Regulatory Harmonisation: The EU’s “AI Act” and China’s AI governance framework will converge on similar localisation principles.

- Edge‑LLM Standardization: OEMs like Nvidia and Intel will release low‑power, high‑throughput inference chips optimized for edge LLM workloads.

- Strategic Alliances over Joint Ventures: Companies will favor flexible partnership models that allow rapid iteration without the capital intensity of a full JV.

Organizations that adapt to these trends now—by building robust, localisation‑ready AI architectures and cultivating domestic partnerships—will be positioned to capture market share as global players navigate tighter regulatory landscapes.

Conclusion: Turning Delay into Strategic Advantage

The SoftBank‑OpenAI JV postponement is not a setback; it is an inflection point. For Japanese enterprises, the delay underscores the necessity of building data‑localised, edge‑centric AI solutions that comply with APPI‑4 while delivering enterprise value. Executives who act on these insights—by investing in internal capabilities, partnering with domestic incumbents, and engaging regulators—will not only mitigate risk but also unlock new revenue streams in a market poised for rapid AI adoption.

Actionable Takeaway: Begin immediate evaluation of your organization’s data‑safety posture, explore edge‑LLM deployment pilots, and initiate conversations with local AI providers to secure early access to compliant solutions. By doing so now, you convert regulatory delay into a competitive advantage for 2025 and beyond.
