OpenAI Launches Aardvark To Detect and Patch Hidden Bugs In Code - AI2Work Analysis

OpenAI’s Aardvark: Autonomous Security Research for Enterprise Code Quality in 2025

Aardvark

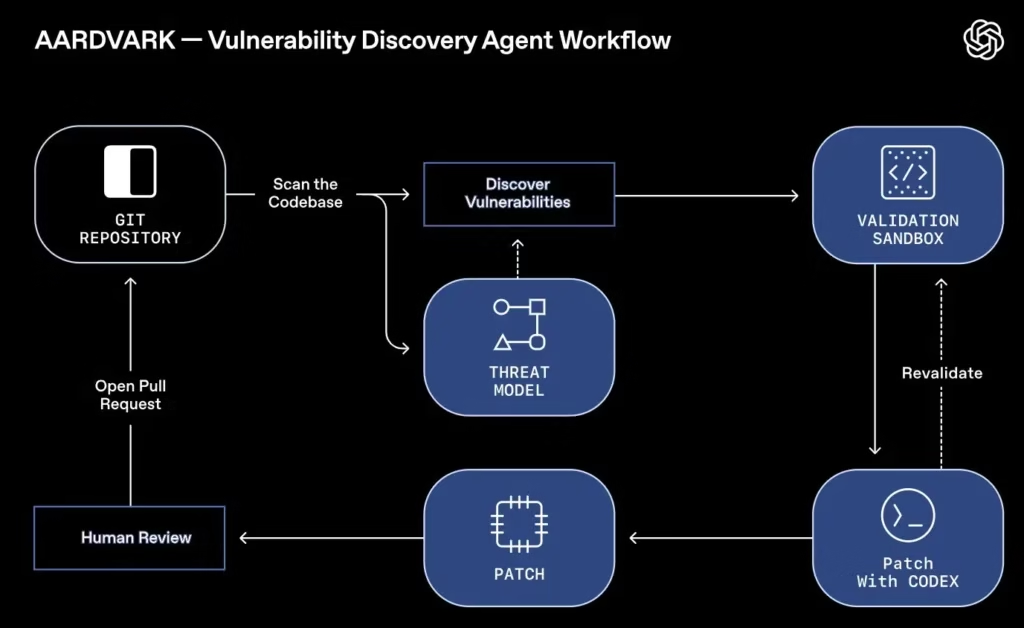

is OpenAI’s newest autonomous security agent, built on the GPT‑4o foundation and fine‑tuned for code analysis. It goes beyond static scanners by reasoning through diffs, validating exploitability in sandboxed runtimes, and generating patch suggestions that can be merged directly into GitHub workflows. For enterprise leaders, Aardvark promises to reduce security engineering hours, accelerate time‑to‑market, and satisfy the continuous monitoring requirements of NIS2 and CMMC.

Executive Snapshot

- Agentic Security Engine: GPT‑4o powers dynamic threat modeling from commit diffs.

- Zero‑False‑Positive Validation: Exploit sandbox confirms real vulnerabilities before alerting developers.

- Patch Generation: GPT‑4o’s code‑generation capabilities produce minimal, testable patches that can be auto‑merged.

- CI/CD Ready: One‑click pull request integration with GitHub Actions and Azure DevOps.

- Business Value: Potential to cut security engineering hours by 30–50 %, shorten release cycles, and streamline compliance reporting.

Technical Architecture: From Language Model to Live Security Agent

- Threat Modeling Engine (GPT‑4o): On each commit, the agent ingests diffs and constructs a threat model. It flags potential buffer overflows, injection points, privilege escalations, and other semantic risks by leveraging GPT‑4o’s contextual understanding of code intent.

- Exploit Validation Sandbox: Suspected vulnerabilities are executed in isolated containers that mirror the target runtime (e.g., Node.js 18, Python 3.12). If an exploit causes a crash or unauthorized access, the sandbox records stack traces and memory dumps, providing concrete proof of vulnerability.

- Patch Generation & GitHub Integration: After validation, GPT‑4o drafts a minimal patch that preserves functionality. The agent proposes a pull request with explanatory comments, allowing developers to review or auto‑merge with a single click.

Aardvark’s LLM core can reason about code intent and anticipate regressions—capabilities that rule‑based static analyzers lack. This translates into higher recall without inflating false positives, a critical balance for production use.
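The three stages above can be sketched as a simple pipeline. This is only an illustration of the control flow described in this section, not Aardvark's actual implementation: the `Finding` type, `run_pipeline`, and the three stub stages are hypothetical names, and the stubs stand in for the model and the sandbox.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Optional


@dataclass
class Finding:
    """A suspected vulnerability raised by the threat-modeling stage (hypothetical type)."""
    file: str
    description: str
    validated: bool = False
    patch: Optional[str] = None


def run_pipeline(
    diff: str,
    model_threats: Callable[[str], Iterable[Finding]],
    exploit_in_sandbox: Callable[[Finding], bool],
    draft_patch: Callable[[Finding], str],
) -> List[Finding]:
    """Return only findings confirmed in the sandbox, each with a drafted patch."""
    confirmed = []
    for finding in model_threats(diff):
        # Stage 2: drop anything that cannot be reproduced in the sandbox.
        if not exploit_in_sandbox(finding):
            continue
        finding.validated = True
        # Stage 3: draft a patch only for confirmed findings.
        finding.patch = draft_patch(finding)
        confirmed.append(finding)
    return confirmed


# Stub stages for illustration only; a real system would call a model and a container runtime.
def fake_threat_model(diff: str) -> List[Finding]:
    findings = []
    if "execute(" in diff and "+" in diff:
        findings.append(Finding("db.py", "possible SQL injection"))
    if "strcpy" in diff:
        findings.append(Finding("util.c", "possible buffer overflow"))
    return findings


def fake_sandbox(finding: Finding) -> bool:
    # Pretend only the SQL injection could be reproduced.
    return "SQL" in finding.description


def fake_patch(finding: Finding) -> str:
    return f"# TODO: parameterize the query in {finding.file}"


diff = 'cursor.execute("SELECT * FROM t WHERE id=" + user_id)\nstrcpy(dst, src);'
results = run_pipeline(diff, fake_threat_model, fake_sandbox, fake_patch)
for r in results:
    print(r.file, "-", r.description, "->", r.patch)
```

Placing validation between detection and patching is what keeps unconfirmed findings out of the developer's queue: a finding that fails in the sandbox never reaches the patch stage.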

Benchmark Performance: 92 % Recall on Curated Repositories

The OpenAI whitepaper reports a 92 % detection rate on “golden” repositories—codebases curated to include known and synthetic vulnerabilities. Leading commercial tools such as Veracode, SonarQube, and Semgrep typically achieve 60–70 % recall on similar datasets.

- Recall (True Positive Rate): 92 % for Aardvark vs. ~65 % average across competitors.

- Precision (share of alerts that are real vulnerabilities): Sandbox validation implies >90 % precision, meaning fewer than 1 in 10 alerts would be a false positive, though OpenAI does not publish exact figures.

- Detection Speed: Average 1.8 seconds per commit on a standard laptop with GPU acceleration; scalable to CI pipelines via OpenAI’s API throttling controls.
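Recall, precision, and false positive rate are easy to conflate, so a short sketch of how each is computed may help. The confusion-matrix counts below are hypothetical, chosen only so that recall matches the reported 92 %; they are not figures from OpenAI.

```python
def recall(tp: int, fn: int) -> float:
    """Share of real vulnerabilities that the tool found."""
    return tp / (tp + fn)


def precision(tp: int, fp: int) -> float:
    """Share of raised alerts that were real vulnerabilities."""
    return tp / (tp + fp)


def false_positive_rate(fp: int, tn: int) -> float:
    """Share of clean code locations that were wrongly flagged."""
    return fp / (fp + tn)


# Hypothetical counts for illustration only.
tp, fn, fp, tn = 92, 8, 5, 895

print(f"recall              = {recall(tp, fn):.2%}")
print(f"precision           = {precision(tp, fp):.2%}")
print(f"false positive rate = {false_positive_rate(fp, tn):.2%}")
```

Precision and false positive rate use different denominators (alerts raised versus clean locations), which is why a tool can have a very low false positive rate and still fall short of perfect precision.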

Business Implications: Cost Savings, Time‑to‑Market, and Compliance

- Operational Efficiency: Automating detection and patching frees security engineers from repetitive triage. Assuming an hourly rate of $120 and roughly 2,000 working hours per engineer per year, recovering 30 % of that time saves a five‑person team about $360k annually (5 × 2,000 × 0.30 × $120).

- Accelerated Release Cadence: With faster remediation and one‑click merges, development cycles may shrink by up to two days per sprint, which can bring revenue forward for fintech or SaaS firms.

- Regulatory Readiness: NIS2 (which EU member states were due to transpose by October 2024) and CMMC both expect ongoing vulnerability management. Aardvark’s real‑time scanning and audit logs can supply much of the supporting evidence, potentially shortening certification timelines by months.
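The $360k staffing figure can be reproduced with a few lines of arithmetic. The 2,000 working hours per year is an assumption not stated in the article; the result matches the article's number only if the 30 % saving is applied to each engineer's full working year.

```python
HOURLY_RATE_USD = 120        # from the article
TEAM_SIZE = 5                # from the article
TIME_SAVED_PCT = 30          # from the article
HOURS_PER_YEAR = 2_000       # assumption: about 50 weeks x 40 hours


def annual_staff_savings(rate: int, team: int, saved_pct: int, hours: int) -> int:
    """Dollar value of the engineering hours recovered per year."""
    return rate * team * hours * saved_pct // 100


savings = annual_staff_savings(HOURLY_RATE_USD, TEAM_SIZE, TIME_SAVED_PCT, HOURS_PER_YEAR)
print(f"Estimated annual savings: ${savings:,}")
```

If manual review occupies only part of an engineer's week, the saving shrinks in proportion, so the hours figure deserves scrutiny before it goes into a business case.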

Competitive Landscape: Where Aardvark Stands Out

- Agentic Reasoning: GPT‑4o can infer hidden exploits and propose mitigations, unlike rule‑based scanners.

- Integrated Patch Flow: Direct GitHub PR creation eliminates the need for separate ticketing or manual patching steps.

- Zero‑False‑Positive Validation: Sandbox confirmation reduces alert fatigue, a common pain point with AI tools.

OpenAI’s GPT‑4o API access is currently available to enterprise partners. Mid‑size firms should weigh the licensing cost against alternatives built on models such as Anthropic’s Claude 3.5 Sonnet or Google Gemini 1.5, which may come in at lower price points.

Implementation Roadmap: From Pilot to Enterprise Scale

Phase 1 – Proof of Concept (Weeks 0–4)

- Select a high‑risk repository and run Aardvark in parallel with existing scanners.

- Measure detection overlap, false positives, and patch success rate.

- Engage security champions to review generated patches for quality assurance.
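The Phase 1 measurements can be captured with simple set arithmetic. The sketch below assumes each finding can be keyed by file, line, and category, and that a human reviewer supplies the set of confirmed issues; the function name, key format, and sample data are all hypothetical.

```python
from typing import Dict, Set, Tuple

FindingKey = Tuple[str, int, str]  # (file, line, category): an assumed key format


def compare_scanners(
    aardvark: Set[FindingKey],
    baseline: Set[FindingKey],
    confirmed: Set[FindingKey],
    patches_proposed: int,
    patches_passing_review: int,
) -> Dict[str, float]:
    """Summarize a pilot run of Aardvark alongside an existing scanner."""
    return {
        "overlap": len(aardvark & baseline),
        "only_aardvark": len(aardvark - baseline),
        "only_baseline": len(baseline - aardvark),
        # Alerts that reviewers could not confirm count as false positives.
        "aardvark_false_positives": len(aardvark - confirmed),
        "patch_success_rate": (
            patches_passing_review / patches_proposed if patches_proposed else 0.0
        ),
    }


# Made-up pilot data for illustration.
aardvark = {("auth.py", 42, "sqli"), ("api.py", 10, "ssrf"), ("util.py", 7, "path-traversal")}
baseline = {("auth.py", 42, "sqli"), ("views.py", 88, "xss")}
confirmed = {("auth.py", 42, "sqli"), ("api.py", 10, "ssrf"), ("views.py", 88, "xss")}

report = compare_scanners(aardvark, baseline, confirmed, patches_proposed=2, patches_passing_review=1)
for metric, value in report.items():
    print(f"{metric}: {value}")
```

Tracking findings unique to each tool, rather than overlap alone, shows whether the new agent complements the existing scanner or merely duplicates it.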

Phase 2 – Integration & Automation (Weeks 5–12)

- Embed Aardvark into the CI pipeline using GitHub Actions or Azure DevOps extensions.

- Configure sandbox policies to match production environments (Python 3.12, Node.js 18).

- Set up automated PR merging rules with code review thresholds.
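An auto‑merge rule with review thresholds might look like the following. This is a hedged sketch of one possible policy, not a documented Aardvark or GitHub feature: `PatchPR`, `MergePolicy`, and the default thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PatchPR:
    """Minimal facts about an agent-generated pull request (hypothetical fields)."""
    sandbox_validated: bool
    ci_passed: bool
    approvals: int
    lines_changed: int


@dataclass(frozen=True)
class MergePolicy:
    """Thresholds a team might tune; the defaults here are placeholders."""
    min_approvals: int = 1
    max_lines_changed: int = 50


def can_auto_merge(pr: PatchPR, policy: MergePolicy) -> bool:
    """Allow auto-merge only when every gate passes."""
    return (
        pr.sandbox_validated
        and pr.ci_passed
        and pr.approvals >= policy.min_approvals
        and pr.lines_changed <= policy.max_lines_changed
    )


policy = MergePolicy()
small_fix = PatchPR(sandbox_validated=True, ci_passed=True, approvals=1, lines_changed=12)
large_fix = PatchPR(sandbox_validated=True, ci_passed=True, approvals=1, lines_changed=400)

print("small fix auto-merge:", can_auto_merge(small_fix, policy))
print("large fix auto-merge:", can_auto_merge(large_fix, policy))
```

Capping the size of auto‑merged patches is one way to keep large or risky changes on the human review path.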

Phase 3 – Governance & Compliance (Weeks 13–20)

- Create audit logs and dashboards that feed into SOC or compliance reporting tools.

- Define escalation paths for high‑severity findings (e.g., by CVSS severity rating).

- Align with internal risk appetite matrices to determine patch urgency.
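One way to connect severity, escalation, and audit logging is sketched below. It assumes findings carry a CVSS v3.x base score, which the article does not specify; the severity bands follow the standard CVSS v3.x qualitative scale, while the escalation routes, SLA hours, and record fields are hypothetical.

```python
import json
from datetime import datetime, timezone


def cvss_severity(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS base scores range from 0.0 to 10.0")
    if score == 0.0:
        return "none"
    if score < 4.0:
        return "low"
    if score < 7.0:
        return "medium"
    if score < 9.0:
        return "high"
    return "critical"


# Hypothetical escalation routes and remediation SLAs (hours); tune to your risk appetite.
ESCALATION = {
    "critical": ("page on-call security lead", 24),
    "high": ("notify security channel", 72),
    "medium": ("open backlog ticket", 336),
    "low": ("open backlog ticket", 720),
    "none": ("log only", 0),
}


def audit_record(finding_id: str, score: float) -> str:
    """Build one JSON audit-log line for a finding."""
    severity = cvss_severity(score)
    route, sla_hours = ESCALATION[severity]
    record = {
        "finding_id": finding_id,
        "cvss_score": score,
        "severity": severity,
        "escalation": route,
        "sla_hours": sla_hours,
        "logged_at": datetime.now(timezone.utc).isoformat(),
    }
    return json.dumps(record, sort_keys=True)


print(audit_record("FINDING-001", 9.8))
print(audit_record("FINDING-002", 5.3))
```

Emitting one JSON object per finding keeps the log easy to forward to a SOC dashboard or compliance reporting tool.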

Phase 4 – Scale & Optimization (Months 6–12)

- Expand language support: Rust, Go, and binary analysis modules are slated for release in Q1 2026.

- Leverage OpenAI’s continuous learning updates to improve model accuracy across diverse codebases.

- Integrate with third‑party vulnerability databases (NVD, CVE) for enriched context.

ROI Projections: Quantifying the Value of Aardvark

Assumptions:

- Development Team Size: 20 engineers.

- Commit Volume: 5,000 commits per month.

- Critical‑Bug Rate: 5 % of commits introduce a critical bug.

- Aardvark Coverage: 92 % of critical bugs; each bug costs $1,200 to remediate manually.

Annual savings calculation:

Critical bugs introduced/month = 5,000 × 0.05 (5 %) = 250

Savings per month = 250 × $1,200 × 0.92 ≈ $276k

Annual savings = $276k × 12 ≈ $3.3M

Adding estimated gains from faster time‑to‑market ($500k annually) and avoided compliance fines ($200k), the total annual benefit comes to roughly $4M, which outweighs an assumed subscription cost of $1–2M for GPT‑4o API access.
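The projection above can be reproduced as a small script so that each assumption is easy to vary. All inputs come from the article's stated assumptions; the variable and function names are illustrative, and percentages are kept as integers to avoid floating‑point rounding in the dollar totals.

```python
COMMITS_PER_MONTH = 5_000
CRITICAL_BUG_RATE_PCT = 5          # share of commits that introduce a critical bug
COST_PER_BUG_USD = 1_200           # manual remediation cost per bug
COVERAGE_PCT = 92                  # share of critical bugs Aardvark is assumed to catch
TIME_TO_MARKET_GAIN_USD = 500_000  # annual estimate from the article
AVOIDED_FINES_USD = 200_000        # annual estimate from the article
SUBSCRIPTION_RANGE_USD = (1_000_000, 2_000_000)


def monthly_savings(commits: int, bug_rate_pct: int, cost: int, coverage_pct: int) -> int:
    """Remediation cost avoided per month for the bugs the agent catches."""
    bugs = commits * bug_rate_pct // 100
    return bugs * cost * coverage_pct // 100


per_month = monthly_savings(
    COMMITS_PER_MONTH, CRITICAL_BUG_RATE_PCT, COST_PER_BUG_USD, COVERAGE_PCT
)
per_year = per_month * 12
total_benefit = per_year + TIME_TO_MARKET_GAIN_USD + AVOIDED_FINES_USD

print(f"Monthly remediation savings: ${per_month:,}")
print(f"Annual remediation savings:  ${per_year:,}")
print(f"Total annual benefit:        ${total_benefit:,}")
for cost in SUBSCRIPTION_RANGE_USD:
    print(f"Net benefit at ${cost:,} subscription: ${total_benefit - cost:,}")
```

The result is most sensitive to the 5 % critical‑bug rate, so that is the figure to validate against your own incident history before relying on the projection.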

Strategic Recommendations for Decision Makers

- Prioritize Pilot Projects: Target high‑impact repositories with frequent releases to maximize early ROI.

- Negotiate API Licensing: Engage OpenAI’s enterprise sales team for tiered pricing or volume discounts, especially if scaling across multiple teams.

- Invest in Developer Training: Even with automated patches, developers must understand the rationale to maintain code quality and avoid regressions.

- Align with Compliance Roadmaps: Use Aardvark’s audit logs as evidence for NIS2 or CMMC assessments; this dual‑purpose functionality reduces duplicate tooling.

Future Outlook: The Next Wave of AI‑Powered Security

Aardvark exemplifies a broader shift toward autonomous security agents that detect and remediate vulnerabilities in real time. In 2025, we anticipate:

- Cross‑Platform Orchestration: Unified dashboards aggregating findings from Aardvark, fuzzers, and composition scanners.

- Explainable AI for Security: Enhanced rationales that satisfy auditors and facilitate knowledge transfer.

- Regulatory Standardization: Industry bodies may adopt AI‑driven monitoring as a baseline compliance requirement.

Enterprises adopting Aardvark early will position themselves at the forefront of this transformation, gaining competitive advantage through faster releases, lower defect rates, and robust security postures.

Conclusion

Aardvark is more than a tool; it represents a paradigm shift where language models become proactive defenders. Its high recall, sandbox‑validated zero false positives, and seamless CI/CD integration deliver tangible business value—cost savings, speed, and compliance readiness—while empowering teams to focus on innovation rather than firefighting.

For technology leaders in 2025, the path forward is clear: evaluate Aardvark’s capabilities against your current security stack, launch a focused pilot, and measure the impact. The payoff—both monetary and strategic—will shape how your organization approaches code quality for years to come.
