What is Cosmos Transfer 2.5 and How It Generates Synthetic Data — NVIDIA World Model Explained

Cosmos Transfer 2.5: NVIDIA’s Synthetic‑Data Engine for Autonomous Systems

Meta Description:

Discover how NVIDIA’s Cosmos Transfer 2.5 powers high‑fidelity synthetic video generation, accelerates perception training, and reshapes data pipelines for robotics and autonomous vehicles in 2026.

Executive Snapshot

- What it is: A multimodal world‑to‑world transfer model that generates photorealistic synthetic video at scale, using a latent diffusion backbone conditioned on depth, segmentation, LiDAR, HDMap, and optional RGB.

- Key performance: ≈25 ms per 1080p frame on NVIDIA H200 GPUs; PSNR > 35 dB against reference footage from the CARLA simulator.

- Business upside: Rapid data generation, cost reduction for rare scenarios, and faster go‑to‑market for perception models.

- Recommendation: Integrate Cosmos Transfer early in the pipeline; fine‑tune on a modest real dataset (5–10k frames) to close the sim‑to‑real gap.
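
Teams that want to check a PSNR figure on their own footage can use the standard definition, PSNR = 10·log10(MAX² / MSE). The sketch below assumes 8‑bit pixel values; the function name and the flat-list frame representation are illustrative choices, not part of any NVIDIA tooling.

```python
import math

def psnr(reference, generated, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized frames.

    Frames are flat sequences of pixel intensities (an illustrative
    simplification; a real pipeline would operate on image arrays).
    """
    if len(reference) != len(generated) or len(reference) == 0:
        raise ValueError("frames must be non-empty and the same size")
    # Mean squared error over all pixels.
    mse = sum((r - g) ** 2 for r, g in zip(reference, generated)) / len(reference)
    if mse == 0:
        return math.inf  # identical frames
    return 10.0 * math.log10(max_value ** 2 / mse)
```

For video, a common convention is to average the per-frame PSNR over the clip.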

1. Market Context and Strategic Business Implications

The autonomous systems sector is now data‑hungry: global spend on perception datasets topped $3 billion in 2024, yet most of that was poured into proprietary collection efforts. Cosmos Transfer 2.5 flips the script by offering a ready‑made synthetic engine that plugs directly into existing training workflows.

Three core levers emerge:

- Speed to Prototype: Generate thousands of labeled trajectories in hours rather than months.

- Cost Reduction: Eliminate expensive field trials for rare events (e.g., heavy snowfall, multi‑agent traffic).

- Competitive Differentiation: Early adopters can train perception models on richer synthetic data, outperforming rivals that rely solely on real footage.

Regulatory shifts in late 2025—export controls now permitting H200 chips and Cosmos Transfer weights to approved Chinese customers—expand NVIDIA’s hardware revenue while granting partners compliant access to advanced synthetic tooling.

2. Technical Architecture Deep Dive

Cosmos Transfer 2.5 builds on the original architecture but introduces key enhancements:

- ControlNet‑Based Multimodal Generation: Latent diffusion conditioned on up to four modalities plus an optional RGB seed.

- Scalable Parameterization: 7B‑parameter variant for high performance; 3B variant for edge deployment.

- Open‑Source Training Pipeline: Full scripts and curated datasets (500k robotics trajectories + 100 TB vehicle logs) enable custom fine‑tuning without licensing fees.

- Hardware Acceleration Compatibility: Native support on H200 GPUs delivers ≈25 ms per frame at 1080p, making real‑time synthetic generation feasible.

The inference flow:

- Supply multimodal conditioning or a single modality.

- Generate latent representations via diffusion decoding.

- Apply spatial–temporal control maps per pixel/frame.

- Output photorealistic video ready for downstream perception or RL pipelines.
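
The first step of the flow above, supplying one or several control modalities, can be sketched as a small request object. This is a minimal illustration, not the repository's actual interface: the class, the function, and the idea of normalizing per-modality weights are all assumptions, and only the modality names come from the article. It also reduces the per-pixel, per-frame control maps to a single scalar weight per modality.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional

# Modality names come from the article; the class and function are hypothetical.
SUPPORTED_MODALITIES = ("depth", "segmentation", "lidar", "hdmap")

@dataclass
class ConditioningRequest:
    """Hypothetical description of one generation request."""
    controls: Dict[str, str]  # modality name -> path to its control video
    weights: Dict[str, float] = field(default_factory=dict)  # optional per-modality weight
    rgb_seed: Optional[str] = None  # optional RGB seed video

def normalized_control_weights(request: ConditioningRequest) -> Dict[str, float]:
    """Validate the requested modalities and scale their weights to sum to 1."""
    if not request.controls:
        raise ValueError("supply at least one control modality")
    unknown = sorted(set(request.controls) - set(SUPPORTED_MODALITIES))
    if unknown:
        raise ValueError(f"unsupported modalities: {unknown}")
    # Modalities without an explicit weight default to 1.0.
    raw = {name: float(request.weights.get(name, 1.0)) for name in request.controls}
    total = sum(raw.values())
    if total <= 0:
        raise ValueError("control weights must sum to a positive value")
    return {name: weight / total for name, weight in raw.items()}

request = ConditioningRequest(
    controls={"depth": "depth.mp4", "segmentation": "seg.mp4"},
    weights={"depth": 3.0},  # segmentation falls back to the default weight of 1.0
)
print(normalized_control_weights(request))  # {'depth': 0.75, 'segmentation': 0.25}
```

Validating the request before any GPU work starts keeps configuration errors cheap to catch.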

3. Comparative Analysis with Industry Counterparts

| Model | Primary Focus | Multimodal Control | Open‑Source Availability |
| --- | --- | --- | --- |
| NVIDIA Cosmos Transfer 2.5 | Physics‑accurate video generation for robotics/AV | Yes (up to 4 modalities) | Fully open‑source under NVIDIA license |
| OpenAI GPT‑4o (visual) | Text‑to‑image/video diffusion | No dedicated ControlNet | No, proprietary API only |
| Meta Llama 3 + BlenderBot | Conversational AI with image generation | Limited | No |
| Google Gemini 1.5 | Multimodal reasoning & text generation | No explicit video pipeline | No |
| Unity ML‑Agents + Custom Simulators | Game‑engine based simulation | Manual scene editing | Open‑source but requires manual setup |

Cosmos Transfer’s native multimodal control and seamless integration with NVIDIA hardware give it lower latency, higher fidelity, and easier deployment for teams already invested in the NVIDIA stack.

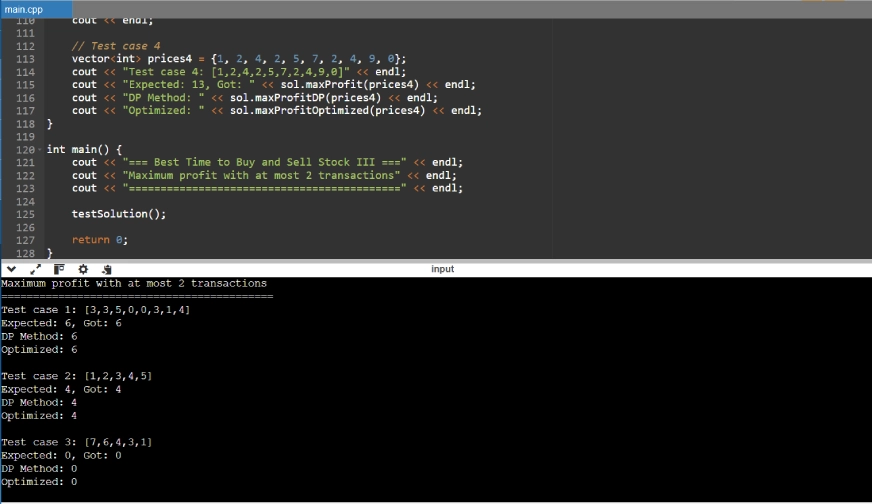

4. Implementation Blueprint for Robotics & Autonomous Vehicle Teams

- Clone the Repository: Run git clone https://github.com/nvidia-cosmos/cosmos-transfer2.5 and download the pre‑trained checkpoints for the 7B variant.

- Prepare Conditioning Data: Generate depth, segmentation, LiDAR, and HDMap maps from your perception stack or use NVIDIA’s Isaac Sim.

- Set Up ControlNet Wrapper: Use controlnet.py to feed multimodal inputs into the diffusion backbone.

- Run Inference on H200: Allocate at least one H200 GPU. Benchmark latency; expect ~25 ms per 1080p frame.

- Fine‑Tune on Real Trajectories: Collect 5–10k frames from your platform and use NVIDIA’s fine‑tuning scripts to adapt the model.

- Integrate with RL Loop: Feed generated video into Isaac Gym or similar environments; inject rare events via control maps.

- Validate Perception Models: Train on synthetic data, evaluate against real test sets, and iterate until the performance gap falls below 5% absolute.
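
Two of the steps above, benchmarking latency and checking the synthetic-versus-real performance gap, lend themselves to small helper functions. The sketch below is a generic harness under stated assumptions: `generate_frame` stands in for whatever callable produces one frame in your setup, and scores are fractions between 0 and 1. Neither helper comes from the Cosmos Transfer repository.

```python
import time

def mean_latency_ms(generate_frame, n_frames=20, warmup=3):
    """Average wall-clock time per call, in milliseconds.

    GPU work is often asynchronous, so generate_frame should block
    until the frame is actually ready, or the timing will be too low.
    """
    for _ in range(warmup):  # warm-up calls are not timed
        generate_frame()
    start = time.perf_counter()
    for _ in range(n_frames):
        generate_frame()
    return (time.perf_counter() - start) * 1000.0 / n_frames

def gap_within_tolerance(real_trained_score, synthetic_trained_score, tolerance=0.05):
    """True when the absolute score gap on the real test set is below tolerance."""
    return abs(real_trained_score - synthetic_trained_score) < tolerance

# Example: a model trained on synthetic data scores 0.87 against a 0.90 baseline.
print(gap_within_tolerance(0.90, 0.87))  # True
```

Both scores should be measured on the same real test set so that the gap reflects the training data rather than the evaluation split.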

For edge deployment, the 3B variant runs on Jetson AGX Xavier or T4000 robots at ~60 ms per frame (720p), enabling onboard data generation for online learning.
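
The quoted per-frame latencies translate directly into throughput, which helps when sizing a generation job. The helpers below are a back-of-the-envelope sketch; the function names are illustrative, and the multi-GPU estimate assumes perfect linear scaling, which real deployments rarely achieve.

```python
def frames_per_second(latency_ms):
    """Throughput implied by a per-frame latency."""
    return 1000.0 / latency_ms

def generation_hours(n_frames, latency_ms, n_gpus=1):
    """Wall-clock hours to generate n_frames, assuming ideal scaling across GPUs."""
    return n_frames * latency_ms / 1000.0 / 3600.0 / n_gpus

print(frames_per_second(25))  # 40.0 (the 1080p figure quoted for H200)
print(round(frames_per_second(60), 1))  # 16.7 (the 720p figure quoted for edge devices)
print(round(generation_hours(1_000_000, 25), 2))  # 6.94
```

At the quoted figures, a single H200 would need roughly seven hours for one million 1080p frames.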

5. ROI and Cost‑Benefit Analysis

A typical robotics startup spends $500k–$1M annually on data collection (hardware, field crews, annotation). Cosmos Transfer can reduce this spend in two ways:

- Annotation Efficiency: Generates fully labeled frames instantly.

- Hardware Utilization: Leverages existing H200 GPUs or Jetson devices, with no extra infrastructure spend.

Conservative ROI for a mid‑size firm:

- Initial setup (GPU, training): $50k

- Annual compute cost: $30k

- Savings on data collection: $800k per year

- Payback period: under 1 month on these figures ($50k setup against roughly $64k per month in net savings)
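
One way to derive a payback period from the listed figures is to divide the setup cost by the net monthly savings. The sketch below assumes savings and compute costs accrue evenly over the year, which the article does not state; other accounting choices would shift the result.

```python
def payback_months(initial_cost, annual_compute, annual_savings):
    """Months needed for net savings to cover the initial setup cost."""
    net_monthly = (annual_savings - annual_compute) / 12.0
    if net_monthly <= 0:
        raise ValueError("savings must exceed compute cost for a payback to exist")
    return initial_cost / net_monthly

# Figures from the list above: $50k setup, $30k/year compute, $800k/year savings.
print(round(payback_months(50_000, 30_000, 800_000), 2))  # 0.78
```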

6. Risk Assessment and Mitigation Strategies

- Sim‑to‑Real Gap: Hybrid training loops that alternate synthetic and real batches; domain randomization during fine‑tuning.

- Hardware Dependency: Deploy 3B variant on edge devices or use cloud GPU bursts for high‑throughput generation.

- Regulatory Constraints: Verify compliance with local export laws before deployment; rely on NVIDIA’s licensed distribution channels.

- Model Bias: Curate conditioning datasets to balance weather/lighting scenarios; monitor downstream model performance for bias indicators.
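
The hybrid training loop mentioned under the sim‑to‑real gap can be sketched as a generator that interleaves the two data sources. This is a framework-agnostic illustration with hypothetical names; it assumes the real dataset is the smaller one and is therefore reused once exhausted.

```python
from itertools import cycle

def alternate_batches(synthetic_batches, real_batches, synthetic_per_real=1):
    """Yield (source, batch) pairs: k synthetic batches, then one real batch."""
    real_batches = list(real_batches)
    if not real_batches:
        raise ValueError("at least one real batch is required")
    if synthetic_per_real < 1:
        raise ValueError("synthetic_per_real must be at least 1")
    real_iter = cycle(real_batches)  # reuse the smaller real set as needed
    for count, batch in enumerate(synthetic_batches, start=1):
        yield ("synthetic", batch)
        if count % synthetic_per_real == 0:
            yield ("real", next(real_iter))

schedule = list(alternate_batches(["s1", "s2", "s3", "s4"], ["r1"], synthetic_per_real=2))
print(schedule)
# [('synthetic', 's1'), ('synthetic', 's2'), ('real', 'r1'),
#  ('synthetic', 's3'), ('synthetic', 's4'), ('real', 'r1')]
```

The `synthetic_per_real` ratio is a tuning knob: lowering it gives real data more influence per epoch.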

7. Future Outlook and Emerging Trends

The next wave of synthetic engines will focus on:

- Adaptive control maps learned in real time, reducing manual tuning.

- Edge‑first variants optimized for low‑power platforms.

- Cross‑domain transfer techniques that bridge simulation to real warehouses or factories.

- Regulatory‑friendly modular architectures allowing selective disabling of proprietary components.

8. Actionable Recommendations for Decision Makers

- Audit Pipelines: Identify data acquisition bottlenecks and map them to synthetic replacements via Cosmos Transfer.

- Pilot Project: Run a 3‑month pilot generating synthetic datasets for a single use case; measure gains versus traditional collection.

- Create Governance Framework: Set guidelines for synthetic data usage, bias monitoring, and export compliance.

- Leverage Ecosystem Partnerships: Collaborate with NVIDIA’s partner community (Isaac Sim users) to share best practices and domain‑specific fine‑tuning recipes.

Cosmos Transfer 2.5 is more than a new model; it is a strategic catalyst that transforms data pipelines, accelerates perception training, and positions organizations for competitive advantage in the evolving autonomous systems landscape of 2026 and beyond.
