Multimodal AI developer Luma AI raises $900M in funding

Luma AI’s $900 M Series‑C: What It Means for Multimodal Startups and Enterprise Investors in 2025

In November 2025 Luma AI crossed a watershed milestone: a $900 million Series‑C that pushes its valuation past four billion dollars. For founders, VCs, and corporate leaders eyeing the next wave of generative AI, the deal is more than headline cash—it signals a shift in where multimodal technology will generate revenue, how it can be scaled, and what investors are willing to pay for early movers.

Executive Summary

- Luma’s Ray3 video‑reasoning model offers realistic motion generation from static images, a first in commercial multimodal AI.

- The company’s Photon image engine delivers 1080p output for under half a cent, setting a new cost benchmark.

- A $4 B+ valuation and backing from Andreessen Horowitz, AMD Ventures, and Saudi firm HUMAIN signal strong confidence in multimodal monetization.

- Key business levers: API‑driven SaaS, strategic creative‑suite integrations (Adobe Firefly), and a low‑latency edge deployment roadmap.

- Immediate takeaways for founders: focus on data alignment pipelines, partner with creative software vendors, and build a scalable inference stack that can run on commodity GPUs.

Strategic Business Implications of Luma’s Funding Surge

The capital infusion is not just a runway extension—it redefines the competitive landscape for multimodal startups. Here are three core implications:

- Valuation Benchmarking for Video‑Reasoning Startups : Prior to Luma, most multimodal companies (e.g., RunwayML, Descript) hovered around $1–2 B after Series B rounds. The jump to $4 B+ sets a new bar and forces competitors to accelerate product differentiation or seek strategic M&A.

- Investor Appetite for High‑Margin Video Generation : VCs are increasingly allocating funds toward generative models that can produce video at scale—a commodity that previously required massive compute budgets. Luma’s Photon cost efficiency demonstrates a viable path to profitability, encouraging investors to chase similar low‑cost architectures.

- Enterprise Integration as the Growth Engine : Adobe’s Firefly plug‑in indicates that embedding multimodal APIs into existing creative workflows is the fastest route to volume adoption. Startups that can partner with SaaS suites or cloud marketplaces stand to capture enterprise spend more quickly than those relying on standalone apps.

Funding Dynamics: What VCs Are Looking For in 2025 Multimodal AI

VCs today evaluate multimodal startups through a triad of lenses:

- IP Strength and Technical Breadth : Luma’s team, with roots in NeRF and DDIM, showcases deep expertise that translates into faster inference and higher visual fidelity—qualities VCs equate to defensibility.

- Ecosystem Partnerships : The Adobe integration signals that the company can embed itself into high‑traffic creative ecosystems. VC funds often prioritize companies with “ready‑made” partner pipelines that accelerate go‑to‑market.

- Scalable Monetization Pathways : Photon’s sub‑cent per image cost and Ray3’s ability to generate 16‑bit HDR clips suggest a high‑margin SaaS model. VCs assess whether the product can support a subscription tier, usage‑based billing, or enterprise licensing.

Business Model Blueprint: From API to Enterprise Licensing

Luma’s current revenue streams appear twofold:

- SaaS API Subscriptions : Tiered pricing based on inference volume, with a free tier for low‑volume developers. This model is standard in the generative space (e.g., OpenAI’s GPT‑4o API). It allows rapid scaling and predictable cash flow.

- Enterprise Licensing & Customization : Large media studios and advertising agencies pay premium rates for on‑prem or edge deployments, guaranteed SLAs, and data sovereignty guarantees. This mirrors the licensing approach of companies like Meta’s Make‑A‑Video when it launched.

Founders should map their pricing strategy against these models: usage‑based tiers for developers, fixed‑price enterprise contracts with dedicated inference clusters, and white‑label options for creative SaaS vendors.
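To make the first two pricing models concrete, here is a minimal sketch of a billing calculator. Every number and name in it (the `Tier` class, `DEVELOPER_TIERS`, the price points, the flat fee) is a hypothetical value chosen for illustration, not Luma's actual pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    """One usage band: clips up to `upto` (cumulative) cost `unit_price` each."""
    upto: float        # cumulative clip count where this band ends
    unit_price: float  # USD per clip inside this band


# Hypothetical price points, for illustration only.
DEVELOPER_TIERS = [
    Tier(upto=100, unit_price=0.00),           # free tier for low-volume developers
    Tier(upto=10_000, unit_price=0.10),
    Tier(upto=float("inf"), unit_price=0.08),  # volume discount
]


def usage_bill(clips, tiers=DEVELOPER_TIERS):
    """Graduated billing: each band's price applies only to clips inside that band."""
    if clips < 0:
        raise ValueError("clips must be non-negative")
    total, band_start = 0.0, 0
    for tier in tiers:
        if clips <= band_start:
            break
        in_band = min(clips, tier.upto) - band_start
        total += in_band * tier.unit_price
        band_start = tier.upto
    return round(total, 2)


def enterprise_bill(clips, flat_fee, included, overage_price):
    """Fixed-price contract: a flat fee covers `included` clips, overage is billed per clip."""
    overage = max(0, clips - included)
    return round(flat_fee + overage * overage_price, 2)
```

Under these assumed prices, `usage_bill(1100)` charges only for the 1,000 clips beyond the free tier, and `enterprise_bill(12000, 5000.0, 10000, 0.05)` adds overage for 2,000 clips on top of the flat fee. A white‑label option could reuse either function with a per‑vendor tier table.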

Technical Implementation Guide for Startups Scaling Video Generation

Ray3’s architecture is a hybrid of image embeddings, temporal diffusion layers, and NeRF‑style 3D reconstruction. While the details are proprietary, here are actionable engineering takeaways:

- Model Quantization & Pruning : Photon’s sub‑cent cost implies aggressive quantization (e.g., INT8) without sacrificing perceptual quality. Startups should invest early in hardware‑aware training pipelines.

- Edge Deployment Strategy : Low latency is critical for real‑time editing tools. Building containerized inference services that can run on NVIDIA RTX 3060 GPUs or even ARM‑based edge devices opens new verticals (e.g., mobile AR, live streaming overlays).

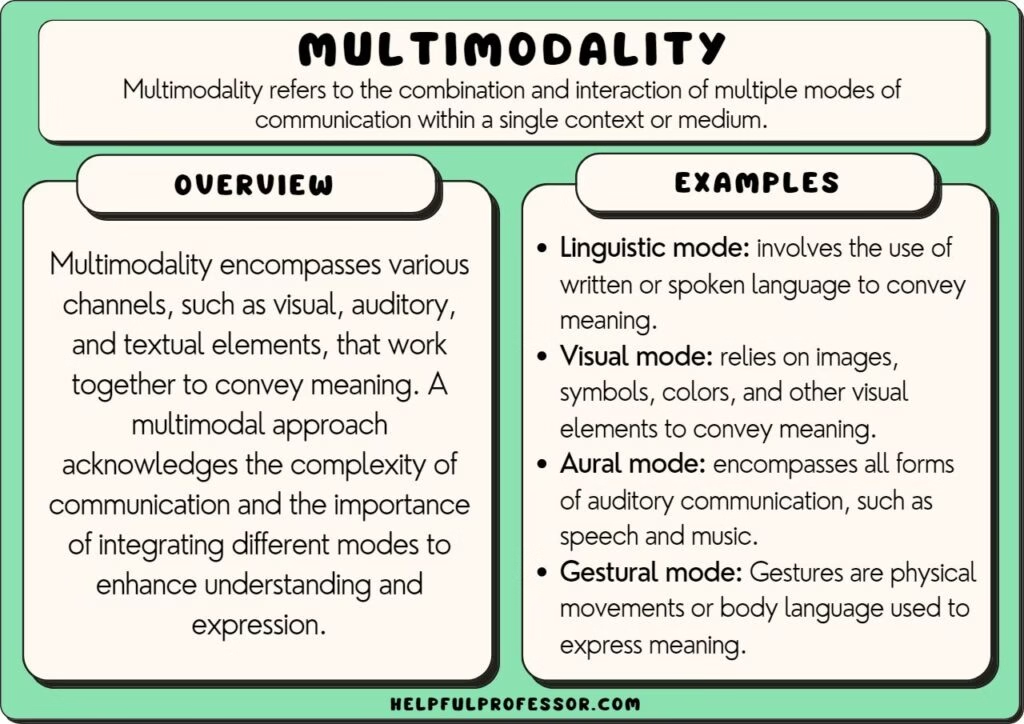

- Data Alignment Pipelines : As Forbes notes, multimodal training demands high‑quality aligned datasets. Automating the alignment process—using weak supervision and synthetic data generation—reduces label noise and speeds up model iteration.

- Monitoring & Feedback Loops : Human evaluation remains essential for video quality metrics. Deploying a lightweight annotation interface for real‑time feedback can surface issues before they hit production customers.
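As an illustration of the quantization point above, the following is a minimal sketch of symmetric per‑tensor INT8 quantization in plain Python. It is not Luma's method, which is not public; it only shows the basic idea of storing weights as 8‑bit integers plus one scale factor, and the function names are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # One scale for the whole tensor; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the integer codes."""
    return [v * scale for v in q]


def max_abs_error(weights):
    """Largest round-trip error; bounded by about scale / 2 for this scheme."""
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    return max(abs(a - b) for a, b in zip(weights, restored))
```

The round‑trip error is bounded by roughly half the scale, which is why tensors with a few large outliers quantize poorly under a single per‑tensor scale. Production pipelines typically use per‑channel scales and calibration data, which this sketch omits.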

Market Analysis: Where Luma’s Technology Fits in 2025 Ecosystem

The multimodal AI market is fragmenting into three distinct segments:

- Creative Content Generation : Companies like Adobe, Canva, and Figma are embedding AI to automate design tasks. Luma’s Ray3 can become the default “animation” engine within these suites.

- Advertising & Media Production : Agencies demand rapid video assets at scale. The cost advantage of Photon positions Luma as a low‑budget alternative to traditional VFX pipelines.

- Enterprise Automation : Internal marketing teams and e‑learning platforms need on‑demand video creation. A subscription API model can capture this market, especially if coupled with data privacy guarantees.

Competitive mapping shows Luma ahead of RunwayML in video reasoning, but behind Meta’s Make‑A‑Video in raw compute power. The differentiator will be ease of integration and cost efficiency.

ROI Projections for Enterprise Adopters

A typical mid‑size marketing team currently spends $50 k annually on outsourced video production (average 20 videos/month). Replacing this with an API that charges $0.10 per 1080p clip could reduce costs to $12 k, yielding a 76% savings. At 20 videos a month, the API fees alone would come to only about $24 a year, so the $12 k figure presumably also covers in‑house editing and review time. Add the benefit of faster turnaround times and creative flexibility, and the ROI becomes compelling within six months.
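The arithmetic behind these figures can be checked directly. The sketch below computes the saving from the two annual totals quoted above and, separately, the raw API fees at the stated volume and per‑clip price; the function names are illustrative.

```python
def annual_savings(current_spend, new_spend):
    """Return (absolute saving, saving as a fraction of current spend)."""
    saved = current_spend - new_spend
    return saved, saved / current_spend


def annual_api_fees(clips_per_month, price_per_clip):
    """Raw per-clip API charges over twelve months."""
    return clips_per_month * 12 * price_per_clip


saved, pct = annual_savings(50_000, 12_000)   # the article's two annual totals
fees = annual_api_fees(20, 0.10)              # 20 videos/month at $0.10 per clip

print(f"saving: ${saved:,} ({pct:.0%})")
print(f"API fees alone: ${fees:,.2f} per year")
```

The 76% figure follows from the $50 k and $12 k totals. The per‑clip fees are a very small part of the $12 k, so any real estimate depends mostly on the non‑API costs a team assumes.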

For larger studios, licensing Ray3 for on‑prem deployment can unlock a new revenue stream: a 10% margin on high‑volume video rendering jobs that would otherwise require GPU farms costing $1 M per year.

Potential Challenges and Mitigation Strategies

- Regulatory Scrutiny on Synthetic Media : As synthetic content proliferates, compliance frameworks (e.g., EU AI Act) will demand provenance metadata. Luma should embed watermarking and audit trails in its SDK.

- Data Privacy Concerns : Enterprises may be wary of sending proprietary images to the cloud. Offering a self‑hosted inference bundle mitigates this risk and can command premium pricing.

- Model Drift Over Time : Video generation models can degrade as visual styles evolve. Implementing continuous learning pipelines that ingest new data from partner studios will keep Ray3 relevant.

- Competitive Response : Large incumbents may launch their own low‑cost video engines. Luma’s advantage lies in its early mover status and deep integration with creative suites—maintaining these partnerships is critical.
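The provenance and audit‑trail idea from the first item can be sketched with the Python standard library alone. This is a simplified illustration under stated assumptions: the record fields, function names, and shared‑secret HMAC signing are all hypothetical, and it is a sidecar record rather than an in‑pixel watermark. A production system would more likely follow an established provenance standard such as C2PA.

```python
import hashlib
import hmac
import json


def make_provenance_record(clip_bytes, model, prompt, signing_key):
    """Build a signed record that ties a generated clip to its origin."""
    record = {
        "sha256": hashlib.sha256(clip_bytes).hexdigest(),  # fingerprint of the clip
        "model": model,
        "prompt": prompt,
        "synthetic": True,
    }
    # Sign a canonical JSON form so field order does not affect the signature.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["signature"] = hmac.new(signing_key, payload, hashlib.sha256).hexdigest()
    return record


def verify_provenance_record(clip_bytes, record, signing_key):
    """Check that the record is untampered and matches the clip's bytes."""
    claimed = dict(record)
    signature = claimed.pop("signature", "")
    payload = json.dumps(claimed, sort_keys=True).encode("utf-8")
    expected = hmac.new(signing_key, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(signature, expected)
    hash_ok = claimed.get("sha256") == hashlib.sha256(clip_bytes).hexdigest()
    return signature_ok and hash_ok
```

Verification fails if either the clip bytes or any record field changes after signing, which gives an auditor a simple tamper check. A shared secret only works when the verifier is trusted with the key; public verification would need asymmetric signatures.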

Strategic Recommendations for Founders and Investors

- Accelerate Partnering with Creative SaaS Platforms : Secure API agreements with Adobe, Canva, or Figma to embed Luma’s technology directly into the design workflow. This unlocks a massive user base without heavy marketing spend.

- Build a Tiered Pricing Engine Early : Design your billing platform to support per‑clip, subscription, and enterprise licensing models from day one. Leverage usage analytics to refine price points.

- Invest in Edge Deployment Capabilities : Develop lightweight inference containers that can run on consumer GPUs or mobile devices. This opens new markets in live streaming, AR/VR content creation, and on‑the‑go editing.

- Create a Data Governance Framework : Implement automated alignment pipelines and data labeling tools to maintain dataset quality. Offer customers the ability to host their own datasets for compliance.

- Plan for Regulatory Compliance Early : Embed watermarking, provenance logs, and user consent flows into your SDK. Position these as selling points to enterprise buyers concerned about deepfake detection.

- Leverage Investor Networks for Market Access : Use Andreessen Horowitz’s media contacts and AMD Ventures’ hardware expertise to accelerate go‑to‑market and infrastructure scaling.

Future Outlook: Where Luma AI Could Go Next

Looking beyond 2025, the trajectory for multimodal video generation is clear:

- Higher Resolution & Frame Rates : As consumer devices support 4K and 60 fps, models will need to scale without inflating costs. Photon’s quantization techniques can be extended to these targets.

- Cross‑Modal Storytelling Engines : Integrating text prompts with audio cues (e.g., voiceover scripts) will enable end‑to‑end video production pipelines—an area where Luma could pioneer a unified API.

- Open‑Source Collaboration : While proprietary models dominate revenue, open‑source components (e.g., diffusion backbones) can attract community contributions and reduce R&D costs.

- Vertical SaaS Solutions : Tailored offerings for e‑learning, real‑estate virtual tours, or sports highlights could become niche revenue streams.

Conclusion: Why Luma’s $900 M Round Matters to You

The Luma AI funding milestone is a bellwether for the multimodal AI space. It confirms that investors are willing to pay premium valuations for first movers in video generation, and it demonstrates a clear path to monetization through API licensing and enterprise contracts. For founders, the lesson is simple: build scalable, low‑cost inference engines; partner with creative SaaS platforms; and prepare for regulatory compliance from day one.

For VCs, Luma’s success underscores the importance of evaluating technical depth (NeRF, DDIM), ecosystem integration potential, and a clear revenue model. For corporate executives, the opportunity lies in reducing video production spend while gaining creative flexibility—an ROI that can be realized within months.

The next wave of multimodal startups will either ride Luma’s coattails by focusing on niche verticals or compete head‑on by delivering faster, cheaper, and more integrated solutions. The choice is yours.
