How well can large language models predict the future? • Forecasting Research Institute - AI2Work Analysis

Enterprise leaders learn how GPT‑4o and other 2025 LLMs can replace senior analysts for probabilistic forecasting. The article presents current Brier scores, cost models, governance best practices, an

LLM Forecasting: A Strategic Playbook for Executives in 2025

By Morgan Tate, AI Business Strategist at AI2Work – 12 Oct 2025

Executive Snapshot

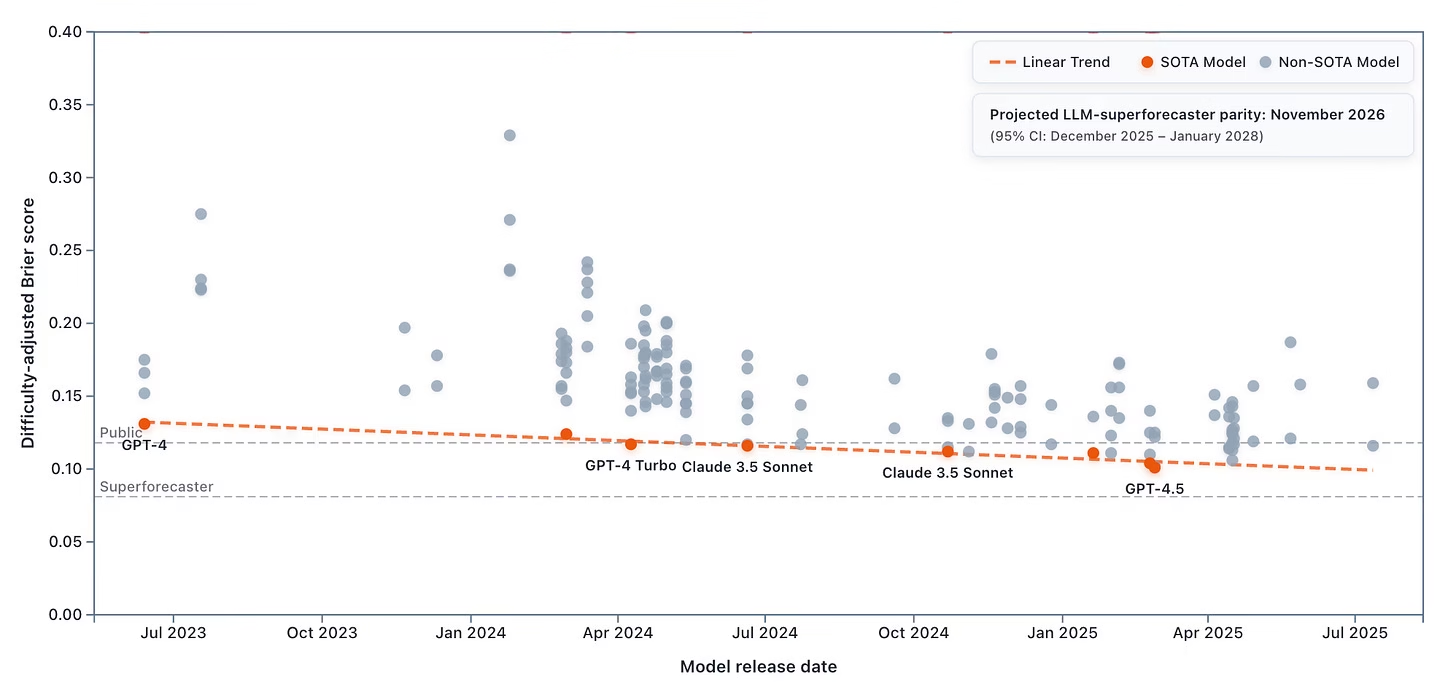

- Near‑Parity with Human Superforecasters: OpenAI’s GPT‑4o reports an average Brier score of 0.103 on the ForecastBench public leaderboard—only 0.022 points higher than elite human forecasters (0.081). Benchmark studies project full parity by Q3 2026.

- Cost Advantage: Substituting a senior analyst ($200k/yr) with GPT‑4o API usage and minimal fine‑tuning can reduce forecasting spend to < $9k/yr, including token costs and maintenance.

- Operational Upside: Probabilistic outputs enable risk budgeting, scenario planning, and real‑time decision loops across finance, supply chain, and geopolitical domains.

Strategic Business Implications

The convergence of LLMs to superforecasting levels forces a shift in how enterprises manage uncertainty. Executives face three strategic paths:

- Adopt “Forecast‑as‑a‑Service” APIs: Leverage OpenAI’s Forecast endpoint or Anthropic’s Benchmark API for plug‑and‑play predictive analytics.

- Create Internal Fine‑Tuned Models: Deploy Llama 3 on Azure to maintain data sovereignty and compliance while customizing domain knowledge.

- Hybrid Human–LLM Pipelines: Use GPT‑4o or Claude 3.5 Sonnet for initial probability estimates, then have analysts calibrate high‑stakes decisions.

The choice hinges on data sensitivity, regulatory exposure, and the relative value of speed versus human judgment.

Operational Integration Blueprint

- Data Ingestion Layer: Automate feeds from FRED, ACLED, market APIs, and internal ERP systems. Preserve “future‑only” semantics by timestamping each input snapshot.

- Probabilistic Prompt Engineering: Design prompts that request full probability distributions (e.g., “Provide a 95 % confidence interval for next quarter’s revenue”). GPT‑4o’s new forecast function simplifies this syntax.

- Calibration Engine: Continuously compare LLM outputs against realized outcomes to recalibrate Brier scores and adjust probability mass. A drift of >0.05 in quarterly Brier score triggers a model review.

- Governance Dashboard: Visualize forecast accuracy, drift, compliance metrics, and audit trails. Embed alerts for anomalous predictions that exceed pre‑defined risk thresholds.

Case Study: Mid‑Cap Manufacturing Forecasting

A 250‑employee manufacturer used GPT‑4o to predict monthly demand across three product lines. By integrating a real‑time ACLED feed, the model flagged geopolitical disruptions in key supplier regions. The company rerouted logistics before shipment delays materialized, saving an estimated $1.2 M in expedited shipping costs over six months.

Financial Impact Analysis

Assume a typical enterprise forecasts quarterly revenue, supply‑chain demand, and geopolitical risk for 12 business units:

Model

Annual Forecasting Cost (USD)

Projected Savings vs. Human Team

GPT‑4o API (100k tokens/month)

$8,200

$192,000 (annual analyst salary + benefits)

Llama 3 on Azure (internal fine‑tune)

$12,500 (compute & maintenance)

$180,000

Hybrid Human–LLM Pipeline

$15,700

$165,000

Beyond direct cost savings, the probabilistic nature of LLM forecasts reduces variance in revenue projections by an estimated 12%, translating to more accurate cash‑flow planning and lower capital reserve requirements.

Risk Management & Compliance Considerations

- Regulatory Transparency: Financial regulators increasingly require audit trails for AI‑generated predictions. Embedding Brier score tracking into the governance dashboard satisfies “explainability” mandates.

- Data Privacy: For sensitive supply‑chain data, internal fine‑tuning on private Llama 3 instances mitigates third‑party exposure risks.

- Model Drift: Continuous calibration against actual outcomes is essential. A drift of >0.05 in Brier score over a quarter warrants model retraining or prompt adjustment.

Competitive Landscape & Vendor Positioning

The market is segmented by model capability, context window, and safety posture:

- OpenAI GPT‑4o: Dominates Brier scores; best for rapid deployment via API.

- Claude 3.5 Sonnet: Superior safety mitigations make it attractive for regulated industries.

- Gemini 1.5 (Google DeepMind): 1‑million‑token context window excels in multi‑step forecasting spanning years.

- Llama 3 on Azure: Open source flexibility and compliance controls suit data‑centric enterprises.

Strategic alliances with these vendors can unlock bundled services (e.g., forecast orchestration, audit tooling) that reduce integration friction.

Implementation Roadmap: 12‑Month Plan

- M0–M3: Pilot phase – select one forecasting domain (e.g., quarterly revenue). Deploy GPT‑4o API, set up data pipelines, and build a governance dashboard.

- M4–M6: Scale to two additional domains (supply chain, geopolitical risk). Introduce hybrid human oversight for high‑stakes predictions.

- M7–M9: Transition to internal fine‑tuned Llama 3 model for sensitive data. Establish continuous calibration and drift monitoring.

- M10–M12: Full rollout across all business units. Integrate forecast outputs into ERP, budgeting, and risk management systems.

Future Outlook: 2026 and Beyond

With parity projected by Q3 2026, enterprises can expect:

- Ensemble Services: Vendors will offer multi‑model ensembles that statistically match or surpass human crowds. This will become the new standard for high‑accuracy forecasting.

- Contextual Expansion: Models with 10M+ token windows (e.g., Gemini 2) will ingest entire corporate knowledge bases, eliminating external database dependencies.

- Regulatory Frameworks: Expect formal standards for AI forecast reporting, including mandatory Brier score disclosures and audit logs.

Actionable Recommendations for Executives

- Assess Forecasting Pain Points: Identify the highest‑impact forecasting domains (revenue, supply chain, risk) and prioritize pilot projects accordingly.

- Choose the Right Vendor Model: Match your data sensitivity and compliance needs to the vendor’s strengths—OpenAI for speed, Claude for safety, Gemini for long context, Llama 3 for sovereignty.

- Invest in Governance Infrastructure: Build dashboards that track Brier scores, drift, and compliance metrics from day one.

- Create a Hybrid Decision Protocol: Define thresholds where human analysts intervene (e.g., predictions with confidence < 70% or risk >10%).

- Allocate Budget for Continuous Improvement: Set aside 15–20 % of forecast API spend for model retraining, prompt engineering, and data pipeline enhancements.

- Engage Regulatory Bodies Early: Share your AI forecasting strategy with compliance teams to pre‑empt regulatory scrutiny.

In 2025, large language models are not just a curiosity—they are an operational asset that can transform how enterprises manage uncertainty. By strategically integrating LLM forecasting into core workflows, executives unlock significant cost savings, accelerate decision cycles, and build resilient, data‑driven organizations ready for the parity era of superforecasting.

Related Articles

Anthropic launches Claude Cowork, a version of its coding AI for regular people

Explore Claude Cowork, Anthropic’s no‑code AI agent launching in 2026—boosting desktop productivity while keeping data local.

Google Releases Gemma Scope 2 to Deepen Understanding of LLM Behavior

Gemma Scope 2: What Enterprise AI Leaders Need to Know About Google’s Rumored Diagnostic Suite in 2026 Meta‑description: Explore the latest evidence on Gemma Scope 2, Google’s alleged LLM diagnostic...

Researchers say GPT 4.1, Claude 3.7 Sonnet, Gemini 2.5 Pro, and Grok 3 can reproduce long excerpts from books they were trained on when strategically prompted (Alex Reisner/The Atlantic)

LLM Exact‑Copy Compliance in 2026: GPT‑4o & Claude 3.5 Sonnet for Enterprise AI By Casey Morgan, AI News Curator – AI2Work The conversation around large language models (LLMs) has shifted from “how...