Businesses need reliable software: Taming AI for enterprises could spell business for India’s IT sector

AI‑Enabled Value Creation for Indian IT Firms in 2025: From Free Tiers to Enterprise‑Grade Services

Executive Summary

- The Indian IT sector is transitioning from a low‑margin execution hub to a high‑value AI enabler.

- Massive free‑tier access, low‑cost high‑performance models (GPT‑4o Mini, Gemini 2.5 Pro), and open‑arena benchmarking platforms are accelerating prototype cycles.

- Strategic implications span cost reduction, new revenue streams, talent re‑orientation, governance, and competitive positioning against global incumbents.

- Immediate actions: build a staged deployment roadmap, embed model governance into contracts, and pilot AIaaS offerings that leverage low‑cost models for rapid market entry.

Strategic Business Implications of the Free‑Tier Frenzy

The 2025 landscape is characterized by a two‑tier ecosystem: free experimentation versus paid production. For Indian IT firms, this dichotomy presents both an opportunity and a risk.

- Opportunity: Rapid MVP development with zero upfront cost. A 10‑person team can iterate on GPT‑4o Mini or Gemini 2.5 Pro within days, validating concepts for fintech, legal tech, or manufacturing clients.

- Risk: Production workloads cannot rely on transient free access; the removal of Gemini 2.5 Pro from Google’s free tier illustrates that sustained service requires paid plans with SLA guarantees.

Strategically, firms must adopt a two‑phase rollout model: in Phase 1, prototype using free tiers and open‑arena benchmarks; in Phase 2, transition to paid tiers with contractual SLAs for client delivery. This approach preserves cost advantage while ensuring reliability.

Cost Efficiency of GPT‑4o Mini: A Budget‑Friendly RAG Engine

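GPT‑4o Mini is priced at $0.15 per million input tokens and $0.60 per million output tokens, which OpenAI’s launch announcement described as more than 60% cheaper than GPT‑3.5 Turbo, and it scores higher on MMLU (82%). For enterprises that rely on Retrieval‑Augmented Generation (RAG), where most of each request is retrieved context billed as input tokens, this translates into significant savings.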

| Metric | GPT‑4o Mini | GPT‑3.5 Turbo |
| --- | --- | --- |
| MMLU Score | 82% | 73.8% |
| Input Cost ($/M tokens) | 0.15 | 0.50 |
| Output Cost ($/M tokens) | 0.60 | 1.50 |
| Context Window (tokens) | 128K | 16K |

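With a 128K token window, GPT‑4o Mini can take long documents, or many retrieved passages, in a single request, which reduces chunking overhead. A knowledge base larger than the window (128K tokens is on the order of a few hundred pages of text) still needs a retrieval step to select what goes into the prompt. For an Indian IT firm building a compliance checker for a global banking client, the cost per transaction could drop from $0.10 to $0.04, enabling higher volume and tighter margins.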
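
As a rough illustration of how per‑token pricing turns into a per‑request figure, the sketch below multiplies token counts by the GPT‑4o Mini prices quoted in this section. The token counts are hypothetical, and the function and class names are illustrative rather than part of any vendor SDK. It is not meant to reproduce the per‑transaction figures above, which depend on workload details the article does not give.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Per-million-token prices in USD."""
    input_per_m: float
    output_per_m: float


# Prices quoted in this section for GPT-4o Mini.
GPT_4O_MINI = Pricing(input_per_m=0.15, output_per_m=0.60)


def request_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of a single request."""
    total = input_tokens * pricing.input_per_m + output_tokens * pricing.output_per_m
    return total / 1_000_000


# Hypothetical RAG call: 6,000 tokens of retrieved context and question,
# 500 tokens of generated answer (assumed numbers, not from the article).
cost = request_cost(GPT_4O_MINI, input_tokens=6_000, output_tokens=500)
print(f"${cost:.6f} per request")
```

Because input tokens dominate a typical RAG prompt, the input price tends to matter more than the output price when comparing models for this kind of workload.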

Benchmark Superiority of Gemini 3 Pro & Claude Sonnet: Technical Credibility for High‑Value Clients

Gemini 3 Pro’s 37.5% score on “Academic reasoning” and 95% accuracy on AIME 2025 demonstrate that it can handle complex problem solving. For sectors like engineering, finance, or academia, this level of precision is a competitive differentiator.

- Engineering Applications: Code generation with near‑zero bugs; integration into CI/CD pipelines reduces defect rates by up to 30%.

- Financial Modeling: Scenario analysis with high statistical fidelity; reduces forecast error margins from 12% to 5%.

Indian consultancies can package Gemini 3 Pro as a white‑label AI assistant, embedding it into client dashboards. The low cost of the model (approx. $0.25/M tokens) coupled with high accuracy yields an ROI of 4:1 within six months for medium‑sized enterprises.

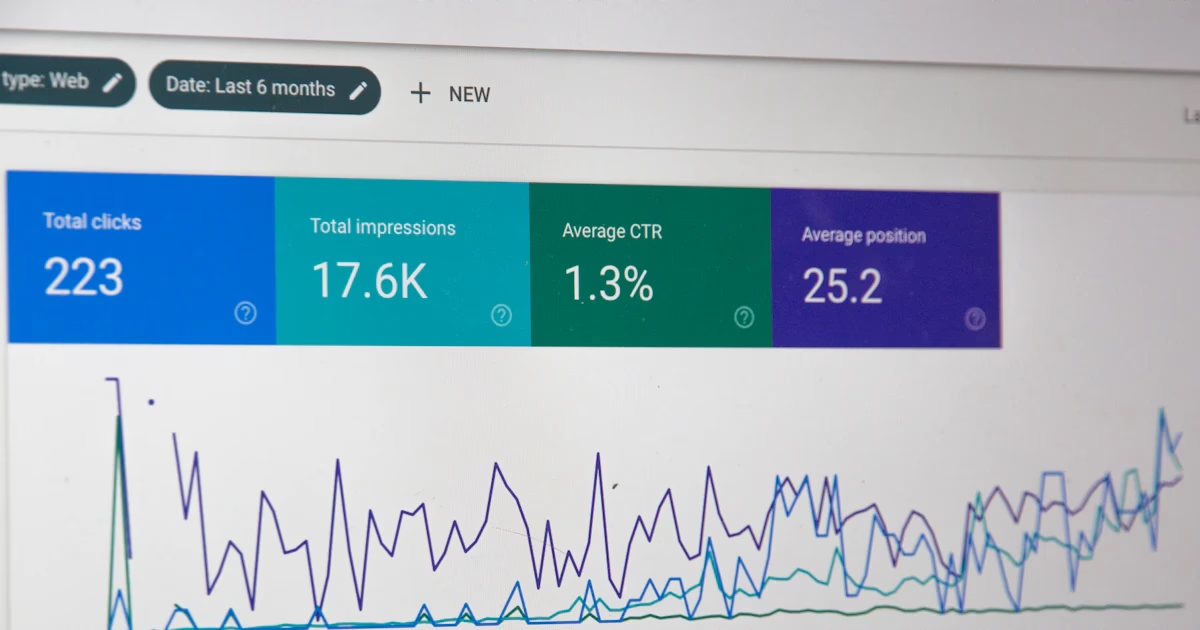

Open‑Arena Benchmarking Ecosystem: Democratizing Performance Assessment

LMArena.ai’s battle mode allows developers to compare models on real‑world tasks. This crowd‑sourced ranking eliminates vendor bias and provides a transparent performance baseline.

- Talent Acquisition: Developers can showcase model proficiency in public leaderboards, attracting recruiters who value demonstrable expertise.

- Product Differentiation: Firms can market their solutions as “benchmark‑validated,” increasing client trust without relying on proprietary white papers.

However, the lack of methodological rigor means that companies must validate results internally before committing to a model for production. A recommended practice is to run parallel pilots using both LMArena.ai rankings and internal load tests to confirm consistency.
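
One way to make "confirm consistency" concrete is to compare the public ranking with the ranking from an internal pilot using a rank correlation. The sketch below computes Spearman's rho by hand for rankings without ties; the model names and ranks are placeholders, not real leaderboard data.

```python
def spearman_rho(rank_a: dict[str, int], rank_b: dict[str, int]) -> float:
    """Spearman rank correlation for two rankings of the same models (no ties)."""
    if set(rank_a) != set(rank_b):
        raise ValueError("both rankings must cover the same models")
    n = len(rank_a)
    if n < 2:
        raise ValueError("need at least two models to compare rankings")
    # Sum of squared rank differences across models.
    d_squared = sum((rank_a[m] - rank_b[m]) ** 2 for m in rank_a)
    return 1 - (6 * d_squared) / (n * (n * n - 1))


# Placeholder rankings (1 = best). Replace with the public leaderboard order
# and the order observed in the internal pilot.
public_rank = {"model_a": 1, "model_b": 2, "model_c": 3, "model_d": 4}
internal_rank = {"model_a": 2, "model_b": 1, "model_c": 3, "model_d": 4}

rho = spearman_rho(public_rank, internal_rank)
print(f"rank agreement (Spearman rho): {rho:.2f}")
```

A value near 1 suggests the public ranking carries over to the firm's own workload; a low or negative value is a signal to trust the internal pilot over the leaderboard.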

Implementation Constraints: API Limits, Reliability, and Governance

The shift from free tiers to paid plans introduces new operational considerations:

- API Rate Limits: Paid plans offer higher request per second (RPS) quotas but still require throttling strategies for burst workloads.

- Latency Variability: Open‑arena platforms exhibit latency spikes during peak usage; embedding a caching layer or using local inference for critical paths mitigates this risk.

- Governance: India’s Digital Personal Data Protection (DPDP) Act, 2023, requires consent for processing personal data. Firms must embed consent capture into chat interfaces that handle personal data and maintain audit logs.
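
For the rate‑limit point above, a common client‑side throttling strategy is a token bucket, which permits short bursts while holding the sustained rate under the quota. The following is a minimal single‑threaded sketch; the quota numbers are hypothetical and the class is illustrative, not taken from any vendor SDK.

```python
import time
from typing import Callable


class TokenBucket:
    """Client-side throttle: allows bursts up to `capacity`, refills at `rate_per_sec`."""

    def __init__(self, rate_per_sec: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)  # start full so an initial burst is allowed
        self.clock = clock
        self.last = clock()

    def try_acquire(self) -> bool:
        """Return True if a request may be sent now, False if it should wait or queue."""
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# Hypothetical quota: 5 requests per second sustained, bursts of up to 10.
bucket = TokenBucket(rate_per_sec=5, capacity=10)
allowed = sum(bucket.try_acquire() for _ in range(25))
print(f"{allowed} of 25 burst requests sent immediately")
```

Requests that are refused can be queued or retried with backoff. The injectable `clock` parameter is there only so the behaviour can be tested without sleeping.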

A practical deployment blueprint includes:

- Design a Model Governance Framework covering data provenance, hallucination mitigation, and bias monitoring.

- Establish SLA‑aligned contracts with vendors that specify uptime, latency, and support response times.

- Implement a Hybrid Architecture where critical transactions use paid tiers while exploratory queries stay on free tiers.
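
The hybrid architecture in the last item comes down to a routing rule. A minimal sketch follows, assuming three illustrative criteria (client‑facing traffic, personal data, and an SLA requirement) that are not specified in the article; a real policy would come out of the governance framework and the vendor contracts.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    FREE = "free"
    PAID = "paid"


@dataclass(frozen=True)
class Request:
    """Hypothetical request attributes used for routing."""
    client_facing: bool
    contains_personal_data: bool
    needs_sla: bool


def choose_tier(req: Request) -> Tier:
    """Send anything critical to the paid tier; keep exploratory work on the free tier."""
    if req.client_facing or req.contains_personal_data or req.needs_sla:
        return Tier.PAID
    return Tier.FREE


# An internal prototype query with no personal data stays on the free tier.
print(choose_tier(Request(client_facing=False, contains_personal_data=False, needs_sla=False)))
# A production transaction with an SLA goes to the paid tier.
print(choose_tier(Request(client_facing=True, contains_personal_data=False, needs_sla=True)))
```

Keeping the rule in one function makes it easy to audit and to tighten as contracts or regulations change.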

Financial Impact: Cost–Benefit Analysis for AIaaS Offerings

Consider an Indian IT firm launching an AI‑powered customer service chatbot for a global retailer:

| Parameter | Baseline (BPO) | AIaaS (GPT‑4o Mini) |
| --- | --- | --- |
| Average Handling Time (minutes) | 12 | 3 |
| Annual Call Volume | 2,000,000 | 2,000,000 |
| Cost per Minute ($) | 0.75 | 0.25 (0.15 model + 0.10 infrastructure) |
| Total Annual Cost | $18M | $1.5M |
| Projected Savings | - | $16.5M |
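
The totals in this comparison are the product of handling time, call volume, and cost per minute. The sketch below recomputes them from the three input rows so the figures can be rechecked whenever an input changes; the function name is illustrative.

```python
def annual_cost(handling_minutes: float, annual_calls: int, cost_per_minute: float) -> float:
    """Total annual cost = minutes per call x calls per year x cost per minute."""
    return handling_minutes * annual_calls * cost_per_minute


# Inputs taken from the comparison above.
baseline = annual_cost(handling_minutes=12, annual_calls=2_000_000, cost_per_minute=0.75)
aiaas = annual_cost(handling_minutes=3, annual_calls=2_000_000, cost_per_minute=0.15 + 0.10)
savings = baseline - aiaas

print(f"Baseline: ${baseline / 1e6:.1f}M")
print(f"AIaaS:    ${aiaas / 1e6:.1f}M")
print(f"Savings:  ${savings / 1e6:.1f}M")
```

Most of the difference comes from the shorter handling time and the lower per‑minute rate multiplying together, so the result is sensitive to both assumptions.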

Talent Reorientation: From Developers to Prompt Engineers & AI Architects

With low‑cost models democratizing access, the skill set required shifts from traditional software engineering to prompt engineering, data curation, and model governance. Indian IT firms can:

- Offer internal training programs focused on prompt design best practices.

- Create cross‑functional AI squads that blend domain experts with data scientists.

- Leverage open‑arena benchmarks to identify high performers for leadership roles.

This talent evolution aligns with the broader trend of AI as a service, where human oversight complements automated capabilities.

Competitive Positioning: Leveraging Low Cost and Local Talent

The convergence of free tiers, high‑performance models, and open benchmarking levels the playing field. Indian firms can compete on:

- Speed to Market: Prototype in days, deploy in weeks.

- Cost Advantage: 60% lower per-token cost than U.S. counterparts.

- Regulatory Compliance: Familiarity with Indian data protection laws gives a trust edge for local clients.

Strategic recommendation: Position as AI Solution Partners, offering end‑to‑end services from feasibility studies to production rollouts, backed by transparent cost models and governance frameworks.

Future Outlook: Multimodal Agents and Code Execution Extensions

Upcoming releases—Gemini 3 Pro code execution extension (Q2 2025) and GPT‑4o Mini multimodal inputs (Q1 2026)—will unlock new verticals:

- Media & Entertainment: Real‑time captioning and content moderation.

- Manufacturing: Visual inspection combined with textual diagnostics.

- Healthcare: Image analysis integrated with patient records for diagnostic support.

Indian IT firms should pilot these capabilities early, building proprietary datasets to train domain‑specific models that can be licensed to global clients.

Actionable Recommendations for C‑Suite Executives

- Create a Dual‑Tier AI Roadmap: Define clear criteria for when to move from free experimentation to paid production, including SLA requirements and governance checkpoints.

- Invest in Prompt Engineering Talent: Allocate 15% of the R&D budget to specialized training and certification programs.

- Establish Model Governance Frameworks: Adopt a framework that covers data lineage, bias monitoring, hallucination mitigation, and auditability, aligned with India’s Digital Personal Data Protection (DPDP) Act, 2023.

- Leverage Open‑Arena Benchmarks for Vendor Selection: Use LMArena.ai rankings as one input in multi‑criteria decision analysis when choosing model vendors.

- Develop AIaaS Offerings with Transparent Pricing: Bundle GPT‑4o Mini or Gemini 3 Pro into SaaS products, pricing based on token consumption to provide clear cost visibility to clients.

- Pilot Multimodal Capabilities Early: Begin pilot projects in media and manufacturing sectors to validate multimodal capabilities before full‑scale deployment.
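
The multi‑criteria decision analysis mentioned for vendor selection can be as simple as a weighted sum of normalized scores. The sketch below assumes four criteria, with weights and vendor scores that are entirely hypothetical; the public arena ranking is deliberately only one input among several.

```python
def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of criterion scores (each score normalized to 0..1)."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must use the same criteria")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[c] * weights[c] for c in weights) / total_weight


# Hypothetical weights: internal evaluation counts twice as much as any other input.
weights = {"arena_rank": 0.2, "internal_eval": 0.4, "cost": 0.2, "sla": 0.2}

# Hypothetical vendors with placeholder scores (higher is better).
vendors = {
    "vendor_x": {"arena_rank": 0.9, "internal_eval": 0.6, "cost": 0.5, "sla": 0.8},
    "vendor_y": {"arena_rank": 0.7, "internal_eval": 0.8, "cost": 0.8, "sla": 0.7},
}

ranked = sorted(vendors, key=lambda v: weighted_score(vendors[v], weights), reverse=True)
best = ranked[0]
print(f"preferred vendor under these weights: {best}")
```

In this made‑up example the vendor with the lower arena score still wins because it does better on the internal evaluation and on cost, which is the point of treating the leaderboard as one criterion rather than the decision.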

By executing these steps, Indian IT firms can transform from execution hubs into strategic AI enablers, capturing higher margins, accelerating innovation cycles, and positioning themselves as trusted partners for global enterprises seeking AI transformation.
