Generative AI Adoption in 2025: A Strategic Blueprint for Enterprise Leaders


Executive Summary

- Hybrid, multi‑vendor model stacks are now the norm; vendor lock‑in is eroding.

- GPT‑4o remains the production workhorse, but agentic models (Claude 3.5 Sonnet, Gemini 1.5) are rapidly filling regulated niches.

- Enterprise AI budgets have surged to $75 B+ in 2025, shifting AI from pilot projects to core operating expenses.

- Predictable pricing, robust responsible‑AI (RAI) tooling, and seamless interoperability will be decisive competitive differentiators.

In a landscape where generative AI (Gen‑AI) is moving beyond hype into the heart of business operations, senior technology leaders must understand not just what models are available but how to structure budgets, governance, and workflows to capture real value. This article distills the latest Andreessen Horowitz research into a practical strategy framework that aligns with leadership, operational excellence, workflow optimization, decision science, and long‑term strategic positioning.

Strategic Business Implications of Multi‑Vendor Gen‑AI Portfolios

The 2025 cohort shows that 78 % of CIOs run at least two distinct generative‑AI engines in production simultaneously. This shift is driven by three forces:

- Performance heterogeneity: No single model delivers optimal results across all workloads. GPT‑4o excels at multimodal content creation, while Claude 3.5 Sonnet offers policy‑driven summarisation for compliance teams.

- Cost elasticity: Vendors compete on token pricing and subscription tiers, allowing enterprises to cherry‑pick cost‑effective engines for high‑volume tasks.

- Risk diversification: Multi‑vendor stacks reduce dependency risk and enable rapid re‑routing in case of outages or policy changes.
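The rapid re‑routing that risk diversification enables can be sketched as an ordered failover across engines. This is a minimal illustration under stated assumptions: `Engine`, `EngineUnavailable`, and the stub adapters are hypothetical stand‑ins, since each real vendor SDK has its own client and error types that an adapter layer would need to map onto a common interface.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


class EngineUnavailable(Exception):
    """Raised by a (hypothetical) engine adapter when its backend is down or rejects the call."""


@dataclass
class Engine:
    name: str                         # label used in logs and routing decisions
    complete: Callable[[str], str]    # hypothetical adapter: prompt -> completion


def route_with_failover(prompt: str, engines: Sequence[Engine]) -> tuple[str, str]:
    """Try engines in priority order; return (engine name, completion) from the first that succeeds."""
    errors: list[str] = []
    for engine in engines:
        try:
            return engine.name, engine.complete(prompt)
        except EngineUnavailable as exc:
            # Record the failure and fall through to the next engine in the list.
            errors.append(f"{engine.name}: {exc}")
    raise RuntimeError("all engines failed: " + "; ".join(errors))


# Stub adapters standing in for real vendor SDK calls.
def primary_down(prompt: str) -> str:
    raise EngineUnavailable("HTTP 503")


def secondary_ok(prompt: str) -> str:
    return f"summary of: {prompt}"


name, text = route_with_failover(
    "Q3 compliance memo",
    [Engine("primary", primary_down), Engine("secondary", secondary_ok)],
)
print(name, "->", text)  # secondary -> summary of: Q3 compliance memo
```

Ordering the engine list by cost rather than by preference lets the same function serve the cost‑elasticity point too: the cheapest adequate engine goes first, and pricier ones act as fallbacks.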

For leaders, this means that procurement strategies must evolve from “single‑vendor lock‑in” to a model marketplace mindset. Contracts should specify clear SLAs for each engine, include token caps, and mandate audit logs for every model interaction. This structure transforms Gen‑AI from an experimental sandbox into a predictable line item on the operating budget.
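Per‑engine SLAs and token caps only help if something enforces them. The following is a minimal sketch that assumes enforcement happens at an internal API gateway. `EngineContract`, the engine name, the cap, and the latency threshold are all hypothetical, and audit logging is left out to keep the example short.

```python
from dataclasses import dataclass


@dataclass
class EngineContract:
    """Hypothetical per-engine contract terms, enforced at an internal gateway."""
    engine: str
    monthly_token_cap: int     # token cap written into the contract
    latency_sla_ms: int        # response-time threshold the vendor commits to
    tokens_used: int = 0
    slow_calls: int = 0        # responses slower than the SLA threshold

    def authorize(self, tokens: int) -> None:
        """Call before dispatch: reserve the estimated tokens, refusing the request if it would exceed the cap."""
        if self.tokens_used + tokens > self.monthly_token_cap:
            raise RuntimeError(
                f"{self.engine}: monthly cap of {self.monthly_token_cap:,} tokens reached"
            )
        self.tokens_used += tokens

    def record_latency(self, latency_ms: int) -> None:
        """Call after the response arrives: count responses slower than the SLA threshold."""
        if latency_ms > self.latency_sla_ms:
            self.slow_calls += 1

    @property
    def cap_utilisation(self) -> float:
        return self.tokens_used / self.monthly_token_cap


contract = EngineContract("engine-a", monthly_token_cap=1_000_000, latency_sla_ms=2_000)
contract.authorize(400_000)
contract.record_latency(1_500)
contract.authorize(500_000)
contract.record_latency(2_500)
print(contract.cap_utilisation, contract.slow_calls)  # 0.9 1
```

Keeping the terms as data means a renegotiated cap or SLA is a configuration change, not a code change, which fits the marketplace view of engines as interchangeable services.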

Operationalizing Agentic AI in Regulated Industries

Agentic capabilities, where models can autonomously orchestrate tasks across multiple APIs, are gaining traction at 36 % of surveyed enterprises. In finance, for example, a Gemini 1.5 agent can ingest transaction data, run fraud detection models, and trigger compliance alerts without human intervention.

Key operational levers:

- Policy‑as‑Code: Claude 3.5 Sonnet’s built‑in policy engine allows teams to encode regulatory constraints directly into the model workflow, reducing audit risk.

- Observability & Control: Implement a centralized observability platform that captures token usage, latency, and compliance flags across all agentic workflows.

- Iterative Governance: Use a feedback loop where compliance officers review agent decisions weekly, feeding corrections back into the policy engine.
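Policy‑as‑code means regulatory constraints live in versioned, reviewable code rather than in prompts. The sketch below is vendor‑neutral and does not use any model vendor's policy features. The rule IDs, thresholds, action schema, and version string are hypothetical. Corrections from the weekly compliance review would ship as a new `Policy` version, so every decision can be traced to the rules in force at the time.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    rule_id: str
    description: str
    violated: Callable[[dict], bool]   # True if the proposed action breaks the rule


@dataclass(frozen=True)
class Policy:
    version: str                       # cited in every decision so audits can replay it
    rules: tuple[Rule, ...]

    def evaluate(self, action: dict) -> list[str]:
        """Return the IDs of every rule the proposed action violates (empty = allowed)."""
        return [rule.rule_id for rule in self.rules if rule.violated(action)]


# Hypothetical rules for a fraud agent that can block transactions or file alerts.
POLICY = Policy(
    version="2025.06-r3",
    rules=(
        Rule("R1", "Blocks above 10,000 need human sign-off",
             lambda a: a["type"] == "block_transaction"
             and a["amount"] > 10_000 and not a.get("human_approved", False)),
        Rule("R2", "Every alert must carry a reason code",
             lambda a: a["type"] == "file_alert" and not a.get("reason_code")),
    ),
)


def gate(action: dict) -> str:
    """Decide whether a proposed agent action may run or must be held for compliance review."""
    violations = POLICY.evaluate(action)
    if not violations:
        return "execute"
    return f"escalate ({POLICY.version}: {', '.join(violations)})"


print(gate({"type": "block_transaction", "amount": 2_500}))   # execute
print(gate({"type": "block_transaction", "amount": 50_000}))  # escalate (2025.06-r3: R1)
```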

The ROI is tangible: a fintech firm reduced false positives by 40 % in just 90 days after deploying Gemini 1.5 for real‑time fraud detection, translating to millions in avoided losses and regulatory fines.

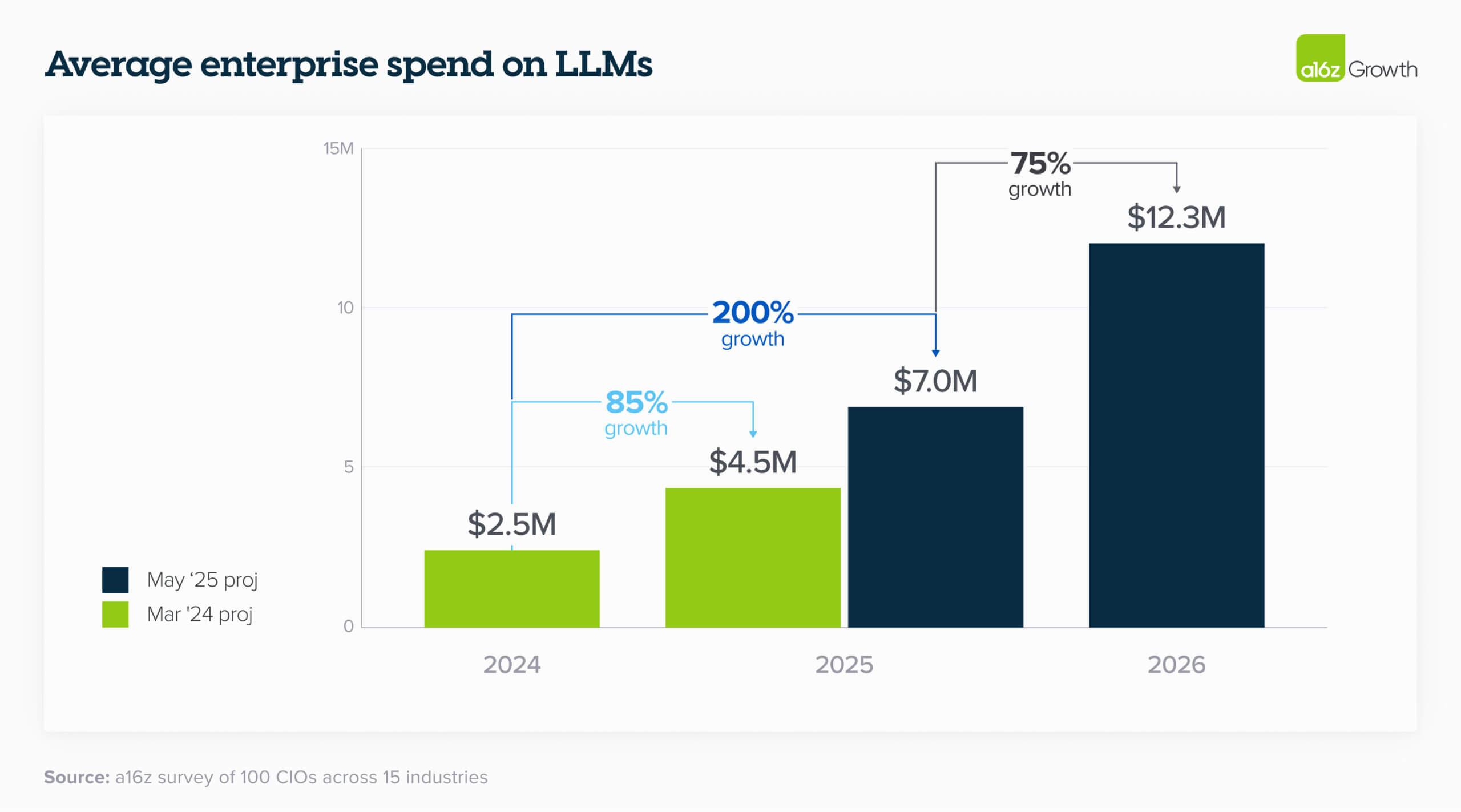

Budgeting for AI as an Operating Expense

Enterprise AI budgets now average $1.2 B per year, a 75 % YoY increase from 2024. The allocation pattern is:

- 55 % to core IT (infrastructure, data pipelines)

- 25 % to business units (application development, analytics)

- 20 % to external vendor contracts

This shift signals that AI must be treated like any other critical enterprise service: service‑level agreements, cost allocation models, and return‑on‑investment frameworks become mandatory.
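Applying the reported split to the reported average gives a quick sanity check on the figures. The 2024 baseline below is derived from the 75 % growth figure; the research does not report it directly.

```python
# Inputs are the averages reported above; the 2024 baseline is derived, not reported.
AVG_BUDGET_2025_USD = 1.2e9
YOY_GROWTH = 0.75
SPLIT = {"core IT": 0.55, "business units": 0.25, "external vendors": 0.20}

assert abs(sum(SPLIT.values()) - 1.0) < 1e-9, "shares must cover the whole budget"

allocation = {bucket: AVG_BUDGET_2025_USD * share for bucket, share in SPLIT.items()}
implied_2024_usd = AVG_BUDGET_2025_USD / (1 + YOY_GROWTH)

for bucket, usd in allocation.items():
    print(f"{bucket:>16}: ${usd / 1e6:,.0f} M")
print(f"implied 2024 average: ${implied_2024_usd / 1e6:,.0f} M")
```

With these inputs the buckets come to $660 M, $300 M, and $240 M, and the implied 2024 average is roughly $686 M.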

Practical budgeting steps:

- Create a token‑based costing model that aligns with vendor pricing tiers.

- Allocate a fixed percentage of the core IT budget to GPU clusters (NVIDIA H100) and to edge deployments where latency is mission‑critical.

- Set aside 10 % of the external spend for RAI tooling subscriptions, ensuring auditability and compliance coverage.
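A token‑based costing model can start as a simple chargeback that prices each department's metered token counts at the rate of the engine that served them. The prices, engine names, and usage rows below are hypothetical placeholders. Real figures would come from contracted tier rates and gateway logs.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1M tokens; substitute contracted tier rates.
PRICE_PER_M_TOKENS = {"engine-a": 5.00, "engine-b": 0.60}

# Hypothetical metered usage rows: (department, engine, tokens).
usage = [
    ("marketing", "engine-a", 2_000_000),
    ("compliance", "engine-a", 1_000_000),
    ("compliance", "engine-b", 10_000_000),
    ("support", "engine-b", 40_000_000),
]


def chargeback(rows, prices):
    """Sum each department's token cost across engines at each engine's rate."""
    totals = defaultdict(float)
    for dept, engine, tokens in rows:
        totals[dept] += tokens / 1_000_000 * prices[engine]
    return dict(totals)


for dept, cost in sorted(chargeback(usage, PRICE_PER_M_TOKENS).items()):
    print(f"{dept:<11} ${cost:,.2f}")
```

The same totals give each department's share of spend, which is the basis for allocating budgets in proportion to token usage.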

Infrastructure: Cloud, Edge, and Hybrid GPU Clusters

Hardware adoption trends show that 72 % of enterprises deploy NVIDIA H100 GPUs for LLM inference, with an average per‑GPU cost of $12 k and a power draw of 500 W. In‑house inference costs about $0.08 per token, versus $0.12 via OpenAI’s API.
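The reported figures translate into a few unit economics worth checking before committing to in‑house inference. The sketch uses the paragraph's numbers plus one hypothetical input, an electricity tariff of $0.10/kWh. The $12 k GPU price is left out because the research does not say whether the $0.08 per‑token figure already amortises hardware.

```python
# Reported figures from the paragraph above; replace with your own measurements.
IN_HOUSE_USD_PER_TOKEN = 0.08
API_USD_PER_TOKEN = 0.12
GPU_POWER_KW = 0.5                  # 500 W per H100, as reported
HOURS_PER_YEAR = 24 * 365
ELECTRICITY_USD_PER_KWH = 0.10      # hypothetical tariff, not from the research

relative_saving = (API_USD_PER_TOKEN - IN_HOUSE_USD_PER_TOKEN) / API_USD_PER_TOKEN
# Upper bound: assumes the GPU draws 500 W continuously for the whole year.
annual_energy_kwh = GPU_POWER_KW * HOURS_PER_YEAR
annual_energy_usd = annual_energy_kwh * ELECTRICITY_USD_PER_KWH

print(f"in-house saving per token: {relative_saving:.0%}")    # 33%
print(f"energy per GPU-year: {annual_energy_kwh:,.0f} kWh")   # 4,380 kWh
print(f"power cost per GPU-year: ${annual_energy_usd:,.0f}")  # $438
```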

Edge deployment is gaining traction in latency‑sensitive sectors: 24 % of firms run Llama 3 on NVIDIA Jetson AGX hardware in micro‑datacentres. This approach cuts end‑to‑end latency to ~180 ms and eases data residency concerns.

Deployment checklist:

- Assess workload latency requirements; route latency‑tolerant workloads to the cloud and latency‑sensitive ones to the edge.

- Implement a unified monitoring layer that aggregates GPU utilisation, token throughput, and power consumption across all sites.

- Adopt spot‑instance bidding strategies for non‑critical inference to further reduce costs.
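The routing and spot‑instance items in this checklist can be expressed as a small placement rule. This is only a sketch. The 180 ms edge figure is the one reported above, but the 600 ms cloud latency and the example workloads are hypothetical and should be replaced with measured values.

```python
from dataclasses import dataclass

EDGE_LATENCY_MS = 180     # end-to-end edge latency reported above
CLOUD_LATENCY_MS = 600    # hypothetical cloud round trip; measure your own


@dataclass
class Workload:
    name: str
    latency_budget_ms: int    # slowest acceptable end-to-end response
    critical: bool            # False = tolerates interruption, so spot capacity is acceptable


def place(w: Workload) -> str:
    """Pick a deployment target from the latency budget and criticality."""
    if w.latency_budget_ms < EDGE_LATENCY_MS:
        return "review: budget is tighter than edge latency"
    if w.latency_budget_ms < CLOUD_LATENCY_MS:
        return "edge"
    return "cloud-on-demand" if w.critical else "cloud-spot"


for w in (
    Workload("fraud scoring", 250, critical=True),
    Workload("contract review", 5_000, critical=True),
    Workload("nightly summaries", 60_000, critical=False),
):
    print(f"{w.name}: {place(w)}")
# fraud scoring: edge
# contract review: cloud-on-demand
# nightly summaries: cloud-spot
```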

Governance & Responsible AI as a Procurement Criterion

Regulatory pressure is higher than ever. 88 % of CIOs have formal RAI frameworks, often tied to vendor policy engines. Regulations such as GDPR, CCPA, and the EU AI Act drive demand for fine‑grained audit logs and data residency controls.

Governance best practices:

- Mandate that all models expose an API for logging request metadata (user ID, context length, token count).

- Require “policy‑as‑code” capabilities so compliance rules can be versioned and audited automatically.

- Implement a governance board that reviews model updates quarterly, ensuring alignment with evolving regulations.
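On the gateway side, the logging requirement can be met by writing one record per model call. This sketch assumes an in‑memory JSON Lines log. `AuditRecord` and its fields other than user ID, context length, and token count are hypothetical. The SHA‑256 hash chain is an added design choice, not prescribed by the source, that makes edits to earlier records detectable. A production system would write to append‑only storage rather than a Python list.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    timestamp: str
    engine: str
    user_id: str
    context_length: int      # tokens in the prompt/context window
    token_count: int         # total tokens billed for the call
    prev_hash: str           # SHA-256 of the previous log line (hypothetical chaining scheme)


def append_record(log: list[str], engine: str, user_id: str,
                  context_length: int, token_count: int) -> AuditRecord:
    """Append one model interaction to the log as a JSON line chained to its predecessor."""
    prev_hash = hashlib.sha256(log[-1].encode()).hexdigest() if log else "0" * 64
    record = AuditRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        engine=engine, user_id=user_id,
        context_length=context_length, token_count=token_count,
        prev_hash=prev_hash,
    )
    log.append(json.dumps(asdict(record), sort_keys=True))
    return record


def verify_chain(log: list[str]) -> bool:
    """Recompute each link; altering an earlier line makes a later prev_hash mismatch."""
    for i in range(1, len(log)):
        expected = hashlib.sha256(log[i - 1].encode()).hexdigest()
        if json.loads(log[i])["prev_hash"] != expected:
            return False
    return True


audit_log: list[str] = []
append_record(audit_log, "engine-a", "u-123", context_length=1_800, token_count=2_100)
append_record(audit_log, "engine-b", "u-456", context_length=900, token_count=1_000)
print(verify_chain(audit_log))   # True
audit_log[0] = audit_log[0].replace("u-123", "u-999")   # simulate tampering
print(verify_chain(audit_log))   # False
```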

Competitive Advantage: AI‑Native Applications vs. Custom Builds

The data shows that off‑the‑shelf AI‑native SaaS solutions outperform bespoke internal builds by 3× in time‑to‑value. Enterprises can achieve rapid ROI by integrating ready‑made Gen‑AI modules (e.g., customer service bots, document summarisation) rather than building from scratch.

However, the most successful organisations combine both strategies: they adopt AI‑native solutions for common use cases and reserve custom builds for mission‑critical workflows that require unique data pipelines or compliance constraints.

Strategic Recommendations for 2025 Enterprise Leaders

- Create a Model Marketplace Governance Framework: Treat each Gen‑AI engine as a service with its own SLAs, cost models, and audit requirements. This reduces vendor dependency and enables rapid experimentation.

- Invest in Agentic AI Pilot Programs that focus on high‑impact regulated workflows (fraud detection, compliance monitoring). Use policy‑as‑code to embed regulatory rules directly into the agent’s decision logic.

- Adopt a Token‑Based Costing Model across all departments. Allocate budgets proportionally to token usage and enforce per‑token caps through vendor contracts.

- Build a Hybrid Infrastructure Strategy that balances cloud inference for bulk workloads with edge deployment for latency‑critical tasks. Monitor GPU utilisation centrally to optimise power and cooling costs.

- Embed RAI into Procurement Criteria. Require vendors to provide audit logs, policy engines, and data residency controls as part of the contract. This mitigates compliance risk and aligns AI spend with regulatory obligations.

- Leverage AI‑Native SaaS for Rapid Value Delivery, while reserving custom development for scenarios where data sensitivity or unique business logic demands a bespoke solution.

Future Outlook: 2026–2027 – Predictable Pricing and Interoperability Standards

The next two years will likely see:

- Per‑token subscription tiers that cap costs while offering volume discounts, making AI budgeting more predictable.

- Emergence of interoperability standards (e.g., OpenAI’s “Model Connect” API) that enable seamless switching between engines without code rewrites.

- Increased focus on edge‑centric Gen‑AI workloads, driven by autonomous vehicles, industrial IoT, and real‑time analytics.

- A shift toward AI‑as‑a‑service marketplaces where enterprises can rent model capabilities on demand, further reducing capital expenditure.
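Capped, volume‑discounted tiers like those anticipated here are easy to model ahead of contract negotiations. This sketch uses a hypothetical schedule and cap. It implements graduated pricing, in which each volume band is billed at its own rate; that is only one of several discount structures a vendor might offer.

```python
# Hypothetical tier schedule: (upper bound of monthly token volume, USD per 1M tokens).
TIERS = [
    (100_000_000, 4.00),
    (1_000_000_000, 3.00),
    (float("inf"), 2.00),
]
MONTHLY_CAP_USD = 2_500.0   # hypothetical contractual ceiling on the monthly bill


def monthly_bill(tokens: int, tiers=TIERS, cap=MONTHLY_CAP_USD) -> float:
    """Graduated pricing: bill each volume band at its own rate, then apply the cap."""
    bill, lower = 0.0, 0
    for upper, usd_per_m in tiers:
        band = min(tokens, upper) - lower   # tokens falling inside this tier
        if band <= 0:
            break
        bill += band / 1_000_000 * usd_per_m
        lower = upper
    return min(bill, cap)


for volume in (50_000_000, 300_000_000, 2_000_000_000):
    print(f"{volume:>13,} tokens -> ${monthly_bill(volume):,.2f}")
# 50M tokens -> $200.00; 300M -> $1,000.00; 2B -> $2,500.00 (capped from $5,100.00)
```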

By aligning procurement, budgeting, governance, and infrastructure around these evolving dynamics, enterprise leaders can secure a competitive advantage that transforms generative AI from a strategic initiative into a core operational engine.

Actionable Takeaways

- Audit your current Gen‑AI spend: Map token usage to business outcomes and identify cost‑optimization opportunities.

- Define a model marketplace charter: Include governance, SLAs, pricing models, and audit requirements for each engine.

- Pilot agentic workflows in regulated domains: Start with low‑risk use cases, measure impact, and scale based on proven ROI.

- Implement hybrid infrastructure controls: Centralise monitoring of GPU utilisation, token throughput, and power consumption across cloud and edge sites.

- Embed RAI into every procurement decision: Make auditability and policy compliance a non‑negotiable requirement for all vendors.

In 2025, generative AI is no longer an optional technology; it is a strategic imperative that reshapes how enterprises operate. By embracing a multi‑vendor, agentic, and governance‑centric approach, leaders can unlock unprecedented efficiency, compliance assurance, and competitive differentiation.
