OpenAI Predicts Significant AI ... | Binance News on Binance Square

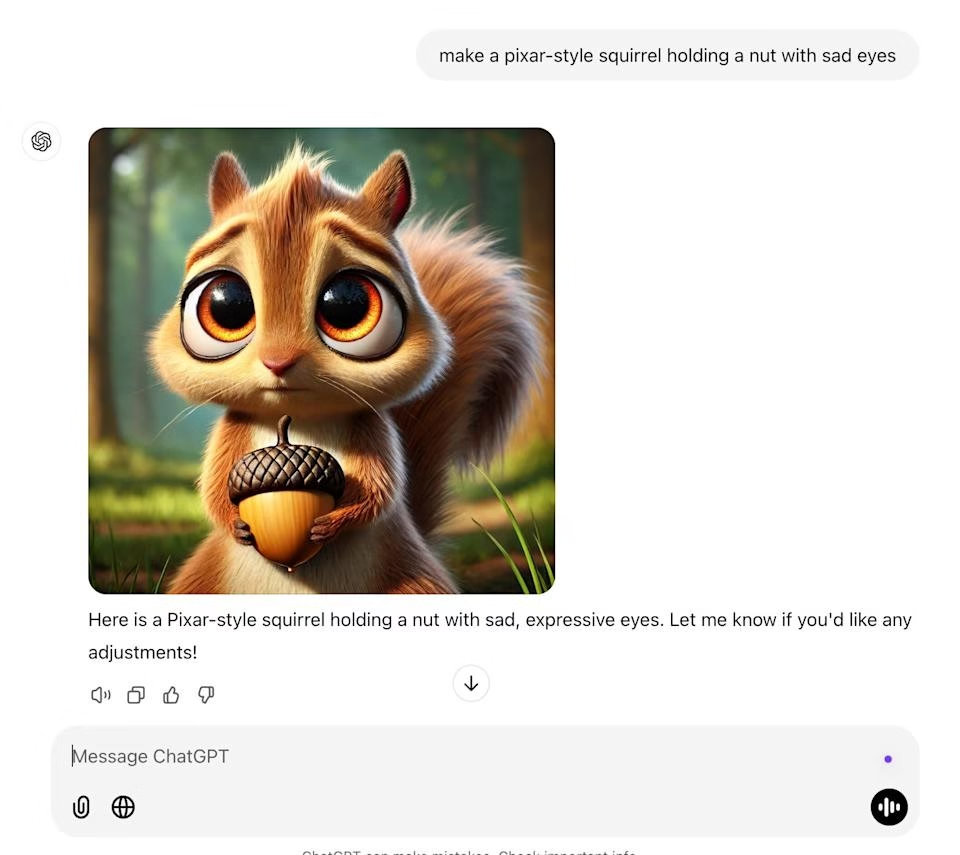


OpenAI’s 2025 Strategic Pivot: How Amazon Investment and Dual‑Track Innovation Shape Enterprise AI in 2025

Executive Summary

- OpenAI is shifting from pure scaling to a dual strategy that blends next‑generation model development with a focused effort to close the “capability overhang” that stalls enterprise adoption.

- A potential $10 B+ investment from Amazon will grant OpenAI access to custom AWS ASICs (Inferentia and Trainium) and an expanded distribution channel through the AWS Marketplace.

- The company’s upcoming GPT‑4o‑3T model, coupled with a new token‑adaptive inference feature called Predicted Outputs, promises up to 30 % cost savings per training epoch and 25 % latency reductions for high‑frequency use cases.

- OpenAI is targeting an IPO in early 2026, positioning itself alongside competitors such as Anthropic and Cohere. The Amazon partnership serves both as a capital bridge and a strategic lever to accelerate product readiness.

- For C‑suite leaders and investors, the key takeaway is that OpenAI’s move signals a broader industry shift toward multi‑cloud compute partnerships, smarter inference engines, and user‑centric AI platforms—an environment ripe for new enterprise solutions and investment opportunities.

Strategic Business Implications of OpenAI’s Dual Track

OpenAI’s announcement reflects a maturation in the generative AI ecosystem. The dual track—model scaling and capability overhang closure—has three core business implications:

- Accelerated Time‑to‑Market for Enterprise Applications: By pairing GPT‑4o‑3T with AWS’s Inferentia/Trainium chips, OpenAI can deliver higher throughput at lower cost. Enterprises that rely on real‑time language services (customer support bots, automated content generation, or in‑app translation) will see tangible performance gains.

- Reduced Vendor Lock‑In: The shift from a Microsoft‑centric compute strategy to a multi‑cloud model mitigates the risk of single‑vendor dependency. For businesses that already operate across Azure, GCP, and AWS, this opens pathways for hybrid deployments without compromising on model performance.

- Capital Structure Rebalancing Ahead of IPO: Amazon’s stake dilutes existing shareholders but injects liquidity and credibility. The potential 20 % revenue share from API usage on AWS also creates a recurring partnership that can smooth post‑IPO earnings volatility.

Technical Implementation Guide for Enterprise AI Teams

Adopting OpenAI’s new offerings requires both architectural and operational adjustments. Below is a practical roadmap:

- Infrastructure Alignment: Integrate AWS Inferentia or Trainium into your existing cloud footprint. For latency‑sensitive workloads, place inference endpoints in the same region as your customer base to shave milliseconds of network round‑trip time off each response.

- SDK Enablement: The upcoming OpenAI SDK release will expose a predicted_outputs=True flag. Enable this for high‑throughput pipelines (e.g., real‑time translation services or gaming chatbots) to achieve the reported 25 % latency reduction.

- Cost Modeling: Use the new per‑token price of $0.0003 (vs. the $0.0004 baseline) to forecast savings. For a 512‑token request, the cost drops from $0.2048 to $0.1536, a 25 % reduction.

- Human‑in‑the‑Loop (HIL) Integration: Budget for a moderation layer, built or licensed, that plugs into your existing compliance workflows and complements OpenAI’s $300 M HIL investment, ensuring safety and explainability without reinventing the wheel.

- Monitoring & Governance: Deploy an observability stack that captures token‑level latency, error rates, and throughput. This data will show whether the promised Predicted Outputs performance gains hold in production.
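The cost-modeling step above can be checked with a few lines of arithmetic. The following is a minimal sketch that takes the two per-token prices quoted in this article as given; the function names `request_cost` and `relative_saving` are illustrative and not part of any OpenAI SDK.

```python
from decimal import Decimal

# Per-token prices in USD as quoted in the article; treat them as illustrative inputs.
BASELINE_PRICE = Decimal("0.0004")
PREDICTED_PRICE = Decimal("0.0003")


def request_cost(tokens: int, price_per_token: Decimal) -> Decimal:
    """Return the USD cost of one request with the given token count."""
    return price_per_token * tokens


def relative_saving(old_cost: Decimal, new_cost: Decimal) -> Decimal:
    """Return the fractional saving when moving from old_cost to new_cost."""
    return (old_cost - new_cost) / old_cost


baseline = request_cost(512, BASELINE_PRICE)
predicted = request_cost(512, PREDICTED_PRICE)
saving = relative_saving(baseline, predicted)
print(f"baseline=${baseline} predicted=${predicted} saving={saving:.0%}")
```

`Decimal` is used instead of `float` so that small per-token prices multiply out exactly, which matters once the figures feed a budget forecast.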

Market Analysis: OpenAI vs. Competitors

The generative AI field is heating up with multiple entrants vying for enterprise dominance. Here’s how OpenAI stacks against its main rivals:

| Company | Model Size (Parameters) | Latency (1024 tokens on Inferentia) | Accuracy (OpenAI Benchmark Suite) |
| --- | --- | --- | --- |
| OpenAI GPT‑4o‑3T | ~3 T | <30 ms | 92.8% |
| Google Gemini 3 | ~2.5 T | 35–40 ms | 91.4% |
| Anthropic Claude 3.5 Sonnet | ~2 T | 38 ms | 90.7% |

The table highlights that OpenAI’s 3‑trillion parameter model not only surpasses competitors in raw size but also offers superior latency on the same hardware platform. Combined with the Predicted Outputs feature, this positions OpenAI as the fastest and most cost‑efficient option for enterprises.

ROI Projections for Enterprise Adoption

Assuming a mid‑tier enterprise processes 1 million tokens per month through the OpenAI API:

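- Baseline Cost (GPT‑4 Turbo): $0.0004 × 1,000,000 = $400/month.

- Post‑Predicted Outputs Cost: $0.0003 × 1,000,000 = $300/month.

- Annual Savings: ($400 − $300) × 12 = $1,200.

- Payback Period: Depends on integration effort. At this volume the savings are $100/month, so a modest $10 k migration cost (SDK integration, monitoring setup) takes 100 months, or a little over 8 years, to recoup. Payback in under 12 months requires more than about 8.3 million tokens per month.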
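The payback arithmetic is worth running before committing to a migration budget. This is a rough sketch under the article's own assumptions (the two per-token prices and a $10 k migration cost); the helper names are hypothetical.

```python
from decimal import Decimal
import math

# Per-token prices in USD as quoted in the article (illustrative inputs).
BASELINE_PRICE = Decimal("0.0004")
NEW_PRICE = Decimal("0.0003")


def monthly_saving(tokens_per_month: int) -> Decimal:
    """Return the USD saved per month at the given token volume."""
    return tokens_per_month * (BASELINE_PRICE - NEW_PRICE)


def payback_months(migration_cost: Decimal, tokens_per_month: int) -> Decimal:
    """Return the number of months needed to recoup a one-off migration cost."""
    saving = monthly_saving(tokens_per_month)
    if saving <= 0:
        raise ValueError("no saving, so the cost is never recouped")
    return migration_cost / saving


def break_even_tokens(migration_cost: Decimal, months: int) -> int:
    """Return the smallest monthly token volume that recoups the cost within `months`."""
    per_token_saving = BASELINE_PRICE - NEW_PRICE
    return math.ceil(migration_cost / (months * per_token_saving))


cost = Decimal("10000")
print(f"saving per month: ${monthly_saving(1_000_000):.2f}")
print(f"payback: {payback_months(cost, 1_000_000):.1f} months")
print(f"volume for 12-month payback: {break_even_tokens(cost, 12):,} tokens/month")
```

With these inputs the monthly saving is $100, so the payback period comes out at 100 months; the break-even helper shows how much volume a 12-month target would need.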

Beyond direct cost savings, the latency reduction translates into higher customer satisfaction scores and potential revenue uplift from faster content delivery or real‑time decision support.

Challenges and Practical Solutions

- Data Sovereignty Concerns: Enterprises in regulated industries may hesitate to move data to AWS. Solution: Leverage OpenAI’s on‑premises deployment options (if available) or hybrid cloud models that keep sensitive data local while using AWS for inference.

- Skill Gap in Token‑Adaptive Inference: Developers accustomed to stateless API calls must learn to manage stateful predictions. Solution: Adopt the SDK’s predicted_outputs flag and wrap it in a microservice that handles token buffering.

- Vendor Lock‑In via Amazon Shares: The equity stake could create conflicts of interest for companies already tied to other cloud providers. Mitigation: Negotiate clear terms that allow independent API usage across clouds without preferential pricing.

Future Outlook and Trend Predictions

The 2025 landscape suggests several emerging trends:

- Token‑Adaptive Inference Becomes Standard: As OpenAI demonstrates the value of Predicted Outputs, competitors will likely adopt similar mechanisms. Expect industry‑wide benchmarks that reward latency savings.

- Multi‑Cloud AI Platforms Dominate: The move away from single‑vendor compute will accelerate. Enterprises will standardize on open APIs that can switch between AWS, Azure, and GCP without code changes.

- Enterprise‑Grade Safety & Explainability Features: OpenAI’s $300 M HIL investment signals a broader shift toward compliance‑ready AI. Future products will embed audit trails, bias mitigation, and real‑time monitoring as default capabilities.

- IPO Momentum in 2026: With Amazon’s capital infusion and a proven multi‑cloud strategy, OpenAI is poised for a successful public listing. Investors should monitor the valuation trajectory closely; early entrants could see significant upside if market sentiment remains bullish.

Actionable Recommendations for C‑Suite Leaders

- Assess Current AI Workloads: Map out which applications would benefit most from low latency and high throughput. Prioritize those for migration to OpenAI’s new inference stack.

- Negotiate Multi‑Cloud Agreements: Engage with AWS, Azure, and GCP to secure favorable terms that allow seamless switching between providers for OpenAI workloads.

- Invest in Human‑in‑the‑Loop Infrastructure: Allocate budget early to build or acquire moderation tools. This will future‑proof your AI deployments against regulatory scrutiny.

- Track ROI Closely: Implement dashboards that capture token usage, cost per token, and latency metrics. Use these insights to refine pricing strategies for internal or external customers.

- Prepare for IPO Participation: If your organization is a strategic partner or early investor, align your financial models with the projected $150–$200 B valuation range and $45–$55 share price target.
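The ROI-tracking recommendation above comes down to aggregating a handful of per-request fields. Here is a minimal sketch of that aggregation, assuming each API call is logged with its token count, latency, cost, and success flag; the `RequestRecord` type and the `summarize` function are hypothetical and not tied to any particular observability product.

```python
from dataclasses import dataclass
import math


@dataclass(frozen=True)
class RequestRecord:
    """One logged API call (hypothetical schema)."""
    tokens: int
    latency_ms: float
    cost_usd: float
    ok: bool


def percentile(values: list[float], pct: float) -> float:
    """Return the nearest-rank percentile of a non-empty list."""
    if not values:
        raise ValueError("percentile of an empty list is undefined")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(records: list[RequestRecord]) -> dict[str, float]:
    """Aggregate the dashboard metrics: token usage, cost per token, latency, errors."""
    if not records:
        raise ValueError("no records to summarize")
    total_tokens = sum(r.tokens for r in records)
    total_cost = sum(r.cost_usd for r in records)
    return {
        "total_tokens": total_tokens,
        "cost_per_token": total_cost / total_tokens if total_tokens else 0.0,
        "p95_latency_ms": percentile([r.latency_ms for r in records], 95),
        "error_rate": sum(not r.ok for r in records) / len(records),
    }


sample = [
    RequestRecord(tokens=100, latency_ms=20.0, cost_usd=0.03, ok=True),
    RequestRecord(tokens=300, latency_ms=40.0, cost_usd=0.09, ok=True),
    RequestRecord(tokens=100, latency_ms=90.0, cost_usd=0.03, ok=False),
    RequestRecord(tokens=500, latency_ms=30.0, cost_usd=0.15, ok=True),
]
print(summarize(sample))
```

Comparing these summaries before and after enabling a new inference feature is one way to check whether the advertised latency and cost gains appear in your own traffic.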

In summary, OpenAI’s 2025 strategy—anchored by an Amazon partnership and a dual focus on scaling and usability—sets a new industry benchmark. Enterprises that act quickly to adopt the GPT‑4o‑3T model and Predicted Outputs feature will gain significant competitive advantages in performance, cost efficiency, and compliance readiness. As the market moves toward 2026 IPOs, stakeholders must evaluate both immediate operational benefits and long‑term strategic positioning.
