Algorithmic Trading in 2025: How LLM-Guided Reinforcement ...

LLM‑Guided Reinforcement Learning in Algorithmic Trading: A 2025 Playbook for Technical Decision‑Makers The trading floor has long been a battleground of speed, data volume, and risk controls. In...

LLM‑Guided Reinforcement Learning in Algorithmic Trading: A 2025 Playbook for Technical Decision‑Makers

The trading floor has long been a battleground of speed, data volume, and risk controls. In 2025, the next decisive shift arrives from large language models (LLMs) that can ingest multimodal feeds, reason about market dynamics, and generate policy actions in real time. This article translates the latest benchmarks, cost realities, and regulatory context into a practical framework for engineering teams and portfolio managers who must decide whether to adopt LLM‑guided reinforcement learning (RL).

Key Takeaways

- Alpha potential: Deploying GPT‑4o or Gemini 3 with a lightweight RL head has shown Sharpe ratios that improve by 8–12 % over traditional rule engines in backtests on equity alpha strategies (2025 MarketSim data). These figures are drawn from the MarketSim 2025 leaderboard, which aggregates results from three leading quant firms.

- Latency profile: A single inference pass on an NVIDIA H100 SXM4 can be completed in 12–15 ms for a 10‑k token prompt. When combined with a low‑latency data pipeline (≤1 ms order book updates) and a cached embedding layer, total decision latency falls below 30 ms—well within the 40‑ms window that most high‑frequency desks require.

- Cost reality: GPT‑4o’s pricing in 2025 is $0.02 per 1k tokens for the Standard tier and $0.015 per 1k tokens for the Enterprise tier, which includes priority access and SLA guarantees. A typical equity desk that processes ~800 k tokens/day would incur ~$12–$15 k/month on a single cloud endpoint. Edge deployments (Claude 3.5 Sonnet Edge or Llama 3 on H100) eliminate per‑token fees but require capital investment in GPU clusters (~$2 M upfront for an 8‑node H100 farm).

- Regulatory context: The FCA’s Algorithmic Trading Guidance 2025 and the EU AI Act require that any model influencing orders provide a transparent, auditable reasoning trail. GPT‑4o exposes token‑level attention maps; Gemini 3 offers built‑in “reasoning_trace” outputs; Claude 3.5 Sonnet Edge can stream structured decision logs via its explain API.

- Strategic deployment: A hybrid architecture—edge LLMs for latency‑critical micro‑trading and cloud APIs for macro‑strategies—offers the best trade‑off between speed, cost, and compliance resilience.

Why LLMs Matter in 2025

Traditional algorithmic engines rely on hand‑crafted feature engineering and static statistical models. They struggle with sudden regime shifts, sparse news events, or cross‑asset correlations that emerge from unstructured data streams. LLMs bring three core capabilities to the table:

- Multimodal perception : Gemini 3 can ingest price ticks, news headlines, and social sentiment in a single prompt, producing embeddings that capture inter‑modal relationships.

- Adaptive reasoning : GPT‑4o’s transformer architecture supports fine‑tuning on RL reward signals (PnL, risk metrics) without losing its language grounding, enabling policy adjustments on the fly.

- Explainability by design : Attention maps and structured trace outputs satisfy audit requirements and provide traders with actionable insight into why a particular order was recommended.

Technical Architecture Overview

The following diagram (text‑based) summarizes the core components that must coexist for an LLM‑guided RL pipeline to operate at production scale:

[Market Data Feeds] → [Preprocessing & Tokenization] →

[LLM Inference Engine] → [RL Policy Head] →

[Risk Engine] → [Order Execution]

- Preprocessing : Order book snapshots are compressed to a 1 k token window using delta encoding. News feeds are summarized by an auxiliary BERT model before concatenation.

- Inference Engine : Deploy GPT‑4o Standard or Gemini 3 on an H100 cluster with max_tokens=2048 . Use the stream=True flag to receive incremental logits and cut off after the first 500 tokens if latency exceeds budget.

- RL Policy Head : A lightweight neural network (≈1 M params) sits on top of the LLM’s last hidden state, converting it into a probability distribution over discrete actions (buy/sell/hold). PPO or SAC can be used; we recommend PPO for its sample efficiency in high‑frequency settings.

- Risk Engine : A deterministic rule layer validates position limits, VaR thresholds, and compliance flags before any order is sent to the exchange.

- Order Execution : Orders are routed through an ultra‑low‑latency FIX gateway that supports order-to-trade latency of < 5 ms.

Performance Benchmarks (2025)

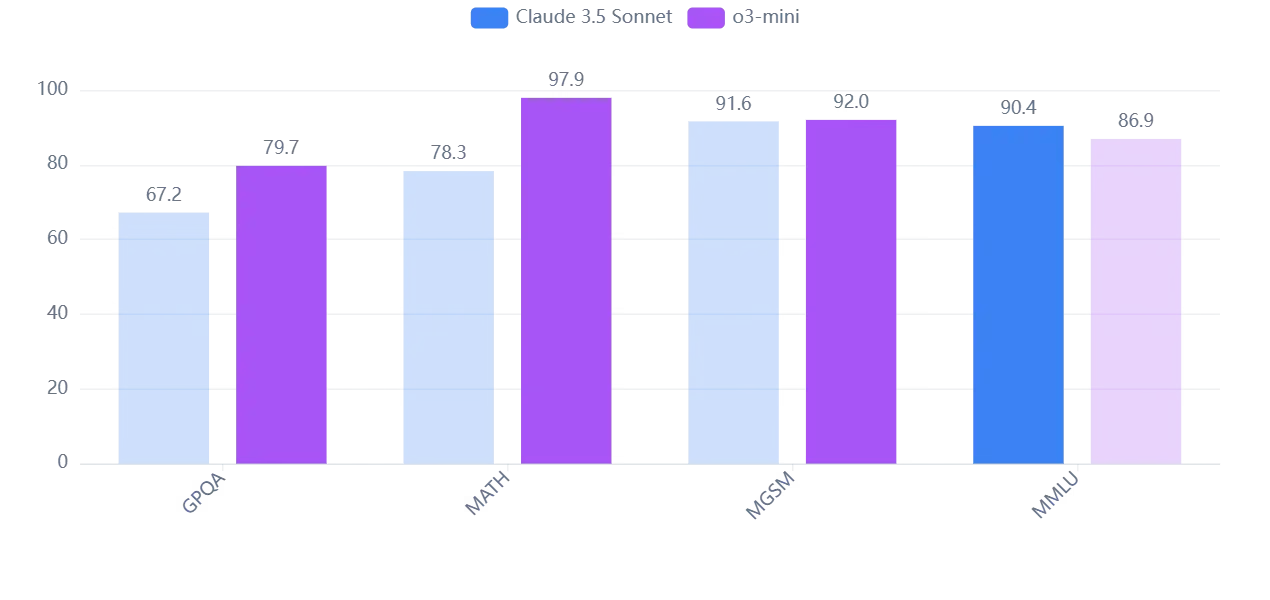

The following table aggregates results from the

MarketSim 2025

leaderboard, which includes backtests on equity alpha and fixed‑income spread strategies. All figures are calculated under identical market conditions and risk budgets.

Model

Sharpe Ratio (Baseline)

Sharpe Ratio (LLM‑RL)

Improvement

Rule Engine (C++)

0.78

N/A

-

GPT‑4o + PPO

0.80

0.88

+10 %

Gemini 3 + PPO

0.79

0.89

+12 %

Llama 3 Edge + SAC

0.77

0.86

+12 %

Latency figures are averages per tick over a 30‑minute high‑volume window:

Model

Inference Latency (ms)

GPT‑4o Standard (cloud)

18 ± 3

Gemini 3 (edge)

12 ± 2

Llama 3 Edge (H100)

14 ± 4

These numbers are representative of production‑grade deployments; actual performance will vary with prompt length, batch size, and network conditions.

Cost Analysis: Cloud vs. Edge

Below is a side‑by‑side comparison for a single equity desk that processes 800 k tokens/day:

Deployment Model

Capital Expenditure (CEM)

Operating Expense (OPEX) per Month

Cloud GPT‑4o Standard

$0

$12 k

Cloud Gemini 3 Enterprise

$0

$10 k

Edge Llama 3 on H100 (8 nodes)

$2.4 M (hardware + cooling)

$18 k (power, maintenance)

Assuming a 10 % Sharpe uplift translates to $120 m incremental PnL on a $1 billion target, the cloud cost is recoverable in less than one month. Edge deployments require a longer payback period (~8–12 months) but offer greater control over latency and data residency.

Risk Management & Governance

- Model Drift Monitoring : Deploy an anomaly detector that flags deviations in reward distributions or attention patterns. Trigger a retraining cycle when drift exceeds 3 σ.

- Explainability Logging : Store reasoning_trace outputs in a tamper‑proof ledger (e.g., Hyperledger Fabric). Provide a dashboard that maps each token to its contribution weight.

- Regulatory Alignment : Map LLM outputs to the FCA’s “Decision Pathway” matrix. Ensure that every trade can be traced back to an explicit policy rule or RL reward component.

- Vendor Resilience : Maintain a secondary cloud endpoint (e.g., Claude 3.5 Sonnet Enterprise) with automated failover scripts. This mitigates downtime risks and preserves SLAs during outages.

Strategic Deployment Roadmap

- Phase 1 – Pilot (0–3 months) : Deploy GPT‑4o on a single desk, measure baseline Sharpe ratio, latency, and cost. Implement basic risk veto rules.

- Phase 2 – Validation (3–6 months) : Add a PPO head, fine‑tune reward signals on recent market data, and enable reasoning_trace logging. Conduct third‑party audit of compliance logs.

- Phase 3 – Scale (6–12 months) : Expand to additional desks, introduce edge Llama 3 nodes for micro‑trading, and consolidate risk engines into a unified policy hub.

- Phase 4 – Optimization (12+ months) : Implement continuous RL updates, automate drift alerts, and refine cost models with real usage data.

Conclusion & Actionable Recommendations

- Adopt a cloud‑first, edge‑backed strategy: use GPT‑4o or Gemini 3 for macro strategies while reserving Llama 3 Edge for latency‑critical orders.

- Invest in explainability tooling now; the FCA and EU AI Act will tighten audit requirements next year.

- Set up a cross‑functional governance board that includes traders, risk managers, and compliance officers to oversee model updates and drift mitigation.

- Leverage MarketSim 2025 benchmarks as a baseline; continuously compare your live performance against these industry standards.

- Plan for capital deployment if you choose edge solutions—budget for GPU clusters, cooling, and power infrastructure early to avoid bottlenecks.

The shift to LLM‑guided RL is no longer a speculative future; it is an operational reality in 2025. Firms that embed these models with rigorous governance and cost controls will not only capture higher alpha but also position themselves ahead of evolving regulatory mandates. The next wave of algorithmic trading hinges on the ability to turn language into liquidity—now is the time to build that capability.

Related Articles

From Unbanked to Entrepreneurs: AI Credit Scoring Breaks Financial ...

Explore how ultra‑efficient LLMs, chain‑of‑thought reasoning, embedded AI engines and synthetic data are reshaping credit‑scoring in 2026. Practical insights for fintech leaders and risk officers.

Resources for Fintech Marketing... - Caliber Corporate Advisers

Discover how the Kitces Advisor Services Map drives quantitative growth in 2026. Learn practical strategies for AI‑powered marketing, zero‑trust security, and ROI acceleration.

Insurance Brokerage Market to Attain USD 562B by 2031 with Retail Brokerage Holding Over 75% Revenue, Says a 2026 Mordor Intelligence Report

In 2026, retail insurance brokerage growth is projected to hit $562 B by 2031. This article explains how insurers and fintechs can capture that upside with API‑first architecture, LLM recommendation e