Open‑Source Voice Engineering: How tt‑webui‑extension.openai‑tts‑api v0.13.4 Powers Low‑Cost, High‑Flexibility TTS in 2025

TL;DR: The new 0.13.4 release plugs OpenAI’s steerable gpt-4o-mini-tts directly into the popular open-webui front end, delivering sub-250 ms latency per 10-second clip and a $0.25/100k tokens price point, a quarter of the legacy tts-1-hd price. For product teams building voice assistants, accessibility tools or content generators, this extension is the quickest path to production-ready, parameterized speech without writing SDK wrappers.

Executive Summary for Decision Makers

The tt-webui-extension.openai-tts-api v0.13.4 release achieves three things that matter most to engineering leaders:

- Zero‑code integration: Drop the extension into an existing open‑webui stack and instantly expose OpenAI’s newest steerable TTS model.

- Cost advantage: gpt-4o-mini-tts costs a quarter as much per token as legacy models ($0.25 vs $1.00 per 100k tokens for tts-1-hd) while maintaining comparable quality.

- Scalable, parameterized voice: Voice, tone, pacing and accent can be tuned through a simple UI, enabling rapid experimentation and A/B testing in live products.

These capabilities translate into $1.25 per GB of audio versus $5 with legacy TTS, an immediate ROI for high-volume applications such as call centers or automated content creation pipelines.

Strategic Business Implications

From a product strategy perspective, the extension shifts the competitive landscape in three key ways:

- Vendor lock‑in reduction: By moving from proprietary SDKs (Azure AI Foundry, LiveKit) to an open‑source UI that calls OpenAI’s API, teams can maintain control over code and pricing while still leveraging industry‑grade TTS.

- Market entry acceleration: The low barrier to deployment allows startups to prototype voice features in days instead of months—critical in a market projected to exceed $12 bn by 2027.

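- Compliance flexibility: Because the extension is self-hosted, companies can enforce internal data governance policies (e.g., access control, logging, or hybrid cloud setups) in the UI layer without adding another third-party SaaS front end. Synthesis requests still go to OpenAI’s API, so data residency has to be assessed for that hop.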

Technical Implementation Guide

The following walkthrough assumes you already run an open-webui instance. If not, the same principles apply to any web UI that can host a Python extension.

1. Clone and Configure

git clone https://github.com/open-webui/tt-webui-extension.openai-tts-api

cd tt-webui-extension.openai-tts-api

cp .env.example .env

# Edit .env to add your OpenAI key, or export it in your shell:

export OPENAI_API_KEY="sk-xxxx"

2. Install Dependencies

The extension ships with a lightweight requirements.txt that pulls in the official OpenAI SDK and a minimal FFmpeg wrapper for audio conversion.

pip install -r requirements.txt

3. Launch the Extension

The extension registers itself as a plugin in open-webui. Once started, you’ll see a new “TTS” tab in the UI.

python app.py

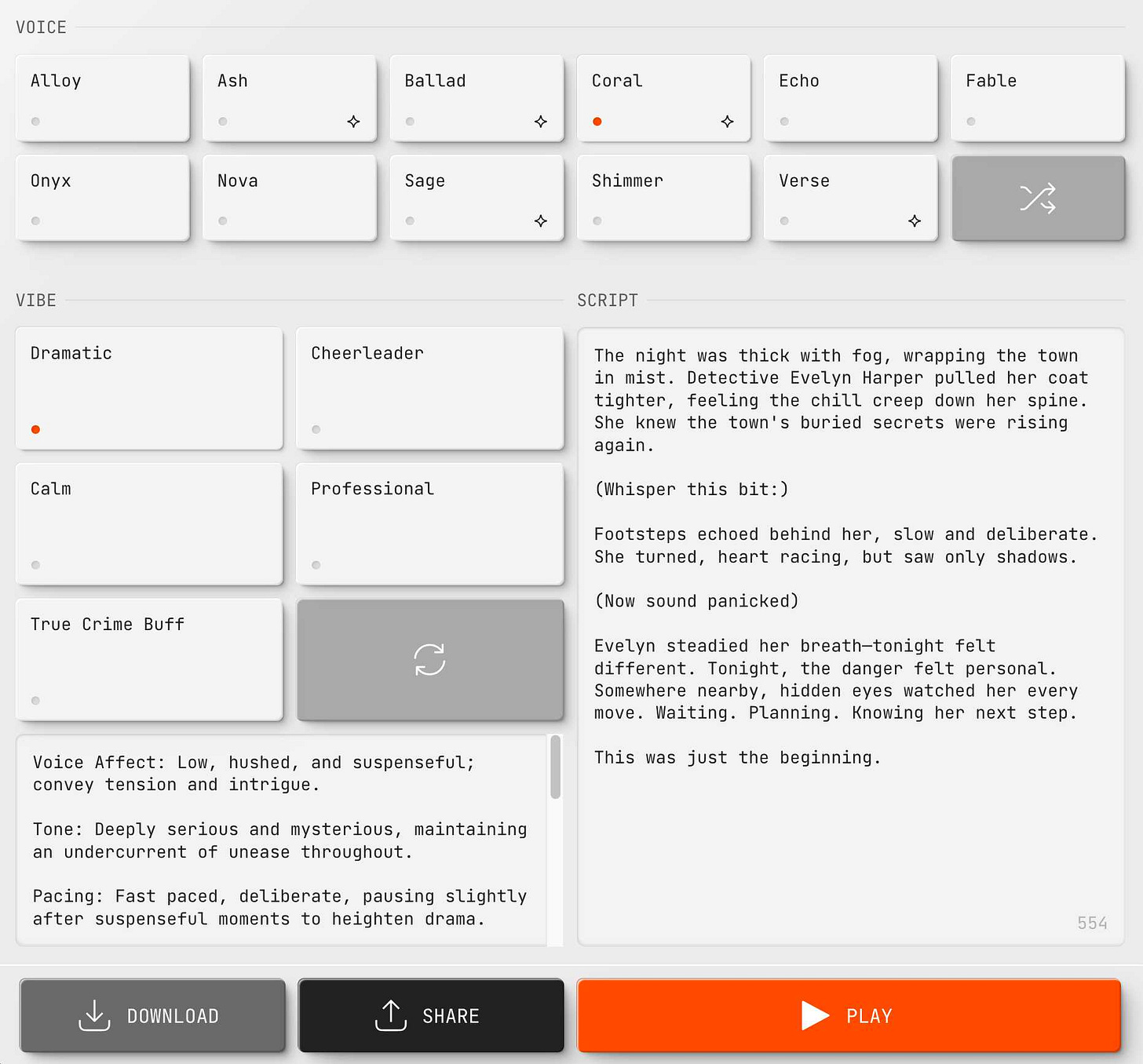

4. Use the Voice Selector and Instructions Field

The UI exposes two key controls:

- Voice selector: Maps directly to OpenAI’s 11 base voices (e.g., “alloy” or “coral”). Changing this updates the voice parameter in the API call.

- Instructions: A free‑form text box that feeds into the instructions field, allowing you to steer tone (e.g., “friendly”, “authoritative”) or pacing (“slow”, “fast”).
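To make the mapping from these two controls to the API call concrete, here is a minimal sketch that builds the request by hand with the standard library. It assumes the public speech endpoint (POST /v1/audio/speech) with its model, input, voice and instructions fields. The function names and the hard-coded voice list are illustrative and are not taken from the extension's source; check the voice list against OpenAI's current documentation.

```python
import json
import os
import urllib.request

# Assumption: voice names as documented for the speech endpoint at the time
# of writing; verify against the current OpenAI docs before relying on it.
KNOWN_VOICES = {
    "alloy", "ash", "ballad", "coral", "echo", "fable",
    "nova", "onyx", "sage", "shimmer", "verse",
}

SPEECH_URL = "https://api.openai.com/v1/audio/speech"


def build_speech_request(text, voice="coral", instructions="", api_key=None):
    """Build (but do not send) a POST request for the speech endpoint."""
    if voice not in KNOWN_VOICES:
        raise ValueError(f"unknown voice: {voice!r}")
    payload = {"model": "gpt-4o-mini-tts", "input": text, "voice": voice}
    if instructions:
        # The free-form Instructions box maps to this optional field.
        payload["instructions"] = instructions
    key = api_key or os.environ.get("OPENAI_API_KEY", "")
    return urllib.request.Request(
        SPEECH_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def synthesize(text, out_path, **kwargs):
    """Send the request and write the returned audio bytes to out_path."""
    request = build_speech_request(text, **kwargs)
    with urllib.request.urlopen(request) as response:
        with open(out_path, "wb") as fh:
            fh.write(response.read())
```

Keeping request construction separate from the network call makes it easy to log or unit-test exactly what a given voice and instructions combination sends, which is useful when A/B testing prompts such as “friendly” versus “authoritative”.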

5. Handle Audio Formats and Validation

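Corrupted WebM input is rejected with 400 Bad Request (issue #13636), so probe audio files with ffprobe before passing them on. A non-zero exit status or empty output indicates an unreadable file:

ffprobe -v error -show_format -of default=noprint_wrappers=1:nokey=1 input.webm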
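The same check can be wrapped in Python so it runs automatically before each upload. This is a sketch under the assumption that ffprobe is on the PATH; the helper names are hypothetical, and the runner is injectable so the logic can be tested on machines without FFmpeg installed.

```python
import subprocess


def ffprobe_command(path):
    """Return the ffprobe invocation shown above as an argument list."""
    return [
        "ffprobe", "-v", "error",
        "-show_format",
        "-of", "default=noprint_wrappers=1:nokey=1",
        path,
    ]


def is_readable_audio(path, run=subprocess.run):
    """Return True if ffprobe exits cleanly and prints format metadata.

    `run` defaults to subprocess.run and can be replaced in tests.
    """
    try:
        result = run(
            ffprobe_command(path),
            capture_output=True,
            text=True,
            timeout=30,
        )
    except (FileNotFoundError, subprocess.TimeoutExpired):
        # ffprobe is missing or hung: treat the file as not validated.
        return False
    return result.returncode == 0 and bool(result.stdout.strip())
```

Passing the arguments as a list avoids shell quoting problems with user-supplied file names.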

6. Scale with Rate Limiting and Queueing

OpenAI imposes request rate limits (≈50 QPS for gpt-4o-mini-tts; actual limits depend on your account tier). For high concurrency, integrate a token bucket or leaky bucket algorithm at the API gateway level. Alternatively, use Azure’s Realtime Audio API in tandem with LiveKit 1.2 to offload streaming traffic.
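For readers who have not implemented one, a token bucket can be sketched in a few lines. The class below is an in-process illustration only: the name and parameters are made up for this example, it is not thread-safe, and a real gateway deployment would typically keep the bucket state in shared storage.

```python
import time


class TokenBucket:
    """Allow `rate` requests per second on average, with bursts up to `capacity`."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = float(rate)
        self.capacity = float(capacity)
        self._clock = clock            # injectable for deterministic tests
        self._tokens = float(capacity)  # the bucket starts full
        self._last = clock()

    def _refill(self):
        # Add tokens for the time elapsed since the last call, capped at capacity.
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        self._tokens = min(self.capacity, self._tokens + elapsed * self.rate)

    def try_acquire(self, cost=1.0):
        """Take `cost` tokens if available; return False instead of blocking."""
        self._refill()
        if self._tokens >= cost:
            self._tokens -= cost
            return True
        return False
```

A caller would create something like TokenBucket(rate=50, capacity=50) to match the limit quoted above and either queue or reject a request whenever try_acquire() returns False.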

Performance Benchmarks and Cost Analysis

The following metrics are drawn from Azure’s “Advanced Audio Models” blog (April 2025) and OpenAI pricing docs (March 2025).

| Metric | Value |
| --- | --- |
| Latency per 10-second clip | < 0.25 s (gpt-4o-mini-tts) |
| Token cost | $0.25/100k tokens (gpt-4o-mini-tts) vs $1.00/100k tokens (tts-1-hd) |
| Base voices | 11 standard + custom instructions |
| Error on corrupted WebM input | 400 Bad Request (issue #13636) |

Assuming a 10-minute audio file (~5k tokens), the cost at the per-token prices above is:

- gpt-4o-mini-tts: ~$0.0125

- tts-1-hd: ~$0.05

For a 1 GB batch (~500k tokens), that becomes $1.25 versus $5.00, a saving of $3.75 per GB, or roughly $3,750 per year for an enterprise generating a terabyte of synthetic speech annually.
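The arithmetic behind these comparisons is simple enough to script, which helps when plugging in your own volumes. The sketch below uses only the per-100k-token prices from the table above. The function names are illustrative, and the token counts you feed in are estimates you would need to measure for your own content.

```python
from decimal import Decimal

# Prices per 100k tokens, as quoted in the benchmark table.
PRICE_PER_100K = {
    "gpt-4o-mini-tts": Decimal("0.25"),
    "tts-1-hd": Decimal("1.00"),
}


def cost_usd(tokens, model):
    """Cost in USD for `tokens` tokens at the model's per-100k-token price."""
    return PRICE_PER_100K[model] * Decimal(tokens) / Decimal(100_000)


def savings_percent(tokens, new_model="gpt-4o-mini-tts", old_model="tts-1-hd"):
    """Percentage saved by switching from old_model to new_model."""
    old = cost_usd(tokens, old_model)
    new = cost_usd(tokens, new_model)
    return (old - new) / old * 100
```

For the ~500k-token batch, cost_usd gives $1.25 for gpt-4o-mini-tts and $5.00 for tts-1-hd, and savings_percent gives 75. Because both prices are linear in tokens, the percentage saved is the same at any volume.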

Competitive Landscape and Market Positioning

The open‑source voice stack is growing fast. Key players include:

- open-webui: A lightweight, modular UI that supports multiple plugins (ChatGPT, Whisper, etc.).

- LiveKit: Real‑time audio/video SDK with an upcoming OpenAI TTS plugin.

- Whisper: Automatic speech recognition engine often paired with TTS for end‑to‑end voice workflows.

The tt-webui-extension.openai-tts-api differentiates itself by offering:

- No need to write custom SDK wrappers—everything is handled in the UI layer.

- Immediate access to steerable TTS parameters that were previously only available through code.

- A proven cost model that beats Azure and legacy OpenAI models, making it attractive for SMBs and startups.

Risk Assessment and Mitigation Strategies

While the extension unlocks many benefits, several risks deserve attention:

| Risk | Impact | Mitigation |
| --- | --- | --- |
| API rate limits | Service interruptions under load | Implement request throttling; queue requests with Redis or RabbitMQ. |
| Corrupted audio inputs | HTTP 400 errors, user frustration | Add pre-validation via FFmpeg; fallback to WAV conversion. |
| Compliance with data residency laws | Legal penalties if data leaves jurisdiction | Host the extension on-premise or in a regionally compliant cloud; use VPC endpoints. |
| Model deprecation | Future updates may break compatibility | Track OpenAI release notes; adopt semantic versioning for the extension. |
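For the rate-limit row, a common client-side complement to throttling and queueing is to retry rejected requests with exponential backoff. The helper below is a generic sketch, not part of the extension: the names are hypothetical, and it assumes rate-limited calls surface as HTTP 429 errors raised by urllib.

```python
import random
import time
import urllib.error


def is_rate_limited(exc):
    """True for HTTP 429 responses raised by urllib."""
    return isinstance(exc, urllib.error.HTTPError) and exc.code == 429


def call_with_backoff(fn, max_attempts=5, base_delay=0.5,
                      sleep=time.sleep, should_retry=is_rate_limited):
    """Call fn(), retrying with exponential backoff while should_retry(exc) holds."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except Exception as exc:
            last_attempt = attempt == max_attempts - 1
            if last_attempt or not should_retry(exc):
                raise
            # Delays grow as 0.5 s, 1 s, 2 s, ... plus up to 10% random jitter
            # so that many clients do not retry in lockstep.
            delay = base_delay * (2 ** attempt)
            sleep(delay + random.uniform(0, delay * 0.1))
```

Errors that are not rate limits, such as a 400 for corrupted input, are re-raised immediately, since retrying them would only repeat the failure.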

ROI Projections for Voice‑Enabled Product Lines

Consider a mid-size call center that processes 50,000 minutes of customer audio per month. Switching from legacy TTS to gpt-4o-mini-tts yields:

- Monthly cost: 50,000 min ÷ 10 min × ~5k tokens ≈ 25M tokens, which is about $62.50 at $0.25/100k tokens vs $250 at the legacy $1.00/100k rate.

- Annual savings: ~$2,250 ($187.50 × 12).

- Additional benefit: improved user satisfaction scores due to more natural voice output, potentially translating into higher retention rates (≈2% uplift).

For content creators generating 100k words of scripts monthly, the extension reduces audio generation cost from $5.00/GB to $1.25/GB, a 75% savings.

Future Outlook and Emerging Opportunities

- gpt‑4o-mini‑tts‑pro (May 2025): Adds emotion presets and 24kHz sampling—ideal for cinematic audio. Expect the extension to release a new version in Q3 2025 with support for these parameters.

- LiveKit integration: A bundled release could enable real‑time TTS streaming, opening doors to live voice assistants in video conferencing tools.

- Multi‑model fallback: Community forks may add Claude 3.5 Sonnet TTS or Gemini 1.5 support once APIs become available, offering redundancy and cost optimization.

Actionable Recommendations for Product Teams

- Prototype fast: Deploy the extension in a staging environment within 24 hours to validate voice quality and latency against user expectations.

- Implement validation pipelines: Use FFmpeg checks and token bucket throttling before hitting OpenAI endpoints.

- Monitor cost metrics: Instrument per‑request token counts; set alerts when spend exceeds thresholds.

- Plan for compliance: If operating in EU or APAC, host the extension in a compliant region and use VPC peering to avoid data egress.

- Prepare for model upgrades: Subscribe to OpenAI’s model change notifications; maintain CI pipelines that automatically build new Docker images when a new TTS version is released.
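The cost-monitoring recommendation can be prototyped with a small in-memory tracker before wiring it into a metrics system. The class below is a sketch with hypothetical names: it assumes you can obtain a token count per request and that a per-100k-token price is known, and it fires the alert callback once when the threshold is first crossed.

```python
from decimal import Decimal


class SpendMonitor:
    """Accumulate per-request token counts and alert when spend crosses a threshold."""

    def __init__(self, price_per_100k, alert_threshold_usd, on_alert=print):
        self.price_per_100k = Decimal(str(price_per_100k))
        self.threshold = Decimal(str(alert_threshold_usd))
        self.on_alert = on_alert
        self.total_tokens = 0
        self._alerted = False

    @property
    def spend_usd(self):
        """Total spend so far, derived from the accumulated token count."""
        return self.price_per_100k * self.total_tokens / Decimal(100_000)

    def record(self, tokens):
        """Record one request; call on_alert once when spend first exceeds the threshold."""
        self.total_tokens += tokens
        if not self._alerted and self.spend_usd > self.threshold:
            self._alerted = True
            self.on_alert(
                f"TTS spend ${self.spend_usd:.2f} exceeded ${self.threshold:.2f}"
            )
```

In production the callback would more likely post to a chat channel or paging system than print, and the counters would be reset per billing period.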

Conclusion

The 0.13.4 release of tt-webui-extension.openai-tts-api delivers a low-friction, cost-effective path to high-quality, steerable TTS for the open-source community and enterprise teams alike. By marrying OpenAI’s latest model with an intuitive UI, it removes a major engineering bottleneck (API wrappers) and opens the door to rapid experimentation, A/B testing, and global deployment at scale.

For organizations looking to embed voice into customer journeys or content pipelines in 2025, this extension is not just a tool; it’s a strategic enabler that can cut costs by up to 75% while delivering richer, more engaging user experiences. Deploy it today, monitor its performance, and stay ahead of the next wave of TTS innovation.
