AI startup stars face tough competition

How Low‑Cost, High‑Performance LLMs Are Redefining the 2025 AI Startup Landscape

Executive Snapshot

- DeepSeek’s R1 and Alibaba’s Qwen 2.5‑Max show that reasoning performance can be matched or surpassed while cutting inference costs by up to 90%.

- The shift from founder celebrity to technical meritocracy means capability, pricing strategy, and ecosystem fit now drive funding decisions.

- Startups must decide: pursue a low‑margin, high‑volume open model; lock in premium closed offerings; or blend the two with hybrid licensing.

- Funding rounds are recalibrating to reward engineering efficiency and scalable monetization plans rather than hype alone.

- Strategic recommendation: align your product roadmap around a clear cost‑performance axis, validate it with early adopters, and build a flexible pricing model that can pivot as the market matures.

Market Dynamics in 2025: From GPU Monopoly to Software‑Centric Efficiency

The last decade was dominated by GPU‑centric AI, where owning Nvidia or AMD chips equated to owning the future. U.S. export controls on high‑performance GPUs have forced a paradigm shift. Chinese companies such as DeepSeek and Alibaba are now engineering models that run efficiently on commodity hardware, enabling them to offer roughly 10x lower inference costs than OpenAI’s o1 or GPT‑4o.

This hardware constraint has catalyzed the rise of “micro‑pricing” models, such as DeepSeek‑V2 at $0.14 per 1 million tokens, and accelerated research into quantization, pruning, and model distillation. The result is a new breed of LLMs that are both highly performant on reasoning tasks (AIME, math benchmarks) and economically viable at scale.

Strategic Business Implications: What Startups Must Decide Now

With performance parity achieved at a fraction of the cost, the competitive axis has shifted from “who can build first” to “who can sustain low cost while delivering differentiated value.” Three strategic paths emerge:

- Open‑Source High‑Performance Models: Release lightweight variants (e.g., DeepSeek R1 mini) that run locally, democratizing access and fostering community contributions. This path reduces cloud dependency but challenges traditional revenue streams.

- Premium Closed Models with Tiered Licensing: Offer enterprise‑grade safety, compliance, and support at a premium price point. This maintains higher margins but requires significant investment in security and governance.

- Hybrid Marketplace Platforms: Combine open core models with proprietary extensions (data fine‑tuning, domain expertise) sold as add‑ons. This model balances scalability with revenue diversification.

Each path demands a distinct funding narrative: open‑source founders need to pitch community impact and long‑term ecosystem value; premium founders must demonstrate robust compliance frameworks and ROI for enterprise customers; hybrid founders should showcase modularity and ease of integration.

Funding Landscape Shifts: Capital Allocation in 2025

Venture capitalists are recalibrating due diligence criteria. In early 2025, seed rounds average $4–6 million for open‑source LLMs with clear community traction, while $10–15 million is typical for closed models that secure enterprise pilots.

Key investor signals:

- Performance Benchmarks: Investors now scrutinize AIME, MMLU, and real‑world instruction‑following scores rather than hype metrics.

- Cost Efficiency Metrics: Price per million tokens becomes a primary KPI; models priced below $0.05 per 1 M tokens attract higher valuations.

- Ecosystem Fit: The ability to integrate with existing cloud AI platforms (AWS Bedrock, Azure OpenAI) or on‑prem solutions is weighted heavily.

- Regulatory Readiness: Startups that embed privacy‑by‑design and explainability tooling gain a competitive edge in an increasingly scrutinized AI market.

Pricing Models That Scale: From Micro to Enterprise

The “micro‑pricing” wave is not a permanent fixture; it serves as a launchpad for product‑market fit. Successful startups layer additional value on top of the core model:

- Usage‑Based Tiers: Offer a free or low‑cost tier that caps token usage, encouraging experimentation while generating incremental revenue from high‑volume users.

- Feature Add‑Ons: Charge for advanced capabilities such as contextual memory beyond 32 k tokens, multi‑modal inputs, or specialized domain adapters.

- Enterprise Bundles: Provide SLAs, compliance certifications (GDPR, HIPAA), and dedicated support in exchange for a higher per‑token fee or flat subscription.

- Marketplace Extensions: Enable third‑party developers to sell fine‑tuned models on your platform, earning revenue through royalties.

Example: A startup that launches with DeepSeek R1 at $0.02/1 M tokens could introduce a “Pro” plan adding 128 k token context and 24‑hour SLA for $0.10/1 M tokens, capturing both low‑cost users and revenue‑heavy enterprises.
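The arithmetic behind that example can be sketched in a few lines of Python. This is only an illustration: the `Plan` class, the `monthly_bill` function, and the free‑token allowance are hypothetical names and parameters introduced here, and the only figures taken from the text are the $0.02 and $0.10 per 1 M token prices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    """A usage-based plan (hypothetical structure, for illustration only)."""
    name: str
    price_per_million_usd: float  # USD charged per 1M tokens
    free_tokens: int = 0          # assumed monthly free allowance, if any


def monthly_bill(plan: Plan, tokens_used: int) -> float:
    """Return the monthly charge in USD for a given token volume."""
    billable = max(0, tokens_used - plan.free_tokens)
    return round(billable / 1_000_000 * plan.price_per_million_usd, 2)


# Prices from the example above; everything else is an assumption.
BASE = Plan("Base", 0.02)
PRO = Plan("Pro", 0.10)

if __name__ == "__main__":
    for tokens in (50_000_000, 5_000_000_000):
        for plan in (BASE, PRO):
            print(f"{plan.name:>4} @ {tokens:>13,} tokens: "
                  f"${monthly_bill(plan, tokens):,.2f}")
```

At these rates a customer using 5 billion tokens a month pays $100 on the base plan and $500 on “Pro”, which shows why per‑token pricing alone rarely carries the business and why add‑ons and enterprise bundles matter.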

Technology Integration Benefits: From Edge to Cloud

Hardware constraints have made edge deployment a viable strategy. Deploying smaller R1 variants on laptops or embedded devices unlocks new verticals:

- Education: Interactive tutoring apps that run locally, preserving student privacy.

- Manufacturing: On‑site defect detection models that process sensor data without cloud latency.

- Healthcare: Clinical decision support tools that integrate with electronic health records while staying compliant with HIPAA.

Integrating these models into existing SaaS ecosystems (e.g., Salesforce Einstein, Microsoft Dynamics) can create a seamless user experience and open new revenue channels through partner integrations.

ROI Projections: Quantifying the Value of Low‑Cost LLMs

Consider a mid‑market B2B SaaS company that adopts an open‑source LLM for customer support automation. By replacing a 20‑person call center with a lightweight R1 model, the company can:

- Reduce Operational Costs: From $800k in annual staff salaries to under $200k in inference spend.

- Increase Availability: 24/7 support without overtime or shift scheduling.

- Improve Response Quality: Consistent, bias‑mitigated answers driven by a model that outperforms GPT‑4o on AIME‑like reasoning tasks.

Net present value (NPV) calculations show an 18–24 month payback period under conservative usage estimates. Scaling this across multiple verticals can generate significant margin expansion for investors and founders alike.
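A rough way to check a payback claim like this is to work it through explicitly. The sketch below uses the $800k and $200k annual figures from the scenario; the one‑time migration cost is not given in the text, so the $900k and $1.2M values are purely hypothetical inputs chosen to show what upfront spend would be consistent with an 18–24 month window. The function names and the discount rate are likewise assumptions.

```python
def monthly_savings(annual_staff_cost: float, annual_inference_cost: float) -> float:
    """Recurring monthly saving from replacing staff cost with inference spend."""
    return (annual_staff_cost - annual_inference_cost) / 12


def payback_months(upfront_cost: float, monthly_saving: float) -> float:
    """Simple (undiscounted) payback period in months."""
    if monthly_saving <= 0:
        raise ValueError("monthly_saving must be positive")
    return upfront_cost / monthly_saving


def npv(upfront_cost: float, monthly_saving: float,
        months: int, annual_discount_rate: float) -> float:
    """Net present value of the savings stream, discounted monthly."""
    r = annual_discount_rate / 12
    discounted = sum(monthly_saving / (1 + r) ** t for t in range(1, months + 1))
    return discounted - upfront_cost


if __name__ == "__main__":
    saving = monthly_savings(800_000, 200_000)  # figures from the scenario
    # Hypothetical one-time costs (integration, fine-tuning, change management).
    for upfront in (900_000, 1_200_000):
        print(f"upfront ${upfront:,}: payback {payback_months(upfront, saving):.0f} months, "
              f"3-year NPV at an assumed 10% rate ${npv(upfront, saving, 36, 0.10):,.0f}")
```

With $50k in monthly savings, a one‑time cost of $900k pays back in 18 months and $1.2M in 24 months. A smaller upfront investment would shorten the payback accordingly, so the one‑time cost is the number to pin down before quoting a payback period.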

Implementation Roadmap: From Prototype to Production

- Prototype Validation: Deploy a minimum viable model (e.g., DeepSeek R1 mini) on your core platform. Measure inference latency, token cost, and user satisfaction in a controlled beta.

- Compliance Layering: Integrate data masking, audit logging, and explainability dashboards before scaling to regulated industries.

- Marketplace Enablement: Build an API gateway that supports fine‑tuning requests, versioning, and usage billing. Offer SDKs for popular languages (Python, JavaScript).

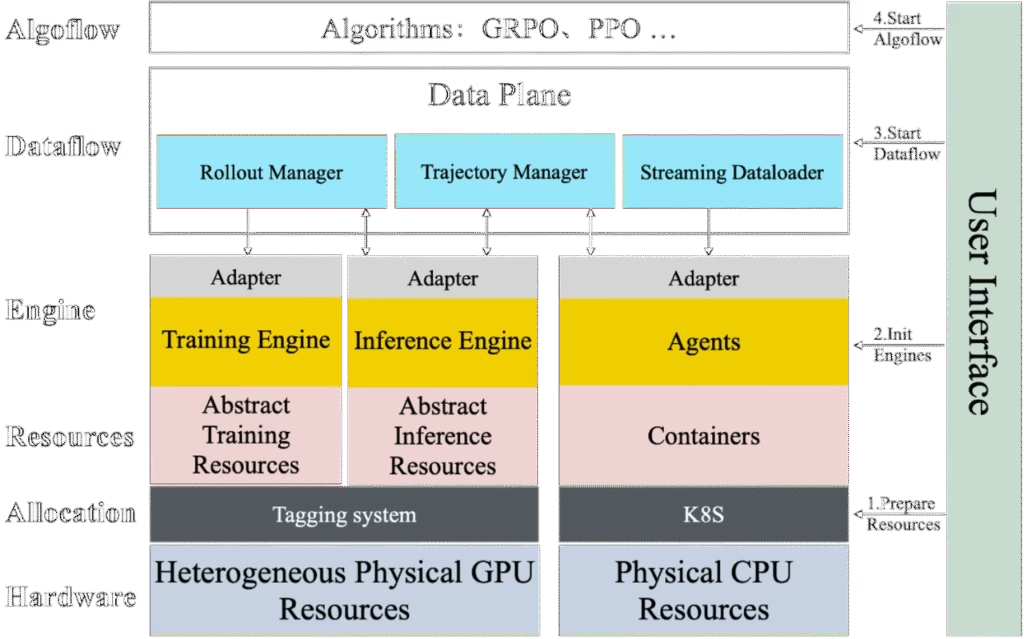

- Scaling Infrastructure: Leverage container orchestration (Kubernetes) to autoscale inference pods based on real‑time load. Use model distillation pipelines to keep models lightweight.

- Feedback Loop: Implement continuous monitoring of performance drift and user feedback to iterate on the training data set.
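To make the autoscaling step more concrete, the sketch below reproduces the scaling rule documented for the Kubernetes Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric), with no change when the ratio is within a tolerance of 1. The function name, the choice of requests per second per pod as the metric, and the replica bounds are assumptions for illustration; in a real cluster this logic lives in the autoscaler, not in application code.

```python
import math


def desired_replicas(current_replicas: int,
                     current_metric: float,
                     target_metric: float,
                     min_replicas: int = 1,
                     max_replicas: int = 20,
                     tolerance: float = 0.1) -> int:
    """Estimate how many inference pods the HPA-style rule would request.

    current_metric and target_metric are per-pod averages, for example
    requests per second per pod (an assumed metric for this sketch).
    """
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    # Skip scaling when load is close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    wanted = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds.
    return max(min_replicas, min(max_replicas, wanted))


if __name__ == "__main__":
    # 4 pods averaging 90 req/s each against a 60 req/s target.
    print(desired_replicas(4, current_metric=90, target_metric=60))
```

Choosing the target metric is the main design decision: for LLM inference, queue depth or tokens generated per second often tracks load better than CPU utilization, though that depends on the serving stack.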

Future Outlook: What’s Next for AI Startups?

The 2025 landscape suggests a few key trajectories:

- Hybrid Licensing Ecosystems: Expect more platforms that pair open core models with proprietary extensions, creating a “freemium” ecosystem where revenue is generated from premium services.

- Regulatory‑First Design: As governments tighten AI oversight, startups that embed compliance tooling from day one will dominate enterprise contracts.

- Edge AI Consolidation: With low‑cost models, edge deployment will become mainstream. Startups that can package LLMs into secure, on‑device modules will capture niche markets (e.g., IoT, automotive).

- Capital Efficiency as a Differentiator: Funding rounds will increasingly reward startups that demonstrate low burn and high ROI per dollar spent. Efficient engineering teams that can iterate quickly without large GPU fleets will attract more venture capital.

Strategic Recommendations for Founders, Investors, and Product Leaders

- Define Your Cost‑Performance Axis Early: Decide whether you’ll compete on ultra‑low cost, premium enterprise features, or a hybrid model. Align your product roadmap and funding pitch accordingly.

- Validate with Real Users Quickly: Deploy lightweight prototypes to beta customers and iterate based on usage data. Use early adoption as proof of concept for investors.

- Build Modular Pricing Schemes: Layer base inference costs with feature add‑ons, enterprise SLAs, and marketplace extensions to create multiple revenue streams.

- Invest in Compliance Infrastructure: Embed privacy, auditability, and explainability from the start. This will open doors to regulated sectors and reduce future compliance risk.

- Leverage Community for Open‑Source Paths: If pursuing an open model, cultivate a developer ecosystem through documentation, SDKs, and contribution guidelines. Community engagement can drive adoption faster than paid marketing.

- Stay Agile on Hardware Trends: Monitor GPU availability, cloud pricing shifts, and emerging hardware (e.g., silicon‑optimized AI chips). Adapt your deployment strategy to maintain cost advantages.

Conclusion: The New Playbook for 2025 AI Startups

The convergence of high performance and low inference cost is the defining trend of 2025. Startups that respond to this shift by aligning their business model with a clear cost‑performance axis, building scalable pricing structures, and embedding compliance from day one will be positioned to capture both early adopters and enterprise contracts.

For founders, the message is simple: build fast, test relentlessly, and monetize strategically. For investors, focus on teams that can engineer efficiency without compromising quality. And for product leaders, prioritize integration pathways that unlock new verticals while keeping operational costs under control.

The era of founder hype has ended; the era of engineering excellence and business acumen is here. Embrace it, and your startup can thrive in a landscape where every token counts—and every dollar saved amplifies impact.
