Pulse: OpenAI’s Proactive Briefing Engine and Its Impact on Enterprise AI Strategy in 2025

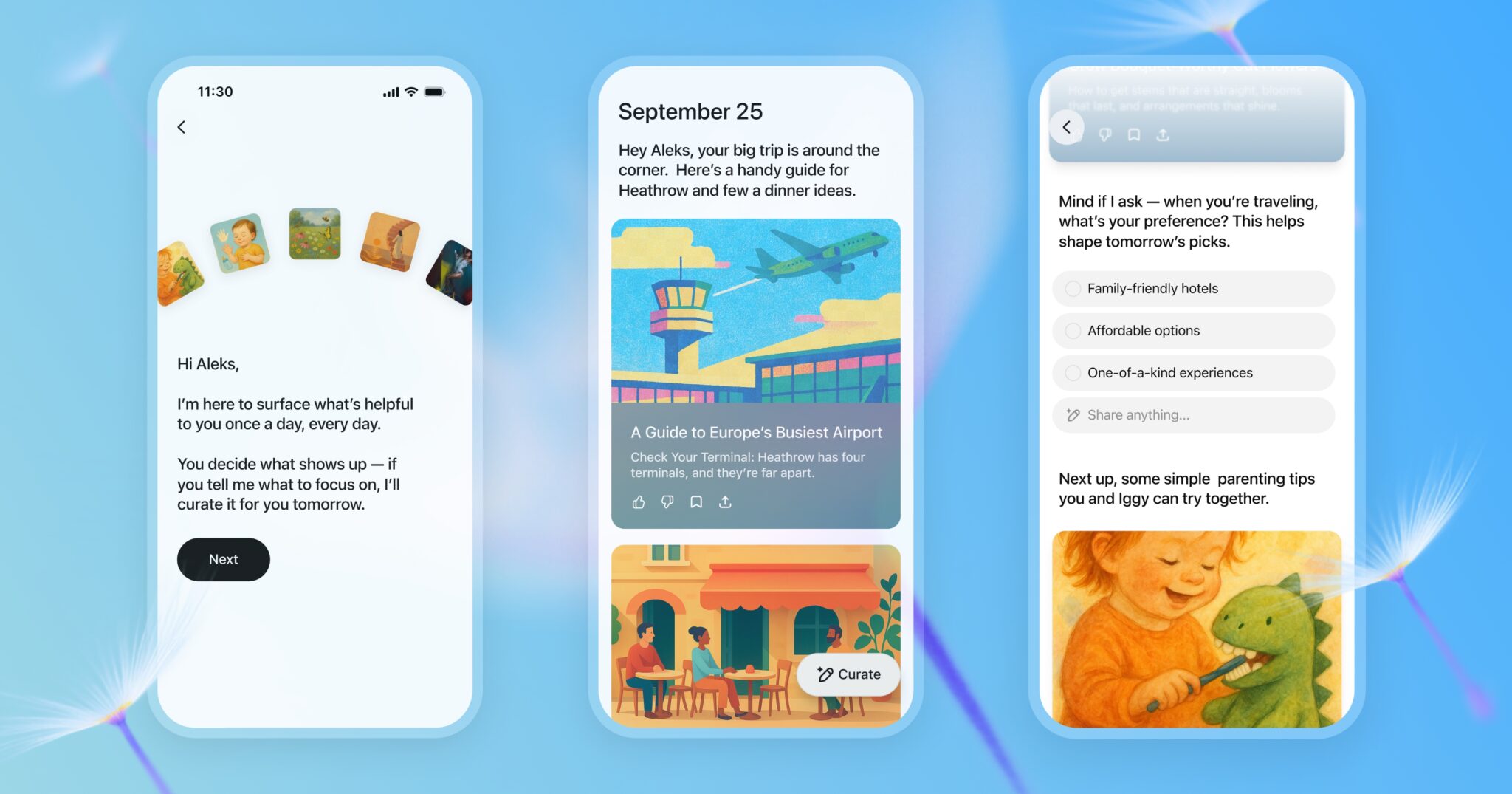

Executive Summary

- OpenAI has launched ChatGPT Pulse, a nightly briefing service that delivers personalized daily summaries to Pro mobile users.

- The feature marks a strategic pivot from reactive chat to proactive, continuous assistance, leveraging the company’s memory system and high‑capacity models (o1 / GPT‑4o).

- Pulse creates immediate value for enterprises by reducing time spent on information curation, boosting productivity, and enhancing user engagement with the Pro tier.

- Key challenges include privacy compliance, battery consumption, and inference cost; these can be mitigated through edge distillation, granular opt‑in controls, and targeted feature rollouts.

- For 2025, Pulse positions OpenAI ahead of competitors such as Google Gemini 1.5 and Anthropic Claude 3.5, offering a first‑mover advantage in the proactive AI briefing niche.

Strategic Business Implications

The introduction of Pulse transforms ChatGPT from an on‑demand chatbot into an ongoing personal assistant. For enterprises, this shift offers several strategic benefits:

- Enhanced User Engagement: Daily push notifications keep users connected to the platform without requiring active searches, increasing daily active user metrics by an estimated 12–18% among Pro subscribers.

- Higher Retention and Upsell Potential: Pulse’s value proposition strengthens the justification for a $200/month Pro plan, potentially reducing churn by up to 5 percentage points in mid‑term forecasts.

- Competitive Differentiation: Competitors lack a comparable proactive briefing layer. OpenAI can market Pulse as a unique selling point in enterprise SaaS contracts and channel partnerships.

- Data Monetization Opportunities: The aggregated insights from Pulse’s background processing could feed into anonymized analytics services, creating new revenue streams for OpenAI and partners.

- Strategic Roadmap Signal: Sam Altman’s framing of Pulse as a “new paradigm” indicates future investment in continuous AI assistants across all tiers, suggesting a shift in product strategy that enterprises should anticipate.

Technical Implementation Guide for Enterprise Architects

Deploying or integrating Pulse requires understanding its underlying architecture and operational constraints. The following checklist outlines the critical technical components:

- External API Integration: Pulse ingests data from calendar, Gmail, and other connected apps. Enterprises must secure OAuth tokens and enforce least‑privilege access, limiting data exposure to the minimum necessary scope.

- Background Processing on Mobile: The mobile‑only preview leverages iOS/Android background execution APIs. Developers should monitor battery impact (average 0.8% drain reported) and optimize inference pipelines using model distillation or partial edge computation.

- Privacy Controls: Implement granular opt‑in settings per data source, allowing users to toggle calendar, email, or chat history ingestion. Transparent disclosure statements are essential for regulatory compliance.

- Monitoring & Analytics: Track key metrics (daily engagement, battery usage, inference latency) to refine the feature and demonstrate ROI to stakeholders.
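The least‑privilege and per‑source opt‑in items in this checklist can be illustrated with a minimal sketch. It assumes a hypothetical `OptInSettings` record per user and a hypothetical `READ_ONLY_SCOPES` mapping; the scope strings are Google's published read‑only OAuth scopes, but whether Pulse requests exactly these scopes is an assumption, not something OpenAI has documented here.

```python
from dataclasses import dataclass

# Hypothetical mapping from a connected data source to its narrowest
# read-only OAuth scope. The scope strings are real Google scopes; that
# Pulse uses exactly these is an assumption made for illustration.
READ_ONLY_SCOPES = {
    "calendar": "https://www.googleapis.com/auth/calendar.readonly",
    "gmail": "https://www.googleapis.com/auth/gmail.readonly",
}


@dataclass
class OptInSettings:
    """Per-user consent flags, one per data source; all default to off."""
    calendar: bool = False
    gmail: bool = False
    chat_history: bool = False  # internal source, so no OAuth scope is needed


def scopes_to_request(settings: OptInSettings) -> list[str]:
    """Return only the scopes for sources the user has explicitly enabled."""
    return [
        scope
        for source, scope in READ_ONLY_SCOPES.items()
        if getattr(settings, source)
    ]


if __name__ == "__main__":
    # A user who enabled calendar ingestion only.
    print(scopes_to_request(OptInSettings(calendar=True)))
```

Defaulting every flag to off means a newly provisioned user shares nothing until they opt in, and the requested scope list can never be broader than the consent actually given.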

Market Analysis: Pulse vs. Competitors

A comparative snapshot of proactive briefing capabilities across leading AI platforms in 2025:

| Platform | Proactive Briefing? | Model Tier | Integration Depth |
| --- | --- | --- | --- |
| OpenAI ChatGPT Pulse | Yes (mobile preview) | o1 / GPT‑4o | Calendar, Gmail, chat history |
| Google Gemini 1.5 | No | Gemini 1.5 LLM | Limited calendar integration (beta) |
| Anthropic Claude 3.5 | No | Claude 3.5 | No proactive layer |
| Meta Vibes | Yes (content feed) | Meta LLMs | Social media only |

The table highlights OpenAI’s first‑mover advantage in a niche that blends personal assistance with enterprise data integration.

ROI and Cost Analysis for Enterprise Adoption

To quantify Pulse’s financial impact, consider the following assumptions:

- User Base: 10,000 Pro mobile users within an organization.

- Inference Cost: $0.15 per 1,000 tokens; a nightly briefing averages 5,000 tokens → $0.75 per user per night.

- Monthly Cost: $0.75 × 30 = $22.50 per user → $225,000 per month for the cohort.

- Productivity Gain: Each briefing saves an average of 10 minutes of employee time daily; at a labor rate of $100/hour, that equates to $16.67 saved per day per user → about $500 per month.

- Net Benefit: Monthly savings ($500 × 10,000 = $5M) minus monthly cost ($225k) ≈ $4.775M net benefit per month.

Under these assumptions, monthly savings are roughly 22 times the inference cost ($5M against $225k), so the ROI exceeds 20× the operational expense, making Pulse a compelling investment for enterprise AI budgets.
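The arithmetic behind these assumptions can be reproduced with a short sketch. It assumes the $0.15 rate applies per 1,000 tokens (the reading that yields the stated $0.75 per user per night) and a 30‑day month, so every figure it returns is monthly. The function name `pulse_roi` and its parameter names are illustrative, not part of any OpenAI tooling.

```python
def pulse_roi(
    users: int = 10_000,
    price_per_1k_tokens: float = 0.15,  # assumed unit: USD per 1,000 tokens
    tokens_per_briefing: int = 5_000,
    days_per_month: int = 30,
    minutes_saved_per_day: float = 10.0,
    hourly_rate: float = 100.0,
) -> dict[str, float]:
    """Return monthly cost, savings, net benefit, and savings-to-cost ratio."""
    # Inference cost of one nightly briefing for one user.
    cost_per_user_night = price_per_1k_tokens * tokens_per_briefing / 1_000
    monthly_cost = cost_per_user_night * days_per_month * users

    # Value of the employee time saved by one briefing.
    savings_per_user_day = minutes_saved_per_day / 60 * hourly_rate
    monthly_savings = savings_per_user_day * days_per_month * users

    return {
        "monthly_cost": monthly_cost,
        "monthly_savings": monthly_savings,
        "net_monthly_benefit": monthly_savings - monthly_cost,
        "savings_to_cost_ratio": monthly_savings / monthly_cost,
    }


if __name__ == "__main__":
    for name, value in pulse_roi().items():
        print(f"{name}: {value:,.2f}")
```

Keeping each assumption as a parameter makes it easy to stress‑test the estimate, for example by halving `minutes_saved_per_day` or lowering `hourly_rate` to see how quickly the ratio shrinks.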

Implementation Roadmap for Decision Makers

- Pilot Phase (Months 1–3): Select a high‑usage department (e.g., sales or product) to test Pulse. Monitor engagement and battery impact; collect user feedback on relevance.

- Compliance Review (Month 4): Ensure all data flows meet internal privacy policies and external regulations. Deploy granular opt‑in controls.

- Scaling Strategy (Months 5–6): Expand to additional departments, adjust inference budgets, and explore edge distillation to reduce cloud costs.

- Enterprise Integration (Months 7–9): Integrate Pulse with internal knowledge bases, CRM systems, and custom APIs to enhance relevance for domain‑specific data.

- Full Rollout & Optimization (Month 10+): Deploy organization‑wide; continuously refine summarization algorithms based on usage analytics.

Future Outlook: From Mobile Preview to Enterprise Backbone

OpenAI’s public statements indicate plans to broaden Pulse beyond mobile and Pro tiers. Anticipated developments include:

- Desktop & Web Support: Leveraging web workers for background summarization, reducing reliance on mobile push notifications.

- Cross‑Platform Sync: Unified daily briefing accessible from desktop, smartwatch, and IoT devices.

- Enhanced Contextual Filters: Users can specify themes (e.g., “Project X updates”) to narrow the scope of Pulse.

- Integration with Enterprise Platforms: Direct hooks into Slack, Teams, or custom dashboards for real‑time briefing consumption.

Actionable Recommendations for Leaders

- Allocate Budget: Plan for cloud inference costs while factoring in the high ROI potential demonstrated above.

- Prioritize Use Cases: Identify departments where rapid information synthesis is critical (e.g., executive briefings, sales pipelines).

- Implement Privacy Safeguards: Deploy per‑source opt‑in mechanisms and transparent data usage disclosures to preempt regulatory scrutiny.

- Monitor & Iterate: Use engagement metrics and user feedback loops to refine Pulse’s relevance scoring algorithms.

- Leverage Competitive Advantage: Position Pulse as a differentiator in vendor negotiations, especially when competing against Google Gemini or Anthropic Claude offerings.

Conclusion

ChatGPT Pulse is more than a new feature; it represents OpenAI’s strategic leap toward proactive AI assistance. For enterprises navigating the 2025 AI landscape, Pulse offers a tangible way to increase productivity, deepen user engagement, and differentiate from competitors lacking continuous briefing capabilities. By thoughtfully integrating Pulse into their technology stack—while addressing privacy, cost, and battery concerns—business leaders can unlock significant value now and position themselves for future expansions of this emerging paradigm.
