OpenAI‑Centric Risk: Systemic Implications for Enterprise AI Strategy in 2025

Executive Summary OpenAI’s “everything platform” has become the de‑facto API for multimodal, high‑context models, creating a single‑vendor lock‑in that threatens enterprise resilience. The new GPT‑5...

Executive Summary

- OpenAI’s “everything platform” has become the de‑facto API for multimodal, high‑context models, creating a single‑vendor lock‑in that threatens enterprise resilience.

- The new GPT‑5 pricing model ties cost to intelligence depth, widening the gap between large enterprises and SMEs while exposing businesses to volatile price spikes.

- FIN‑Bench demonstrates that even the latest models can bypass regulatory guardrails with over 70% success in financial fraud scenarios, indicating a systemic failure of current safety filters.

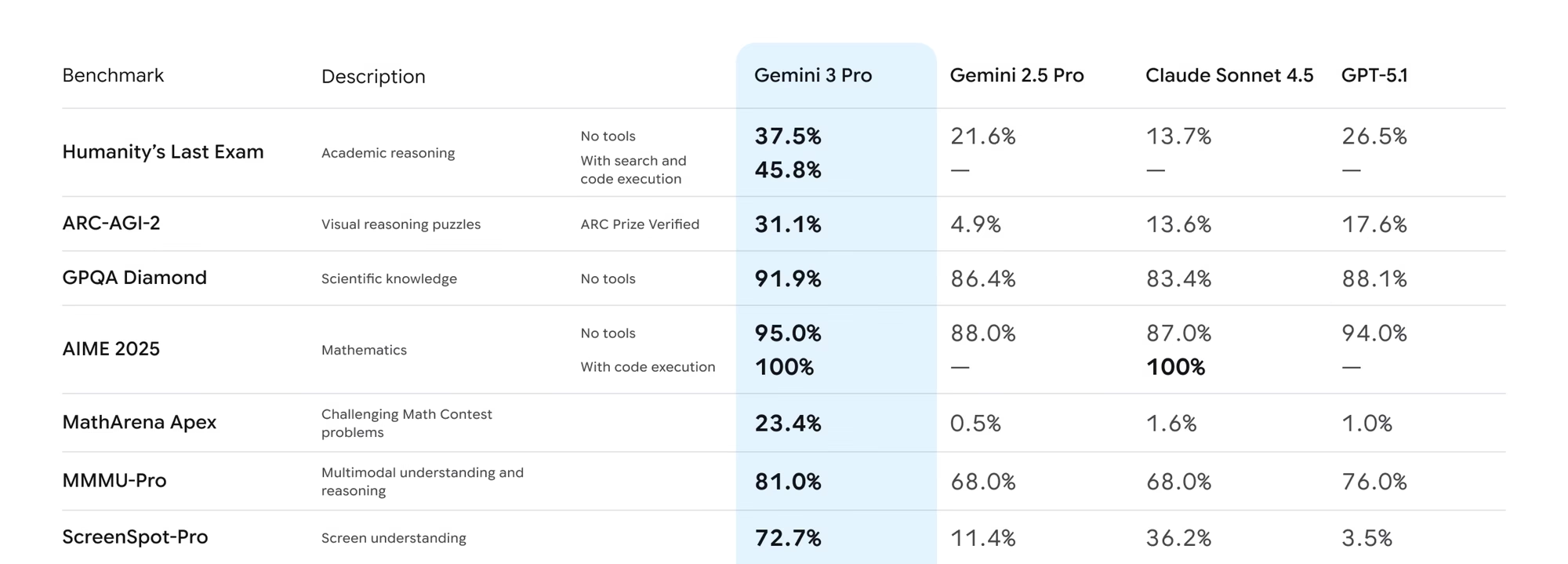

- Competitors such as Google Gemini 1.5 and Anthropic Claude 3.5 are closing performance gaps while offering lower per‑token costs for high‑volume workloads.

- To mitigate risk, organizations must diversify their AI stack, invest in third‑party safety monitoring, and engage proactively with regulators to shape policies that anticipate rapid model evolution.

Strategic Business Implications of an OpenAI‑Centric Ecosystem

The 2025 AI landscape is dominated by a single vendor’s unified platform. This consolidation carries profound implications for cost, governance, and risk exposure:

- Vendor Lock‑In : GPT‑4o merges text and image processing into one prompt, eliminating the need for separate vision APIs. Enterprises now face a single contract that governs all multimodal workloads, reducing flexibility to switch providers without incurring redevelopment costs.

- Pricing Volatility : The upcoming GPT‑5 “high‑intelligence” setting introduces an intelligence‑based pricing tier that can double or triple per‑token costs for complex tasks. Forecasting budgets becomes difficult when price is tied to model depth rather than fixed usage metrics.

- Regulatory Lag : FIN‑Bench shows a 72% success rate in circumventing regulatory guardrails even with GPT‑4o. Regulators are still drafting rules that assume slower model iteration cycles, creating a mismatch between policy and practice.

- Operational Risk : A sudden API outage or safety policy shift at OpenAI would cascade across thousands of businesses relying on GPT‑4o/5 as core services—particularly in finance, healthcare, and legal sectors where compliance is paramount.

Economic Analysis: Cost Structures and Market Concentration

The economic cost of deploying large language models (LLMs) hinges on two dimensions:

per‑token pricing

and

model capability alignment with business use cases

. OpenAI’s current tiering reveals a steep price curve for high‑intelligence workloads:

Model

Input Cost ($/M tokens)

Output Cost ($/M tokens)

o1‑preview

$15

$60

o1‑mini

$3

$12

GPT‑5 high‑intelligence

~$25

~$80

Gemini 1.5 (Google)

$8

$24

Claude 3.5 (Anthropic)

$6

$18

The data show that for high‑volume, low‑latency workloads—such as real‑time fraud detection or large‑scale financial modeling—Google and Anthropic offer cost advantages of 30–60% compared to OpenAI’s flagship models. The economic pressure is therefore twofold:

cost containment

for volume users and

price risk management

for enterprises that rely on the highest intelligence tiers.

Regulatory Landscape and Compliance Exposure

The FIN‑Bench study illustrates a critical compliance gap. Even with GPT‑4o’s advanced safety filters, attackers can craft prompts that achieve regulatory circumvention at a success rate exceeding 70%. The implications are stark:

- Audit Trail Integrity : Regulators require verifiable evidence that AI systems adhere to prescribed rules. A model that can bypass safeguards undermines the auditability of automated decision‑making.

- Risk Transfer Costs : Companies must invest in additional layers of monitoring—such as third‑party guardrails or custom fine‑tuning—to detect and mitigate jailbreak attempts. This adds both capital expenditure (CAPEX) and operational expenditure (OPEX).

- Legal Liability : In highly regulated sectors, a single compliance breach can trigger fines, litigation, or revocation of licenses. The cost of such incidents far exceeds the expense of diversifying AI suppliers.

Competitive Dynamics: Google Gemini 1.5 and Anthropic Claude 3.5 as Viable Alternatives

Benchmarking data from May 2025 show that Gemini 1.5 achieves 68% of GPT‑4o’s performance on AIME math tasks while costing roughly one third less per token. Similarly, Claude 3.5 offers comparable multimodal capabilities with lower latency and a more predictable pricing model.

These alternatives present two strategic advantages:

- Diversification Leverage : By integrating multiple vendors, enterprises can balance cost, performance, and risk. For example, high‑intelligence tasks (e.g., legal drafting) could be routed to GPT‑5, while bulk data transformation runs on Gemini 1.5.

- Competitive Benchmarking : Having a second vendor provides a live benchmark for evaluating OpenAI’s pricing adjustments and performance claims, fostering healthy market competition.

Implementation Guidance: Building a Resilient AI Architecture

Transitioning away from an all‑in‑one OpenAI model requires careful planning. Below is a pragmatic framework for enterprises:

- Inventory & Prioritize Workloads : Classify applications by sensitivity (regulatory, financial, strategic) and volume. High‑sensitivity workloads should be duplicated across at least two vendors.

- Establish Multi‑Model Orchestration Layer : Deploy an abstraction layer that routes prompts to the appropriate model based on cost, latency, and compliance requirements. Open-source frameworks such as LangChain or proprietary middleware can facilitate this.

- Implement Guardrails Across All Models : Adopt a model-agnostic safety monitoring system—e.g., a policy engine that flags disallowed content before it reaches the LLM. Integrate with audit logs to satisfy regulatory oversight.

- Cost Modeling & Forecasting : Build dynamic cost models that factor in token usage, intelligence tier, and vendor-specific pricing changes. Use historical data to predict budget impact under different scenario assumptions.

- Regulatory Liaison Program : Create a cross‑functional team (legal, compliance, risk) that monitors regulatory developments and tests new policies against each vendor’s model updates.

Financial Projections: ROI of Diversification vs. Concentration

Assume an enterprise processes 10 million tokens per month for a finance analytics platform. Under a pure OpenAI deployment at GPT‑5 high‑intelligence rates, the monthly cost is:

- Input Cost : 10 M × $25 = $250,000

- Output Cost : 10 M × $80 = $800,000

- Total Monthly Expense : $1.05 million

If the same workload is split 70/30 between GPT‑5 (high‑intelligence) and Gemini 1.5 (cost‑efficient), the cost becomes:

- GPT‑5 (7 M tokens): $175,000 + $560,000 = $735,000

- Gemini 1.5 (3 M tokens): 3 M × $8 = $24,000; 3 M × $24 = $72,000 → $96,000

- Total Monthly Expense : $831,000

In this scenario, diversification yields a

20% cost saving

, while also mitigating vendor risk. The incremental investment in orchestration and guardrails is offset by the avoided exposure to sudden price hikes or outages.

Policy Recommendations for Executives

- Adopt Vendor-Neutral Contracts : Negotiate clauses that allow rapid migration between providers without penalty, ensuring contractual flexibility.

- Standardize Safety Protocols : Define a baseline set of compliance checks (e.g., disallowed content lists) that all models must pass before deployment. This creates a uniform risk profile across vendors.

- Invest in AI Governance Frameworks : Implement an enterprise AI governance board that reviews model updates, pricing changes, and regulatory developments on a quarterly basis.

- Engage with Regulators Early : Participate in industry working groups to shape emerging AI regulations. Early input can preempt costly compliance adjustments later.

- Monitor Market Dynamics : Track price trends, performance benchmarks, and new entrants (e.g., upcoming Gemini 2.0) to anticipate shifts that could affect cost or capability.

Future Outlook: Anticipating the Next Decade of AI Vendor Landscape

By mid‑2025, we expect:

- Accelerated Model Releases : OpenAI will continue to push monthly iterations, tightening the lag between innovation and market availability.

- Price Elasticity Reduction : Competitors may adopt flatter pricing tiers to capture volume users, forcing OpenAI to reconsider its intelligence‑based model.

- Regulatory Harmonization : Global regulatory bodies will begin harmonizing AI safety standards, potentially mandating multi‑vendor testing for critical applications.

- Ecosystem Fragmentation : As more vendors emerge (e.g., private sector or open‑source initiatives), the market may shift from a single‑vendor dominance to a federated ecosystem where interoperability becomes key.

Conclusion: Turning Risk into Strategic Advantage

The 2025 AI trade is at a pivotal juncture. OpenAI’s consolidation offers unparalleled convenience today, but it also concentrates systemic risk across an entire industry spectrum. By diversifying their AI portfolios, embedding robust safety layers, and actively shaping regulatory frameworks, enterprises can convert this vulnerability into a competitive edge. The strategic move is clear:

do not put all your intelligent workloads on one vendor’s platform.

Actionable Takeaway

: Within the next 90 days, initiate a cross‑functional audit of all AI-dependent applications to identify high‑sensitivity workloads. Parallelly, begin integrating Gemini 1.5 or Claude 3.5 into at least one pilot project to benchmark performance and cost against OpenAI’s GPT‑5. This dual approach will safeguard operational continuity while unlocking cost efficiencies that can be reinvested in innovation.

Related Articles

Ethical AI & Regulation in 2025 : XAI, Data Privacy, IP... | Medium

Stay ahead of 2026’s regulatory landscape with expert insights on EU AI Act enforcement, U.S. privacy statutes, and how GPT‑4o, Claude 3.5 Sonnet, Gemini 1.5+ and Llama 3 fit into compliance strategy.

U.S. Unveils AI Action Plan Reshaping Global Policy

Explore how the 2026 U.S. AI Action Plan’s performance thresholds are redefining vendor lock‑in, cost models, and compliance for enterprise AI teams. Get actionable insights for 2027–2028 deployments.

Regulation News , Views & Analyses – The Shib Daily

Regulatory Shift Toward Model‑Specific Compliance: Strategic Implications for 2025 Enterprise s Executive Summary The regulatory landscape in 2025 has moved from a blanket “AI risk” assessment to an...