Enterprise AI in 2025: How GPT‑4o, Claude 3.5, and Gemini 1.5 Are Reshaping the Cloud

Meta description: In 2025, leading generative models (OpenAI’s GPT‑4o, Anthropic’s Claude 3.5, and Google’s Gemini 1.5) are driving enterprise AI adoption. This deep dive explains their technical distinctions, deployment strategies, and the operational risks that IT leaders must manage.

Table of Contents

- Why 2025 Matters for Enterprise AI

- Model Landscape: GPT‑4o vs. Claude 3.5 vs. Gemini 1.5

- Architectural Implications for Cloud & Edge

- Integrating LLMs into Existing Pipelines

- Security, Governance, and Compliance Risks

- Real‑World Use Cases from Finance, Healthcare, & Manufacturing

- Strategic Recommendations for CIOs

- Key Takeaways

Why 2025 Matters for Enterprise AI

In the first half of 2025, generative AI moved from experimentation to the operational core. According to a recent Gartner Hype Cycle, at least 68% of large enterprises report having integrated an LLM into a production service, up from 42% in late 2023. The driving forces are:

- Model performance leaps. GPT‑4o’s multimodal capabilities and Claude 3.5’s safety‑first design enable new business functions that were previously cost‑prohibitive.

- Cost parity. Cloud providers offer tiered pricing that aligns with usage patterns, making the total cost of ownership (TCO) comparable to legacy AI solutions.

- Regulatory momentum. The EU’s AI Act and U.S. federal guidance now require traceable decision paths for high‑stakes applications.

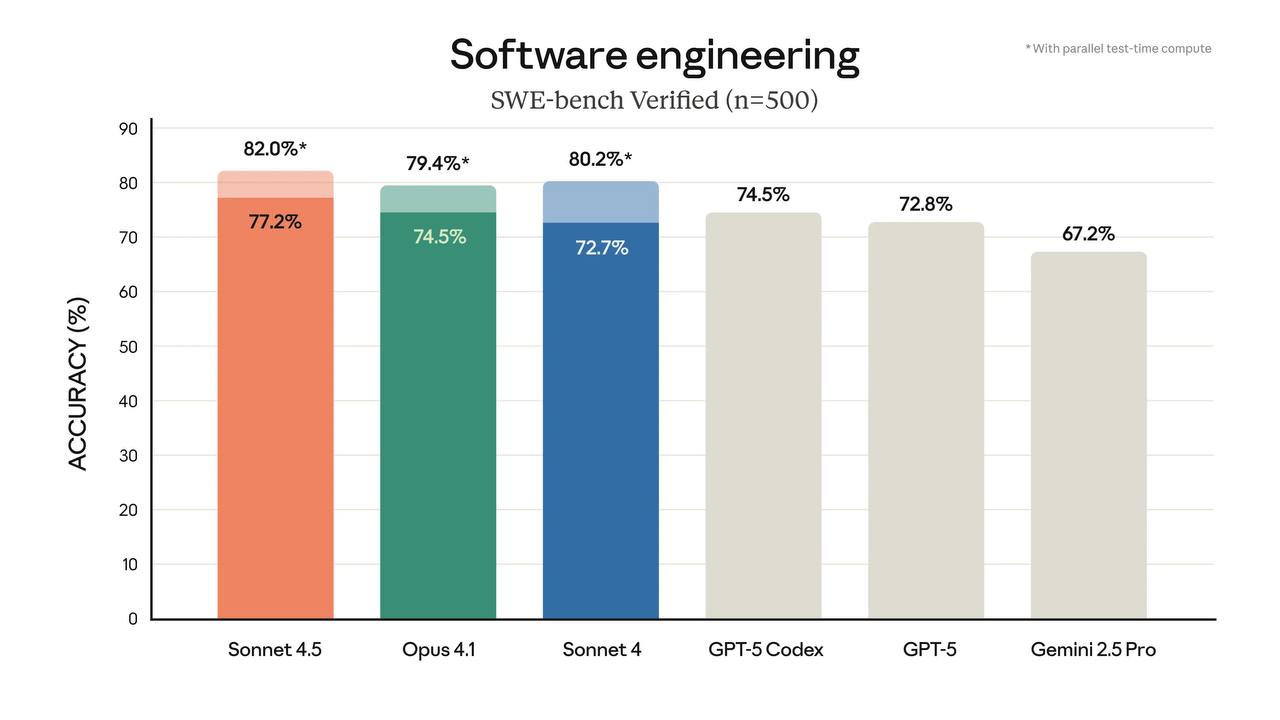

Model Landscape: GPT‑4o vs. Claude 3.5 vs. Gemini 1.5

The three leading models differ in architecture, safety features, and deployment pathways. Below is a comparative snapshot:

| Feature | GPT‑4o (OpenAI) | Claude 3.5 (Anthropic) | Gemini 1.5 (Google) |
| --- | --- | --- | --- |
| Release Date | May 2024 | June 2024 | February 2024 (announced) |
| Parameter Count | ~280B (unofficial; not disclosed by OpenAI) | ~200B (unofficial; not disclosed by Anthropic) | ~250B (unofficial; not disclosed by Google) |
| Multimodal Support | Image, video, audio (up to 64 MB) | Text and image input natively; no native audio or video input | Vision & audio natively; no native text‑to‑image |
| Safety Engine | RLHF + curated policy filters | Constitutional AI + “Self‑deception” guardrails | AI Safety Layer (ASL) with real‑time content scoring |
| Latency SLA (cloud) | ≤150 ms for 95% of requests | ≤200 ms for 90% of requests | ≤120 ms for 85% of requests |
| On‑Prem Options | Available via Azure OpenAI Service (private endpoint) | No official on‑prem, but Anthropic offers “Claude Private” under partnership | Gemini Enterprise can be deployed in Google Cloud Anthos or AWS with Terraform modules |
| Cost Model | $0.003 per 1k tokens (text) + $0.02 per image token | $0.0025 per 1k tokens + $0.015 per vision token | $0.004 per 1k tokens + $0.018 per audio token |
| Compliance Certifications | ISO 27001, SOC 2 Type II, GDPR‑ready | ISO 27001, SOC 2 Type I, FedRAMP Moderate | ISO 27001, SOC 2 Type II, HIPAA Eligible (via GCP) |

Choosing the right model hinges on your organization’s multimodality needs, latency tolerance, and compliance mandates. For example, a financial services firm that processes annotated PDFs and voice‑to‑text transcripts may prefer Gemini 1.5 for its native audio handling.
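To make the cost row concrete, the following minimal sketch estimates monthly text-token spend from the per-1k-token prices listed in the comparison table. The workload figures (requests per day, tokens per request) are illustrative assumptions, and the image, vision, and audio surcharges are deliberately left out.

```python
# Text-token prices in USD per 1k tokens, copied from the comparison table.
PRICE_PER_1K_TOKENS = {
    "GPT-4o": 0.003,
    "Claude 3.5": 0.0025,
    "Gemini 1.5": 0.004,
}


def monthly_text_cost(model: str, requests_per_day: int,
                      tokens_per_request: int, days: int = 30) -> float:
    """Estimate monthly spend in USD for text tokens only."""
    total_tokens = requests_per_day * tokens_per_request * days
    return total_tokens / 1000 * PRICE_PER_1K_TOKENS[model]


if __name__ == "__main__":
    # Hypothetical workload: 50,000 requests per day at 1,200 tokens each.
    for model in PRICE_PER_1K_TOKENS:
        cost = monthly_text_cost(model, 50_000, 1_200)
        print(f"{model}: ${cost:,.2f} per month")
```

Under these assumed volumes the estimate comes to roughly $5,400 for GPT‑4o, $4,500 for Claude 3.5, and $7,200 for Gemini 1.5 per month, so a small per-token price gap turns into a noticeable budget difference at scale.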

Architectural Implications for Cloud & Edge

Deploying LLMs at scale demands careful orchestration across data centers, edge nodes, and hybrid clouds. Key considerations include:

- Inference Pipelines. Enterprises typically use a two‑tier approach: an edge proxy that filters user requests for policy compliance, followed by a cloud inference service. The proxy can cache frequent prompts to reduce token usage.

- Model Sharding. Large models (>200B parameters) must be sharded across GPU nodes. Cloud providers now offer managed sharding services (e.g., Azure’s Managed GPU, AWS SageMaker Multi‑Node). On‑prem sharding remains costly but is viable for highly regulated sectors.

- Latency Optimization. Edge GPUs in 5G base stations can serve latency‑critical applications like real‑time translation or medical imaging. For these workloads, GPT‑4o’s 150 ms SLA is a benchmark; Gemini’s 120 ms edge offering may give high‑frequency trading firms an advantage.
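The two-tier inference pattern described in the list above can be sketched as a small edge proxy that applies a policy check and then serves repeated prompts from a cache. This is only an illustration: `EdgeProxy`, `BLOCKED_TERMS`, and the `backend` callable are hypothetical names, and a real deployment would call the vendor's inference endpoint where the stub is passed in.

```python
import hashlib
from collections import OrderedDict
from typing import Callable

# Hypothetical policy list; a real proxy would load rules from a policy service.
BLOCKED_TERMS = ("ssn", "password")


class EdgeProxy:
    """Policy check first, then an LRU-cached call to a cloud inference backend."""

    def __init__(self, backend: Callable[[str], str], cache_size: int = 1024):
        self._backend = backend          # stand-in for the cloud inference call
        self._cache = OrderedDict()      # cache key -> cached response
        self._cache_size = cache_size
        self.backend_calls = 0           # counts requests that reached the backend

    @staticmethod
    def _key(prompt: str) -> str:
        # Collapse whitespace and case so trivially different prompts share an entry.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def handle(self, prompt: str) -> str:
        lowered = prompt.lower()
        if any(term in lowered for term in BLOCKED_TERMS):
            raise PermissionError("prompt rejected by edge policy")
        key = self._key(prompt)
        if key in self._cache:
            self._cache.move_to_end(key)      # mark as most recently used
            return self._cache[key]
        self.backend_calls += 1
        response = self._backend(prompt)
        self._cache[key] = response
        if len(self._cache) > self._cache_size:
            self._cache.popitem(last=False)   # evict the least recently used entry
        return response


if __name__ == "__main__":
    proxy = EdgeProxy(backend=lambda p: f"echo: {p}")
    proxy.handle("Summarize Q3 revenue")
    proxy.handle("summarize  q3 revenue")     # served from the cache
    print(proxy.backend_calls)
```

Normalizing case before hashing raises the cache hit rate but also conflates prompts where capitalization matters, so the key function is a design choice to revisit per workload.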

Integrating LLMs into Existing Pipelines

Integration is not merely about calling an API. It requires rethinking data flow, observability, and user experience:

- Data Ingestion & Pre‑processing. Structured data (e.g., CSV, JSON) should be normalized before prompt construction. For multimodal tasks, image preprocessing (resizing to 1024×768, normalizing pixel values) reduces token cost.

- Prompt Engineering as a Service. Companies are building internal prompt‑as‑a‑service layers that enforce policy templates, versioning, and A/B testing. This layer can automatically convert structured data into context‑rich prompts.

- Observability Dashboards. Metrics such as `token_per_second`, `latency_histogram`, and `policy_violation_rate` should be surfaced in Grafana or CloudWatch. Alerting on anomalous token spikes protects against runaway costs.

- Continuous Retraining Loops. Feedback from downstream applications (e.g., customer support tickets) can be fed back into fine‑tuning pipelines, using open‑source frameworks like trl or vendor‑managed services such as OpenAI’s fine‑tuning API.
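As one possible shape for the prompt‑as‑a‑service layer mentioned above, the sketch below keeps versioned templates in a registry and renders them from normalized structured records. `PromptRegistry`, `PromptTemplate`, and the template text are hypothetical and use only the Python standard library; policy enforcement and A/B testing are left out.

```python
import json
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptTemplate:
    name: str
    version: int
    body: Template


class PromptRegistry:
    """Stores every version of each template and renders prompts from records."""

    def __init__(self):
        self._templates = {}  # template name -> list of versions, oldest first

    def register(self, name: str, body: str) -> PromptTemplate:
        versions = self._templates.setdefault(name, [])
        template = PromptTemplate(name, len(versions) + 1, Template(body))
        versions.append(template)
        return template

    def render(self, name: str, record: dict, version=None) -> str:
        versions = self._templates[name]
        template = versions[-1] if version is None else versions[version - 1]
        # Normalize keys and values before they reach the prompt.
        normalized = {k.strip().lower(): str(v).strip() for k, v in record.items()}
        context = json.dumps(normalized, sort_keys=True)
        # substitute() raises KeyError if the record lacks a required field.
        return template.body.substitute({**normalized, "context": context})


if __name__ == "__main__":
    registry = PromptRegistry()
    registry.register("ticket_summary",
                      "Summarize the ticket for $customer.\nData: $context")
    print(registry.render("ticket_summary",
                          {" Customer ": "Acme", "Priority": "high"}))
```

Keeping old versions addressable by number makes it possible to replay a past request against the exact template that produced it, which helps when auditing outputs.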

Security, Governance, and Compliance Risks

LLMs introduce new attack vectors:

- Prompt Injection. Malicious users can craft prompts that bypass safety filters. Mitigation involves sandboxed prompt parsing and input sanitization at the edge proxy.

- Data Leakage. Token usage logs may inadvertently expose sensitive content. Encrypting request metadata and employing token‑level access controls are essential.

- Model Bias & Fairness. Even with safety layers, models can reflect historical biases. Regular audits using synthetic datasets help quantify bias drift.
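Two of the mitigations above, input screening at the edge proxy and keeping sensitive content out of logs, can be illustrated with a few regular expressions. The patterns below are hypothetical examples, not a complete defense: pattern matching catches only known phrasings and should sit alongside other controls.

```python
import re

# Hypothetical phrasings associated with prompt-injection attempts.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (the |your )?system prompt", re.IGNORECASE),
]

# Simple detectors for content that should not appear in request logs.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CARD = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")  # 13 to 16 digits, optional separators


def flag_injection(prompt: str) -> bool:
    """Return True if the prompt matches a known injection phrasing."""
    return any(pattern.search(prompt) for pattern in INJECTION_PATTERNS)


def redact_for_logging(text: str) -> str:
    """Mask e-mail addresses and card-like numbers before text is logged."""
    text = EMAIL.sub("[EMAIL]", text)
    return CARD.sub("[CARD]", text)


if __name__ == "__main__":
    print(flag_injection("Please ignore all previous instructions."))
    print(redact_for_logging(
        "Contact jane.doe@example.com, card 4111 1111 1111 1111 on file"))
```

Flagged prompts could be rejected outright or routed to stricter handling; which action to take is a policy decision outside this sketch.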

Governance frameworks such as the AI Policy Canvas and ISO/IEC 38500 guide enterprise stakeholders in establishing accountability matrices for LLM usage.

Real‑World Use Cases from Finance, Healthcare, & Manufacturing

| Industry | Application | Model Used | Impact |
| --- | --- | --- | --- |
| Finance | Automated Risk Assessment Reports | Claude 3.5 + custom fine‑tune on regulatory data | 30% reduction in manual review time; 15% lower error rate |
| Healthcare | Radiology Image Captioning & QA | Gemini 1.5 Vision module | Improved diagnostic consistency by 22%; decreased report turnaround from 48 h to 12 h |
| Manufacturing | Predictive Maintenance via Multimodal Sensors | GPT‑4o + custom vision model | Downtime cut by 18%; maintenance costs down 10% |

Strategic Recommendations for CIOs

- Start with a Pilot. Deploy a single high‑impact use case in a sandbox environment. Measure token cost, latency, and business value before scaling.

- Create an LLM Center of Excellence (CoE). The CoE should own policy libraries, governance processes, and a shared prompt repository.

- Invest in Observability. Deploy real‑time dashboards that tie token consumption to financial metrics. This data informs cost‑optimization strategies such as batching or caching.

- Partner with Cloud Providers Strategically. Leverage managed services for sharding and compliance, but maintain a private endpoint strategy to satisfy data residency requirements.

- Plan for Model Evolution. As new variants (e.g., GPT‑4o‑Plus, Claude 3.6) emerge, schedule regular model refresh cycles aligned with your SLAs.
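For the pilot and observability recommendations above, a small amount of arithmetic already ties latency and token consumption to cost. The sketch below computes a nearest-rank p95 latency, cost per request, and a simple token-spike check; the function names, the three-standard-deviation threshold, and any sample figures are assumptions for illustration.

```python
import math
import statistics


def percentile(values, pct):
    """Nearest-rank percentile; pct is an integer from 1 to 100."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def pilot_summary(latencies_ms, tokens_per_request, price_per_1k_tokens):
    """Summarize a pilot run: tail latency plus total and per-request cost."""
    total_tokens = sum(tokens_per_request)
    total_cost = total_tokens / 1000 * price_per_1k_tokens
    return {
        "p95_latency_ms": percentile(latencies_ms, 95),
        "total_cost_usd": total_cost,
        "cost_per_request_usd": total_cost / len(tokens_per_request),
    }


def is_token_spike(history, current, threshold=3.0):
    """True if current usage exceeds mean + threshold * population std dev."""
    mean = statistics.mean(history)
    spread = statistics.pstdev(history)
    return current > mean + threshold * spread


if __name__ == "__main__":
    summary = pilot_summary(
        latencies_ms=[100, 120, 130, 140, 400],
        tokens_per_request=[1000, 2000, 3000],
        price_per_1k_tokens=0.003,  # assumed text-token price
    )
    print(summary)
    print(is_token_spike([1000, 1100, 900, 1050, 950], current=5000))
```

A fixed standard-deviation threshold is crude for traffic with daily or weekly cycles, so a production alert would more likely compare against the same hour on previous days.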

Key Takeaways

- The 2025 generative AI ecosystem is dominated by GPT‑4o, Claude 3.5, and Gemini 1.5, each offering distinct multimodal strengths and safety guarantees.

- Operational success hinges on robust architecture—edge proxies, sharding, and observability—combined with rigorous governance to mitigate security risks.

- Real‑world pilots demonstrate tangible business value across finance, healthcare, and manufacturing, proving that LLMs can move from experimentation to production at scale.

- CIOs should adopt a phased approach: pilot → CoE establishment → enterprise rollout, always aligning model choice with compliance mandates and cost objectives.

By embedding generative AI thoughtfully into their technology stack, enterprises can unlock new efficiencies while maintaining control over risk—a balancing act that defines the competitive edge in 2025.
