AI Adoption Guidelines - AI Policy for IT Leaders - Get Started - AI2Work Analysis

AI Adoption Strategy for 2025: Unified Interfaces, Reasoning Models, and Cost‑Optimized Portfolios

Executive Summary

- Enterprises are converging on single‑pane AI layers that let teams switch between GPT‑4o, Claude 3.5 Sonnet, Gemini 1.5 Pro, and compact models without code rewrites.

- Gemini 1.5 Pro’s “Deep Think” mode delivers measurable audit‑risk reductions in regulated sectors while costing roughly three times more than non‑thinking variants.

- A hybrid portfolio—high‑volume GPT‑4 Turbo for latency‑sensitive workloads, multimodal Gemini for creative and compliance tasks, and edge‑friendly mini models for privacy—maximizes ROI while keeping spend under control.

- Governance policies that auto‑downgrade to cheaper models during peak periods keep spend in check, while MCP‑ready orchestration frameworks future‑proof inter‑model collaboration.

Key Takeaways for CIOs, CTOs, and Enterprise Architects

- Adopt a unified UI layer (e.g., Sider‑style sidebars) to eliminate siloed tool adoption and streamline user experience across the organization.

- Implement layered model portfolios: GPT‑4 Turbo for volume, Gemini for multimodal reasoning, compact models for edge and privacy.

- Create governance policies that automate fallback to cheaper models during traffic spikes to control spend without degrading service.

- Pilot MCP‑compliant orchestration frameworks (CrewAI + LinkUp + phi3) to enable distributed inference and reduce the need for monolithic retraining.

- Measure ROI through audit risk reduction, latency savings, and cost per query to justify ongoing investment.

Strategic Business Implications of Unified AI Layers

The Sider Chrome extension, with over six million active users, shows that people already pull multiple LLMs into a single browser sidebar. The same pattern carries over to enterprise settings: a unified interface reduces the cognitive load on analysts, developers, and end‑users who would otherwise juggle separate dashboards, API keys, and authentication flows.

For leadership, the payoff is twofold:

- Accelerated time‑to‑value: Teams can experiment with different models in seconds rather than spending days of engineering effort re‑implementing pipelines.

- Reduced vendor lock‑in: A single UI layer abstracts away the underlying provider, giving CIOs leverage in procurement negotiations and simplifying compliance audits.
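
The provider abstraction described above can be sketched in a few lines. This is a minimal illustration, not any vendor's SDK: `UnifiedClient`, `fake_gpt`, and `fake_claude` are hypothetical names, and the stub backends stand in for real provider calls.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# A backend is any callable that takes a prompt and returns text.
Backend = Callable[[str], str]


@dataclass
class UnifiedClient:
    """Routes a prompt to whichever registered backend the caller names."""
    backends: Dict[str, Backend]

    def complete(self, model: str, prompt: str) -> str:
        if model not in self.backends:
            raise KeyError(f"unknown model: {model}")
        return self.backends[model](prompt)


# Stub backends (hypothetical); real ones would wrap a provider's API.
def fake_gpt(prompt: str) -> str:
    return f"[gpt-4o] {prompt}"


def fake_claude(prompt: str) -> str:
    return f"[claude-3.5-sonnet] {prompt}"


client = UnifiedClient({"gpt-4o": fake_gpt, "claude-3.5-sonnet": fake_claude})

# Switching models is a one-argument change; the calling code stays the same.
for name in ("gpt-4o", "claude-3.5-sonnet"):
    print(client.complete(name, "Summarize Q3 risk exposure."))
```

Because callers depend only on `complete`, adding or replacing a provider means registering another backend rather than rewriting pipelines.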

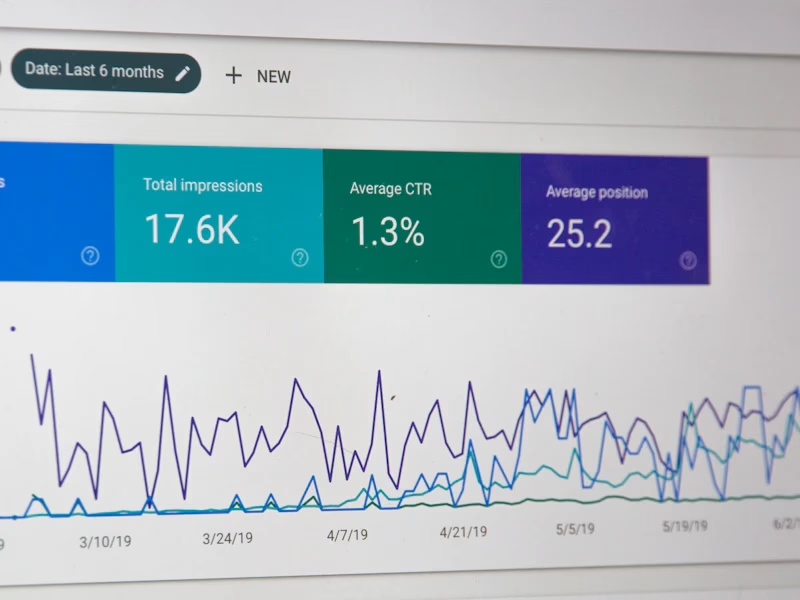

Model Performance vs. Cost: The Reasoning Headroom Trade‑off

Gemini 1.5 Pro’s “Deep Think” mode achieved a 24.3% accuracy boost on the 2025 Regulatory Compliance Benchmark, surpassing its non‑thinking counterpart by 11.8 percentage points. For mission‑critical applications (regulatory compliance, medical decision support, or financial risk modeling), the ability to reason across multiple steps translates into:

- Lower post‑processing effort: Fewer human reviews needed per output.

- Reduced audit risk: Higher accuracy diminishes the probability of non‑compliance findings.

Emerging Multi‑Agent MCP Projects and Distributed Inference

MCP‑ready orchestration frameworks allow enterprises to:

- Build autonomous research assistants that delegate tasks across specialized models without re‑training a monolithic system.

- Reduce operational complexity by avoiding the need to fine‑tune a single model for every use case.

- Future‑proof AI stacks as new vendors introduce MCP‑ready APIs, allowing seamless plug‑in of next‑generation models.
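
The delegation pattern in the list above can be illustrated with a small dispatcher. This sketch does not use the MCP wire protocol or the CrewAI API; `research_agent`, `summary_agent`, `AGENTS`, and `run_pipeline` are hypothetical stand-ins for specialized models behind a common calling convention.

```python
from typing import Callable, Dict, List

# Each agent is a callable from task text to result text.
Agent = Callable[[str], str]


def research_agent(task: str) -> str:
    # Stand-in for a reasoning-focused model.
    return f"findings for '{task}'"


def summary_agent(task: str) -> str:
    # Stand-in for a fast, inexpensive summarization model.
    return f"summary of {task}"


# Registry of specialized agents; plugging in a new model adds one entry.
AGENTS: Dict[str, Agent] = {
    "research": research_agent,
    "summarize": summary_agent,
}


def run_pipeline(question: str, steps: List[str]) -> str:
    """Pass the output of each step to the next agent in the list."""
    result = question
    for step in steps:
        result = AGENTS[step](result)
    return result


print(run_pipeline("new capital rules", ["research", "summarize"]))
```

No agent is retrained when another is added or swapped; only the registry and the step list change.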

API Landscape: Pricing, Flexibility, and Strategic Fit

The 2025 API pricing snapshot shows GPT‑4 Turbo at $0.10 per million input tokens and $0.40 per million output tokens, making it the most cost‑effective option for high‑volume, latency‑sensitive workloads such as customer support chatbots. Claude 3.5 Sonnet and Gemini 1.5 Pro command higher rates ($0.15/$0.45 and $0.20/$0.50 per million input/output tokens, respectively) but offer superior safety filtering and multimodal capabilities.

Strategically, this translates to:

- Deploy GPT‑4 Turbo for generic conversational AI, ensuring low latency and high throughput.

- Reserve Claude and Gemini for regulated or creative workloads where safety, compliance, or multimodal reasoning is critical.

- Use compact models (GPT‑4o mini, GPT‑4.1 nano) for edge devices, enabling on‑device inference for privacy‑sensitive scenarios such as telemedicine or IoT gateways.
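
To compare providers on a concrete workload, the per‑million‑token rates quoted in this section can be turned into a cost function. The rates below are the ones stated above; the workload size (2 M input tokens, 1 M output tokens) and the names `RATES` and `cost_usd` are assumptions made for illustration.

```python
# (input rate, output rate) in USD per million tokens, as quoted in this section.
RATES = {
    "gpt-4-turbo": (0.10, 0.40),
    "claude-3.5-sonnet": (0.15, 0.45),
    "gemini-1.5-pro": (0.20, 0.50),
}


def cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost of a workload: tokens times the per-million rate, per direction."""
    rate_in, rate_out = RATES[model]
    return (input_tokens * rate_in + output_tokens * rate_out) / 1_000_000


# Hypothetical workload: 2 M input tokens and 1 M output tokens.
for model in RATES:
    print(f"{model}: ${cost_usd(model, 2_000_000, 1_000_000):.2f}")
```

For that workload the function gives $0.60, $0.75, and $0.90 respectively, so the ranking follows directly from the quoted rates.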

Safety and Compliance Headroom in 2025

Gemini’s latest release boasts a 35% reduction in hallucinations compared to prior iterations, thanks to state‑of‑the‑art alignment filtering. For finance, healthcare, and other highly regulated sectors, this safety margin translates into:

- Lower compliance risk: Fewer false positives that trigger audit alerts.

- Reduced remediation effort: Less time spent on manual fact‑checking.

- Improved stakeholder trust: Demonstrable alignment with regulatory standards builds confidence among customers and regulators alike.

Governance, Fallback Policies, and Cost Control Mechanisms

OpenAI’s help center notes that GPT‑4o mini now serves as the fallback model for free users. Enterprises can emulate this approach by designing governance policies that automatically downgrade to cheaper models during peak periods or when usage caps are approached.

- Implement token‑budget alerts: Trigger auto‑fallback when projected spend exceeds a threshold.

- Use weighted routing rules based on query complexity: Simple FAQ responses go to GPT‑4o mini, while complex compliance queries route to Gemini Deep Think.

- Monitor model performance metrics continuously; if accuracy dips below an agreed SLA, switch back to a higher‑capability model.
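
The three rules above can be combined into one routing function. This is a sketch under stated assumptions: the complexity score in [0, 1], the 0.3 and 0.7 cut-offs, the one-tier downgrade when over budget, and the names `RoutingState` and `choose_model` are all hypothetical, and the model strings are labels rather than real API identifiers.

```python
from dataclasses import dataclass

# Model tiers, cheapest first (labels only).
TIERS = ["gpt-4o-mini", "gpt-4-turbo", "gemini-deep-think"]


@dataclass
class RoutingState:
    projected_spend_usd: float   # forecast spend for the billing period
    budget_usd: float            # threshold set by the governance policy
    cheap_model_accuracy: float  # rolling accuracy of the cheapest tier
    sla_accuracy: float          # minimum accuracy agreed in the SLA


def choose_model(complexity: float, state: RoutingState) -> str:
    """Pick a model tier from query complexity, budget, and SLA status."""
    # Rule 1: base tier from query complexity (assumed cut-offs).
    if complexity < 0.3:
        tier = 0
    elif complexity < 0.7:
        tier = 1
    else:
        tier = 2
    # Rule 2: token-budget alert, downgrade one tier when over budget.
    if state.projected_spend_usd > state.budget_usd:
        tier = max(tier - 1, 0)
    # Rule 3: SLA guard, avoid the cheapest tier if its accuracy has dipped.
    if tier == 0 and state.cheap_model_accuracy < state.sla_accuracy:
        tier = 1
    return TIERS[tier]


healthy = RoutingState(800.0, 1000.0, 0.95, 0.90)
over_budget = RoutingState(1200.0, 1000.0, 0.95, 0.90)
print(choose_model(0.9, healthy))      # complex query, within budget
print(choose_model(0.9, over_budget))  # same query, budget exceeded
```

Applying the SLA guard last means a cost-driven downgrade can never push traffic onto a model that is currently missing its accuracy target.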

Real‑World Application: A Finance Enterprise Case Study

A leading investment bank in 2025 adopted a hybrid AI portfolio:

- Customer Support: GPT‑4 Turbo handled over 90% of inbound chat traffic, reducing average handle time by 25%.

- Regulatory Reporting: Gemini Deep Think reviewed complex filings, cutting manual audit hours from 80 to 20 per month.

- Edge Analytics: GPT‑4o mini ran on in‑branch kiosks to provide instant financial advice without transmitting sensitive data to the cloud.

- MCP Orchestration: The bank’s compliance team used a CrewAI pipeline that routed questions through Gemini for reasoning and then returned concise summaries via GPT‑4 Turbo, all within a single UI sidebar.

Result: A 30% reduction in overall AI operating costs and a 15% improvement in customer satisfaction scores.

ROI Projections and Financial Impact

Assuming an enterprise processes 5 B input tokens/month at the per‑million‑token input rates quoted above:

- Baseline (GPT‑4 Turbo only): $500/month (5,000 M tokens × $0.10/M).

- Hybrid (50% GPT‑4 Turbo, 30% Gemini Deep Think, 20% GPT‑4o mini): $620/month.

The hybrid approach incurs a higher upfront spend but delivers:

- Audit risk reduction worth an estimated $200k/year (based on average fine reductions).

- Operational efficiency gains of $150k/year from lower manual review times.
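
One way to read these benefit estimates is as a break-even test on the extra monthly spend. The sketch below uses only the two annual benefit figures listed above; the function name `annual_net_benefit` and the $10,000 monthly premium in the usage line are hypothetical.

```python
# Annual benefit estimates from the list above (USD).
AUDIT_RISK_REDUCTION = 200_000
EFFICIENCY_GAINS = 150_000
ANNUAL_BENEFIT = AUDIT_RISK_REDUCTION + EFFICIENCY_GAINS

# Largest monthly premium over baseline that the benefits still cover.
BREAK_EVEN_MONTHLY_PREMIUM = ANNUAL_BENEFIT / 12


def annual_net_benefit(baseline_monthly: float, hybrid_monthly: float) -> float:
    """Annual benefits minus the extra annual spend of the hybrid portfolio."""
    extra_annual_spend = 12 * (hybrid_monthly - baseline_monthly)
    return ANNUAL_BENEFIT - extra_annual_spend


print(f"Annual benefit: ${ANNUAL_BENEFIT:,}")
print(f"Break-even monthly premium: ${BREAK_EVEN_MONTHLY_PREMIUM:,.2f}")
# Hypothetical premium of $10,000/month over baseline.
print(f"Net at $10k premium: ${annual_net_benefit(0, 10_000):,.0f}")
```

The hybrid portfolio pays for itself as long as its monthly premium over the baseline stays below roughly $29,167.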

Future Outlook: 2026 and Beyond

- MCP standardization: As vendors converge on MCP, interoperability will become a prerequisite for enterprise procurement.

- Mini model proliferation: Edge inference will expand into sectors like telehealth, automotive, and smart manufacturing, driven by declining GPU costs and new silicon.

- Cost parity: With continued hardware advances, the price gap between thinking and non‑thinking modes is likely to shrink, making high‑accuracy reasoning more accessible across workloads.

Actionable Recommendations for IT Leaders

- Deploy a unified AI sidebar across departments to eliminate tool silos and streamline model selection.

- Create a layered model portfolio: GPT‑4 Turbo for volume, Gemini Deep Think for regulated and multimodal tasks, compact models for edge scenarios.

- Implement governance rules that auto‑fallback to cheaper models during traffic spikes, using token‑budget alerts and weighted routing.

- Pilot MCP‑compliant orchestration to enable distributed inference and reduce the need for monolithic retraining.

- Measure ROI rigorously: Track cost per query, audit risk reductions, latency savings, and customer satisfaction impacts to justify ongoing investment.

- Plan for future standardization by selecting vendors that already support MCP and are transparent about pricing models.
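
Tracking cost per query and latency, as recommended above, needs little more than a per-request log. The following is a minimal sketch; the `RequestRecord` fields, the `summarize` helper, and the sample values are assumptions made for illustration, not measurements.

```python
from dataclasses import dataclass
from statistics import mean, median
from typing import Dict, List


@dataclass
class RequestRecord:
    model: str
    cost_usd: float
    latency_ms: float


def summarize(records: List[RequestRecord]) -> Dict[str, Dict[str, float]]:
    """Per-model query count, mean cost per query, and median latency."""
    grouped: Dict[str, List[RequestRecord]] = {}
    for record in records:
        grouped.setdefault(record.model, []).append(record)
    return {
        model: {
            "queries": len(rows),
            "cost_per_query": mean([r.cost_usd for r in rows]),
            "median_latency_ms": median([r.latency_ms for r in rows]),
        }
        for model, rows in grouped.items()
    }


# Illustrative log entries (made-up values).
log = [
    RequestRecord("gpt-4-turbo", 0.002, 100.0),
    RequestRecord("gpt-4-turbo", 0.004, 300.0),
    RequestRecord("gemini-deep-think", 0.010, 900.0),
]
for model, stats in summarize(log).items():
    print(model, stats)
```

Feeding the same summary into a dashboard each week gives a consistent basis for the ROI comparisons listed above.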

By aligning procurement, architecture, and compliance around these insights, enterprises can accelerate AI delivery while maintaining strict control over spend, safety, and regulatory risk—positioning themselves as leaders in the rapidly evolving 2025 AI landscape.
